GPT-5.5 vs Claude Opus 4.7: Benchmarks and Pricing

GPT-5.5 and Claude Opus 4.7 both launched in April 2026 with 1M context windows and agentic coding focus. One leads on math and long-context retrieval, the other on software engineering and vision.

Seven days apart. Same price for input tokens. Identical context windows. April 2026 handed developers two frontier model upgrades from the two labs that have traded the top spot all year, and neither one is a clear winner across the board. This comparison covers the real differences so you can pick the right tool for your workload - not the one with the better press release.

TL;DR

- Choose GPT-5.5 if you need the best long-context retrieval, hard math reasoning, or terminal-heavy DevOps work

- Choose Claude Opus 4.7 if you need the strongest software engineering agent, the highest visual chart reasoning, or a model that costs 17% less per output token

- Both carry a $5/1M input price; GPT-5.5 charges $30/1M output vs Opus 4.7's $25/1M - and Opus 4.7 produces far more tokens per task due to a different generation style

Quick Overview

| Feature | GPT-5.5 | Claude Opus 4.7 |
|---|---|---|
| Provider | OpenAI | Anthropic |
| Released | April 23, 2026 | April 16, 2026 |
| Input pricing | $5 / 1M tokens | $5 / 1M tokens |
| Output pricing | $30 / 1M tokens | $25 / 1M tokens |
| Context window | 1M tokens | 1M tokens |
| Max output | Not specified | 128K tokens |
| Knowledge cutoff | December 2025 | Early 2026 |
| Multimodal | Text, images, audio, video | Text, images |
| Best for | Math, long context, terminal work | Software engineering, vision charts |

Both models are now available in production APIs. Claude Opus 4.7 is accessible through the Anthropic API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. GPT-5.5 shipped to Plus, Pro, Business, and Enterprise users in ChatGPT, with API access following on April 24.

GPT-5.5: The Efficient Generalist

OpenAI codenamed this one "Spud," and it's the first fully retrained base model since GPT-4.5. Every model between GPT-4.5 and GPT-5.5 - including GPT-5, 5.1, 5.2, 5.3, and 5.4 - built on the same architectural foundation. GPT-5.5 doesn't.

The headline claim is efficiency. On equivalent coding tasks, GPT-5.5 uses 72% fewer output tokens than Opus 4.7. That sounds like it cuts output quality, but the benchmark numbers don't support that interpretation. What it actually means is that GPT-5.5 reasons more compactly: it reaches a conclusion with fewer generation steps. OpenAI says it uses 40% fewer tokens than GPT-5.4 while performing at a higher level.

Multimodal Architecture

GPT-5.5 is natively omnimodal in a way no prior GPT model was. Text, images, audio, and video share a single unified architecture - no separate processing pipelines. This matters for applications that mix data types, since cross-modal reasoning happens within the same attention heads rather than being spliced together after the fact.

The long-context retrieval improvement is the most striking upgrade. On OpenAI's MRCR v2 benchmark at 512K-1M token contexts, GPT-5.5 reaches 74.0% - up from 36.6% for GPT-5.4, slightly more than double in a single generation. Opus 4.7 scores 32.2% on the same range. For workloads that require reasoning over entire codebases, legal document sets, or multi-session conversation histories, this gap is real and large.

Speed Profile

GPT-5.5 matches GPT-5.4's per-token latency in real-world serving, which is notable given the intelligence jump. There's a trade-off at the start of a response: time-to-first-token sits around 3 seconds, versus Opus 4.7's roughly 0.5 seconds. For interactive chat, you notice that. For autonomous agents running unattended, you don't.

GPT-5.5 Pro is also available at $30/1M input and $180/1M output for workloads that need even higher throughput and priority routing. There's also a Codex Fast mode at 1.5x tokens-per-second for 2.5x the cost.

Claude Opus 4.7: The Software Engineer

Anthropic built Opus 4.7 squarely for long-horizon agentic coding. The two headline benchmark improvements make that focus concrete: SWE-bench Verified jumped from 80.8% to 87.6%, and SWE-bench Pro went from 53.4% to 64.3%. These are the benchmarks that reflect real pull-request-level software engineering, not toy coding problems.

What Changed from Opus 4.6

The biggest new mechanism is self-verification. Opus 4.7 can devise its own tests and checks before reporting back. That's not just a safety feature - it's an agent architecture feature. A model that checks its own outputs before finishing a task can catch mistakes without relying on external scaffolding.

Three other changes matter for production use. First, the tokenizer update: the same text now maps to 1.0-1.35x more tokens, which means prompts tuned for Opus 4.6 may need re-evaluation. Second, the new xhigh effort level sits between high and max, giving you a finer dial for reasoning depth without jumping to full max-effort cost. Third, file-system memory has improved, so multi-session tasks persist state more reliably.
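To make the tokenizer change concrete, here is a minimal sketch that turns a token count measured under Opus 4.6 into a best-case and worst-case estimate for Opus 4.7, using only the 1.0-1.35x range quoted above. The function names are hypothetical, and counting reserved output against the 1M context window is an assumption rather than documented behavior.

```python
# Rough budgeting sketch for the Opus 4.7 tokenizer change.
# Only the 1.0-1.35x multiplier range and the 1M window come from the article;
# everything else (names, the overflow rule) is an illustrative assumption.

CONTEXT_WINDOW = 1_000_000        # tokens, from the overview table
OVERHEAD_PERCENT = (100, 135)     # 1.0x to 1.35x, expressed as integer percentages


def estimate_opus_47_tokens(opus_46_tokens: int) -> tuple[int, int]:
    """Return (best_case, worst_case) token counts for the same text."""
    low, high = OVERHEAD_PERCENT
    # Integer ceiling division avoids floating-point rounding surprises.
    best = (opus_46_tokens * low + 99) // 100
    worst = (opus_46_tokens * high + 99) // 100
    return best, worst


def may_overflow(opus_46_tokens: int, reserved_output: int = 0) -> bool:
    """True if the worst-case prompt plus reserved output exceeds the window.

    Assumption: output tokens share the same 1M budget as the prompt.
    """
    _, worst = estimate_opus_47_tokens(opus_46_tokens)
    return worst + reserved_output > CONTEXT_WINDOW
```

A prompt that measured 800,000 tokens under Opus 4.6 could land anywhere between 800,000 and 1,080,000 tokens, so it is worth re-measuring before assuming it still fits.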

Vision Upgrade

Opus 4.7 accepts images up to 2,576 pixels on the long edge - roughly 3.75 megapixels, more than three times the resolution of prior Claude models. The practical impact shows in benchmarks: CharXiv-R (visual reasoning over scientific charts) jumped 13.6 points to 91.0% with tools. If your pipeline reads financial charts, technical diagrams, or chemical structures, this is a material difference.
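If you pre-process images yourself, the long-edge limit translates into a simple resize rule. The sketch below only computes target dimensions; the helper name is hypothetical, and whether the API rejects or downsizes larger images on its own is not something this article confirms.

```python
# Compute dimensions that fit the 2,576-pixel long-edge limit quoted above,
# preserving aspect ratio. Pure arithmetic, no imaging library required.

MAX_LONG_EDGE = 2576  # pixels, from the article


def fit_long_edge(width: int, height: int, max_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Scale (width, height) down so the longer side is at most max_edge."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_edge:
        return width, height  # already within the limit, leave untouched

    def scale(value: int) -> int:
        # Rounded integer scaling; never collapse a side to zero pixels.
        return max(1, (value * max_edge + long_edge // 2) // long_edge)

    return scale(width), scale(height)
```

For example, a 5152 x 2912 screenshot scales to 2576 x 1456, which is about 3.75 megapixels.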

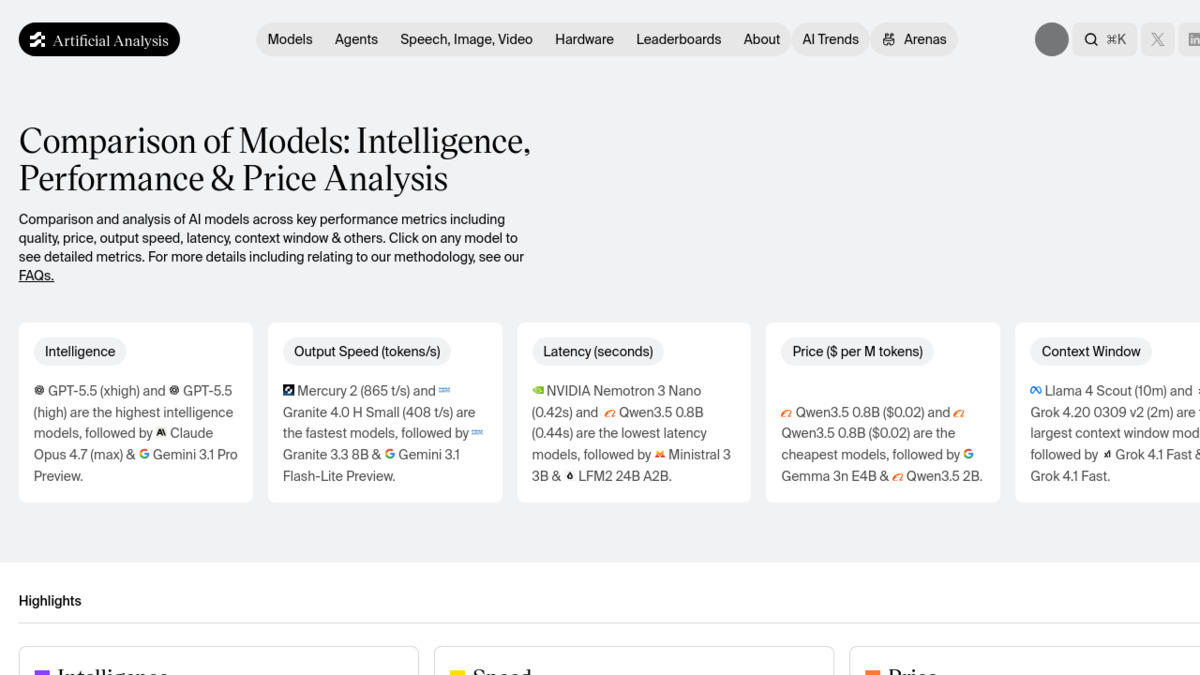

Benchmark tracking sites now show GPT-5.5 and Opus 4.7 trading the top spots across different categories.

Source: artificialanalysis.ai

Benchmark Head-to-Head

Our coding benchmarks leaderboard tracks these numbers live, but here's the April 2026 snapshot:

| Benchmark | GPT-5.5 | Claude Opus 4.7 |
|---|---|---|
| GPQA Diamond (graduate reasoning) | 93.6% | 94.2% |
| HLE with tools (frontier research) | 64.7% | 54.7% |
| ARC-AGI-2 (novel reasoning) | 85.0% | 75.8% |
| SWE-bench Verified (software engineering) | ~82.6% | 87.6% |
| SWE-bench Pro (hard engineering) | 58.6% | 64.3% |
| Terminal-Bench 2.0 (DevOps / CLI) | 82.7% | 69.4% |
| MRCR v2 at 512K-1M (long context) | 74.0% | 32.2% |
| FrontierMath Tier 4 (advanced math) | 35.4% | 22.9% |
| CharXiv-R with tools (visual charts) | - | 91.0% |
| MCP-Atlas (tool orchestration) | 75.3% | 79.1% |
| MMLU-Pro (knowledge) | - | 89.87% |

GPT-5.5 doubles Opus 4.7's score on long-context retrieval above 512K tokens. Opus 4.7 leads by 5.7 points on the hardest software engineering benchmark.

The pattern is clear: GPT-5.5 wins on math, novel reasoning, long-context retrieval, and terminal work. Opus 4.7 wins on software engineering, tool orchestration, and visual reasoning. On graduate-level reasoning (GPQA Diamond), they're nearly tied.
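To see how large each lead is, the snippet below ranks the benchmarks by point gap. It is a small sketch using only the rows from the table above where both models have a reported score; the ~82.6% SWE-bench Verified figure is treated as 82.6, and the function name is made up for illustration.

```python
# Rank the head-to-head benchmarks by the size of the gap.
# Scores are (GPT-5.5, Claude Opus 4.7) in percent, copied from the table.
# Rows with a missing score (CharXiv-R, MMLU-Pro) are left out.

SCORES = {
    "GPQA Diamond": (93.6, 94.2),
    "HLE with tools": (64.7, 54.7),
    "ARC-AGI-2": (85.0, 75.8),
    "SWE-bench Verified": (82.6, 87.6),   # GPT-5.5 figure is approximate
    "SWE-bench Pro": (58.6, 64.3),
    "Terminal-Bench 2.0": (82.7, 69.4),
    "MRCR v2 at 512K-1M": (74.0, 32.2),
    "FrontierMath Tier 4": (35.4, 22.9),
    "MCP-Atlas": (75.3, 79.1),
}


def rank_gaps(scores: dict[str, tuple[float, float]]) -> list[tuple[str, str, float]]:
    """Return (benchmark, leader, gap_in_points), largest gap first."""
    rows = []
    for name, (gpt, opus) in scores.items():
        gap = round(gpt - opus, 1)
        if gap > 0:
            leader = "GPT-5.5"
        elif gap < 0:
            leader = "Claude Opus 4.7"
        else:
            leader = "tie"
        rows.append((name, leader, abs(gap)))
    return sorted(rows, key=lambda row: row[2], reverse=True)
```

Sorted this way, the long-context gap (41.8 points) sits far above everything else, while GPQA Diamond (0.6 points) lands at the bottom.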

One caveat on the SWE-bench numbers: Anthropic flagged memorization concerns on a subset of problems, and the 87.6% figure uses post-exclusion data with Anthropic's own scaffolding. The 82.6% GPT-5.5 number comes from OpenAI's evaluation setup. These aren't directly apples-to-apples, and our SWE-bench leaderboard tracks methodology differences.

Pricing Breakdown

Input tokens are priced identically. The difference is on output, and it's compounded by generation style.

| Pricing Tier | GPT-5.5 | Claude Opus 4.7 |
|---|---|---|
| Input (<200K) | $5.00 / 1M | $5.00 / 1M |
| Output (<200K) | $30.00 / 1M | $25.00 / 1M |
| Input (>200K) | $10.00 / 1M | $10.00 / 1M |
| Output (>200K) | $45.00 / 1M | $37.50 / 1M |
| Batch / Flex | 50% discount | 50% discount |
| Priority | 2.5x | 2.5x |
| Pro tier | $30 input / $180 output | N/A |
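The table above can be turned into a rough per-request estimator. This is a sketch under stated assumptions, not either provider's billing logic: it assumes the tier is chosen by the prompt's input token count, that the higher tier applies to the whole request once the prompt exceeds 200K tokens, and that batch and priority are flat multipliers on the total. The class and function names are hypothetical.

```python
from dataclasses import dataclass

# Per-request cost estimate from the pricing table (USD per 1M tokens).
# ASSUMPTIONS: tier selected by input size; ">200K" means strictly more than
# 200,000 input tokens; batch/priority multiply the whole request cost.

LONG_CONTEXT_THRESHOLD = 200_000
MODE_MULTIPLIER = {"standard": 1.0, "batch": 0.5, "priority": 2.5}


@dataclass(frozen=True)
class Rates:
    input_short: float
    output_short: float
    input_long: float
    output_long: float


GPT_55 = Rates(5.00, 30.00, 10.00, 45.00)
OPUS_47 = Rates(5.00, 25.00, 10.00, 37.50)


def request_cost(rates: Rates, input_tokens: int, output_tokens: int,
                 mode: str = "standard") -> float:
    """Estimated cost in USD for one request."""
    is_long = input_tokens > LONG_CONTEXT_THRESHOLD
    in_rate = rates.input_long if is_long else rates.input_short
    out_rate = rates.output_long if is_long else rates.output_short
    base = (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    return base * MODE_MULTIPLIER[mode]
```

With a 100,000-token prompt and 10,000 output tokens, this gives about $0.80 for GPT-5.5 and $0.75 for Opus 4.7 at standard rates, before accounting for any difference in how many tokens each model actually generates.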

At face value, Opus 4.7 saves 17% on output tokens. But the real math depends on generation volume. GPT-5.5 produces 72% fewer output tokens on equivalent tasks. If a task that takes 10,000 output tokens with Opus 4.7 takes roughly 2,800 with GPT-5.5, the per-task output cost works out to:

- Opus 4.7: 10,000 × $0.000025 = $0.25

- GPT-5.5: 2,800 × $0.000030 = $0.084

That's a roughly 3x output-cost advantage for GPT-5.5 on coding tasks where its token efficiency holds. For non-coding workloads where both models produce similar output lengths, Opus 4.7's lower rate wins.

The 72% efficiency figure is OpenAI's own claim on coding tasks, so treat it as a best-case scenario for the comparison. Real-world efficiency savings vary by task type.
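Because the 72% figure is a best case, it helps to know where the break-even sits. The sketch below reproduces the output-cost arithmetic above and computes the token reduction GPT-5.5 needs before its higher rate stops mattering. It covers output tokens only (input is priced identically), and the function names are illustrative.

```python
# Output-only cost comparison using the standard rates from the pricing table.

GPT_55_OUTPUT_RATE = 30.00   # USD per 1M output tokens
OPUS_47_OUTPUT_RATE = 25.00  # USD per 1M output tokens


def output_cost(tokens: float, rate_per_million: float) -> float:
    """Cost in USD for a given number of output tokens."""
    return tokens * rate_per_million / 1_000_000


def break_even_reduction() -> float:
    """Fraction of output tokens GPT-5.5 must save to match Opus 4.7's cost."""
    return 1 - OPUS_47_OUTPUT_RATE / GPT_55_OUTPUT_RATE


def cheaper_model(opus_tokens: int, token_reduction: float) -> str:
    """Which model costs less on output, given GPT-5.5's token reduction (0-1)."""
    gpt_tokens = opus_tokens * (1 - token_reduction)
    gpt_cost = output_cost(gpt_tokens, GPT_55_OUTPUT_RATE)
    opus_cost = output_cost(opus_tokens, OPUS_47_OUTPUT_RATE)
    return "GPT-5.5" if gpt_cost < opus_cost else "Claude Opus 4.7"
```

The break-even works out to roughly a 16.7% reduction: if GPT-5.5 saves more output tokens than that on your workload, it is cheaper per task on output; if it saves less, Opus 4.7 is.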

Strengths and Weaknesses

GPT-5.5: Strengths

- Best long-context retrieval above 512K tokens, by a wide margin

- Native audio and video input with text and images

- 72% fewer output tokens on coding workloads than Opus 4.7

- Strongest math reasoning benchmark scores (FrontierMath, HLE)

- Per-token latency matches GPT-5.4 despite higher intelligence

GPT-5.5: Weaknesses

- 3-second time-to-first-token hurts interactive applications

- SWE-bench Pro trails Opus 4.7 by 5.7 points on hard engineering tasks

- Output is 20% more expensive per token than Opus 4.7 ($30 vs $25 per 1M)

- No equivalent to Opus 4.7's self-verification mechanism

Claude Opus 4.7: Strengths

- Leads both SWE-bench Verified and SWE-bench Pro

- Self-verification mechanism reduces scaffold overhead for long agents

- 3.75MP vision resolution - more than three times the previous Claude maximum

- 17% cheaper output tokens, with competitive per-task cost on non-coding work

- MCP-Atlas lead shows better tool orchestration in multi-step workflows

- Sub-second time-to-first-token for interactive use

Claude Opus 4.7: Weaknesses

- Long-context retrieval at 512K-1M tokens is weak compared to GPT-5.5

- Produces more output tokens per task, increasing costs on verbose generation

- Updated tokenizer adds 0-35% token overhead vs Opus 4.6 on the same prompts

- No native audio or video input

Verdict

For software engineering agents - anything where the model writes, reviews, or fixes code across a real codebase - Opus 4.7 is the stronger choice. The SWE-bench Pro gap of 5.7 points and the MCP-Atlas lead aren't marginal. Cursor, Claude Code, and similar tools build on Opus for a reason.

For long-context document work - processing contracts, codebases, or research corpora that run past 500K tokens - GPT-5.5 isn't just better, it's in a different category. The 74% vs 32% MRCR v2 retrieval score at 512K-1M tokens isn't a benchmark footnote; it's a qualitative difference in whether the model can actually reason over that much text.

For hard mathematics and frontier research, GPT-5.5 pulls ahead on FrontierMath Tier 4 (35.4% vs 22.9%) and HLE with tools (64.7% vs 54.7%).

For visual document analysis - financial reports, scientific charts, technical diagrams - Opus 4.7's 91% CharXiv score and 3.75MP resolution make it the clear pick.

The prior generation comparison, GPT-5.4 vs Claude Opus 4.6, showed a similar pattern of complementary strengths. That hasn't changed. What has changed is that both models got substantially better at their respective specialties - the gaps widened rather than converging.

If you're running a mixed workload and can only pick one, the deciding factor is token volume. GPT-5.5's efficiency advantage turns a 20% higher output rate into a lower per-task cost on long coding runs. Opus 4.7 wins on straight per-token rate for everything else.
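If you can route by workload instead of picking one model, the verdict reduces to a small lookup. This is only a sketch of that idea: the workload labels are invented for illustration, and the values are display names from this article, not API model identifiers.

```python
# Workload-to-model routing that mirrors the verdict above.
# Keys are hypothetical labels; values are display names, not API model IDs.

ROUTES = {
    "software_engineering": "Claude Opus 4.7",
    "visual_analysis": "Claude Opus 4.7",
    "long_context": "GPT-5.5",
    "math_research": "GPT-5.5",
    "terminal_devops": "GPT-5.5",
}


def pick_model(workload: str) -> str:
    """Return the model this article recommends for a workload label."""
    try:
        return ROUTES[workload]
    except KeyError:
        known = ", ".join(sorted(ROUTES))
        raise ValueError(f"unknown workload {workload!r}; expected one of: {known}") from None
```

Anything that does not fit one of these labels falls back to the token-volume question above, which is why the function raises rather than guessing a default.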

Sources:

- Introducing Claude Opus 4.7 - Anthropic

- GPT-5.5 Benchmarks and Pricing - llm-stats.com

- Claude Opus 4.7 launch analysis - llm-stats.com

- GPT-5.5 vs Claude Opus 4.7 - digitalapplied.com

- GPT-5.5 vs Claude Opus 4.7 Benchmarks and Pricing - lushbinary.com

- Claude Opus 4.7 vs GPT-5.5: Which Model Should You Build On? - MindStudio

- OpenAI releases GPT-5.5 - TechCrunch

✓ Last verified May 2, 2026