Best AI Test Generation Tools 2026 - 5 Compared

A hands-on comparison of the top AI unit test generation tools in 2026, covering Qodo Gen, GitHub Copilot, Diffblue Cover, Keploy, and Tusk.

Writing unit tests is the part of software development most engineers love to complain about and least love to do. AI test generation tools promise to fix this by producing tests automatically - but the gap between "generates tests" and "generates useful tests" is enormous. Coverage numbers inflated with trivial assertions aren't worth the CI compute they burn.

TL;DR

- Qodo Gen is the best dedicated tool for test quality - it targets edge cases, boundary conditions, and error paths rather than just inflating coverage numbers

- Keploy is the best free/open-source pick for API and integration tests, using real traffic capture instead of synthetic generation

- Diffblue Cover leads autonomous Java unit testing, reporting 50-69% coverage and a 100% compile rate in its own benchmark; GitHub Copilot managed only 5-29% in the same study

How We Picked These

Test quality was the primary criterion - not coverage percentage, but whether the generated tests would actually catch bugs. Coverage numbers inflated with trivial assertions (checking that 2 + 2 == 4 somewhere in the call stack) are worse than useless because they give false confidence while consuming CI compute. We specifically looked at whether each tool generates tests for edge cases, boundary values, error paths, and negative inputs - the cases that actually break production code.
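To make the distinction concrete, here is a minimal sketch contrasting a coverage-only test with an edge-case test. The function `parse_percentage` and both tests are hypothetical illustrations written for this article, not output from any of the tools compared.

```python
def parse_percentage(text: str) -> float:
    """Convert a string such as '42%' into a fraction between 0 and 1."""
    value = float(text.strip().rstrip("%"))
    if not 0 <= value <= 100:
        raise ValueError(f"percentage out of range: {text!r}")
    return value / 100


def test_trivial():
    # Executes the function, so its lines count as covered,
    # but the assertion would pass for almost any implementation.
    result = parse_percentage("50%")
    assert result is not None


def test_edge_cases():
    # Boundary values at both ends of the valid range.
    assert parse_percentage("0%") == 0.0
    assert parse_percentage("100%") == 1.0
    # Surrounding whitespace is tolerated.
    assert parse_percentage(" 12.5% ") == 0.125
    # Error paths: out-of-range, non-numeric, and empty input must raise.
    for bad in ("101%", "-1%", "abc", ""):
        try:
            parse_percentage(bad)
        except ValueError:
            continue
        raise AssertionError(f"expected ValueError for {bad!r}")
```

Both tests produce similar line coverage for `parse_percentage`, but only the second would fail if the range check were removed or the boundary comparison were off by one.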

We ran each tool against a set of real functions with known edge cases to verify that the generated tests would fail before the fix and pass after. Published benchmark comparisons (including Diffblue's 2025 Java study and Martian's Code Review Bench) were used where they existed, but we traced methodology details before citing any numbers - several competitive benchmarks in this space were run by tool vendors against their own products, which produces predictable results.
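The fail-before, pass-after check can be sketched roughly as follows. This is an illustration of the idea rather than the harness used for this comparison; `clamp_buggy`, `clamp_fixed`, and both tests are hypothetical stand-ins for a real function and tool-generated tests.

```python
from typing import Callable

Clamp = Callable[[int, int, int], int]


def clamp_buggy(value: int, low: int, high: int) -> int:
    # Known bug: the upper bound is never enforced.
    return max(value, low)


def clamp_fixed(value: int, low: int, high: int) -> int:
    return min(max(value, low), high)


def generated_test(clamp: Clamp) -> None:
    # Stand-in for a generated test that probes both bounds.
    assert clamp(5, 0, 10) == 5
    assert clamp(-3, 0, 10) == 0
    assert clamp(11, 0, 10) == 10


def happy_path_only(clamp: Clamp) -> None:
    # Stand-in for a generated test that never leaves the valid range.
    assert clamp(5, 0, 10) == 5


def passes(test: Callable[[Clamp], None], impl: Clamp) -> bool:
    try:
        test(impl)
    except AssertionError:
        return False
    return True


def catches_bug(test: Callable[[Clamp], None], buggy: Clamp, fixed: Clamp) -> bool:
    """True only if the test fails on the buggy version and passes on the fixed one."""
    return (not passes(test, buggy)) and passes(test, fixed)
```

Under this check, `generated_test` counts as useful because it fails against `clamp_buggy`, while `happy_path_only` passes on both versions and therefore catches nothing.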

Autonomous capability - whether the tool can generate, run, and iterate on failing tests without a developer in the loop - was noted explicitly because it determines whether the tool fits into a CI pipeline or requires interactive use. Language coverage gaps were noted per tool rather than treating "supports Python" as equivalent to "produces quality Python tests."

General AI coding assistants, where test generation is one of fifty features, were compared directly against dedicated test tools rather than being scored separately. Pricing reflects each tool's structure as of April 2026 - several credit systems and token quotas changed during our research window.

We ran each of these tools against real codebases and dug through the available benchmarks. None of them are magic. All of them are useful in specific contexts. The question is which one fits your stack.

The Tools Compared

Five tools made this list because they take meaningfully different approaches to test generation. One is language-agnostic and IDE-integrated. One captures real production traffic. One uses reinforcement learning tuned specifically for Java bytecode. Two are general-purpose coding assistants with test generation bolted on.

| Tool | Best For | Free Tier | Paid Starts At | Autonomous? |
|---|---|---|---|---|
| Qodo Gen | Multi-language, edge-case quality | 30 PRs + 75 credits/mo | $30/user/mo | Partial |
| GitHub Copilot | Teams already using Copilot | 50 premium req/mo | $10/mo | No |
| Diffblue Cover | Java enterprise, CI pipelines | Community (limited) | ~$30/mo | Yes |
| Keploy | API and integration tests | Open source / Free forever | $19/user/mo | Yes (from traffic) |
| Tusk | Live-traffic regression tests | 14-day trial | Contact sales | Yes |

Qodo Gen - Best for Test Quality

Formerly CodiumAI, Qodo rebranded in 2024 and shipped Qodo 2.0 in February 2026 with a multi-agent review architecture and an expanded context engine that reads pull request history alongside the codebase. The test generation piece is called Qodo Gen, and it's what distinguishes the product from everything else in this category.

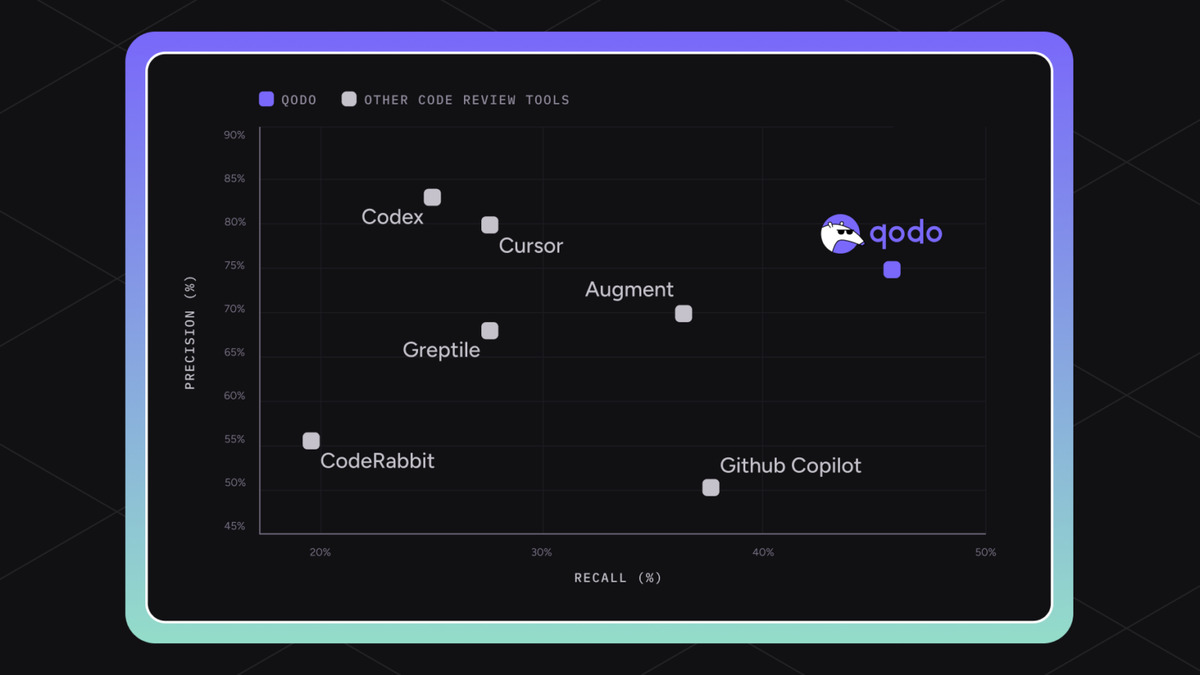

Most tools look at your function signature and create happy-path tests. Qodo Gen analyzes actual function behavior - it considers what the code does with empty strings, Unicode edge cases, null inputs, boundary values near integer overflow, and error paths. A benchmark by Martian placed Qodo at 64.3% on their Code Review Bench. In a separate test generation evaluation, GitHub Copilot reached a 28.7% correctness rate. The two figures come from different benchmarks, so treat the gap as indicative rather than a like-for-like comparison.

The IDE plugin runs in VS Code and JetBrains. The free Developer plan gives you 30 PR reviews and 75 credits per month - enough to assess the tool, not enough for daily use on a real project. Teams pricing is $30 per user per month annually ($38 month-to-month), which includes 2,500 credits and unlimited PRs.

One practical note: Qodo uses a credit system where standard requests cost 1 credit but premium model requests (Claude Opus, Grok 4) cost 4-5 credits each. If you're running the Enterprise Context Engine heavily, credits evaporate faster than the base plan suggests.
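The arithmetic behind that warning is simple enough to sketch. The figures below are the ones quoted above (2,500 credits, 1 credit per standard request, 4-5 per premium request); the function name is made up for illustration, and the assumption that a month's usage is all one request type is a simplification.

```python
MONTHLY_CREDITS = 2_500  # Teams plan allowance quoted above


def requests_affordable(credits: int, cost_per_request: int) -> int:
    """How many requests fit in the allowance at a given credit cost."""
    return credits // cost_per_request


standard = requests_affordable(MONTHLY_CREDITS, 1)      # 2500 requests
premium_low = requests_affordable(MONTHLY_CREDITS, 4)   # 625 requests
premium_high = requests_affordable(MONTHLY_CREDITS, 5)  # 500 requests
```

In other words, a workflow that leans entirely on premium models gets roughly a fifth to a quarter of the request volume the headline credit number implies.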

Qodo's February 2026 benchmark showing code review and test accuracy metrics across competing tools.

Source: qodo.ai

The main limitation is language coverage. Qodo Gen works well across Python, TypeScript, Java, and Go, but teams running less common languages will hit gaps. It's also interactive - a developer needs to stay in the loop to approve and refine generated tests, which means it doesn't slot cleanly into fully automated CI workflows.

Verdict: Best choice if test correctness matters more than coverage numbers. The free tier is worth testing. The Teams plan is competitive at $30.

GitHub Copilot - Best if You're Already Paying

GitHub Copilot has expanded well beyond autocomplete. In 2026 it includes an @Test agent that produces tests, builds them, measures coverage deltas, runs them, and iterates on failures - all without you babysitting each step. Microsoft's .NET Blog announced general availability of Copilot testing for .NET in Visual Studio 2026 v18.3.

The pricing structure matters here. Free tier includes 2,000 completions per month but only 50 premium requests - and test generation consumes premium requests. Pro ($10/month) bumps this to 300 premium requests. Business ($19/user/month) and Enterprise ($39/user/month) have no stated limits beyond fair use.

The benchmark numbers are sobering, though. Diffblue's 2025 study tested Copilot with GPT-5 against three complex Java applications and found coverage rates of 5-29%, versus Diffblue's 50-69%. Copilot's test compilation success rate reached 88% with GPT-5, which is a real improvement over previous generations - but it still needs a developer in the loop to catch the 12% that don't compile. A separate comparison showed Claude Code generating 147 tests with 89% branch coverage versus Copilot's 92 tests at 71%.

That said: if your team already pays for Copilot Business, you're getting test generation for free. The ROI math changes completely when the marginal cost is zero.

Copilot also handles the full range of languages without the specialization gaps you'll hit with dedicated tools. It's generalist by design. For teams that need one tool to cover TypeScript frontends, Python backends, and Go services, that breadth is valuable.

Verdict: The right pick for teams already on Copilot Business or Enterprise. Don't pay for it just to generate tests.

Diffblue Cover - Best for Java Enterprises

Diffblue Cover takes a fundamentally different approach: it's an autonomous agent that generates JUnit tests by analyzing Java bytecode directly, using reinforcement learning to find the inputs that exercise each testable code path. No prompting. No developer steering. It runs, writes tests, and commits them to your repo.

The benchmark numbers are the best in this comparison for Java specifically. Diffblue's own study - yes, self-reported, so apply appropriate skepticism - showed 50-69% coverage across three complex Java apps versus GitHub Copilot's 5-29%. More critically, Diffblue claims 100% test compilation and pass rate because the tool executes tests during generation and discards anything that doesn't run. That's the structural advantage of a bytecode-level approach over an LLM producing text that might or might not compile.
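The execute-and-discard step is why a 100% pass rate is achievable by construction. The sketch below illustrates only that filtering idea in Python; it is not Diffblue's implementation, which works on Java bytecode with reinforcement learning, and all candidate functions here are hypothetical.

```python
from typing import Callable, Iterable, List

TestCase = Callable[[], None]


def keep_passing(candidates: Iterable[TestCase]) -> List[TestCase]:
    """Run every candidate test and keep only those that complete without error."""
    kept: List[TestCase] = []
    for candidate in candidates:
        try:
            candidate()
        except Exception:
            continue  # discard anything that fails or cannot run
        kept.append(candidate)
    return kept


def candidate_ok() -> None:
    assert sorted([3, 1, 2]) == [1, 2, 3]


def candidate_wrong_expectation() -> None:
    # The generator guessed the wrong expected value.
    assert sorted([3, 1, 2]) == [3, 2, 1]


def candidate_broken() -> None:
    # Refers to a helper that does not exist, so it raises NameError when run.
    undefined_helper()


surviving = keep_passing([candidate_ok, candidate_wrong_expectation, candidate_broken])
```

Only `candidate_ok` survives. Note what the filter does not guarantee: a surviving test confirms current behavior, so if the code under test is already wrong, the test will lock that behavior in.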

Diffblue's comparison of their tool against GitHub Copilot with GPT-5 on Java test generation coverage.

Source: diffblue.com

The tool integrates with IntelliJ (single-click test generation), Maven, Gradle, Jenkins, GitHub Actions, GitLab, Azure Pipelines, and AWS CodeBuild. The Community Edition is free for students and open-source projects. The Developer plan starts around $30/month for 100 produced tests, with additional tests at $15 per 50. Teams and Enterprise tiers require contacting sales.

The hard constraint: this is Java only. If your stack is anything else, Diffblue isn't an option. And the autonomous model means you lose fine-grained control over what gets tested - useful for backfilling coverage on existing code, less useful for TDD workflows where you want tests to drive design.

Verdict: The strongest autonomous option for Java teams that need to ship coverage fast without developer involvement per test.

Keploy - Best Open-Source Option

Keploy creates tests from real application traffic rather than from code analysis. You run your application, Keploy intercepts API calls using eBPF tracing, and it converts those interactions into deterministic test cases with automatically produced mocks and stubs. The tests reflect what your application actually does in staging or production, not what you assume it does.
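The record-and-replay idea can be sketched in a few lines. This is a simplified illustration under heavy assumptions, not Keploy's code: Keploy captures traffic at the network level with eBPF, whereas this sketch wraps a hypothetical handler and its single downstream dependency in plain Python.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Dependency = Callable[[str], str]
Handler = Callable[[str, Dependency], str]


@dataclass
class Recorded:
    request: str
    dependency_reply: str
    response: str


def handle(request: str, fetch_user: Dependency) -> str:
    """Hypothetical service handler that calls one downstream dependency."""
    return f"hello, {fetch_user(request)}"


def record(requests: List[str], real_dependency: Dependency) -> List[Recorded]:
    """Run real requests and capture the dependency reply and final response."""
    cases: List[Recorded] = []
    for req in requests:
        seen: Dict[str, str] = {}

        def spy(arg: str) -> str:
            seen["reply"] = real_dependency(arg)
            return seen["reply"]

        response = handle(req, spy)
        cases.append(Recorded(req, seen["reply"], response))
    return cases


def replay(cases: List[Recorded], handler: Handler) -> List[Recorded]:
    """Re-run each case with the dependency stubbed; return cases whose response changed."""
    failures: List[Recorded] = []
    for case in cases:
        stub = lambda _arg, reply=case.dependency_reply: reply
        if handler(case.request, stub) != case.response:
            failures.append(case)
    return failures
```

Recording against the current build and replaying against a later one turns every captured interaction into a regression test: an empty list from `replay` means the responses are unchanged, and the stubbed dependency keeps the run deterministic.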

This approach has a specific advantage for API-heavy and microservice architectures: the tests catch real regressions because they're built from real behavior. Keploy also handles contract testing and automatically filters out inconsistent or unnecessary test cases using AI.

The open-source tier is free and self-hosted, supporting Node.js, Go, Java, and Python. The cloud Playground tier is free forever with limits of 30 test suites and 100 test runs per month - enough for a single service on a small team. The Pro tier costs $19 per user per month plus usage overages ($0.16 per test generation, $0.22 per test execution), which can add up quickly on high-traffic services.
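To see how quickly the overages dominate, here is a rough cost estimate using the rates quoted above. The function name and the example volumes are hypothetical, and the sketch assumes every generation and execution passed in is billable; check Keploy's pricing page for any included allowance.

```python
SEAT_CENTS = 1_900      # $19 per user per month
GENERATION_CENTS = 16   # $0.16 per test generation
EXECUTION_CENTS = 22    # $0.22 per test execution


def keploy_pro_monthly_cents(users: int, generations: int, executions: int) -> int:
    """Estimated monthly bill in cents; integers avoid floating-point rounding."""
    seats = users * SEAT_CENTS
    usage = generations * GENERATION_CENTS + executions * EXECUTION_CENTS
    return seats + usage


# Example: 5 users, 1,000 billable generations, 5,000 billable executions.
total_cents = keploy_pro_monthly_cents(5, 1_000, 5_000)
print(f"${total_cents / 100:.2f}")  # prints $1355.00
```

In this example the seats account for $95 of the bill and usage for $1,260, so the per-user price is a poor predictor of cost for a busy service.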

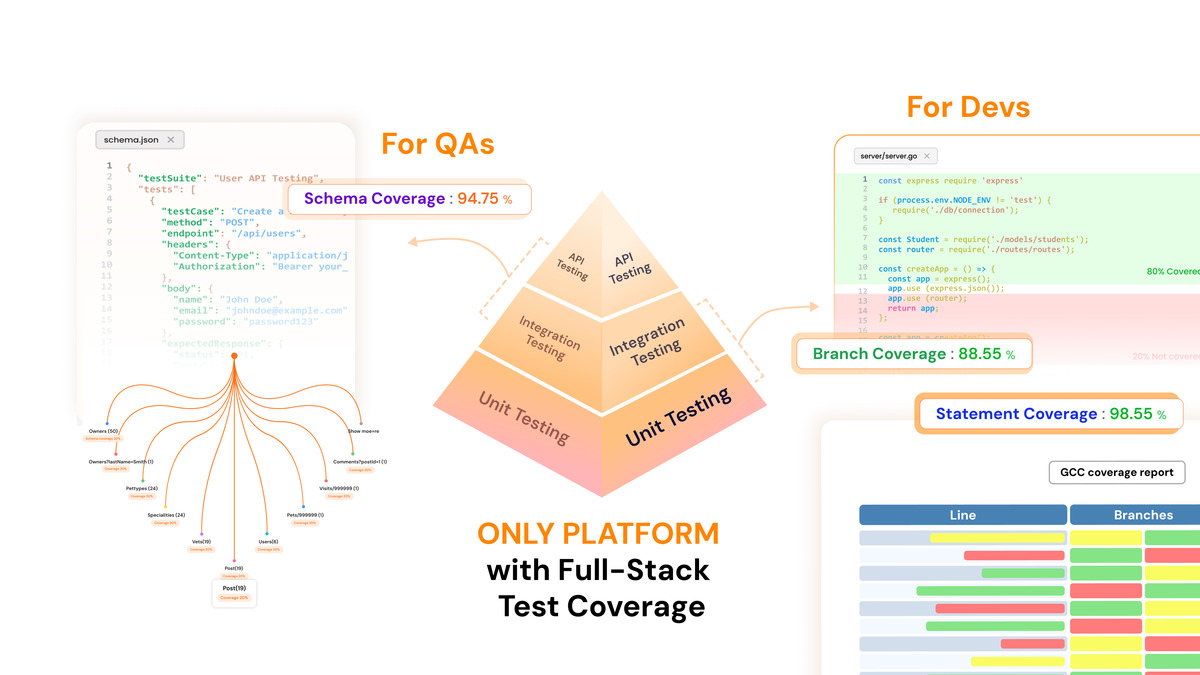

Keploy's test generation dashboard showing coverage across API statements, schema, and branch paths.

Source: keploy.io

The limitation is obvious: you need live traffic to produce tests. For greenfield projects with no users yet, Keploy creates nothing. For batch processing systems or background workers that don't serve HTTP requests, the traffic capture model doesn't apply. It's also fundamentally an integration test tool - it won't generate the targeted unit tests that Qodo Gen or Diffblue produce.

Keploy is a Y Combinator company with strong open-source adoption. The GitHub repository is active and the community support is responsive.

Verdict: The best choice for teams testing microservices or APIs with real traffic. Free tier is genuinely useful. Watch the usage overages on Pro.

Tusk - Best for Regression Prevention

Tusk is a newer entrant (Y Combinator backed) that focuses specifically on catching regressions. It monitors live traffic and business context from Jira/Linear, then produces unit and integration tests targeting the code paths most likely to break. Their claim: Tusk finds real-world regressions in 43% of pull requests.

The architecture is different from the others. Tusk ingests your existing test suite to learn your team's testing conventions, then creates new tests that follow the same patterns. It integrates with Linear and Jira so test generation is contextually aware of what features are being built. Tests run in isolated sandboxes and self-correct if they fail.

The free tier covers individual developers, and the Team plan comes with a 14-day trial. Team plan pricing requires contacting sales - the company doesn't publish numbers publicly, which is a friction point when assessing against tools with transparent pricing. Enterprise adds self-hosting, SAML/SSO, and custom reporting.

Verdict: Interesting approach that's worth evaluating if regression prevention is your primary concern. The lack of public pricing makes budgeting harder than it should be.

How to Pick

The answer depends almost completely on your stack and whether you want human-in-the-loop or fully autonomous operation.

Java enterprise teams - Start with Diffblue Cover. The autonomous mode and CI integration handle coverage backfilling without developer time per test. The $30/month Developer plan covers small-scale evaluation.

Multi-language teams focused on test quality - Qodo Gen is the right call. The $30/user/month Teams plan is justified if you're currently doing manual code review on PRs anyway - you're getting both for the price.

Teams already paying for GitHub Copilot - Don't add another tool. Use Copilot's @Test agent and assess whether coverage gaps are bad enough to justify a dedicated tool.

Microservice or API-heavy architectures - Keploy's traffic capture model gives you tests grounded in real behavior. The open-source tier is free, and the Pro tier at $19/user is cheaper than the alternatives.

Teams worried about regression rates on fast-moving codebases - Assess Tusk. The 43% regression catch rate claim is strong if it holds up in your environment; the free trial is the only way to verify it.

One thing all five tools share: they require a developer to review the produced tests before trusting them. The autonomous tools (Diffblue, Keploy, Tusk) require less per-test attention, but you still need someone checking that the tests cover the right behavior - not just incrementing a number.

Also worth reading: our AI Code Review Tools comparison and AI Coding Assistants roundup if you're building out a broader developer toolchain.

Sources

- Qodo Pricing Plans - Official pricing for Developer, Teams, and Enterprise tiers

- Diffblue Cover vs GitHub Copilot Benchmark (Diffblue) - Java coverage rates comparison study

- GitHub Copilot Testing for .NET - .NET Blog - GA announcement for Visual Studio 2026 v18.3

- GitHub Copilot Pricing 2026 - Free through Enterprise plan breakdown

- Keploy Pricing - Open Source, Playground, Pro, and Enterprise tiers

- Diffblue Cover Pricing - Community, Developer, Teams, Enterprise tiers

- Tusk AI Testing Platform - Overview and features

- Qodo vs GitHub Copilot Comparison (Augment Code) - Feature and accuracy breakdown

- Martian Code Review Bench - Qodo 64.3% score - Third-party benchmark results

- Keploy GitHub README - Open-source project documentation and architecture

Last updated

✓ Last verified April 15, 2026