Best RAG Tools and Vector Databases in 2026

A practical comparison of six vector databases and two RAG frameworks, with real pricing and benchmark data to help you pick the right stack.

Every RAG stack has two layers: something to store and retrieve your embeddings, and something to coordinate the retrieval and generation. The market has crowded up on both sides. On the vector database side alone you've got Pinecone, Qdrant, Weaviate, Milvus, Chroma, and pgvector all competing for the same workload. On the framework side, LangChain and LlamaIndex have both pivoted hard toward production RAG and now overlap significantly.

TL;DR

- For managed cloud with zero ops, Pinecone's Standard plan ($50/month minimum) is the fastest path to production

- Self-hosting Qdrant is the best free option that scales - 1GB free on cloud or unlimited self-hosted under Apache 2.0

- LlamaIndex beats LangChain on pure retrieval quality; LangChain wins if you need complex agent orchestration around your RAG

This article covers six vector databases and two RAG frameworks. I'm not going to rank them in a single leaderboard, because the right choice depends on scale, budget, and team ops capacity. What I'll do is give you enough concrete data to make the call yourself.

The Vector DB Layer

Pinecone - Best Fully Managed Option

Pinecone is the product teams reach for when they want vector search running in a day and don't want to think about infrastructure. It's fully managed, scales automatically, and comes with a free Starter tier that gives you 2GB storage, 2 million write units, and 1 million read units per month with no credit card required.

The Standard plan starts at a $50/month minimum and charges $0.33/GB/month for storage, $4 per million write units, and $16 per million read units. Enterprise is $500/month minimum and adds a 99.95% uptime SLA, private networking, and HIPAA support. For 10 million vectors at 1536 dimensions (OpenAI embeddings) with 5 million queries a month, expect roughly $64-80/month on Standard.

The trade-off is vendor lock-in. Pinecone's query API is proprietary, and migrating off it at scale is painful. The pricing model also becomes expensive at high query volumes - the read unit cost at $16/million adds up fast if your app is query-heavy.

Pinecone's serverless model works well for variable traffic. The read unit pricing model, though, punishes query-heavy workloads quickly.

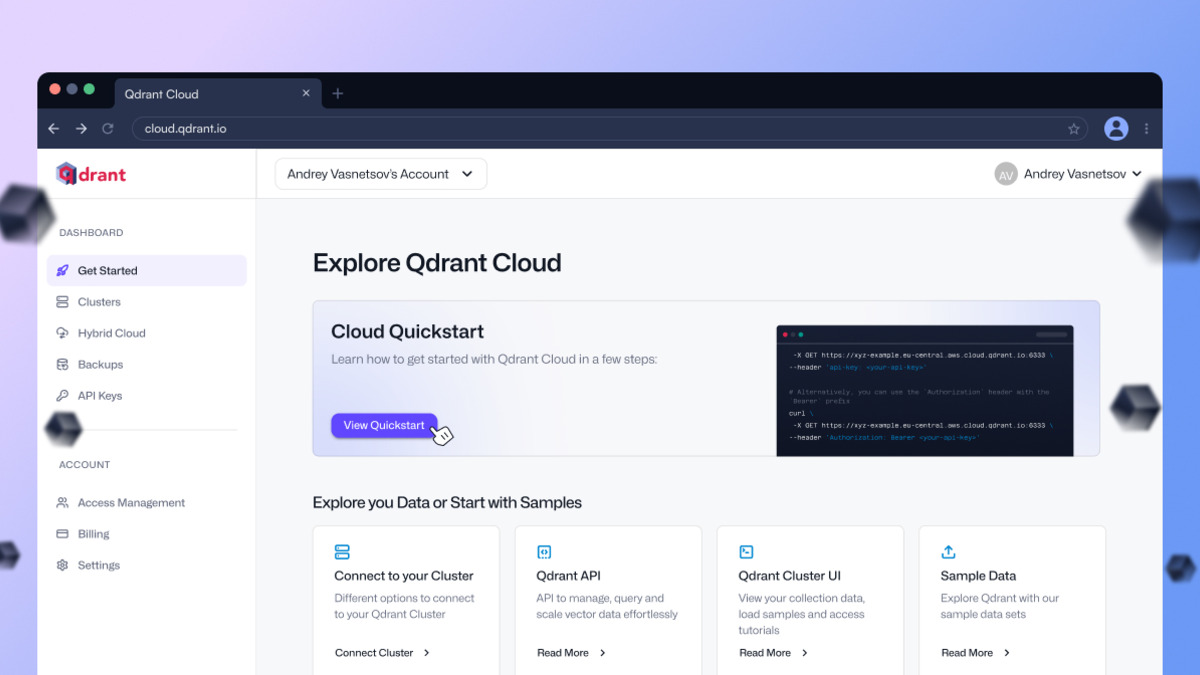

Qdrant - Best Open-Source Performance

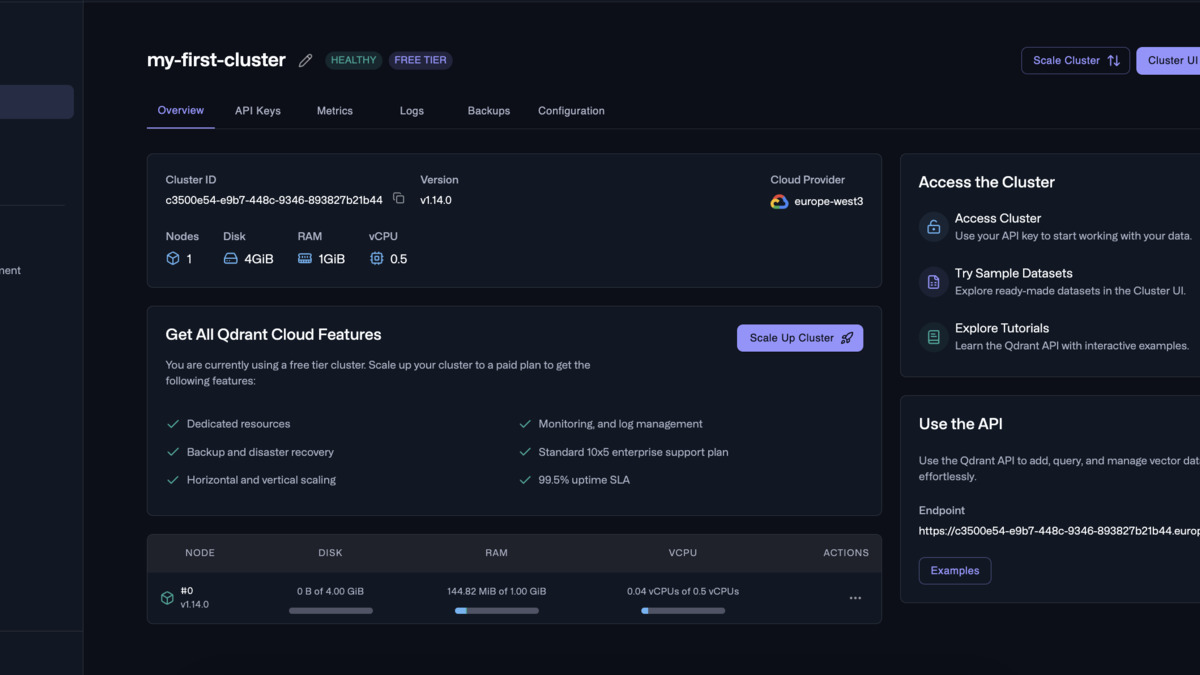

Qdrant is written in Rust, open-source under Apache 2.0, and built specifically for vector similarity search with complex metadata filtering. Its cloud free tier gives you a single-node cluster with 0.5 vCPU, 1GB RAM, and 4GB disk at no cost - permanently, not as a trial. Paid cloud tiers run on usage-based pricing for compute, memory, and storage, with a Premium tier adding SSO and private VPC links.

Self-hosted Qdrant is free with no limits other than your hardware. A three-node production cluster on AWS normally runs $300-500/month in infrastructure depending on the instance types you use.

Performance benchmarks from Qdrant's own testing show it reaches the highest RPS and lowest latencies across most configurations. Third-party benchmarks from LiquidMetal AI put Qdrant at around 8ms p50 query latency at 1M vectors in 768 dimensions. Weaviate comes in around 15ms p50, and Pinecone around 20ms p50 in the same tests - though benchmarks like these vary widely based on hardware, index configuration, and query patterns, so treat them as directional rather than definitive.

Where Qdrant truly stands out is filtered vector search. When you need to combine similarity search with strict metadata conditions - "find the 10 most similar items where category=electronics and price<100" - Qdrant's payload filtering engine is faster and more expressive than most alternatives.

Qdrant Cloud's dashboard shows real-time resource usage per node, making it straightforward to spot when you're approaching capacity limits.

Source: qdrant.tech

Qdrant Cloud's dashboard shows real-time resource usage per node, making it straightforward to spot when you're approaching capacity limits.

Source: qdrant.tech

Weaviate - Best for Hybrid Search

Weaviate's core differentiator is its built-in vectorization modules. You can push raw text into Weaviate and let it call an embedding model automatically, rather than managing that step yourself. It also ships with hybrid search that combines BM25 keyword matching with vector similarity out of the box.

Pricing on the managed cloud is confusing. The open-source version is free under BSD-3. The cloud product moved to a $25/month base after a 14-day trial, with additional charges for vector dimensions stored. Self-hosting Weaviate on cloud infrastructure typically costs $500-1,000/month for a production-grade setup because it's more memory-hungry than Qdrant or Milvus at comparable data volumes.

Weaviate's GraphQL API is distinctive and flexible, but it's also a learning curve if your team is used to REST or gRPC. At datasets above 100M vectors, Weaviate's resource consumption becomes a meaningful cost factor.

Milvus - Best for High-Throughput Scale

Milvus is the most popular open-source vector database by GitHub stars and production deployments. Its cloud-native distributed architecture aims to handle billions of vectors, and it handles concurrent queries better than most alternatives. Zilliz Cloud, the managed version, starts at $99/month.

Self-hosted Milvus is free under Apache 2.0. The operational overhead is higher than Qdrant or Chroma - Milvus requires etcd and MinIO (or S3) as dependencies, which adds to deployment complexity. For teams with existing Kubernetes experience this isn't a blocker, but for smaller teams it can slow down the initial setup by days.

The throughput advantage over Qdrant is real at scale. Under high-concurrency workloads with millions of vectors, Milvus's distributed query execution maintains lower latency than single-node alternatives. Zilliz's benchmarks show 1.5x better QPS than Pinecone on comparable workloads, though Zilliz produced those numbers so apply the appropriate skepticism.

Chroma - Best for Local Development

Chroma is the fastest zero-to-working vector search. Install it in Python, create a collection, and start querying - no external dependencies, no config files. It runs embedded in-process or as a standalone server, and it persists to disk automatically.

It's free and open-source under Apache 2.0. The API is clean and Python-first, with a TypeScript/JavaScript client available. For prototyping and local RAG development, nothing else comes close for developer experience.

In production, Chroma's limits become visible quickly. It's single-node, and scaling past a few million vectors requires switching to a different database. Use it to build and test your retrieval logic, then swap to Qdrant or Milvus when you're moving toward production.

pgvector - Best for PostgreSQL Shops

Pgvector turns a PostgreSQL database into a vector store. If your application already runs on Postgres, pgvector is worth serious consideration before you introduce a dedicated vector database. The extension is free and open-source, and it adds vector column types along with HNSW and IVF indexing.

With the HNSW index, pgvector achieves competitive recall and latency for datasets up to roughly 50 million vectors. Timescale's own benchmarks (note: vendor-produced) showed pgvector combined with their pgvectorscale extension reaching 471 QPS at 99% recall on 50M 768-dimension embeddings - not Milvus-level throughput, but sufficient for most RAG workloads. You also avoid the operational overhead of a second database, a second set of backups, and a second monitoring stack.

The main limitation is scale. Above 100 million vectors, purpose-built databases pull ahead on both cost and performance. For the typical enterprise RAG deployment sitting at 1-10 million vectors, pgvector is frequently the underrated correct answer.

Comparison Table

| Database | License | Cloud Free Tier | Starting Price | Best For |

|---|---|---|---|---|

| Pinecone | Proprietary | Yes (2GB) | $50/month (Standard) | Managed, zero ops |

| Qdrant | Apache 2.0 | Yes (1GB, permanent) | Usage-based | Filtered search, self-hosting |

| Weaviate | BSD-3 | 14-day trial | ~$25/month | Hybrid search, multi-modal |

| Milvus | Apache 2.0 | No (self-host free) | $99/month (Zilliz) | Billion-scale, high concurrency |

| Chroma | Apache 2.0 | N/A (free) | Free | Prototyping, local dev |

| pgvector | PostgreSQL | N/A (free extension) | Infrastructure cost | Teams already on Postgres |

Qdrant's cluster overview makes the node layout and storage distribution visible at a glance - useful when planning capacity for multi-node production deployments.

Source: qdrant.tech

Qdrant's cluster overview makes the node layout and storage distribution visible at a glance - useful when planning capacity for multi-node production deployments.

Source: qdrant.tech

The Framework Layer

The vector database holds your embeddings. The RAG framework handles chunking documents, calling the embedding model, running queries, and feeding results into the LLM. Two frameworks dominate this space: LlamaIndex and LangChain.

For related coverage on agent orchestration frameworks, see our best AI agent frameworks comparison.

LlamaIndex - Best for Retrieval Quality

LlamaIndex is purpose-built for connecting LLMs to your data. Its core strength is the richness of its indexing and retrieval primitives: hierarchical chunking (parent nodes summarize child chunks), Auto-Merging Retriever, hybrid BM25 plus vector search, and LlamaParse for extracting structured content from complex PDFs. These aren't afterthoughts - they're first-class features with good defaults.

Multiple third-party benchmarks put LlamaIndex at 92% retrieval accuracy versus LangChain's 85% on standard RAG test sets. Query latency also runs lower - roughly 0.8s per query versus 1.2s for LangChain in comparable environments. These numbers shift with your dataset and configuration, but the direction is consistent across comparisons from Prem AI and Latenode: LlamaIndex retrieves better, faster, with less configuration work.

The developer experience is good. You can get a working RAG pipeline in 20-30 lines of Python. As complexity grows, LlamaIndex added Workflows for multi-step agent patterns, so you're not forced to switch frameworks when your retrieval needs to involve tool calls or branching logic.

LangChain - Best for Agent Orchestration

LangChain's production story in 2026 centers on LangGraph, its graph-based agent orchestration layer. If you're building systems where RAG is one tool among many - where an agent needs to decide when to retrieve, when to call APIs, when to write code - LangChain gives you more control over that decision logic than LlamaIndex.

LangSmith, LangChain's observability and evaluation platform, is truly useful for teams running RAG in production. It traces every chain execution, logs prompts and outputs, and lets you run evals against a dataset without instrumenting your code manually. For teams who care about monitoring and debugging production retrieval (and you should), LangSmith reduces that overhead significantly. It's also a logical companion to the LLM observability tools and LLM eval tools we've covered separately.

The integration ecosystem is LangChain's other asset. Support for new LLMs, embedding models, and databases shows up in LangChain faster than anywhere else. If you're likely to swap models or databases as the market shifts, the integrations library saves time.

The cost: LangChain is more complex. More abstractions, more config, more things to debug. For teams whose primary goal is accurate document retrieval, that complexity is overhead without payoff.

Using Both

The architecture that's become common in larger production systems uses LlamaIndex for the data layer - ingestion, indexing, query engines - and wraps those as tools inside a LangGraph agent. LangGraph handles the orchestration logic; LlamaIndex handles the retrieval quality. If your requirements are complex enough to need both, this is a reasonable split.

How to Choose

Start with your scale and ops situation. If you're at prototype stage or under 1 million vectors, Chroma locally and then Qdrant's free cloud tier is a reasonable progression. No spend, no ops overhead, solid retrieval quality.

If you're already on PostgreSQL and expect to stay under 20-30 million vectors, pgvector is worth testing before you introduce a dedicated vector database. The simplification is real.

For teams that need managed cloud with minimal ops learning curve, Pinecone Standard is the least-friction option. Budget $50-200/month depending on query volume.

For teams that want open-source and anticipate real scale - millions of concurrent users, billions of vectors, or complex filtering requirements - Qdrant or Milvus are the right starting points. Qdrant is the simpler operational choice; Milvus wins at extreme throughput.

On the framework side: if your primary problem is retrieval quality, start with LlamaIndex. If you're building a system where retrieval is one component of a broader agent workflow, start with LangChain and LangGraph.

Sources

- Pinecone Pricing - official pricing page, verified March 2026

- Qdrant Pricing - official pricing page, verified March 2026

- Vector Search Benchmarks - Qdrant - Qdrant's own benchmark suite

- Best Vector Databases in 2026 - Firecrawl - benchmark data and feature comparison

- LangChain vs LlamaIndex (2026) - Prem AI - production comparison including latency and recall data

- Vector Database Comparison - LiquidMetal AI - third-party benchmark data for Pinecone, Qdrant, Weaviate

- pgvector Guide - Encore - pgvector production performance data

- Milvus vs Qdrant - Zilliz - throughput comparison (note: vendor-produced, apply appropriate skepticism)

- Top 9 Vector Databases - Shakudo - independent overview, March 2026

- RAG Frameworks - AIM Multiple - framework comparison including Haystack

- LangChain vs LlamaIndex 2026 - Latenode - retrieval accuracy and latency benchmark data

- pgvectorscale - Timescale/GitHub - pgvectorscale extension for high-throughput vector search on Postgres

✓ Last verified March 25, 2026