Microsoft Phi-4 Reasoning: Small Model, Big Math

Microsoft's Phi-4-reasoning family delivers near-70B-class math performance in a 14B open-weight package, but its tendency to overthink (producing long reasoning traces even for simple prompts) is a real cost, and the use case is narrower than the benchmarks suggest.

NVIDIA's Nemotron 3 Nano 4B packs a Mamba-dominant hybrid architecture, 262K token context, and 95.4% on MATH500 into a model that fits an 8GB Jetson Orin Nano.

Mistral Small 4 packs reasoning, vision, and agentic coding into a 119B MoE under Apache 2.0 - a serious small-model contender at a price that's hard to ignore.

Rankings of the best small language models under 10 billion parameters, comparing Phi-4, Gemma 3, Qwen 3.5, and more across key benchmarks.

Microsoft releases Phi-4-reasoning-vision-15B - a 15B open-weight multimodal model trained on 240 GPUs in 4 days that competes with 100B+ parameter models on math, science, and GUI understanding.

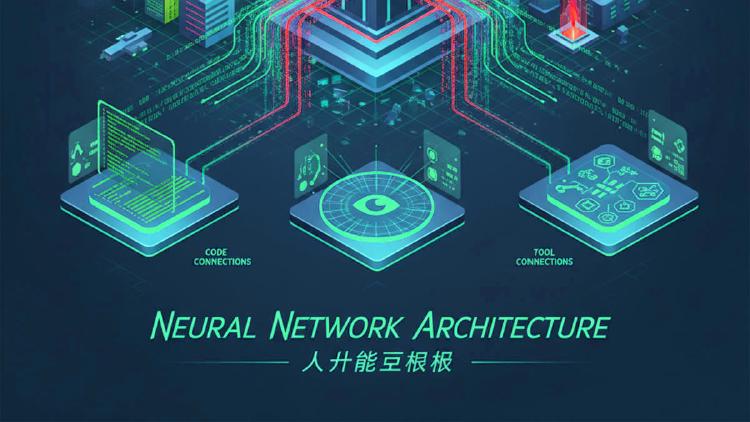

Alibaba completes the Qwen 3.5 lineup with four small models - 0.8B, 2B, 4B, and 9B - all natively multimodal, 262K context, Apache 2.0. The 9B outperforms last-gen Qwen3-30B and beats GPT-5-Nano on vision benchmarks.

Qwen3.5-0.8B is the smallest natively multimodal model in the Qwen 3.5 family - 0.8B parameters handling text, images, and video with 262K context. MathVista 62.2, OCRBench 74.5. Apache 2.0.

Qwen3.5-2B is a 2B dense multimodal model with 262K context, thinking mode, and native vision including video understanding. OCRBench 84.5, VideoMME 75.6. Apache 2.0 licensed.

Qwen3.5-4B is a 4B dense multimodal model that matches Qwen3-30B on MMLU-Pro and beats GPT-5-Nano on vision benchmarks. Runs on 8GB VRAM, Apache 2.0 licensed, 262K-1M context.

Qwen3.5-9B is a 9B dense model that outperforms Qwen3-30B on most benchmarks and beats GPT-5-Nano on vision tasks. Natively multimodal with 262K-1M context, Apache 2.0 licensed.

A detailed comparison of Moonshot AI's 1T-parameter Kimi K2.5 against Microsoft's 14B Phi-4 - the most extreme size gap in frontier AI, with 71x the parameters but vastly different use cases.

Microsoft's 14B dense transformer that consistently beats models 5x its size on MATH and GPQA, available under the MIT license for unrestricted commercial use.