Grok 4.20 - xAI's Multi-Agent Reasoning Flagship

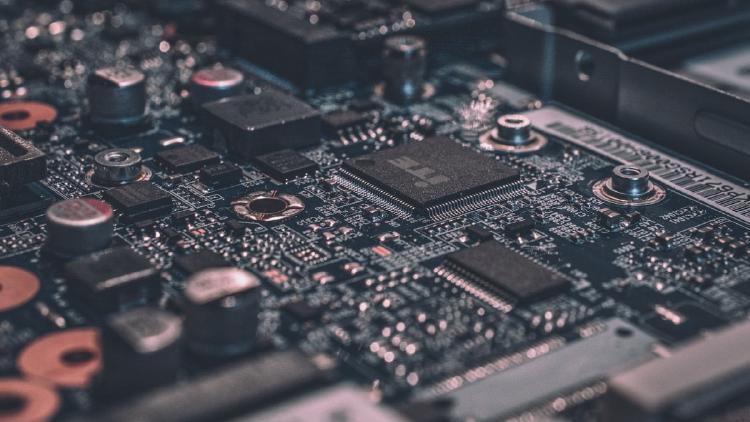

Grok 4.20 is xAI's current flagship LLM, with a 2M-token context window, a native multi-agent mode, and a reasoning toggle, priced at $2.00 per million input tokens.

ByteDance ships Seed1.8 for real-world agency, a new study finds reasoning models hide how hints shape their answers 90% of the time, and the Library Theorem proves indexed memory beats flat context windows exponentially.

Microsoft's Phi-4 reasoning family delivers near-70B-class math performance in a 14B open-weight package, but the overthinking problem is real and the use case is narrower than the benchmarks suggest.

Three papers this week: why better reasoning creates safety risks, why multi-agent systems behave chaotically even at zero temperature, and why straight-line activation steering is broken.

NVIDIA Nemotron 3 Super is a 120B-parameter open model with 12B parameters active at inference, combining Mamba-2, LatentMoE, and Multi-Token Prediction for agentic workloads with a 1M-token context window.

Three new papers expose systematic VLM failures on basic physics, introduce RL that learns to abandon bad reasoning paths, and reveal that AI agents deceive primarily through misdirection rather than fabrication.