Microsoft Foundry Bets on Open Models With Fireworks

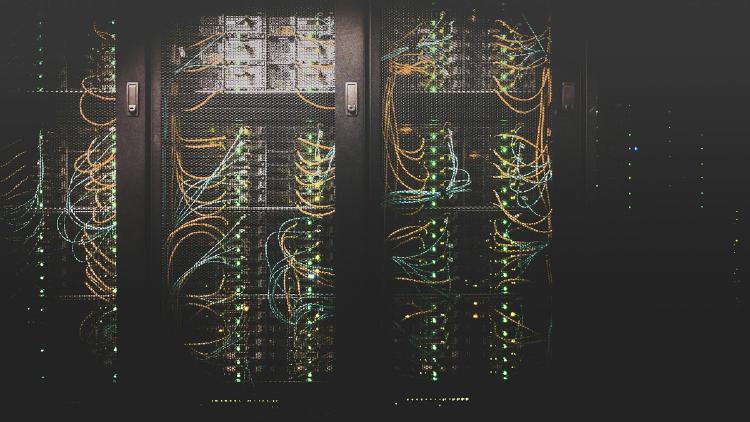

Microsoft Azure's Foundry platform now runs Fireworks AI's inference engine, bringing DeepSeek V3.2, Kimi K2.5, and MiniMax M2.5 into enterprise AI under a unified control plane.

Mistral AI's unified MoE model - 119B total parameters, 6B active per token, 128 experts, 256K context, configurable reasoning, Apache 2.0 license.

Mistral AI releases Small 4, a 119B MoE with only 6B active parameters, 256K context, configurable reasoning, and an Apache 2.0 license, plus a new NVIDIA partnership to co-develop frontier open models.

Kraken launched an open-source Rust CLI with a built-in MCP server that lets AI agents like Claude Code and Codex trade crypto, manage portfolios, and paper-trade against live markets.

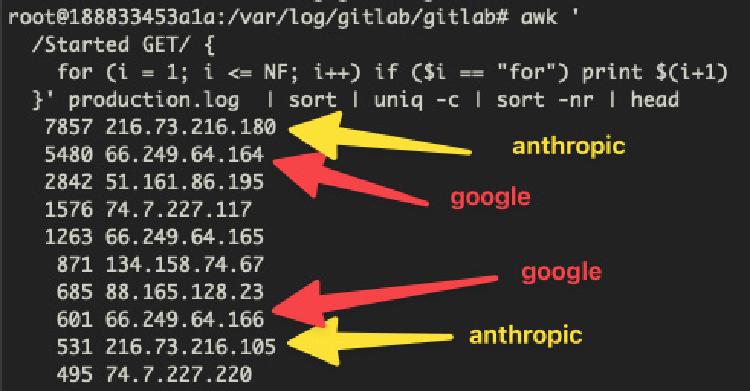

AI training crawlers from Anthropic, Google, and OVHcloud knocked a French national research institute's GitLab server offline for hours, leaving nobody at CNRS's Institute of Complex Systems able to work.

LangChain's open-source Deep Agents framework brings planning, subagents, and persistent context to autonomous agents tackling complex multi-step work.

![FLUX.2 [dev]](https://awesomeagents.ai/images/models/flux-2-dev_hu_94ec9e57c8624223.jpg)

Black Forest Labs' 32B open-weight image model - the most powerful open alternative for text-to-image, editing, and multi-reference generation with up to 10 reference images.

![FLUX.2 [klein] 4B](https://awesomeagents.ai/images/models/flux-2-klein-4b_hu_dec233f8cc7116ef.jpg)

Black Forest Labs' fastest open-source image generation model - 4B parameters, Apache 2.0 license, sub-second generation on consumer GPUs with 13GB VRAM.

Tobi Lütke ran Karpathy's autoresearch loop against the Liquid templating engine he created 20 years ago, producing 93 commits from 120 experiments that cut parse+render time by 53% and allocations by 61%.

METR found maintainers would reject roughly half of AI PRs that pass SWE-bench automated grading, with a 24-point gap that suggests benchmark scores substantially overstate production readiness.

Italian-Legal-BERT is a 110M-parameter domain-adapted BERT model for Italian legal NLP, trained on 3.7GB of court decisions from Italy's National Jurisprudential Archive.

Researchers at Scuola Superiore Sant'Anna in Pisa built Italian-Legal-BERT, a 110M-parameter model trained on 3.7GB of Italian court decisions that outperforms general Italian BERT on legal NLP tasks.