NVIDIA's Secret Chip Fuses GPU and Groq for OpenAI

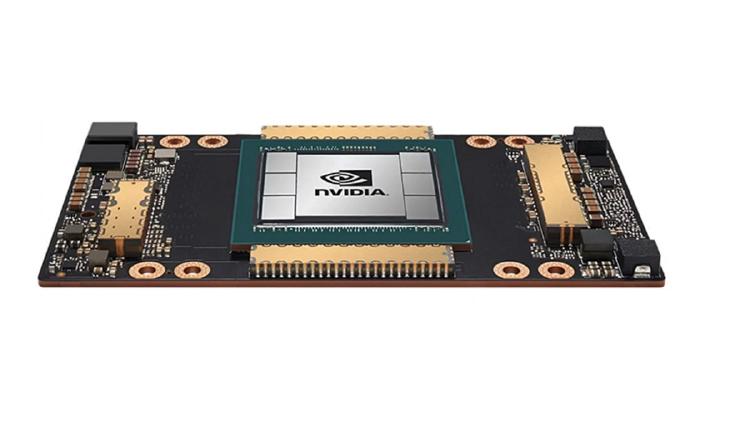

NVIDIA will unveil a new inference processor built on Groq's LPU architecture at GTC 2026, with OpenAI as its first major customer allocating 3 GW of dedicated capacity.

Huawei debuts its Atlas 950 SuperPoD at MWC Barcelona - 8,192 NPUs delivering 8 ExaFLOPS - marking its first overseas showcase of the AI supercomputer that directly targets NVIDIA's cluster dominance.

Full specs, benchmarks, and analysis of the NVIDIA Rubin CPX - a purpose-built inference GPU with 128GB GDDR7, 30 PFLOPS NVFP4, and 3x faster attention versus Blackwell, targeting million-token context workloads.

Awesome Agents launches a dedicated Hardware section with detailed spec pages for 21 GPUs, TPUs, and AI accelerators - from datacenter flagships to home lab favorites.

Complete specs, benchmarks, and analysis of the NVIDIA Rubin R200 GPU - the post-Blackwell flagship with 288GB HBM4, 22 TB/s bandwidth, and 50 PFLOPS FP4.

Complete specs, benchmarks, and analysis of the NVIDIA A100 80GB SXM - the Ampere-architecture GPU that remains the most widely deployed AI accelerator in the world.