AI Memory Math, Label-Free RL, and the Productivity Ceiling

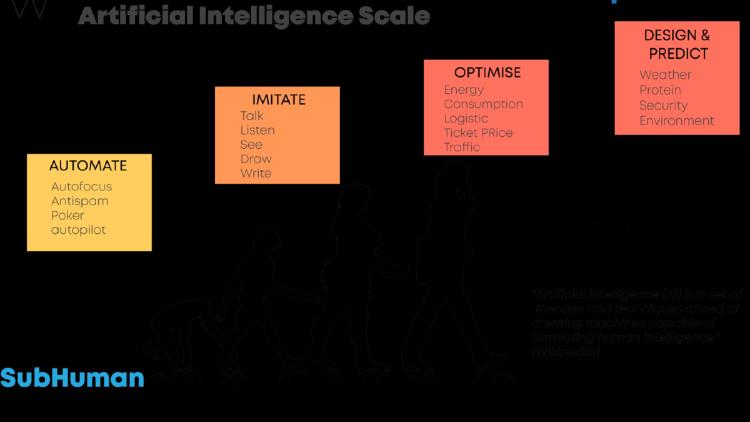

New proofs show semantic memory must forget, SARL trains reasoning models without labels, and the Novelty Bottleneck explains why AI won't eliminate human work.

ByteDance ships Seed1.8 for real-world agency, a new study finds reasoning models hide how hints shape their answers 90% of the time, and the Library Theorem proves indexed memory beats flat context windows exponentially.

Three arXiv papers rethink transformer theory, expose fatal flaws in in-context LLM memory, and introduce grey-box agent security testing.

Anthropic's claude.com/import-memory page walks users through a two-step process to transfer stored memories from ChatGPT, Gemini, or any other chatbot into Claude, with no data loss and no starting over.

Nous Research's Hermes Agent is an open-source CLI agent with persistent multi-level memory, cross-platform messaging support, subagent delegation, and a growing skills ecosystem.