Grok 4.20 - xAI's Multi-Agent Reasoning Flagship

Grok 4.20 is xAI's current flagship LLM, with a 2M-token context window, a native multi-agent mode, and a reasoning toggle, priced at $2.00 per million input tokens.

NVIDIA Nemotron 3 Super is the strongest open-weight model for agentic coding as of March 2026, but its efficiency-first design means real trade-offs on general knowledge and chat quality.

A practical guide to choosing between RAG and fine-tuning for your AI project, with cost comparisons, latency trade-offs, and a decision framework.

Fine-tuning trains a pre-trained AI model on your own data so it learns your specific task, tone, or domain. Here is how it works, what it costs, and when to use it.

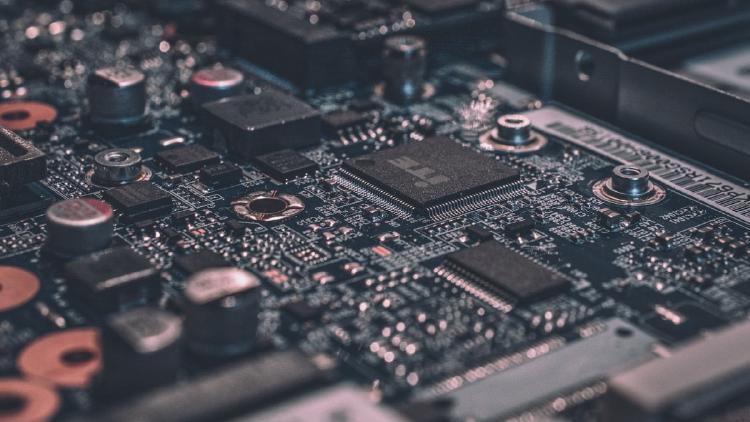

A large language model is an AI system trained on billions of words to understand and generate human language. Learn how LLMs work, what they can do, and how to pick the right one.

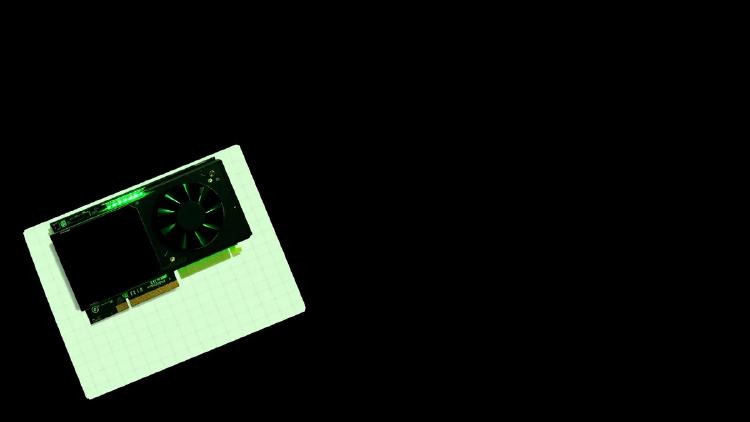

NVIDIA's new Nemotron-Cascade-2-30B-A3B activates just 3B parameters per token, runs on a single RTX 4090, and outscores NVIDIA's own 120B model on coding and math benchmarks.