AI Music Generation Leaderboard 2026: Suno, Udio, More

Ranked benchmarks for AI music generation tools, covering Fréchet Audio Distance (FAD), CLAP score, mean opinion score (MOS) listening tests, and MusicCaps evaluation across text-to-music, lyric-to-song, and stem remixing.

Rankings of the best LLMs on code completion benchmarks - HumanEval, LiveCodeBench, BigCodeBench, MBPP, and competitive programming - with methodology notes on contamination. Updated April 2026.

Rankings of AI models on creative writing quality benchmarks: EQ-Bench Creative Writing v3, Antislop evaluations, and human-preference judging. Which LLMs can actually write?

Rankings of the best LLMs for on-device edge inference - phones, laptops without GPUs, Raspberry Pi, and Jetson - scored by quality benchmarks and real tokens/sec on iPhone, MacBook, and Raspberry Pi 5.

Rankings of AI models on financial reasoning benchmarks: FinanceBench, FinQA, TAT-QA, CFA-Bench, and more - where hallucination costs real money.

Rankings of AI models on legal benchmarks - LegalBench, LexGLUE, CaseHOLD, ContractNLI, Bar Exam MBE, and more. Where hallucinated citations already got lawyers sanctioned.

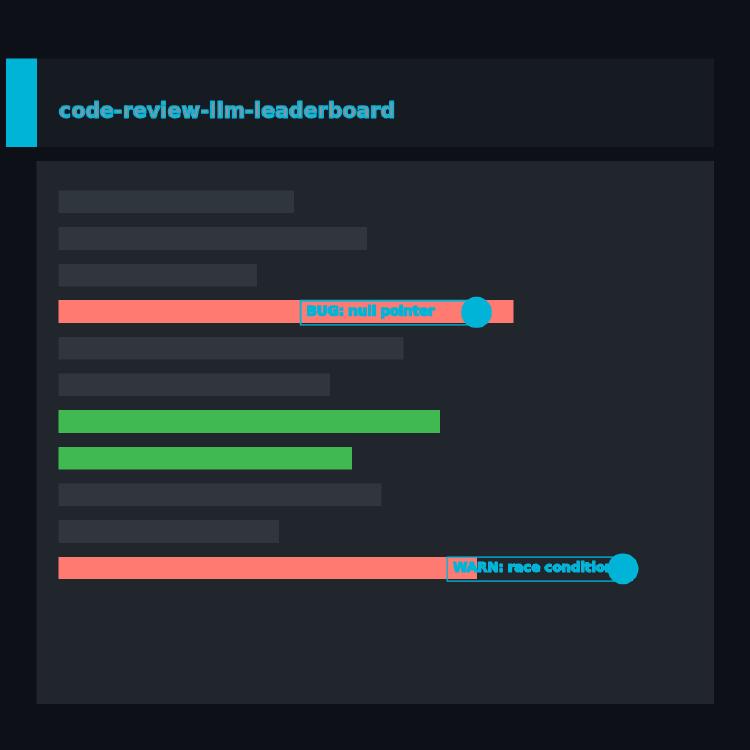

Rankings of the best LLMs and AI agents at automated code review - spotting bugs in diffs, commenting on PRs, and surfacing non-obvious issues across CodeReviewer, CR-Bench, and real-world evaluations.

Rankings of 14 frontier LLMs by adversarial robustness - how well they resist jailbreaks, prompt injection, and harmful-behavior elicitation across HarmBench, AdvBench, StrongREJECT, JailbreakBench, and AgentHarm.

How much quality do LLMs lose when quantized from BF16 to INT8, Q6, Q5, Q4, Q3, Q2? Per-model delta tables across MMLU, HumanEval, and perplexity, with VRAM and throughput data for every major quantization format.

Rankings of AI models on medical QA benchmarks - MedQA USMLE, MedMCQA, PubMedQA, MMLU-Medical, HealthBench, and more. Where a wrong answer has clinical consequences.

Rankings of dedicated reward models and frontier LLMs as judges across RewardBench, RewardBench-2, and JudgeBench - benchmarks that measure preference alignment and human agreement.

Rankings of VLA models and embodied AI systems on real robotics benchmarks: CALVIN, SimplerEnv, LIBERO, RoboCasa, DROID, and real-robot success rates as of April 2026.