Thinking Machines Builds AI That Listens While Talking

Mira Murati's startup unveils TML-Interaction-Small, a 276B MoE model that hits 0.40-second response latency by listening and generating speech at the same time.

Lumai's optical AI inference server uses light-based computing to run billion-parameter LLMs with up to 90% less power than GPUs.

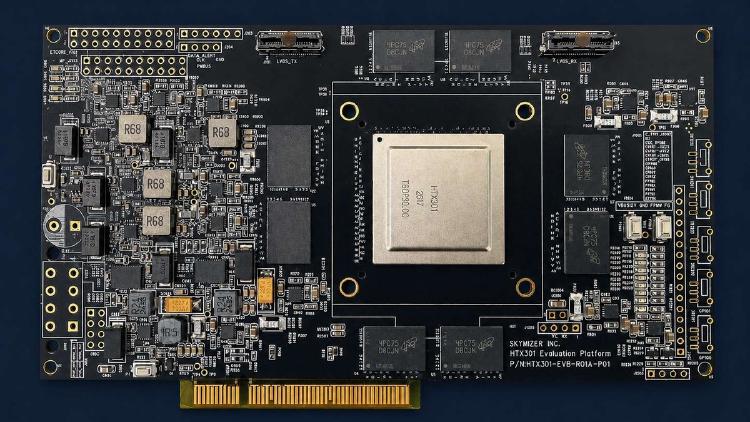

Skymizer's HTX301 uses six 28nm chips and 384 GB LPDDR5 to run 700B-parameter LLMs on a single PCIe card at just 240W.

Meta's MTIA 450 doubles HBM bandwidth to 18.4 TB/s and adds FlashAttention hardware acceleration for GenAI inference in 2027.

Meta's second-gen ASIC delivers 6 PFLOPS FP8 and 288 GB HBM for GenAI and recommendation inference inside Meta's data centers.

Subquadratic's SubQ claims the first linear-scaling LLM with a 12M-token window - but private beta access, self-reported benchmarks, and a 17-point MRCR gap make independent verification the only test that matters.

SubQ is the first LLM built on a fully subquadratic attention architecture, achieving a 12M-token research context and 52x faster inference than FlashAttention at 1M tokens.

Zyphra's ZAYA1-8B matches Claude 4.5 Sonnet on HMMT 2025 math benchmarks at just 760M active parameters, trained entirely on AMD Instinct MI300X GPUs under Apache 2.0.

Subquadratic exits stealth with SubQ, the first frontier model built on a sparse-attention architecture, a $29M seed round, and a 12M-token context window that costs a fraction of Opus.

Nebius agrees to acquire 20-person MIT inference startup Eigen AI for $643M, betting that optimizing every token per Nvidia chip is the real moat in the AI infrastructure race.

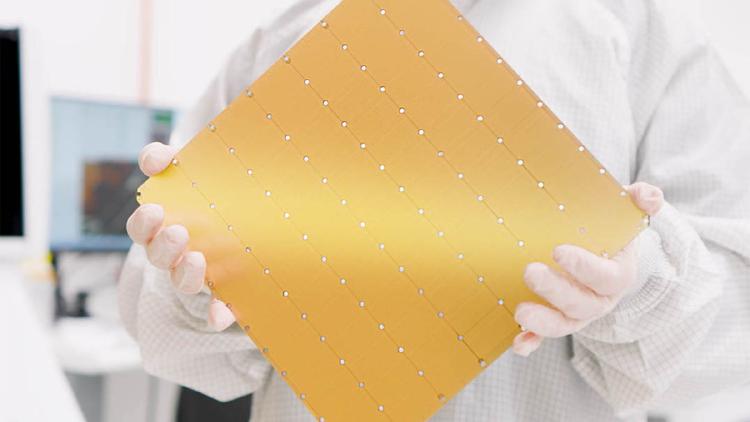

The Cerebras WSE-3 is the largest chip ever built - a TSMC 5nm wafer with 900,000 AI cores, 44GB SRAM, and 21 PB/s bandwidth. It now powers a $20B OpenAI deal and Amazon Bedrock deployments.

Google's TPU 8i is a purpose-built inference chip with 10.1 PFLOPS of FP4 compute, 288GB HBM3e at 8,601 GB/s, and a Boardfly topology that cuts collective latency 5x for agentic AI workloads.