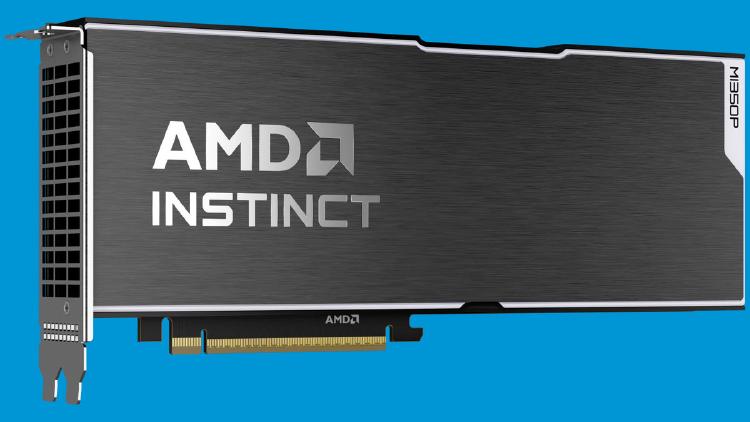

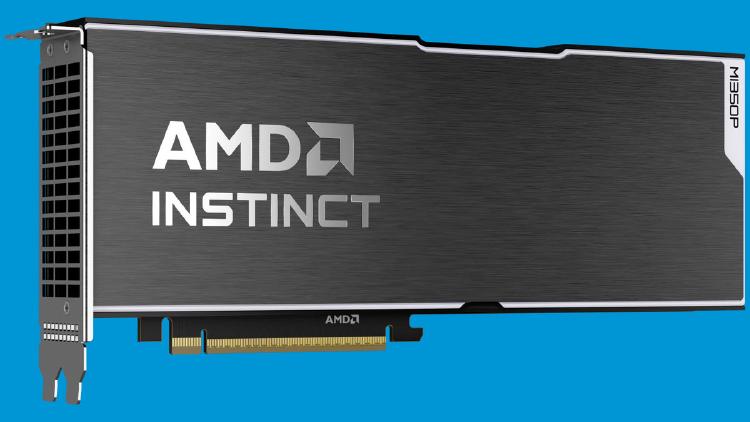

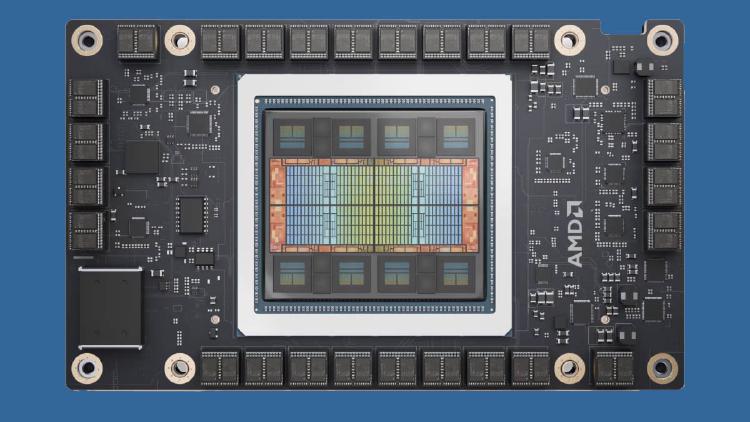

AMD Instinct MI350P - CDNA 4 PCIe Inference Card

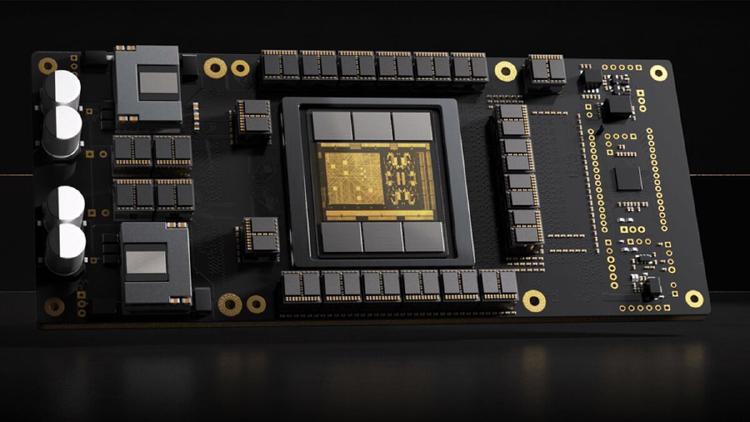

AMD Instinct MI350P brings CDNA 4 to a standard PCIe slot: 144GB HBM3E, 4 TB/s bandwidth, 2.3 PFLOPS MXFP8, and 600W passive cooling for air-cooled servers.

Google's TPU 8i is a purpose-built inference chip with 10.1 PFLOPS FP4, 288GB HBM3e at 8,601 GB/s, and a Boardfly topology that cuts collective latency 5x for agentic AI workloads.

Google's TPU 8t packs 12.6 PFLOPS FP4 and 216GB HBM3e per chip, scaling to 9,600-chip superpods with 121 ExaFLOPS and 2 petabytes of shared HBM for massive model training.

The Rebellions RebelRack packs 32 Rebel100 chiplet NPUs with 4.5TB HBM3E and 153.6 TB/s aggregate bandwidth into a rack drawing just 5kW - roughly 4x the compute-per-watt of an H100 DGX.

AMD Instinct MI325X specs, benchmarks, and analysis. 256GB HBM3e at 6 TB/s, 2.6 PFLOPS FP8, CDNA 3 architecture - the memory-capacity upgrade to the MI300X targeting large model inference.

Huawei Atlas 350 specs, benchmarks, and analysis. Ascend 950PR chip, 112GB HiBL 1.0 HBM, 1.56 PFLOPS FP4, 600W - China's first domestically developed FP4-capable AI accelerator.

Microsoft Maia 200 specs, benchmarks, and architecture analysis. TSMC 3nm, 216GB HBM3e, 10 PFLOPS FP4, 750W - Microsoft's first inference-only silicon deployed in Azure.

AMD's flagship CDNA 5 AI GPU with 432GB HBM4, 40 PFLOPS FP4, and 2nm chiplet design targeting H2 2026.

NVIDIA's Rubin-based rack system with 144 R200 GPUs, 3.6 ExaFLOPS FP4, 20 TB HBM4 - arriving H2 2026.

Complete specs, benchmarks, and analysis of AWS Trainium3 - Amazon's TSMC 3nm AI chip with 2.52 PFLOPS FP8, 144GB HBM3e, and NeuronLink-v4, powering Anthropic's Claude through Project Rainier.

Full specs and critical analysis of the Etched Sohu - a transformer-specific ASIC claiming 500K+ tokens/sec on Llama 70B, built on TSMC 4nm with 144GB HBM3E. Bold claims, but no independent benchmarks yet.

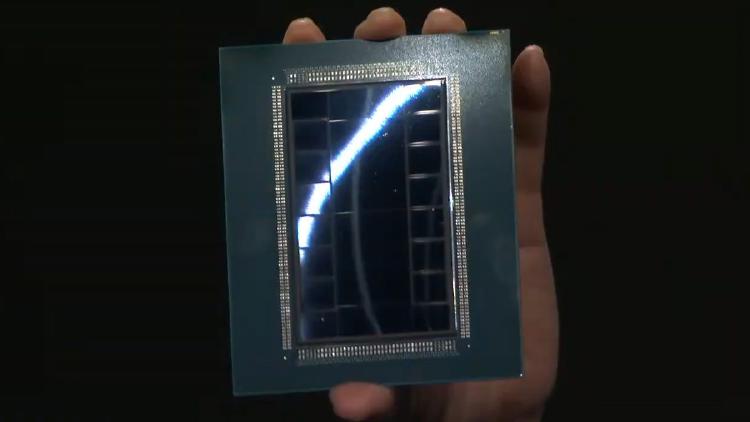

Complete specs and analysis of the AMD Instinct MI440X - a CDNA 5 enterprise GPU with 432GB HBM4, 19.6 TB/s bandwidth, and TSMC 2nm compute chiplets.