AWS Trainium2 - Amazon's Cloud Training Chip

AWS Trainium2 is Amazon's second-generation custom AI training chip, powering EC2 Trn2 instances with 96GB HBM2e per chip and tight integration with the AWS Neuron SDK and SageMaker ecosystem.

Full specs and analysis of the Cambricon MLU590 - 192GB HBM2e, ~2,400 GB/s bandwidth, TSMC 7nm, and what it means for AI inference outside the NVIDIA ecosystem.

Huawei Ascend 910B specs, benchmarks, and real-world performance. 64GB HBM2e, ~1,200 GB/s bandwidth, ~600 TFLOPS FP16 - the chip that trained DeepSeek.

Huawei Ascend 910C specs, benchmarks, and performance analysis. 96GB HBM2e, ~1,800 GB/s bandwidth, ~800 TFLOPS FP16 - China's flagship AI chip under US sanctions.

Intel Gaudi 3 is a TSMC 5nm AI accelerator with 128GB HBM2e and 1,835 TFLOPS FP8 performance, positioned as a cost-effective alternative to NVIDIA H100 for training and inference workloads.

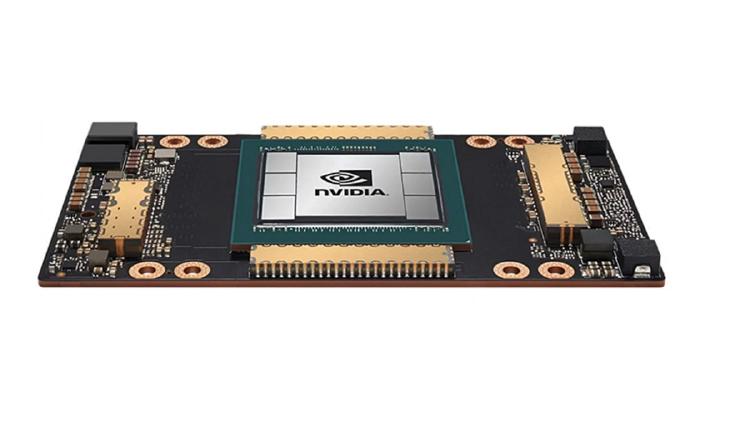

Complete specs, benchmarks, and analysis of the NVIDIA A100 80GB SXM - the Ampere-architecture GPU that remains the most widely deployed AI accelerator in the world.