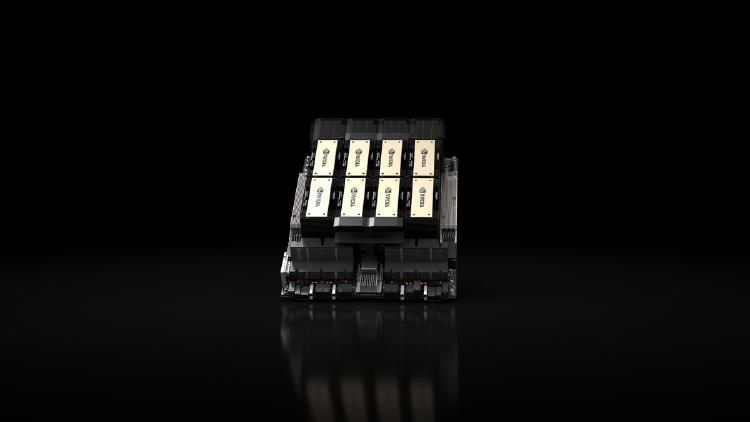

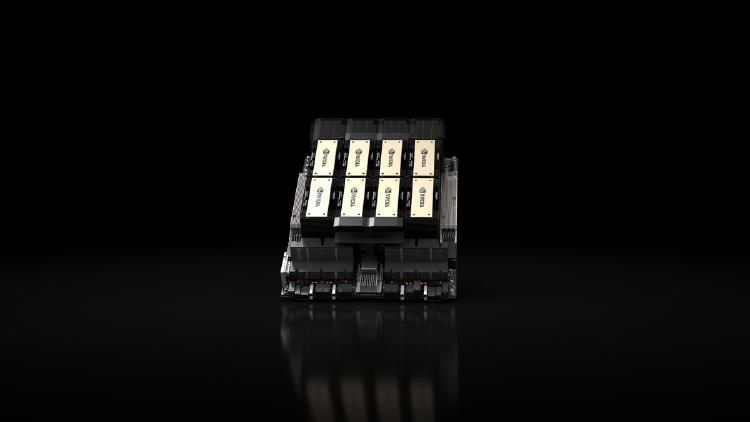

NVIDIA H200 - Inference-Optimized Hopper

Complete specs, benchmarks, and analysis of the NVIDIA H200 - the HBM3e-equipped Hopper GPU that delivers 76% more memory and 43% more bandwidth than the H100 for inference workloads.

Full specs and benchmarks for the NVIDIA GeForce RTX 3090 - 24GB GDDR6X at 936 GB/s, Ampere architecture, and why used 3090s remain the best value option for local AI inference in 2026.

Full specs and benchmarks for the NVIDIA GeForce RTX 4090 - 24GB GDDR6X, 1,008 GB/s bandwidth, Ada Lovelace architecture, and why it remains the default home lab GPU for local AI inference.

Full specs and benchmarks for the NVIDIA GeForce RTX 5090 - 32GB GDDR7, 1,792 GB/s bandwidth, Blackwell architecture, and what it means for local AI inference.

Two very different approaches to desktop AI hardware - a 32 GB eGPU with 1,792 GB/s bandwidth versus a 128 GB unified memory mini PC with full CUDA. Which one should you buy?

A review of the Gigabyte AORUS RTX 5090 AI BOX - a liquid-cooled eGPU packing a full desktop RTX 5090 with 32 GB GDDR7, connecting to any laptop over Thunderbolt 5 for $2,999.