AMD Helios: 72-GPU Rack for AI at Scale

AMD Helios packs 72 Instinct MI455X GPUs and 31 TB of HBM4 into a single rack that delivers 2.9 exaFLOPS of FP4 compute for AI workloads.

NVIDIA and IREN plan 5 GW of DSX-aligned AI factories, backed by a $2.1B investment warrant and a $3.4B, five-year GPU cloud contract.

Anthropic gains 220,000 GPUs from SpaceX's Colossus 1 in Memphis, immediately doubling Claude Code five-hour rate limits for all paid plans.

Six companies just released MRC, an open networking protocol that routes AI training traffic across hundreds of simultaneous paths to end GPU idle time at supercomputer scale.

May 2026: Together AI added Llama 4 and DeepSeek fine-tuning, Fireworks raised deployment prices by $1/hr, and H100 rentals fell below $2.40/hr.

Complete buying guide for AI home workstations in 2026 - pre-built machines and DIY builds for running local LLMs from 3B to 70B+ parameters, with benchmarks, part lists, and price-tier comparisons.

Raw GPU rental rates across 20+ providers normalized to per-GPU-hour - H100, H200, A100, L40S, RTX 4090, on-demand vs spot vs reserved, with hidden costs and value-tier recommendations.

A benchmark-driven comparison of the top open-source LLM inference servers - vLLM, SGLang, TGI, llama.cpp, TensorRT-LLM, LMDeploy, and more.

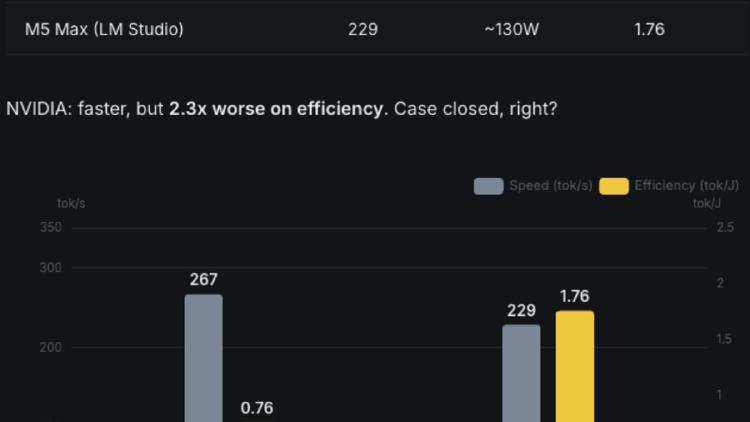

Two researchers fused all 24 layers of Qwen 3.5-0.8B into a single CUDA kernel launch, making a five-year-old RTX 3090 deliver 1.8x the throughput of an M5 Max at equal or better efficiency. The gap was software, not silicon.

The AMD Instinct MI430X is AMD's CDNA 5 HPC accelerator with 432GB HBM4, full FP64 support, and 19.6 TB/s bandwidth - designed for sovereign AI and scientific supercomputing alongside the MI455X AI GPU.

Allbirds sold its entire footwear business for $39 million - roughly 1% of its $4 billion peak valuation - and is rebranding as NewBird AI to buy GPUs and rent compute to AI developers. The stock quadrupled in a day.

Meta expands its CoreWeave partnership by $21 billion through December 2032, bringing total commitments to $35 billion and locking in early NVIDIA Vera Rubin deployments.