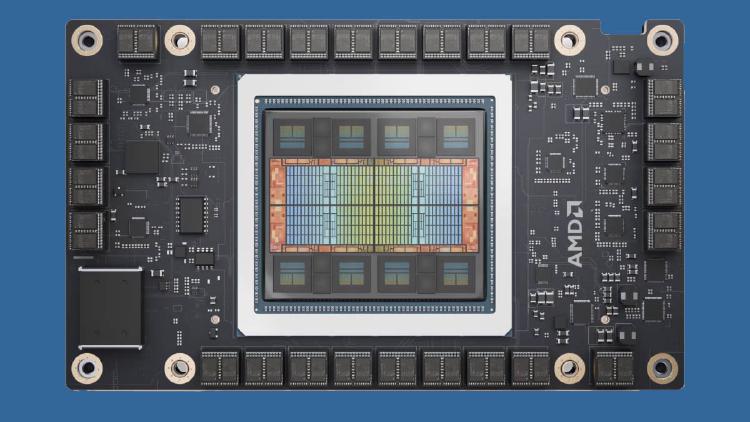

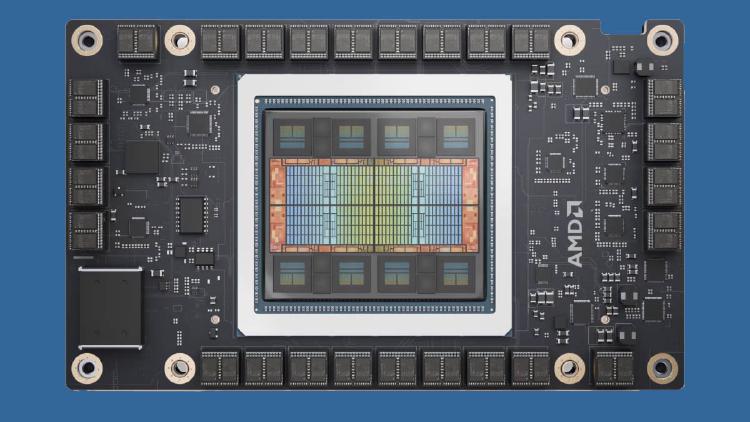

AMD Instinct MI325X - 256GB CDNA3 for Inference

AMD Instinct MI325X specs, benchmarks, and analysis. 256GB HBM3e at 6 TB/s, 2.6 PFLOPS FP8, CDNA3 architecture - the memory-capacity upgrade to the MI300X targeting large model inference.

Mistral AI secures $830M in debt financing from seven banks to build a 13,800-GPU Nvidia GB300 cluster near Paris, targeting 200MW of European compute by 2027.

Jensen Huang confirmed at GTC 2026 that NVIDIA has export licenses for multiple Chinese customers and is restarting H200 production, with 82,000 GPUs ready to ship after nearly a year of zero deliveries.

Tencent's 2025 results beat estimates, with the company spending $2.6B on AI last year and planning to at least double that in 2026 despite ongoing GPU supply constraints from US export controls.

NVIDIA opens GTC 2026 with the Vera Rubin platform - six co-designed chips delivering 50 PFLOPS of inference per GPU and 10x lower token cost than Blackwell.

AMD's flagship CDNA 4 AI GPU with 432 GB HBM4, 40 PFLOPS FP4, and 2nm chiplet design targeting H2 2026.