Qwen3.5-0.8B

Qwen3.5-0.8B is the smallest natively multimodal model in the Qwen 3.5 family - 0.8B parameters handling text, images, and video with 262K context. MathVista 62.2, OCRBench 74.5. Apache 2.0.

Qwen3.5-2B is a 2B dense multimodal model with 262K context, thinking mode, and native vision including video understanding. OCRBench 84.5, VideoMME 75.6. Apache 2.0 licensed.

Qwen3.5-4B is a 4B dense multimodal model that matches Qwen3-30B on MMLU-Pro and beats GPT-5-Nano on vision benchmarks. Runs on 8GB VRAM, Apache 2.0 licensed, 262K-1M context.

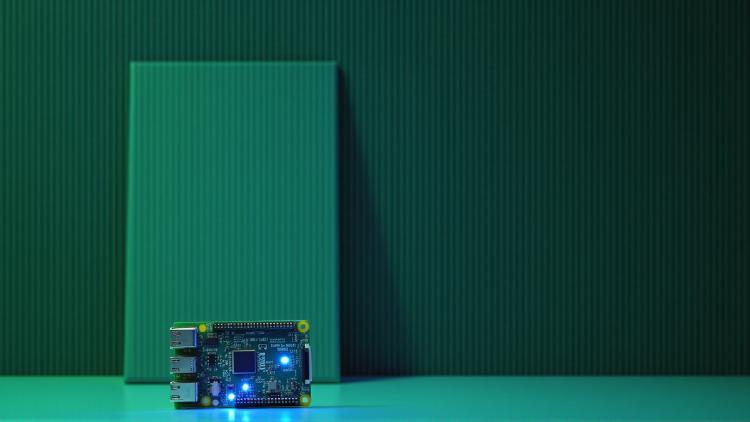

Complete specs, benchmarks, and analysis of the Hailo-10H - a 2.5W edge AI accelerator with 40 TOPS INT4, on-module LPDDR4, and the ability to run LLMs and VLMs on a Raspberry Pi at 10 tokens per second.

PicoClaw runs OpenClaw-compatible skills on a Raspberry Pi 5. We tested whether a $10 edge AI agent can deliver meaningful automation on hardware you can hold in your hand.

Comparing Kimi K2.5's trillion-parameter benchmark dominance against Qwen3.5-27B's single-GPU accessibility - two models from entirely different tiers that both have compelling use cases.

Samsung's Galaxy S26 launches with Perplexity, Google Gemini, and a revamped Bixby as competing AI agents, plus on-device image generation via EdgeFusion.

MIT spinoff Liquid AI releases LFM2-24B-A2B, a hybrid mixture-of-experts model that activates only 2.3B parameters per token, fits in 32GB RAM, and hits 112 tokens per second on a consumer CPU.

Cohere Labs releases Tiny Aya, a 3.35B open-weight multilingual model that beats Gemma 3 4B in 46 of 61 languages on translation and runs at 32 tokens/sec on an iPhone.