China Restricts OpenClaw AI in Government and Banks

Chinese authorities ordered government agencies and state-owned banks to remove or restrict OpenClaw, citing security risks from the AI agent's autonomous operation and broad data access.

Tencent hosted free OpenClaw installations at its Shenzhen headquarters while the local government rolled out subsidies of up to 10 million yuan for companies building on the platform.

MiniMax M2.5 matches Claude Opus 4.6 on SWE-bench at 1/20th the price, but a spike in hallucinations and a distillation controversy complicate the story.

China announced up to $70 billion in semiconductor and AI subsidies during the Two Sessions - one of the largest government chip programs in history, aimed at full self-sufficiency as US export controls tighten.

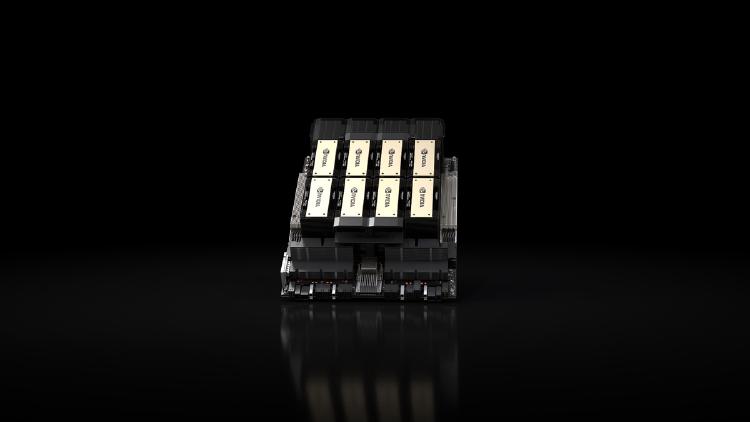

The Trump administration is considering limiting Chinese companies to 75,000 Nvidia H200 GPUs each - less than half what Alibaba and ByteDance want - while zero chips have shipped despite months of export approvals.

Zhipu AI's GLM-5 is a 744B MoE model with 40B active parameters, trained on 100K Huawei Ascend chips. It scores 77.8% on SWE-bench and 50 on the Artificial Analysis Intelligence Index, and is released under the MIT license.

Junyang Lin, the 32-year-old architect behind Alibaba's Qwen open-source AI models, announced his departure in a brief tweet - the fourth major exit from Tongyi Lab in two years.

China's National People's Congress opens this week with a 15th Five-Year Plan that puts $70 billion in semiconductor subsidies and AI-plus manufacturing at the center of its tech race with the West.

Huawei debuts its Atlas 950 SuperPoD at MWC Barcelona - 8,192 NPUs delivering 8 ExaFLOPS - marking its first overseas showcase of the AI supercomputer that directly targets Nvidia's cluster dominance.

Zhipu AI's 744B open-source model GLM-5 was trained entirely on Huawei Ascend chips and now competes with GPT-5.2 and Claude Opus on major benchmarks.

Two Chinese open-weight trillion-parameter MoE models with ~32B active parameters each - DeepSeek V4 bets on cost and context, Kimi K2.5 bets on Agent Swarm and verified benchmarks.

DeepSeek will release V4, a natively multimodal trillion-parameter model with a 1M token context window, in the first week of March - optimized for Huawei Ascend chips, not Nvidia.