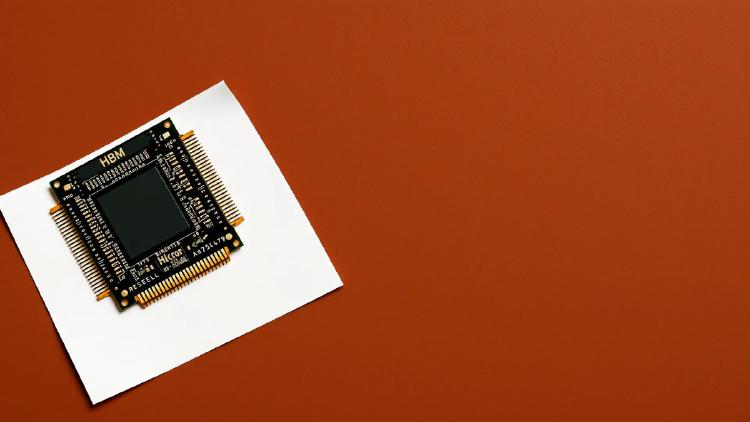

Meta MTIA 450: 18.4 TB/s Inference Accelerator

Meta's MTIA 450 doubles HBM bandwidth to 18.4 TB/s and adds FlashAttention hardware acceleration for GenAI inference in 2027.

Meta's second-gen ASIC delivers 6 PFLOPS FP8 and 288 GB HBM for GenAI and recommendation inference inside Meta's data centers.

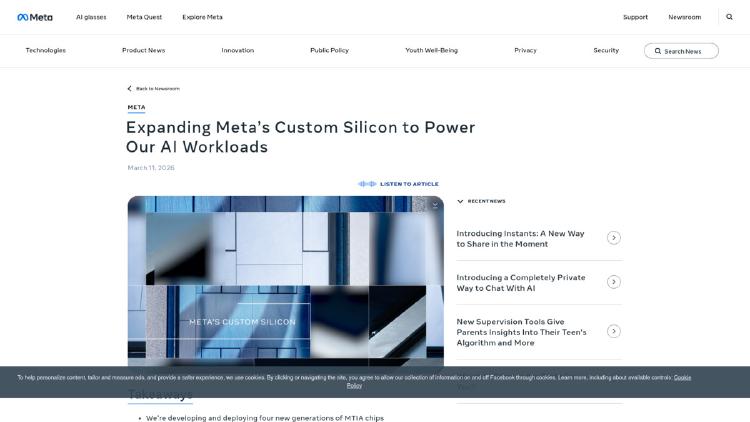

The Cerebras WSE-3 is the largest chip ever built - a TSMC 5nm wafer with 900,000 AI cores, 44GB SRAM, and 21 PB/s bandwidth. Now powering a $20B OpenAI deal and Amazon Bedrock deployments.

The Qualcomm AI250 applies near-memory computing to the same 768GB LPDDR5X design as the AI200, promising 10x higher effective memory bandwidth and lower power for LLM inference at rack scale.

The Rebellions RebelRack packs 32 Rebel100 chiplet NPUs with 4.5TB HBM3E and 153.6 TB/s aggregate bandwidth into a rack drawing just 5kW - roughly 4x the compute-per-watt of an H100 DGX.

TSMC posted $35.9B in Q1 2026 revenue, a 40.6% year-over-year jump, with AI and HPC now accounting for 61% of wafer sales - and CoWoS packaging still fully booked.

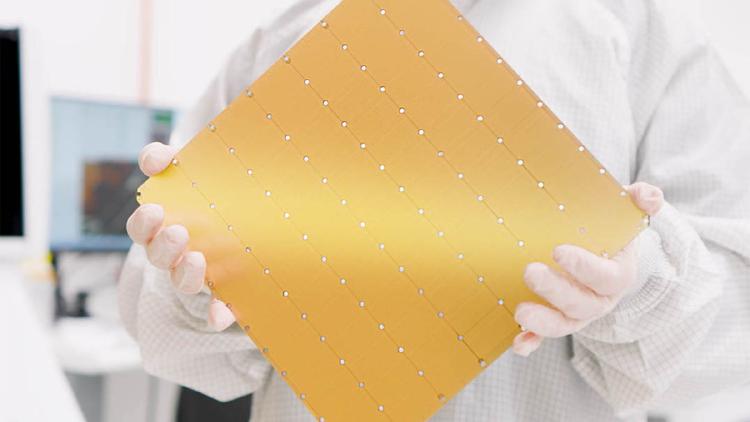

The NVIDIA Groq 3 LPU is a pure-SRAM inference chip delivering 150 TB/s memory bandwidth and 1.2 PFLOPS FP8 per chip, designed to pair with Vera Rubin GPUs for trillion-parameter model serving.

Cerebras Systems has kicked off a $2 billion IPO roadshow targeting a Nasdaq listing under ticker CBRS, anchored by a $10 billion compute contract with OpenAI.

Huawei Atlas 350 specs, benchmarks, and analysis. Ascend 950PR chip, 112GB HiBL 1.0 HBM, 1.56 PFLOPS FP4, 600W - China's first domestically developed FP4-capable AI accelerator.

Microsoft Maia 200 specs, benchmarks, and architecture analysis. TSMC 3nm, 216GB HBM3e, 10 PFLOPS FP4, 750W - Microsoft's first inference-only silicon deployed in Azure.

Percepta AI compiled a WebAssembly interpreter into transformer weights, executing programs deterministically at 33K tokens/sec on CPU - but the community is skeptical about the practical value.

Meta's first mass-deployed RISC-V AI accelerator - 1.2 PFLOPS FP8, 216 GB HBM, powering Facebook and Instagram at scale.