Alibaba Qwen3.6-Plus Launches With 1M Context Window

Alibaba officially launches Qwen3.6-Plus, a 1-million-token context model built for enterprise agentic coding and multimodal reasoning, now free on OpenRouter.

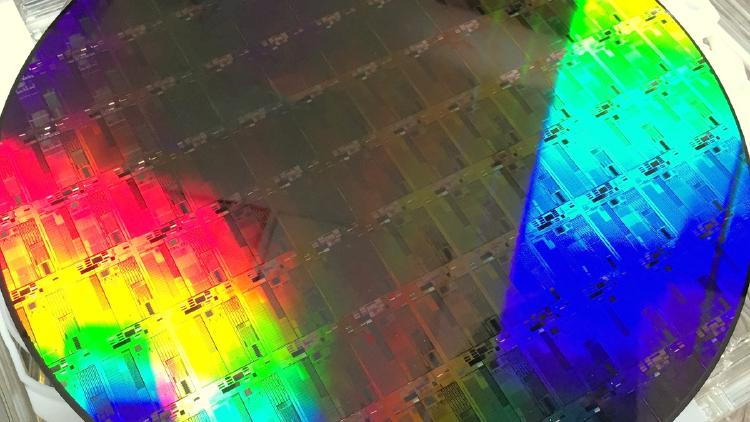

Alibaba's T-Head division launched the XuanTie C950, a 5nm 3.2GHz RISC-V server chip that sets a new world record for RISC-V single-core performance and natively runs billion-parameter models like DeepSeek V3 and Qwen3.

Alibaba's SWE-CI benchmark tested 18 AI models on 100 real codebases across 233 days of maintenance. Most agents accumulated technical debt and broke previously working code; only Claude Opus stayed above a 50% zero-regression rate.

Junyang Lin, the 32-year-old architect behind Alibaba's Qwen open-source AI models, announces his departure in a brief tweet, the fourth major exit from Tongyi Lab in two years.

Alibaba completes the Qwen 3.5 lineup with four small models (0.8B, 2B, 4B, and 9B), all natively multimodal with 262K context and released under Apache 2.0. The 9B outperforms last-gen Qwen3-30B and beats GPT-5-Nano on vision benchmarks.

Qwen3.5-0.8B is the smallest natively multimodal model in the Qwen 3.5 family, with 0.8B parameters handling text, images, and video at 262K context. It scores 62.2 on MathVista and 74.5 on OCRBench, and is released under Apache 2.0.