Fine-Tuning Costs Comparison - Train Your Own AI

May 2026: Together AI adds Llama 4 and DeepSeek fine-tuning, Fireworks raised deployment prices $1/hr, and H100 rentals fell to under $2.40/hr.

They summarize our coverage. We write it.

Newsletters like this one rebroadcast our headlines - often without the full review, the source reading, or the analysis underneath. Our weekly briefing sends the work they paraphrase, straight from the desk, before they get to it.

Free, weekly, no spam. One email every Tuesday. Unsubscribe anytime.

May 2026: Together AI adds Llama 4 and DeepSeek fine-tuning, Fireworks raised deployment prices $1/hr, and H100 rentals fell to under $2.40/hr.

Under oath in the Musk v. Altman trial, Musk said xAI 'partly' distilled OpenAI's models to train Grok - the same practice US labs have spent months calling theft when Chinese firms do it.

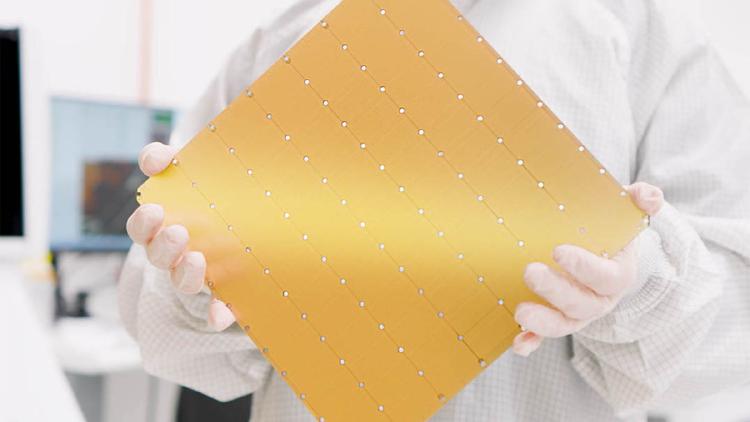

The Cerebras WSE-3 is the largest chip ever built - a TSMC 5nm wafer with 900,000 AI cores, 44GB SRAM, and 21 PB/s bandwidth. Now powering a $20B OpenAI deal and Amazon Bedrock deployments.

A hands-on comparison of the top AI-powered corporate training and L&D platforms in 2026, with verified pricing, feature breakdowns, and honest assessments.

Three new papers on agent prompt injection attack rates, MIT's broad-based AI automation finding, and a silent normalization-optimizer coupling failure in LLM training.

Fine-tuning trains a pre-built AI model on your own data so it learns your specific task, tone, or domain - here is how it works, what it costs, and when to use it.

Anthropic launched the Claude Certified Architect exam and invested $100M in its Partner Network - free for the first 5,000 partner employees, $99 after. Accenture is training 30,000 people.

Mistral's new Forge platform lets enterprises train frontier-grade AI models entirely on proprietary data, without sending any of it to a third party.

Nvidia commits a gigawatt of Vera Rubin chips to Mira Murati's startup, a supply the FT values at tens of billions of dollars, alongside an undisclosed cash investment.

A Hugging Face survey of 16 open-source reinforcement learning libraries finds the entire ecosystem has converged on async disaggregated training to fix a single brutal bottleneck: GPU idle time during long rollouts.

Andrew Ng says AGI is decades away and the real AI bubble risk is in the training layer - not inference. We examine both claims against the data.

Max Schwarzer, VP of Research and Head of Post-Training at OpenAI, leaves after a year leading the team that shipped GPT-5, 5.1, 5.2, and 5.3-Codex to return to RL research at Anthropic.