NVIDIA's Secret Chip Fuses GPU and Groq for OpenAI

NVIDIA will unveil a new inference processor built on Groq's LPU architecture at GTC 2026. OpenAI, its first major customer, is allocating 3 GW of dedicated capacity.

Huawei debuts its Atlas 950 SuperPoD at MWC Barcelona - 8,192 NPUs delivering 8 ExaFLOPS - marking its first overseas showcase of the AI supercomputer that directly targets Nvidia's cluster dominance.

DeepSeek will release V4, a natively multimodal trillion-parameter model with a 1M token context window, in the first week of March - optimized for Huawei Ascend chips, not Nvidia.

Huawei Ascend 910B specs, benchmarks, and real-world performance. 64GB HBM2e, ~1,200 GB/s bandwidth, ~600 TFLOPS FP16 - the chip that trained DeepSeek.

Huawei Ascend 910C specs, benchmarks, and performance analysis. 96GB HBM2e, ~1,800 GB/s bandwidth, ~800 TFLOPS FP16 - China's flagship AI chip under US sanctions.

DeepSeek has denied Nvidia and AMD pre-release access to its upcoming V4 model while granting Huawei and domestic Chinese chipmakers a multi-week optimization window, signaling a strategic pivot toward building a parallel AI software ecosystem on Chinese silicon.

Meta has agreed to rent Google's Ironwood TPUs through Google Cloud to train next-generation AI models, adding a third major chip supplier alongside Nvidia and AMD in a single month.

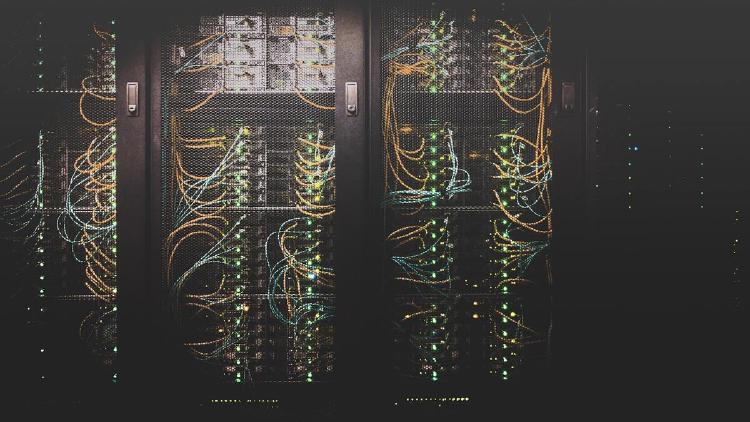

Three AI chip startups - MatX, SambaNova, and Axelera - raised a combined $1.1 billion in one week, signaling an acceleration in the race to break Nvidia's GPU dominance.

A senior Trump administration official confirms DeepSeek trained its upcoming AI model on Nvidia's most advanced Blackwell chips at an Inner Mongolia data center, despite US export controls banning the hardware from reaching China.

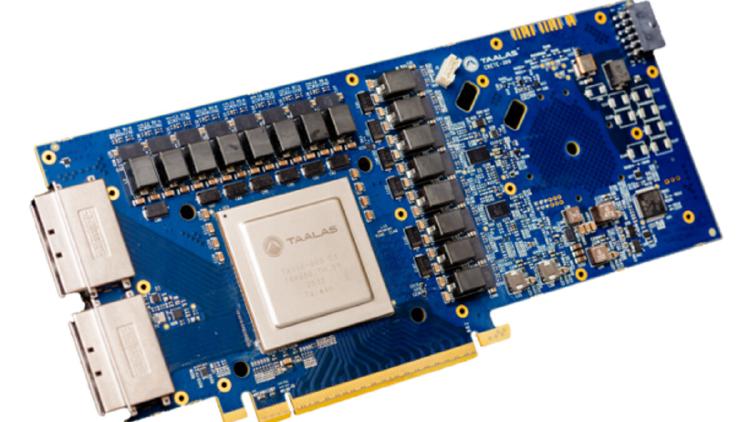

Toronto startup Taalas raises $169M to build custom chips that permanently etch AI model weights into transistors, claiming 73x faster inference than Nvidia's H200 at a fraction of the power.

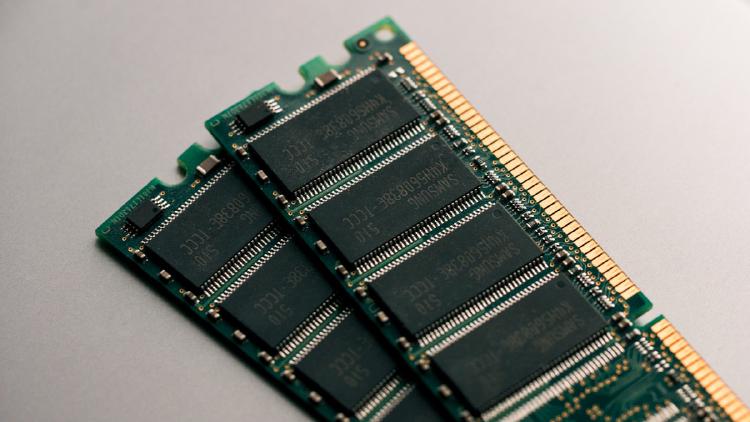

AI data centers now consume 70% of global memory production, triggering price surges, product delays, and warnings of manufacturer bankruptcies across consumer electronics.