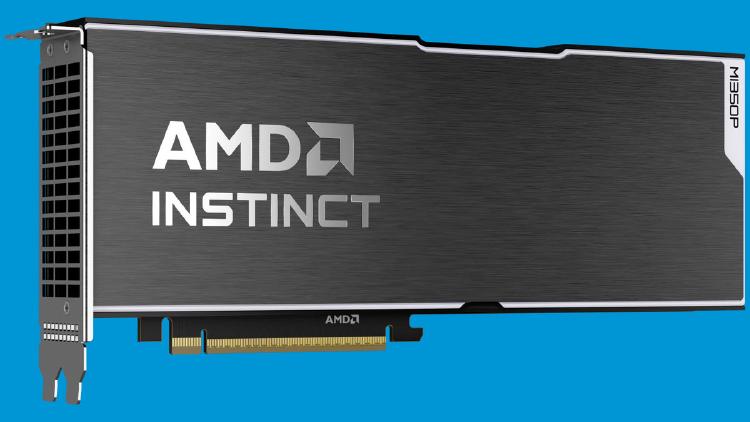

AMD Instinct MI350P - CDNA 4 PCIe Inference Card

AMD Instinct MI350P brings CDNA 4 to a standard PCIe slot: 144GB HBM3E, 4 TB/s bandwidth, 2.3 PFLOPS MXFP8, and 600W passive cooling for air-cooled servers.

NVIDIA RTX Spark is a 20-core ARM + Blackwell GPU superchip delivering 1 petaFLOP FP4 and 128GB unified memory for AI-first Windows laptops and desktops.

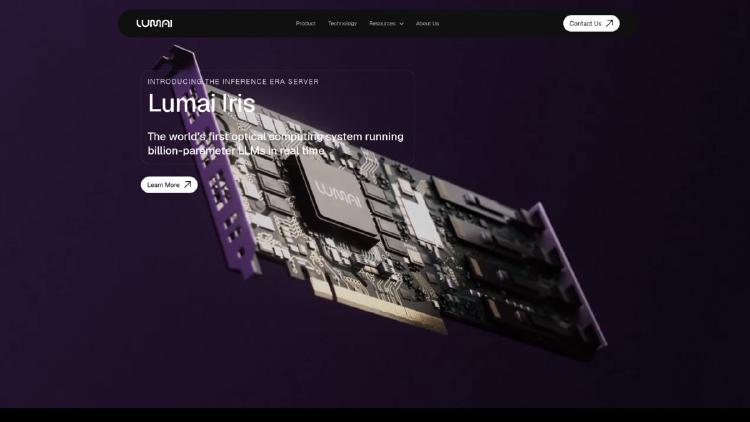

Lumai's optical AI inference server uses light-based computing to run billion-parameter LLMs with up to 90% less power than GPUs.

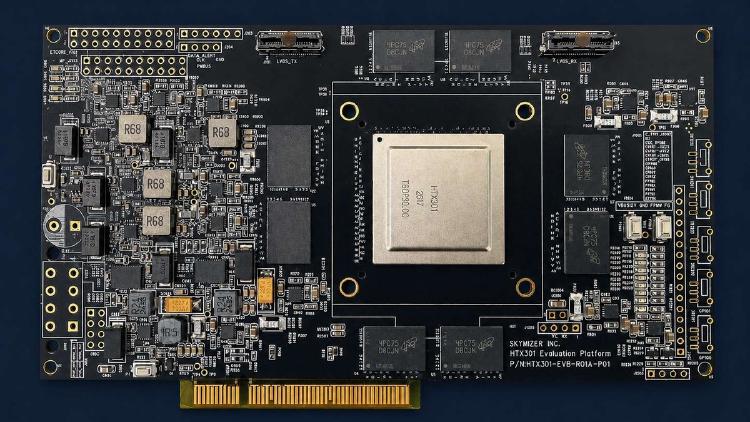

Skymizer's HTX301 uses six 28nm chips and 384 GB LPDDR5 to run 700B-parameter LLMs on a single PCIe card at just 240W.

Meta's MTIA 450 doubles HBM bandwidth to 18.4 TB/s and adds FlashAttention hardware acceleration for GenAI inference in 2027.

AMD Helios packs 72 Instinct MI455X GPUs and 31 TB HBM4 into a single rack delivering 2.9 FP4 ExaFLOPS for AI workloads.

Meta's second-gen ASIC delivers 6 PFLOPS FP8 and 288 GB HBM for GenAI and recommendation inference inside Meta's data centers.

Google's TPU 8i is a purpose-built inference chip with 10.1 FP4 PFLOPS, 288GB HBM3e at 8,601 GB/s, and a Boardfly topology that cuts collective latency 5x for agentic AI workloads.

Google's TPU 8t packs 12.6 FP4 PFLOPS and 216GB HBM3e per chip, scaling to 9,600-chip superpods with 121 ExaFLOPS and 2 petabytes of shared HBM for massive model training.

The Qualcomm AI250 applies near-memory computing to the same 768GB LPDDR5X design as the AI200, promising 10x higher effective memory bandwidth and lower power for LLM inference at rack scale.

The Rebellions RebelRack packs 32 Rebel100 chiplet NPUs with 4.5TB HBM3E and 153.6 TB/s aggregate bandwidth into a rack drawing just 5kW - roughly 4x the compute-per-watt of an H100 DGX.

The AMD Instinct MI430X is AMD's CDNA 5 HPC accelerator with 432GB HBM4, full FP64 support, and 19.6 TB/s bandwidth - designed for sovereign AI and scientific supercomputing alongside the MI455X AI GPU.