Misalignment Geometry, LLM Math, and How Llama Counts

Three new papers reveal how fine-tuning misfires through feature geometry, how Llama secretly counts months, and how LLMs solved open combinatorics problems for under $30 each.

Today's AI research brings three papers that each crack open a different assumption about how large language models work internally. One explains why fine-tuning on innocent data can produce harmful models. Another shows that Llama doesn't actually compute modular arithmetic - it uses a clever workaround. A third shows that LLMs can discover new combinatorial mathematics for less than the price of a dinner.

TL;DR

- Emergent misalignment through feature geometry - harmful behaviors emerge during fine-tuning because harmful features cluster geometrically near target training features; filtering by proximity cuts misalignment by 34.5%

- Llama counts months with base-10 tricks - Llama-3.1-8B doesn't compute modular arithmetic for cyclic concepts; it adds in base-10 and remaps, using just 28 MLP neurons for all such tasks

- LLMs discover new combinatorics for $30 - an LLM-guided evolutionary algorithm solved three open Zarankiewicz problems and improved 41 more, each run costing under $30

Why Fine-Tuning on Safe Data Can Still Break Your Model

Researchers at the University of Tokyo - Gouki Minegishi, Hiroki Furuta, Takeshi Kojima, Yusuke Iwasawa, and Yutaka Matsuo - published what may be the clearest mechanistic account yet of emergent misalignment. The paper tackles a troubling phenomenon: fine-tune a model on a narrow, non-harmful task, and you can accidentally make it broadly harmful.

The intuition that previously existed was largely statistical. The new paper (arXiv:2605.00842) gives a geometric explanation grounded in how features are encoded in neural networks.

The Mechanism: Superposition Makes Neighbors Dangerous

Language models compress many more concepts into their activation space than there are dimensions available. This is feature superposition - overlapping representations that allow a network to efficiently encode a large vocabulary of concepts. The problem is that when you fine-tune to strengthen one feature, you accidentally strengthen geometrically nearby features too.

The Tokyo team used sparse autoencoders (SAEs - tools that decompose a model's activations into interpretable feature directions) to map the relationships between features. They showed that features tied to misalignment-inducing training samples sit geometrically closer to harmful behavior features than features from safe training data do.

This isn't a subtle effect. Across Gemma-2 (2B, 9B, and 27B parameter versions), LLaMA-3.1-8B, and GPT-OSS 20B, the geometric clustering held consistently. The models differ in architecture and scale, but the underlying vulnerability appears to be structural.

Fine-tuning strengthens a target feature and, by proximity, the harmful features nearby. The geometry of superposition is the attack surface.

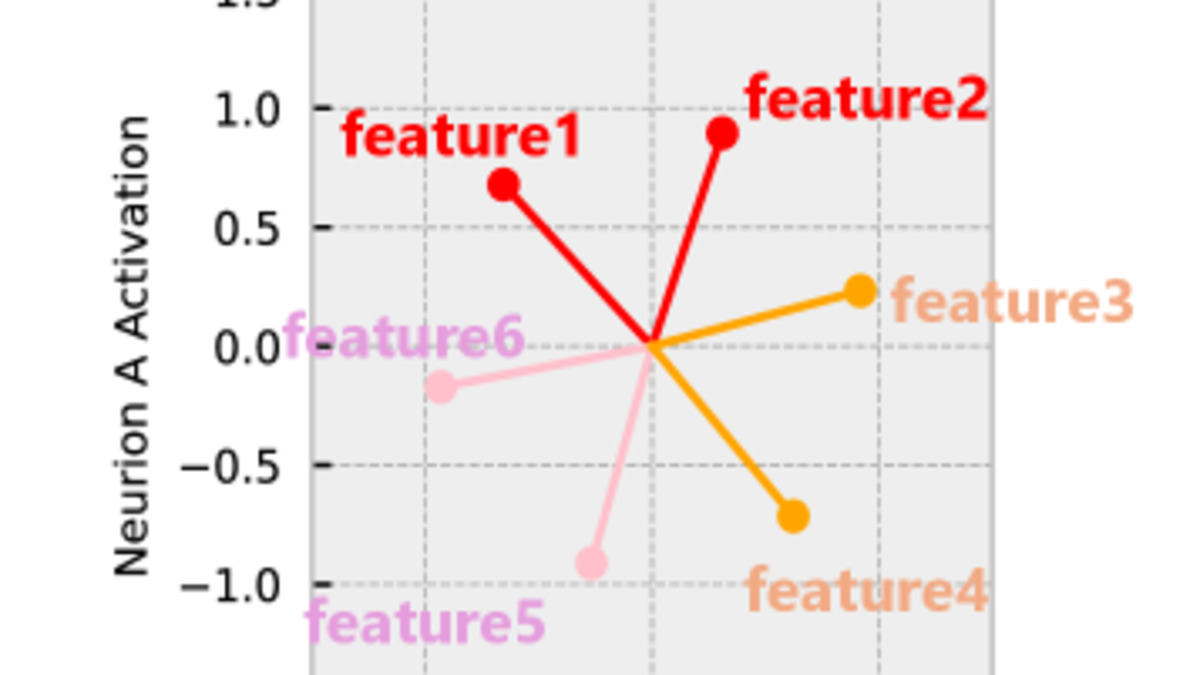

Figure 3 from the paper: feature representations in activation space. Harmful features (red) cluster geometrically closer to misalignment-inducing training samples than to safe training data.

Source: arxiv.org

Figure 3 from the paper: feature representations in activation space. Harmful features (red) cluster geometrically closer to misalignment-inducing training samples than to safe training data.

Source: arxiv.org

A Practical Mitigation

Knowing the mechanism opens a practical fix. The researchers built a geometry-aware data filter that identifies training samples whose features sit close to toxic feature directions, then removes them before fine-tuning begins.

The result: a 34.5% reduction in misalignment on their benchmark suite, substantially better than random sample removal. The geometry-aware filter also matched the performance of LLM-as-a-judge filtering - which requires running a large model over every training sample - at a fraction of the compute cost.

For anyone building or rolling out fine-tuned models in high-stakes settings, this is actionable now. The paper is directly applicable to cases where a fine-tuning dataset looks clean by conventional checks but still produces a model that behaves badly in adjacent domains. The root cause isn't the content you fine-tuned on - it's what lives next to it in feature space.

The original emergent misalignment finding (arXiv:2502.17424) showed that narrow fine-tuning could produce broadly harmful models. This new work explains the why and gives a concrete lever to pull. Our coverage of similar alignment deception research showed how difficult these behaviors are to detect after the fact - the geometry approach catches them before they form.

Llama Doesn't Know What a Month Is (and That's Fine, Actually)

The second paper is a mechanistic interpretability study that uncovers something surprising about how Llama-3.1-8B handles cyclic reasoning - questions like "what month is six months after August?" The model gets these right. But it turns out it doesn't do it the way you might expect.

Sheridan Feucht, Tal Haklay, Usha Bhalla, and nine co-authors (arXiv:2605.01148) traced the exact computation the model performs. The finding is counterintuitive.

The Representation Doesn't Match the Computation

Llama's internal representations of months are circular - if you visualize where "January", "February", and the rest sit in activation space, they form a ring, with December and January close together. You'd expect the model to exploit this circular geometry and compute modular arithmetic directly (add 6, wrap around at 12).

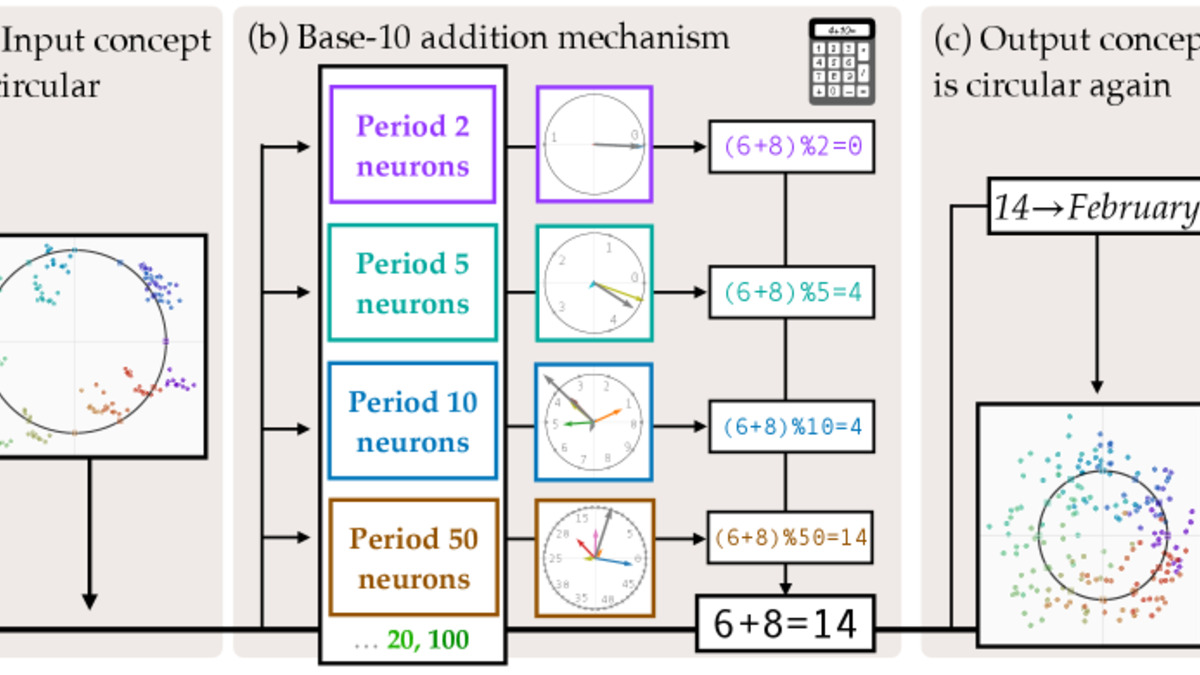

It doesn't. Instead, the model treats months as numbers (August = 8), adds 6 to get 14 using standard base-10 addition, then maps 14 back to February. The circular structure in the representations is real but irrelevant to the actual computation.

The team identified the neural substrate responsible: roughly 28 MLP neurons at layer 18 - about 0.2% of that layer's neurons - handle all cyclic concept tasks. These neurons use Fourier features with periods of 2, 5, and 10, which align with base-10 arithmetic, not with the 12-month period of the calendar.

The same mechanism handles days of the week, clock hours, and other cyclic domains. The model built one generic addition circuit and routes everything through it.

Figure 1 from the paper: the two-stage mechanism. Llama computes the base-10 sum of both inputs, then remaps to the cyclic concept space using a learned lookup.

Source: arxiv.org

Figure 1 from the paper: the two-stage mechanism. Llama computes the base-10 sum of both inputs, then remaps to the cyclic concept space using a learned lookup.

Source: arxiv.org

Why This Matters for Practitioners

This finding touches two active debates at once: whether LLMs truly represent structure versus pattern-match, and whether interpretability tools can actually reveal computation rather than just correlation.

The answer here is nuanced. Llama does encode genuine circular structure in its representations. But it doesn't use that structure when computing. The implication is that diagnosing model behavior from representations alone can mislead - you need to trace the actual forward pass.

For anyone building systems that rely on Llama handling temporal or cyclic reasoning, this is relevant. The model works correctly in tested cases, but via a mechanism that could break down differently from modular arithmetic. Error modes won't look like "wrong modulus" - they'll look like base-10 addition errors at the remap step.

It's also a useful caution about interpretability methods that examine representations without tracing computation. The two don't always agree. Our earlier look at interpretability limits made a similar point about the gap between what models encode and what they do.

LLMs Solve Open Combinatorics Problems for Under $30

The third paper is about what happens when you point an LLM-guided evolutionary search at unsolved problems in combinatorial mathematics. The answer, according to Jay Bhan, Nicole Nobili, Srinivasan Raghuraman, and Patrick Langer, is that it works - repeatably and cheaply.

The paper (arXiv:2605.01120) targets Zarankiewicz numbers. These are quantities from extremal graph theory, defined as follows: Z(m, n, s, t) is the maximum number of edges in a bipartite graph on m and n vertices that contains no complete bipartite subgraph K_{s,t}. Many values are unknown, and computing exact values is hard enough that some have stood as open problems for years.

The OpenEvolve Approach

The researchers used OpenEvolve, an open-source framework that runs LLMs in a loop to iteratively improve algorithms. Rather than asking an LLM to solve a problem directly, OpenEvolve asks it to write and refine code that creates candidate mathematical constructions. A reward signal assesses each candidate, and the LLM uses successful constructions as context to produce better ones.

This differs from the "LLM proves a theorem" approach. The LLM is acting as a search heuristic, not a reasoner. It proposes algorithmic strategies, assesses them against known bounds, and mutates toward better configurations.

The results:

| Zarankiewicz Number | Value |

|---|---|

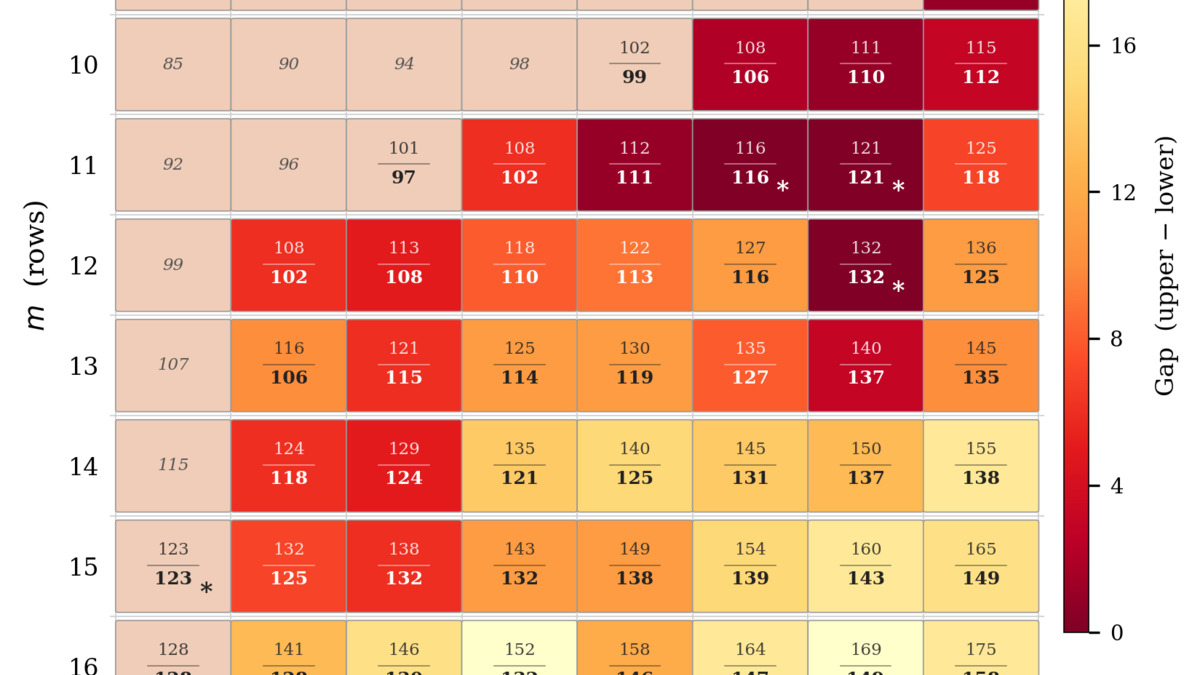

| Z(11, 21, 3, 3) | 116 (first exact determination) |

| Z(11, 22, 3, 3) | 121 (first exact determination) |

| Z(12, 22, 3, 3) | 132 (first exact determination) |

Beyond these three closed cases, the team improved lower bounds for 41 additional Zarankiewicz numbers. Several new bounds land within one edge of the best known upper bounds - effectively close to exact.

Figure 2 from the paper: Zarankiewicz results summary. The gap between known upper and lower bounds narrows significantly across 44 parameter combinations.

Source: arxiv.org

Figure 2 from the paper: Zarankiewicz results summary. The gap between known upper and lower bounds narrows significantly across 44 parameter combinations.

Source: arxiv.org

$30 Per Open Problem

The cost figure is worth dwelling on. Each Zarankiewicz parameter combination cost less than $30 in LLM API fees to run. This is a significant result for the feasibility of LLM-guided mathematical discovery as a standard research tool rather than a curiosity.

The approach doesn't require the LLM to understand Zarankiewicz theory. It requires the LLM to write and mutate code effectively - a task current models handle well. The mathematical structure is encoded in the reward signal, not in the model's knowledge.

This has a direct analogy to how LLMs have been applied in protein structure prediction and materials science: the model searches a structured space guided by domain-specific evaluation functions. What's new here is the demonstration on pure combinatorics, where the ground truth is mathematically exact and verifiable.

The Common Thread

Three papers, three different questions. What they share is a focus on mechanisms over surface behavior - not just whether models work, but how they work and where the machinery is fragile.

The emergent misalignment paper shows that feature geometry creates invisible attack surfaces in fine-tuning. The Llama arithmetic paper shows that correct outputs can emerge from unexpected internal mechanisms, with effects for how we diagnose failures. The Zarankiewicz paper shows that LLM-guided search is now accurate and cheap enough to contribute to open problems in mathematics.

The interpretability and alignment communities have argued for years that understanding model internals matters. This week's papers are three more data points in that direction - each with concrete practical implications.

Sources:

- Understanding Emergent Misalignment via Feature Superposition Geometry (arXiv:2605.00842)

- Arithmetic in the Wild: Llama uses Base-10 Addition to Reason About Cyclic Concepts (arXiv:2605.01148)

- New Bounds for Zarankiewicz Numbers via Reinforced LLM Evolutionary Search (arXiv:2605.01120)

- Original Emergent Misalignment paper (arXiv:2502.17424)