AI Memory Math, Label-Free RL, and the Productivity Ceiling

New proofs show semantic memory must forget, SARL trains reasoning models without labels, and the Novelty Bottleneck explains why AI won't eliminate human work.

Three papers this week cut through the noise in areas practitioners deal with daily: why retrieval systems keep failing you, how to train reasoning models when you don't have labeled answers, and whether AI will ever eliminate the need for human judgment. Each offers something more useful than optimism - actual math.

TL;DR

- Semantic memory must forget - mathematical proof shows interference and false recall are unavoidable across all retrieval architectures, vector or graph

- SARL trains reasoning models without ground-truth labels by rewarding the structure of thinking, beating labeled RL by up to 34.6% on open-ended tasks

- The novelty fraction is the key parameter: it determines whether AI makes your work 10x faster or just marginally so, and no amount of model improvement changes the exponent

Every Semantic Memory Forgets - Mathematically

The paper: The Price of Meaning: Why Every Semantic Memory System Forgets, by Sambartha Ray Barman, Andrey Starenky, Sofia Bodnar, Nikhil Narasimhan, and Ashwin Gopinath.

If you've built a RAG pipeline and watched it retrieve stale or wrong memories, this paper explains why that's not a configuration problem.

The team proves that any memory system organized by meaning will suffer interference, forgetting, and false recall. The argument turns on a property they call finite local intrinsic dimension: when you organize information semantically - using embeddings, attention, or graph edges - similar items cluster together. That's the whole point. But those clusters always have neighbors that don't belong, and as your memory grows, those imposters multiply.

The geometric structure that makes semantic retrieval useful also makes interference unavoidable - similar vectors compete for the same retrieval slots.

Source: commons.wikimedia.org

The geometric structure that makes semantic retrieval useful also makes interference unavoidable - similar vectors compete for the same retrieval slots.

Source: commons.wikimedia.org

They derive four results from this setup. Semantically useful representations have finite effective rank - you can't pack meaning in ways that avoid neighborhood overlap. Finite local dimension guarantees some wrong memories always score high. Under growing memory, retention decays to zero following power-law forgetting curves. And false recall from similar-but-wrong items can't be removed by tuning retrieval thresholds.

The team tested across five architectures: vector retrieval, graph memory, attention-based context, BM25 filesystem retrieval, and parametric memory stored in weights. Pure semantic systems showed exactly the predicted patterns - forgetting and false recall scaled with memory size. Systems with reasoning layers on top partially suppressed the symptoms, but converted graceful degradation into catastrophic failure under load.

The only escape is giving up semantic generalization completely. Exact-key lookups and hash tables don't forget - but they also can't find the document that "talks about the same thing in different words." That tradeoff is structural, not a bug awaiting a fix.

For practitioners: this isn't a reason to abandon RAG. It's a reason to design around expected degradation from the start - periodic reindexing, memory pruning, and hybrid retrieval strategies that mix semantic and lexical signals. Stop treating forgetting as a failure mode you'll eventually engineer away.

Teaching Reasoning Without Knowing the Answer

The paper: SARL: Label-Free Reinforcement Learning by Rewarding Reasoning Topology, by Yifan Wang, Bolian Li, David Cho, Ruqi Zhang, Fanping Sui, and Ananth Grama.

Reinforcement learning for reasoning models normally needs one thing: a way to check whether the answer is correct. For math, that's easy - you run the verifier. For open-ended questions about strategy, ethics, or science, there's no automatic checker. That has left a large chunk of the problem space unreachable by standard RL.

SARL sidesteps the answer completely and rewards how the model thinks.

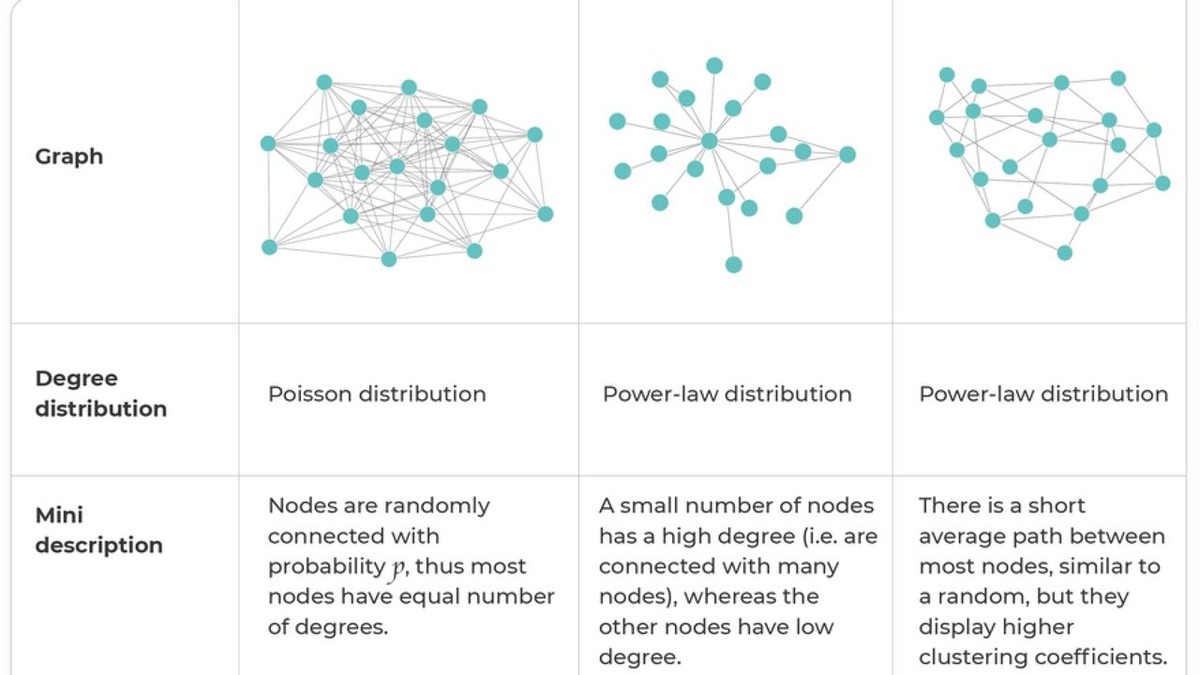

The insight draws from network science. Human reasoning, and functional brain organization, tends to form small-world graphs: clusters of locally related ideas connected by a few long-range bridges. These structures are both locally coherent (reasoning hangs together step by step) and globally efficient (you reach conclusions without excessive backtracking). SARL builds a Reasoning Map from a model's intermediate thinking steps and rewards topologies that match these properties.

SARL rewards reasoning chains that exhibit small-world topology - the same pattern seen in efficient biological and social networks.

Source: commons.wikimedia.org

SARL rewards reasoning chains that exhibit small-world topology - the same pattern seen in efficient biological and social networks.

Source: commons.wikimedia.org

Tested on Qwen3-4B, SARL beats both ground-truth-based RL and prior label-free baselines:

| Task type | PPO gain | GRPO gain |

|---|---|---|

| Math (verifiable) | +9.1% | +11.6% |

| Open-ended tasks | +34.6% | +30.4% |

The open-ended gains are larger than the math gains. That matters because current RL pipelines already have strong tooling for verifiable tasks. The gap SARL closes is on harder terrain where answer verification doesn't exist - which is most of the work that businesses actually care about.

Two properties stand out from training diagnostics. SARL models show lower KL divergence from their base policy, meaning training stays more stable. Policy entropy stays higher, indicating the model continues exploring during reasoning rather than collapsing to repeated patterns. Stable and exploratory together is what you want in a reasoning system built for open-ended use.

What Remains Open

The limitation is honest: Qwen3-4B is one data point. Whether the small-world reward generalizes as cleanly to larger models or different reasoning formats isn't established. The authors frame this as an explicit open question, which is the right call.

Why AI Can't Make Everything Effortless

The paper: The Novelty Bottleneck: A Framework for Understanding Human Effort Scaling in AI-Assisted Work, by Jacky Liang of Google DeepMind.

There's a version of AI optimism that implies human effort per task will approach zero as models improve. Liang's paper gives that claim a precise mathematical form - and shows it fails.

The argument borrows from Amdahl's Law in parallel computing. In that context, the speedup you get from parallelizing a program is bounded by the fraction that must remain serial. Even perfect parallelization of everything else can't push speedup past that ceiling. Liang applies the same logic to tasks. Every task decomposes into atomic decisions, and some fraction nu of those decisions are "novel" - not covered by the agent's prior, requiring human judgment. That fraction is the serial component, and it doesn't shrink just because the agent gets better.

The consequences derive directly from this framing:

- Human effort doesn't smoothly decline as AI improves. The transition from O(E) to O(1) is sharp, with no intermediate sublinear regime.

- Better agents reduce the coefficient on human effort but not the exponent. Work that scales linearly in task size stays linear.

- Best team size shifts as agents improve - fewer humans needed - but the relationship between effort and task size doesn't change shape.

- Wall-clock time scales as O(sqrt(E)) via team parallelism. Total human effort stays O(E).

The asymmetry in the last point carries real weight for AI safety discussions. Agents face no novelty bottleneck when exploiting existing knowledge - writing variations on known content, finding known patterns in new data, applying established procedures. Genuine frontier work, where humans are still figuring out what the rules are, stays expensive by construction.

The novelty fraction is what matters most, and it's specific to each task domain. Low-novelty domains already see near-O(1) human effort per task with good AI tooling. High-novelty domains - fundamental research, novel legal interpretations, engineering problems without established precedent - will remain human-intensive regardless of model improvements. Knowing where your domain sits on that spectrum is now a concrete planning question, not a philosophical one.

The Common Thread

None of these papers announces a product or scores a new benchmark. All three identify structural constraints on what AI systems can do - the kind of limits that don't go away with more compute.

Semantic memory can't avoid forgetting without giving up generalization. RL doesn't need labeled answers if you reward the shape of thinking instead. And AI can't remove human effort in domains where novelty is high, because novelty creates irreducible serial work by definition. These aren't pessimistic findings. They're the walls of the room. You build differently once you know where they are.

Sources:

- The Price of Meaning: Why Every Semantic Memory System Forgets - arXiv:2603.27116

- SARL: Label-Free Reinforcement Learning by Rewarding Reasoning Topology - arXiv:2603.27977

- The Novelty Bottleneck: A Framework for Understanding Human Effort Scaling in AI-Assisted Work - arXiv:2603.27438