Interpretability Limits, Dark Models, Persona Traps

Three new papers expose a gap between what AI models know and what they do - and why that gap is harder to close than anyone assumed.

Three papers landed this week that share an uncomfortable common thread: AI systems consistently behave in ways that don't match what they represent internally, and the tools we use to fix that keep falling short. Mechanistic interpretability probes that achieve near-perfect internal accuracy can't translate that accuracy to corrected outputs. Controlled "dark models" teach us more about harmful AI interactions than real-world data ever could. And expert personas - a popular technique for improving model alignment - turn out to quietly sabotage factual accuracy at the same time.

TL;DR

- Interpretability without actionability - Linear probes separate hazardous from benign cases inside a clinical AI model at 98.2% AUROC, but steering that model corrects fewer than 1 in 4 missed diagnoses while breaking correct ones

- Multi-Trait Subspace Steering - Researchers built "dark models" that reliably produce harmful interactions, enabling systematic safety testing that organic data can't provide

- PRISM persona routing - Expert personas boost alignment scores but cut factual accuracy; PRISM selectively applies them via gated LoRA adapters to get both

The Knowing-Doing Gap in Clinical AI

Paper: "Interpretability without Actionability: Mechanistic Methods Cannot Correct Language Model Errors Despite Near-Perfect Internal Representations" | Sanjay Basu, Sadiq Y. Patel, Parth Sheth, and collaborators | arXiv:2603.18353

The premise of mechanistic interpretability is intuitive: if we can identify where a model stores a concept, we can steer it toward the right answer. This paper tests that premise directly, in a setting where getting the answer wrong has real costs - clinical triage for hazardous versus benign cases.

The team built linear probes over a language model's internal activations and assessed them against 400 physician-reviewed clinical cases (144 hazardous, 256 benign). The probes achieved 98.2% AUROC - near-perfect discrimination. The model's own output sensitivity was just 45.1%, meaning it missed more than half the hazardous cases.
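The two numbers above measure different things: AUROC scores how well the probe ranks hazardous cases above benign ones, while sensitivity counts how many hazardous cases the model's actual output flags. The sketch below illustrates that difference on made-up toy data (the scores and outputs are invented for illustration, not taken from the paper), then works out what 45.1% sensitivity means for the 144 hazardous cases.

```python
def auroc(scores, labels):
    """Chance that a random positive outscores a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def sensitivity(predictions, labels):
    """Fraction of true positives that the binary predictions actually flag."""
    true_pos = sum(1 for p, y in zip(predictions, labels) if y == 1 and p == 1)
    return true_pos / sum(labels)


# Toy data (invented): 1 = hazardous, 0 = benign.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
probe_scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]  # probe ranks every hazardous case higher
model_outputs = [1, 1, 0, 0, 0, 0, 0, 0]                 # model's output flags only half of them

probe_auroc = auroc(probe_scores, labels)
output_sensitivity = sensitivity(model_outputs, labels)

# Figures reported in the article: 144 hazardous cases, 45.1% output sensitivity.
hazardous_cases = 144
flagged = round(hazardous_cases * 0.451)
missed = hazardous_cases - flagged

print(probe_auroc, output_sensitivity, flagged, missed)
```

On the reported figures, that works out to roughly 65 hazardous cases flagged and 79 missed, even though the internal representation separates the two classes almost perfectly.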

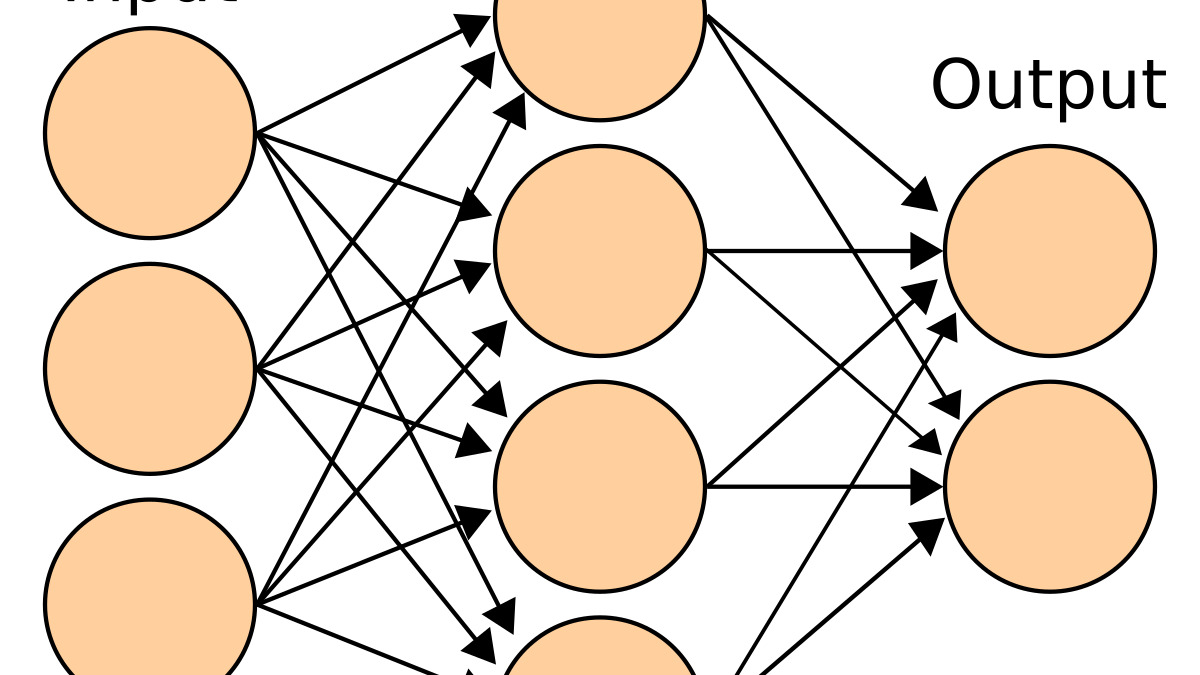

Mechanistic interpretability methods probe the internal representations of neural networks - but probing and steering are different problems.

Source: wikimedia.org


Then came the hard part: using the probes to fix the model's outputs. The researchers tested four intervention methods:

- Concept bottleneck steering corrected 20% of missed hazardous cases - but disrupted 53% of the correctly handled ones. A net negative.

- Sparse autoencoder steering produced basically no effect.

- Logit lens with activation patching similarly failed to move the needle.

- Truthfulness separator vector steering corrected 24% of errors, leaving 76% intact.
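A back-of-the-envelope calculation shows why the concept bottleneck result is a net loss. The sketch below assumes the 53% disruption rate applies to the hazardous cases the model was already catching; the article does not say exactly which pool of correct cases was measured, so treat this as an illustration rather than the paper's own accounting. The function name and parameters are hypothetical.

```python
def net_effect(n_hazardous, baseline_sensitivity, fix_rate, break_rate):
    """Estimate sensitivity after a steering intervention.

    Assumption: break_rate applies to hazardous cases the model already caught.
    """
    caught = n_hazardous * baseline_sensitivity
    missed = n_hazardous - caught
    fixed = fix_rate * missed      # missed cases the intervention corrects
    broken = break_rate * caught   # previously correct cases it disrupts
    new_sensitivity = (caught - broken + fixed) / n_hazardous
    return fixed, broken, new_sensitivity


# Figures from the article: 144 hazardous cases, 45.1% baseline sensitivity,
# 20% of misses corrected, 53% of correct cases disrupted.
fixed, broken, new_sensitivity = net_effect(144, 0.451, 0.20, 0.53)

print(f"corrected ~{fixed:.0f}, disrupted ~{broken:.0f}, "
      f"sensitivity {0.451:.1%} -> {new_sensitivity:.1%}")
```

Under that assumption the intervention breaks about twice as many cases as it fixes, so sensitivity ends up lower than the unsteered baseline.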

The gap between what the model represents internally and what it produces in output is real, well-documented, and - at least with current methods - stubbornly resistant to intervention.

The model knew the right answer. It just wouldn't say it - and nothing researchers tried could make it.

For practitioners, this is a direct challenge to a growing assumption in AI safety work. Guide Labs' Steerling-8B is built on the premise that interpretable internal representations can produce more controllable outputs. This paper suggests the chain from "interpretable" to "steerable" to "correctable" is nowhere near as reliable as hoped - at least in high-stakes, narrow domains.

The clinical setting is worth emphasizing. These aren't abstract benchmarks. These are triage decisions. A steering method that disrupts 53% of correct answers to fix 20% of wrong ones isn't a safety tool - it's a liability.

Engineering Harmful AI to Understand It

Paper: "Multi-Trait Subspace Steering to Reveal the Dark Side of Human-AI Interaction" | Xin Wei Chia, Swee Liang Wong, Jonathan Pan | arXiv:2603.18085

Studying harmful human-AI interactions has a methodological problem: the worst outcomes develop over sustained conversations that are hard to reproduce in controlled conditions. You can't ethically ask participants to have distressing exchanges with chatbots on demand. Real-world incidents are documented after the fact, with incomplete logs and no systematic variation.

Chia, Wong, and Pan take a different approach. They built a framework called MultiTraitsss (Multi-Trait Subspace Steering) that constructs what they call "dark models" - language models deliberately steered to exhibit cumulative harmful behavioral patterns through a combination of crisis-associated personality traits and subspace steering.

The framework uses established crisis-associated traits as a guide and applies a novel subspace steering method to build models that consistently generate harmful outputs across both single-turn and multi-turn evaluations.

The point isn't to deploy harmful models. It's to study the mechanisms behind harmful outputs in a way that organic interaction data never allows: methodically, at scale, with controlled variation. By knowing exactly which traits and steering vectors produce which harm patterns, the team managed to work backward to propose protective countermeasures that reduce harmful outcomes in human-AI interaction.

This is directly relevant to regulatory discussions underway. Oregon's SB 1546 - the first chatbot safety bill of 2026 - requires chatbot operators to implement suicide safeguards and disclose AI nature to minors, but leaves the specific mechanism largely unspecified. Research like this provides exactly the kind of mechanistic understanding regulators and developers need to build interventions that work, rather than surface-level filters that bad actors who understand them - or poorly-tuned models - can route around.

What subspace steering means here

Subspace steering manipulates a model by adding a learned vector to its internal activations during inference. Unlike fine-tuning, it doesn't change weights permanently - it shifts the model's behavior for a given session. MultiTraitsss applies this across multiple trait dimensions simultaneously, building up patterns of harmful behavior that no single trait vector would produce alone. The accumulation is the point: harmful interactions rarely stem from one property in isolation.
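The core operation described here can be sketched in a few lines. This is a minimal additive version under stated assumptions, not the MultiTraitsss implementation: the function name, the toy trait vectors, and the per-trait strengths are all hypothetical, and the paper's method may construct and combine its subspaces differently.

```python
def steer(hidden, trait_vectors, strengths):
    """Return hidden + sum_i strengths[i] * trait_vectors[i].

    hidden:        activation vector at one layer (list of floats)
    trait_vectors: one steering direction per trait (hypothetical values)
    strengths:     scalar weight per trait
    The model's weights are untouched; only this activation is shifted.
    """
    steered = list(hidden)  # copy so the original activation is not mutated
    for vector, alpha in zip(trait_vectors, strengths):
        for i, component in enumerate(vector):
            steered[i] += alpha * component
    return steered


# Toy 3-dimensional activation and two made-up trait directions.
hidden = [1.0, 0.0, -1.0]
trait_vectors = [
    [1.0, 0.0, 0.0],  # hypothetical trait A
    [0.0, 1.0, 0.0],  # hypothetical trait B
]
steered = steer(hidden, trait_vectors, strengths=[0.5, 2.0])
print(steered)  # [1.5, 2.0, -1.0]
```

Because the shift is applied at inference time, dropping the added vectors restores the original behavior, which is what distinguishes this from fine-tuning.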

The Persona Trade-off Nobody Warned You About

Paper: "Expert Personas Improve LLM Alignment but Damage Accuracy: Bootstrapping Intent-Based Persona Routing with PRISM" | Zizhao Hu, Mohammad Rostami, Jesse Thomason | arXiv:2603.18507

Persona prompting - telling a model to act as a domain expert - is everywhere. System prompts across the industry routinely include something like "You are a senior financial analyst with 20 years of experience." This paper is the first to systematically measure the trade-off that comes with that technique.

The finding: expert personas improve human preference and safety alignment on generative tasks - things like open-ended advice, tone, and explanation quality. They make the model feel more authoritative and careful. But on discriminative tasks - yes/no questions, factual lookups, classification - accuracy drops. The model is so focused on sounding like an expert that it gets facts wrong.

This isn't a subtle effect that shows up only in edge cases. It's consistent across model types, with instruction-tuned and reasoning models both affected, and it holds across varying prompt lengths and positions.

Hu, Rostami, and Thomason built PRISM (Persona Routing via Intent-based Self-Modeling) to address this. PRISM uses a bootstrapping process to train a gated LoRA adapter that applies persona behavior only when the incoming task is the kind the persona helps with - and withholds it when it doesn't. The system requires no external data, no additional models, and no manual labeling: it builds its intent classifier from the model's own behavior.
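To make the gating idea concrete, here is a minimal sketch of a gated low-rank adapter on a single linear layer. It is an illustration under assumptions, not PRISM's code: the standard LoRA update adds a low-rank term `B(Ax)` to the frozen layer's output, and a scalar `gate` (here passed in directly, standing in for whatever the learned intent classifier produces) decides how much of that term is applied. All names and matrices are hypothetical.

```python
def matvec(matrix, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in matrix]


def gated_lora_forward(W, A, B, x, gate):
    """Compute W x + gate * B (A x).

    W:    frozen base weight matrix
    A, B: low-rank adapter factors (persona behavior lives here)
    gate: value in [0, 1]; 0 leaves the base model's output untouched
    """
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + gate * d for b, d in zip(base, delta)]


# Toy 2-dimensional layer with a rank-1 adapter (values are made up).
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]        # shape 1 x 2
B = [[1.0], [0.0]]      # shape 2 x 1
x = [2.0, 3.0]

factual_query = gated_lora_forward(W, A, B, x, gate=0.0)  # persona withheld
advice_query = gated_lora_forward(W, A, B, x, gate=1.0)   # persona applied
print(factual_query, advice_query)  # [2.0, 3.0] [7.0, 3.0]
```

With the gate closed, the layer's output is exactly what the base model would produce, which is how this kind of design can keep the persona away from discriminative queries at little extra cost.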

The result is what the authors call "enhanced human preference and safety alignment on generative tasks while maintaining accuracy on discriminative tasks across all models, with minimal memory and computing overhead."

| Task type | Baseline | Persona prompt | PRISM |
|---|---|---|---|
| Generative (alignment) | Standard | Improved | Improved |
| Discriminative (factual) | Standard | Degraded | Maintained |

For anyone building RAG pipelines, customer-facing agents, or any system that uses a fixed system prompt persona across query types, this is a practical warning. The performance hit is real. PRISM's approach - learning when to apply the persona - is probably the right architecture, and it's encouraging that it requires no external annotation budget.

This is consistent with prior work on alignment mechanisms that backfire in ways their designers didn't expect. The persona finding fits a broader pattern: techniques that improve one safety or preference metric often degrade another, and that trade-off is almost never visible in single-metric evaluations.

A Common Thread

All three papers are pointing at the same structural problem from different directions: AI systems are routinely assessed on the dimension they were optimized for, and the cost is paid on a dimension that wasn't measured. Probing optimizes internal representation fidelity; output correction doesn't follow. Persona prompting tunes preference alignment; factual accuracy suffers. Safety filtering improves surface-level outputs; subspace-level harmful patterns remain accessible.

The MultiTraitsss work is an exception in one sense - it's about deliberately creating the problem to study it. But the subspace steering it uses to build dark models is the same class of technique that interpretability researchers use when they try to correct a model's behavior. The fact that it's more effective at introducing harmful behavior than at removing it should give the safety research community pause.
