Agent Memory in 2026: Circuits, Tiers, Evolution

Three new papers reveal how agent memory silently breaks, how a tiered architecture recovers it, and how models can self-improve without human labels.

Three papers landed this week that, taken together, cover the full arc of agent memory: where it breaks inside the model, how to engineer systems that don't degrade over days of operation, and how to train evaluation capacity into a model without human labels. If you're shipping agents that run beyond a few turns, all three matter.

TL;DR

- What Happens Inside Agent Memory - Qwen-3 models under 4B silently fail at memory content retrieval despite producing fluent output; the gap between detectable and steerable memory circuits doesn't close until 8B

- MEMTIER - A tiered memory system for the OpenClaw runtime lifts agent accuracy from 5% to 38% on LongMemEval-S across 72-hour operation windows, running on a 6GB consumer GPU

- EvoLM - A Qwen3-8B model trained with co-evolved rubrics beats GPT-4.1 on RewardBench-2 by 25.7% using no human annotations and no external reward models

Where agent memory silently breaks

Paper: "What Happens Inside Agent Memory? Circuit Analysis from Emergence to Diagnosis" Authors: Xutao Mao, Jinman Zhao, Gerald Penn, Cong Wang arXiv: 2605.03354

Memory failures in agents are quiet. The model retrieves the wrong context, or stores nothing at all, and the output is still fluent, still confident, and still wrong. There's no traceback, no flag - just incorrect behavior wrapped in grammatical calm.

Mao et al. trace feature circuits across Qwen-3 models ranging from 0.6B to 14B, using two widely-deployed agent memory frameworks: mem0 and A-MEM. Their goal is precise: map which internal components handle which memory operations, and at what scale those components start working reliably.

The 4B cliff

The results are uncomfortable for anyone running small models in production agentic systems. Routing circuits - the components that decide what type of memory operation to execute - activate as early as 0.6B. That makes the model look capable. A sub-4B agent will correctly classify that it needs to write something to memory, or retrieve something. The routing is fine.

Content processing isn't. The circuits responsible for actually reading or writing the right information don't become detectable until 4B. Below that threshold, a model can route memory operations perfectly while silently failing at the content work. The output doesn't reveal this.

There's a second gap above 4B. The paper separates detection from intervention: being able to observe that a memory operation is failing versus being able to correct it. Content circuits become detectable at 4B, but aren't reliably steerable until 8B. So if you need diagnosable memory behavior - not just working behavior - you're looking at 8B as the practical floor.

Shared infrastructure, practical diagnostics

The architectural finding is also useful. Write and Read operations share a late-layer hub that's a context-grounding substrate, and this structure transfers across both memory frameworks tested. That shared infrastructure is what allows the authors to localize per-operation memory failures at 76.2% accuracy without supervision - meaning you can flag broken memory operations without collecting labeled failure data first.

For teams running AI memory at scale, that's a direct path to better monitoring. The circuit analysis gives you a hook into what the model is actually doing, rather than treating failures as black-box output anomalies.

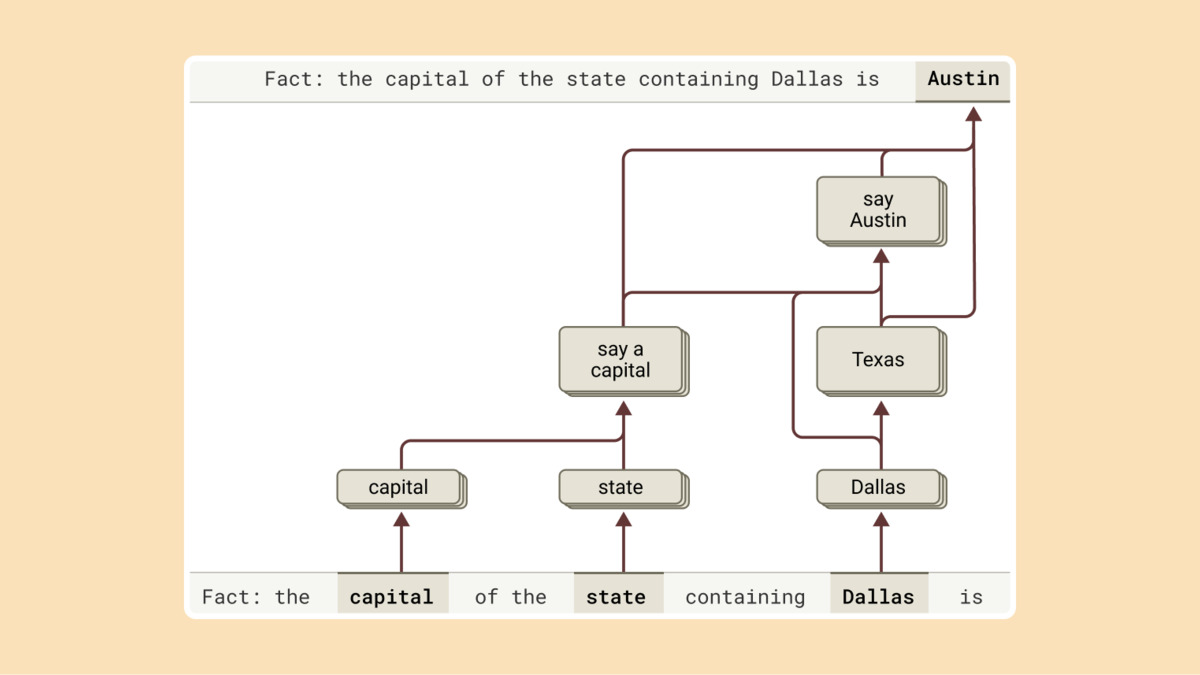

Attribution graphs trace the internal steps a model takes to arrive at an output - the same circuit analysis approach used to diagnose silent memory failures.

Source: anthropic.com

Attribution graphs trace the internal steps a model takes to arrive at an output - the same circuit analysis approach used to diagnose silent memory failures.

Source: anthropic.com

Building memory that survives three days

Paper: "MEMTIER: Tiered Memory Architecture and Retrieval Bottleneck Analysis for Long-Running Autonomous AI Agents" Authors: Bronislav Sidik, Lior Rokach arXiv: 2605.03675

Most memory evaluations measure a session. MEMTIER starts from a different baseline: tool-execution success rates degrade 14 percentage points over 72-hour operation windows. The paper isn't benchmarking a single conversation - it's measuring what happens when an agent runs continuously for three days, and flat-file memory systems fall apart under that load.

Five signals and a consolidation daemon

The architecture runs inside the OpenClaw agent runtime and has five moving parts: a structured episodic JSONL store, a five-signal weighted retrieval engine, an attention-attributed cognitive weight update loop, an asynchronous consolidation daemon that promotes episodic facts to a semantic tier, and a PPO-based policy that adapts retrieval weights from tool-execution outcomes.

That last point is the design choice worth noting. Most retrieval systems train on human preference data or use fixed weights. Here, the reward signal comes from whether the tool call worked. The policy learns from actual agent behavior, not from conversation ratings.

| Metric | Full-context baseline | MEMTIER (Qwen2.5-7B, 6GB GPU) |

|---|---|---|

| Overall accuracy | 5.0% | 38.2% |

| Single-session recall | - | 68.6-71.4% |

| Temporal reasoning | - | 32.3% |

| Multi-session synthesis | - | 17.3% |

The +33 percentage point improvement runs on a consumer GPU. The GPT-4o RAG BM25 baseline the paper compares against sits at 56% on single-session recall - MEMTIER passes that category with a model an order of magnitude cheaper.

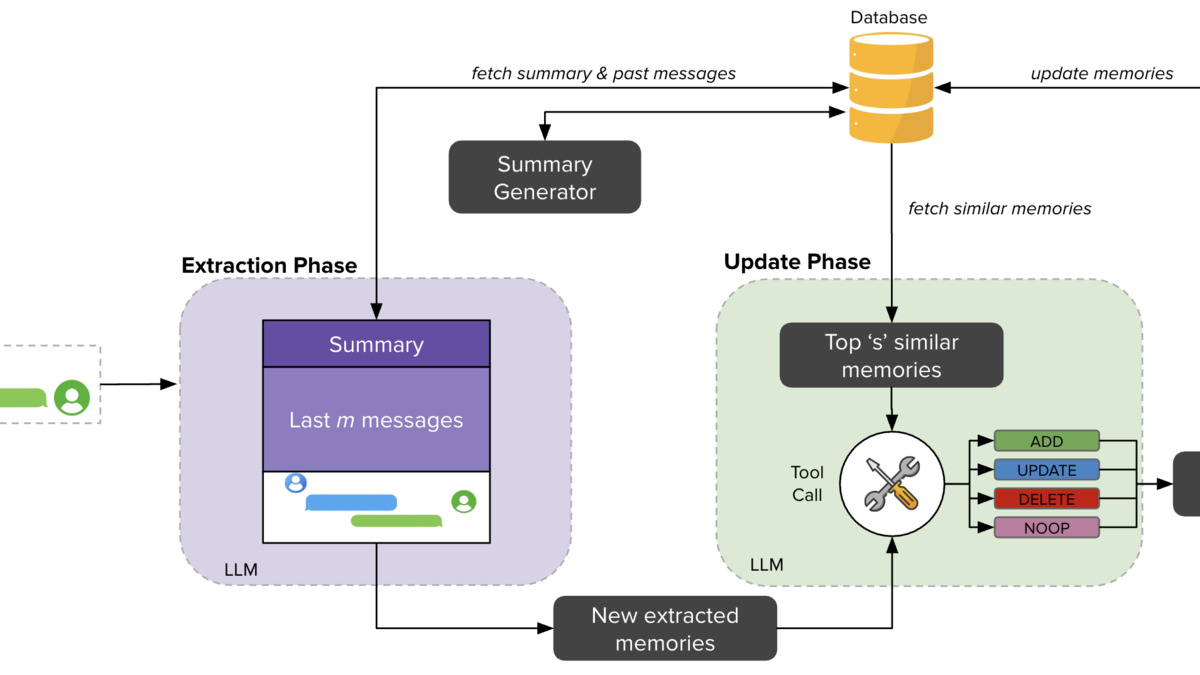

The mem0 pipeline - one of the two memory frameworks used in the circuit analysis paper, and a direct architectural predecessor to MEMTIER's tiered approach.

Source: arxiv.org

The mem0 pipeline - one of the two memory frameworks used in the circuit analysis paper, and a direct architectural predecessor to MEMTIER's tiered approach.

Source: arxiv.org

Multi-session synthesis at 17.3% is the weak point, and the paper doesn't hide it. Reasoning across many sessions is truly unsolved, and the consolidation daemon has limits. Temporal reasoning at 32.3% is respectable but not a closed problem. The honest framing here - strong on single-session, weak on synthesis - is more useful than most memory papers that cherry-pick their benchmark categories.

Teaching a model to grade itself

Paper: "EvoLM: Self-Evolving Language Models through Co-Evolved Discriminative Rubrics" Authors: Shuyue Stella Li, Rui Xin, Teng Xiao, Yike Wang, Rulin Shao, Zoey Hao, Melanie Sclar, Sewoong Oh, Faeze Brahman, Pang Wei Koh, Yulia Tsvetkov arXiv: 2605.03871

All preference signals are constructed from the policy's own outputs via temporal contrast with earlier checkpoints - no human annotation, no external supervision.

The standard post-training recipe needs either human raters, a proprietary reward API, or a separately trained reward model. EvoLM cuts all three from the pipeline.

The approach trains two alternating capabilities inside a single model. A rubric generator produces instance-specific evaluation criteria - not generic rubrics, but criteria optimized to discriminate between good and less-good outputs for that specific input. A policy component trains on scores produced by those rubrics. The two evolve together.

Why temporal contrast works

The preference signal comes from comparing the current model checkpoint against earlier ones. Rather than asking whether response An is better than response B (which requires an external judge), the system asks whether the current version's output is better than what a weaker version of itself would have produced. The rubric generator is calibrated to make that comparison legible - it produces criteria that maximize the judge's ability to distinguish between the two generations.

A Qwen3-8B model trained this way beats GPT-4.1 on RewardBench-2 by 25.7%. It outperforms SkyWork-RM, the current state-of-the-art 8B reward model, by 16%. The trained policy hits 69.3% average on the OLMo3-Adapt suite.

The open question is long-run stability. Co-evolved systems can converge toward shared blind spots as easily as they converge toward correctness. The paper addresses this across 32 pages and 21 tables, and the results hold within the assessed window. Whether the calibration holds over longer training runs or distribution shifts is what the field needs to test next.

The thread connecting all three

These papers aren't coordinated, but they diagnose the same gap. We don't have reliable primitives for agent cognition at scale - not for understanding what models do with memory (the circuits paper), not for keeping memory coherent over time (MEMTIER), and not for building the feedback loops that let models improve without expensive human scaffolding (EvoLM).

What's changed is precision. The diagnostics are sharper now: 76.2% unsupervised failure localization, 14 percentage points of degradation over 72 hours, a 25.7% gap against GPT-4.1 on reward modeling. Sharp numbers make the remaining gaps tractable rather than vague. That's progress, even when the problems aren't solved.

Sources:

- "What Happens Inside Agent Memory? Circuit Analysis from Emergence to Diagnosis" - arXiv 2605.03354

- "MEMTIER: Tiered Memory Architecture and Retrieval Bottleneck Analysis for Long-Running Autonomous AI Agents" - arXiv 2605.03675

- "EvoLM: Self-Evolving Language Models through Co-Evolved Discriminative Rubrics" - arXiv 2605.03871

- mem0 GitHub repository

- A-MEM: Agentic Memory for LLM Agents - arXiv 2502.12110