NVIDIA Ising Review: AI Models for Quantum Hardware

NVIDIA Ising is the first open AI model family for quantum computing - a 35B VLM for processor calibration and CNN decoders for real-time error correction, already deployed at 20+ research institutions.

NVIDIA isn't waiting for quantum computers to become useful on their own. With the April 2026 release of NVIDIA Ising - the first open AI model family designed specifically for quantum hardware - the company is betting that machine learning can close the gap between where quantum processors are now and where they need to be to run meaningful computations. That's a genuinely interesting bet, and after spending time with the technical details, the benchmarks, and the list of institutions already rolling out these models, I think it's one worth taking seriously. With some caveats.

TL;DR

- 8.0/10 - a technically strong first-in-class open model family for quantum hardware, but built for quantum labs, not software developers

- Ising Calibration 1 (35B VLM) scores 14.5% above GPT-5.4 on NVIDIA's QCalEval benchmark for quantum processor analysis; Ising Decoding cuts error correction latency 2.5x vs. the standard pyMatching decoder

- Vendor-created benchmark and hardware dependency limit independent validation for now - 20+ research institutions are deploying it, but hands-on use requires QPU hardware

- Who should use it: quantum hardware labs, national laboratories, university research groups running surface code quantum processors; skip if you have no QPU access

What NVIDIA Ising Actually Solves

To understand why this release matters, you need to grasp the scale of the operational problem in quantum computing today. A useful quantum processor needs to run millions of quantum operations reliably. Current hardware makes roughly one error per thousand operations. Getting from that error rate to the one-in-a-trillion threshold required for fault-tolerant computation involves two gruelling engineering challenges that NVIDIA is now targeting directly.

The first is calibration. Every quantum processor drifts constantly - temperature fluctuations, electromagnetic interference, and material imperfections all shift qubit behavior over time. Traditionally, engineers re-calibrate manually, a process that can take days and requires deep domain expertise for each new hardware configuration. The second is decoding. Quantum error correction codes generate enormous volumes of syndrome data that classical systems must decode in real time, at least as fast as the quantum processor produces it. Traditional decoders like pyMatching can keep up - but only barely, and accuracy degrades at scale.
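To make the "keep up in real time" requirement concrete, here is a minimal sketch of what happens when a decoder is slightly slower than the hardware it serves. The 1 microsecond round time and both decode times are illustrative assumptions, not figures from NVIDIA or from any specific QPU, and `backlog_after` is a made-up helper name.

```python
def backlog_after(rounds: int, round_time_us: float, decode_time_us: float) -> float:
    """Undecoded rounds left in the queue once `rounds` syndrome rounds exist."""
    produced_time_us = rounds * round_time_us
    # The decoder can finish at most this many rounds in the same wall-clock time.
    decoded = min(rounds, produced_time_us / decode_time_us)
    return rounds - decoded


ROUND_TIME_US = 1.0  # assumed syndrome-extraction cycle time (illustrative only)

for label, decode_us in [("keeps up", 0.8), ("falls behind", 1.25)]:
    queued = backlog_after(1_000_000, ROUND_TIME_US, decode_us)
    print(f"{label}: {queued:,.0f} rounds queued after 1M rounds")
```

The point of the toy model is that the backlog grows linearly and without bound as soon as decode time exceeds round time, which is why decoder latency is treated as a hard constraint rather than a nice-to-have.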

NVIDIA Ising targets the two critical operational bottlenecks preventing quantum computers from reaching fault tolerance: calibration and real-time error correction.

Source: developer-blogs.nvidia.com


NVIDIA Ising addresses both problems with separate models. Ising Calibration 1 is a 35-billion parameter vision-language model that interprets experimental output from quantum processors and recommends calibration actions autonomously. Ising Decoding is a pair of compact 3D convolutional neural networks (912,000 and 1.79 million parameters) that decode surface-code quantum error syndromes in real time. Both are open-source under Apache 2.0 and available on HuggingFace, GitHub, and NVIDIA NIM.
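For readers who have not met syndrome decoding before, a toy version may help. The sketch below uses a 5-bit repetition code, which is far simpler than the surface code Ising Decoding targets, and a brute-force minimum-weight rule instead of a neural network. It only illustrates the input and output of a decoder: parity checks in, a guessed error pattern out. The function names are my own.

```python
def syndrome(bits):
    """Parity checks between neighbouring bits: 1 marks a disagreement."""
    return [bits[i] ^ bits[i + 1] for i in range(len(bits) - 1)]


def decode(synd):
    """Return the lowest-weight error pattern consistent with the syndrome."""
    # Rebuild one candidate by assuming the first bit carries no error.
    candidate = [0]
    for s in synd:
        candidate.append(candidate[-1] ^ s)
    # The only other consistent pattern is the complement; keep the lighter one.
    complement = [b ^ 1 for b in candidate]
    return candidate if sum(candidate) <= sum(complement) else complement


error = [0, 0, 1, 0, 0]      # a single bit flip on the middle qubit
synd = syndrome(error)       # the decoder only ever sees this
correction = decode(synd)
print("syndrome:", synd, "correction:", correction)
```

A surface-code decoder solves the same kind of inference problem in two spatial dimensions plus time, which is where the matching algorithms in pyMatching and the 3D convolutions in Ising Decoding come in.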

Ising Calibration 1 - The 35B Quantum VLM

The calibration model is the more novel of the two. NVIDIA trained a 35B MoE vision-language model - internally designated Ising-Calibration-1-35B-A3B, activating 3B parameters per forward pass - on multi-modality qubit data from partners spanning superconducting qubits, quantum dots, trapped ions, and neutral atoms. The training corpus includes both synthetic simulation data and real QPU measurements from IQM, Conductor Quantum, EeroQ, Fermilab, and the UK National Physical Laboratory.

The model can look at a calibration plot - the kind of experimental output a quantum physicist normally interprets manually - and generate both a diagnosis and a recommended next step. Integrated with NVIDIA's NeMo Agent Toolkit, it runs agentic calibration loops that handle processor bring-up autonomously, reducing calibration time from days to hours.
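The shape of such an agentic loop is easy to sketch, even without access to the model. Everything below is hypothetical: `run_experiment` stands in for a QPU measurement, `stub_model` stands in for the VLM (a real system would send a plot, not a single number), and none of these names come from the NeMo Agent Toolkit.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    diagnosis: str
    action: str              # "shift_drive" or "done"
    amount_mhz: float = 0.0


def run_experiment(drive_mhz: float, qubit_mhz: float) -> float:
    """Stand-in for a QPU measurement: returns the observed detuning in MHz."""
    return qubit_mhz - drive_mhz


def stub_model(detuning_mhz: float, tol_mhz: float = 0.05) -> Recommendation:
    """Placeholder for the calibration model's diagnosis and next step."""
    if abs(detuning_mhz) <= tol_mhz:
        return Recommendation("on resonance", "done")
    # Deliberately under-correct, the way a cautious agent might.
    return Recommendation("drive is detuned", "shift_drive", 0.8 * detuning_mhz)


def calibrate(drive_mhz: float, qubit_mhz: float, max_steps: int = 20):
    """Measure, ask the model, apply its action; stop on 'done' or at max_steps."""
    log = []
    for _ in range(max_steps):
        rec = stub_model(run_experiment(drive_mhz, qubit_mhz))
        log.append(rec.diagnosis)
        if rec.action == "done":
            break
        drive_mhz += rec.amount_mhz
    return drive_mhz, log


final_drive, history = calibrate(drive_mhz=4990.0, qubit_mhz=5000.0)
print(f"final drive: {final_drive:.3f} MHz after {len(history)} model calls")
```

The step cap matters in practice: an autonomous loop that acts on hardware needs a bounded number of iterations and a log a human can audit afterwards.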

| Organization | QPU Architecture |
|---|---|
| Academia Sinica | Superconducting |
| Fermi National Accelerator Laboratory | Superconducting |
| Harvard University | Neutral atoms |
| IQM Quantum Computers | Superconducting |
| Lawrence Berkeley National Lab | Superconducting |
| UK National Physical Laboratory | Multiple modalities |

QCalEval: A Useful Benchmark, With a Catch

NVIDIA created a new benchmark for this release - QCalEval, the first VLM evaluation dataset for quantum processor calibration tasks. It contains 243 samples across 87 scenario types from 22 experiment families, evaluated on six question types covering interpretation, classification, significance assessment, fit quality analysis, and next-step recommendations.

On QCalEval, Ising Calibration 1 scores 74.7 mean, against 72.3 for Gemini 3.1 Pro, 67.8 for Claude Opus 4.6, and 64.6 for GPT-5.4. Those are sizable margins: the gap over GPT-5.4 is 10.1 points on these means (NVIDIA's headline figure is 14.5%), which is not a rounding error.
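It is worth doing the arithmetic on those means yourself, because "points" and "percent" are easy to conflate. The sketch below computes both the absolute and the relative lead from the four scores quoted above; the `margins` helper is my own.

```python
scores = {
    "Ising Calibration 1": 74.7,
    "Gemini 3.1 Pro": 72.3,
    "Claude Opus 4.6": 67.8,
    "GPT-5.4": 64.6,
}


def margins(scores, reference):
    """Absolute (points) and relative (%) lead of `reference` over each other model."""
    ref = scores[reference]
    return {
        name: (round(ref - s, 1), round(100 * (ref - s) / s, 1))
        for name, s in scores.items()
        if name != reference
    }


for name, (points, relative) in margins(scores, "Ising Calibration 1").items():
    print(f"vs {name}: +{points} points, +{relative}% relative")
```

On these means the lead over GPT-5.4 comes out at 10.1 points, or about 15.6% in relative terms. Neither reproduces the 14.5 figure exactly, so that headline number presumably comes from a different aggregation than the mean scores listed here.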

The catch is that QCalEval is a vendor-created benchmark built specifically to assess models trained on exactly this kind of data. The general-purpose models weren't trained for quantum calibration tasks and scored in zero-shot conditions, while Ising Calibration 1 was purpose-trained on the same data distribution the benchmark draws from. It's comparing a specialist to generalists on the specialist's home court. That doesn't make the performance meaningless - specialists should beat generalists on their domain - but "outperforms Gemini 3.1 Pro on our benchmark" needs that context.

The benchmark data was contributed by real quantum labs, which gives QCalEval more credibility than a purely synthetic eval. Northwestern and Fermilab both contributed hardware measurement data to the dataset.

Ising Calibration 1 cuts quantum processor bring-up from days to hours - for the institutions that can run it, this is a concrete operational improvement, not a benchmark exercise.

Ising Decoding - Where the Numbers Get Cleaner

The Ising Decoder models are easier to assess independently, because their competitor (pyMatching) is a well-understood open-source tool with years of production deployment. The benchmarks here are also more straightforwardly interpretable.

Two model variants cover different deployment requirements:

| Variant | Parameters | Latency vs pyMatching | Accuracy (LER) vs pyMatching |
|---|---|---|---|
| Ising Decoder Fast | 912,000 | 2.5x faster | 1.11x better at d=13, p=0.003 |
| Ising Decoder Accurate | 1,790,000 | 2.25x faster | 1.53x better at d=13, p=0.003 |
| Ising Decoder Accurate (large) | 1,790,000 | - | 3x better LER at d=31 |

The Fast model targets latency-constrained pipelines - NVIDIA projects 0.11 microseconds per round on 13 GB300 GPUs with FP8 quantization, running 1000 rounds simultaneously. The Accurate model accepts a small speed penalty for significantly better logical error rates, especially at larger code distances (d=31) where the 3x LER improvement is most relevant.
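To see why code distance matters so much here, it helps to plug the benchmark's p=0.003 into the usual rule of thumb for surface codes, where the logical error rate falls roughly as a * (p / p_th)^((d+1)/2). The threshold `p_th = 0.01` and prefactor `a = 0.1` below are textbook-style assumptions, not values from NVIDIA's evaluation, so treat the outputs as orders of magnitude only.

```python
def logical_error_rate(p: float, d: int, p_th: float = 0.01, a: float = 0.1) -> float:
    """Heuristic surface-code scaling for odd distance d (assumed p_th and a)."""
    return a * (p / p_th) ** ((d + 1) // 2)


def min_distance(p: float, target: float) -> int:
    """Smallest odd distance whose heuristic rate is at or below `target`.

    Only meaningful below threshold (p < p_th); above it the loop never ends.
    """
    d = 3
    while logical_error_rate(p, d) > target:
        d += 2
    return d


for d in (13, 31):
    print(f"d={d}: p_L ~ {logical_error_rate(0.003, d):.1e}")
print("distance needed for 1e-12:", min_distance(0.003, 1e-12))
```

Under these assumptions the heuristic gives roughly 2e-5 at d=13 and 4e-10 at d=31, and a one-in-a-trillion target pushes the distance into the low 40s. A decoder that delivers a better logical error rate at a given distance effectively buys back some of that qubit overhead.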

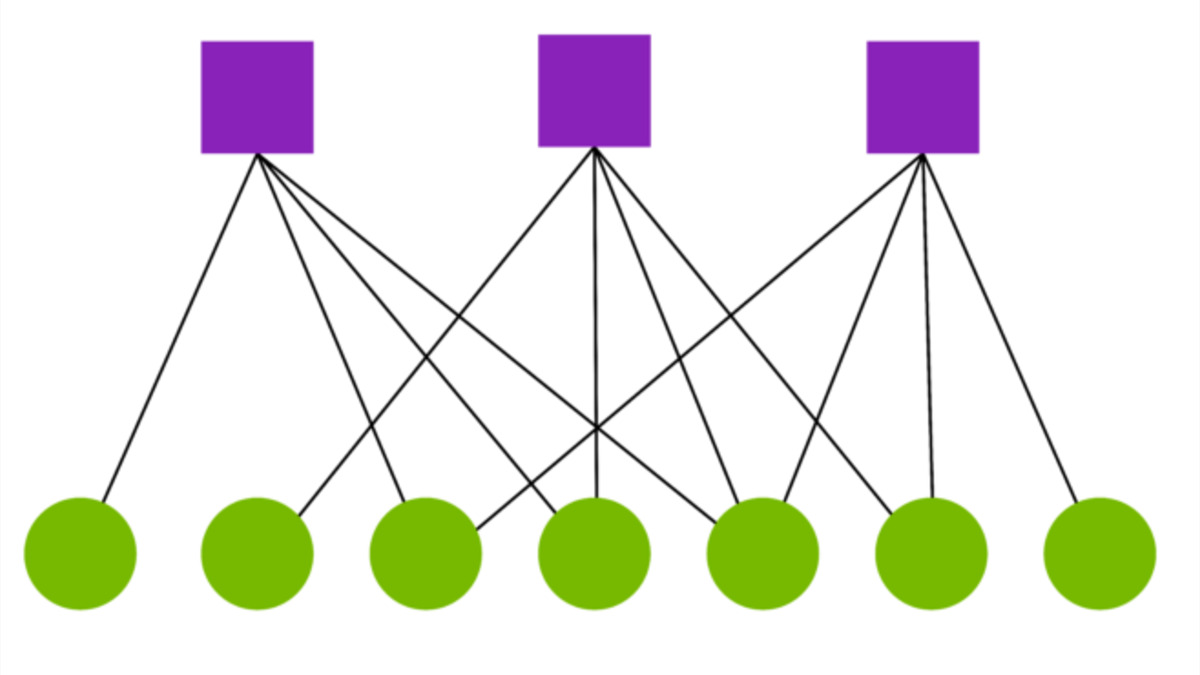

NVIDIA's approach to quantum error correction uses AI to decode surface-code syndromes in real time, a critical bottleneck on the path to fault-tolerant quantum computing.

Source: developer-blogs.nvidia.com


Both models run on CUDA-Q and integrate with PyTorch training pipelines, supporting custom noise models and fine-tuning for hardware-specific configurations. The open training framework is probably the most practically useful part of the release for research groups: you can retrain the decoders on your own QPU's error profile without building the architecture from scratch.
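What "retrain on your own noise model" means in practice is mostly a data-generation problem: sample errors under your hardware's error rates, record the syndrome history, and pair it with the true error as the label. The sketch below does that for a repetition code with separate data and measurement error rates. It is a standard-library stand-in, not NVIDIA's training framework, and it does not use CUDA-Q or PyTorch. `sample_shot` and its parameters are hypothetical names.

```python
import random


def sample_shot(n_data: int, rounds: int, p_data: float, p_meas: float,
                rng: random.Random):
    """One training pair: (detection events [rounds][n_data - 1], final error)."""
    error = [0] * n_data
    prev = [0] * (n_data - 1)
    volume = []
    for _ in range(rounds):
        # Data-qubit bit flips accumulate from round to round.
        error = [e ^ (rng.random() < p_data) for e in error]
        true_synd = [error[i] ^ error[i + 1] for i in range(n_data - 1)]
        # Each parity measurement is itself misread with probability p_meas.
        measured = [s ^ (rng.random() < p_meas) for s in true_synd]
        # Detection events are changes between consecutive measured rounds.
        volume.append([m ^ q for m, q in zip(measured, prev)])
        prev = measured
    return volume, error


rng = random.Random(0)
dataset = [sample_shot(5, 4, p_data=0.01, p_meas=0.02, rng=rng) for _ in range(1000)]
print(len(dataset), "shots, each", len(dataset[0][0]), "rounds x", len(dataset[0][0][0]), "checks")
```

The rounds-by-checks grid is the one-dimensional analogue of the space-time syndrome volume a 3D convolutional decoder consumes. Swapping in measured error rates for `p_data` and `p_meas` is the step that would make a retrained decoder specific to one device.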

Who Is Actually Using This

More than twenty research organizations were confirmed to be deploying Ising at launch. The list cuts across hardware types and geographies: Atom Computing, Cornell University, Infleqtion, EeroQ, and multiple national labs. That breadth matters. If the models only worked on a single QPU architecture, the adoption list would look narrower.

Ising Calibration 1 is already in use at Atom Computing, Academia Sinica, and Conductor Quantum. Ising Decoding is deployed at Cornell and Infleqtion. Harvard and Fermilab contributed data to the benchmark and are evaluating integration with their own quantum systems.

This isn't a research paper with a future roadmap. Real institutions are running this code on real hardware, which is a much stronger validation signal than benchmark scores alone.

Where the Skepticism Is Warranted

Three concerns are worth naming.

First, the calibration benchmark. As noted, QCalEval is vendor-created and NVIDIA controls the training data and the evaluation protocol. Until an independent research group publishes results using their own QPU data, the margins over general-purpose models should be treated as directionally correct but unaudited.

Second, model generalization. Quantum processors drift - that's the problem these models are solving - and the drift behavior varies by architecture, fabrication run, and operating environment. A calibration model trained on data from IQM's superconducting qubits doesn't automatically generalize to a different qubit technology. NVIDIA provides fine-tuning tools for this reason, but that means each lab needs the engineering resources to run a custom training pipeline. This is manageable for national labs and well-funded university groups; it's a significant barrier for smaller operations.

Third, the hardware dependency. NVIDIA's recommended deployment stack - NVQLink for real-time QPU interconnect, Grace Blackwell for inference, CUDA-Q for the software layer - is deeply integrated with NVIDIA infrastructure. The models are open-source, but the optimized deployment path runs on NVIDIA hardware. That's not unique to this release (the same dynamic applies to CUDA for GPU workloads), but "open source" and "hardware-agnostic" are not the same thing here.

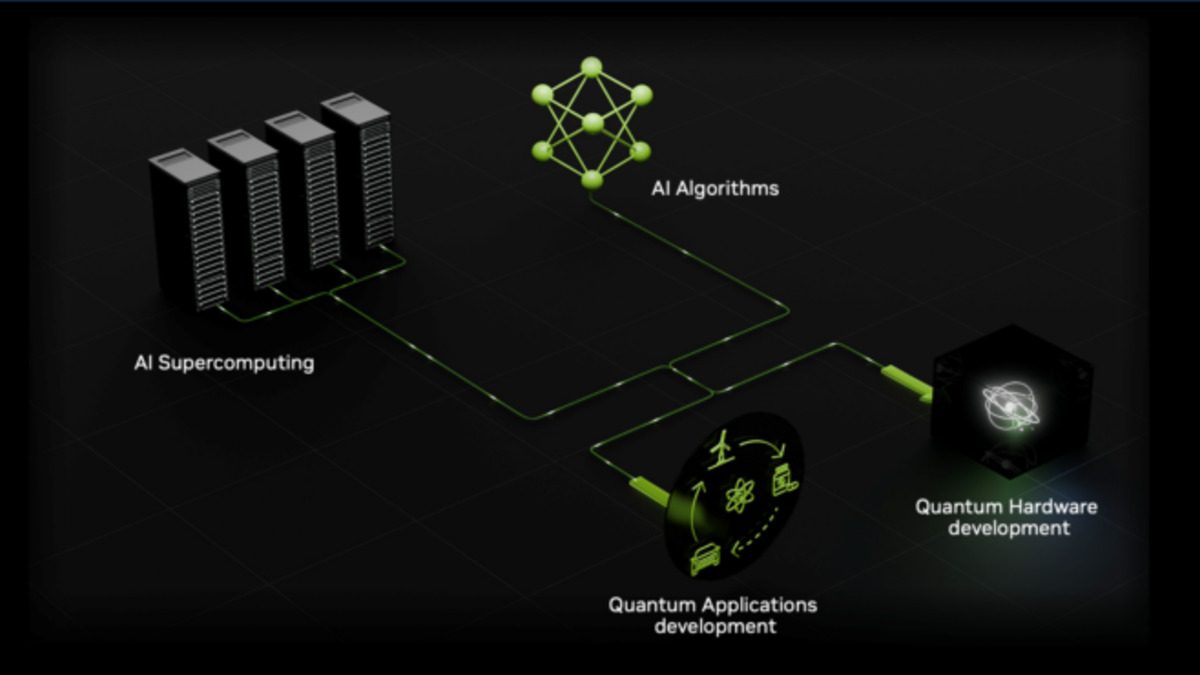

NVIDIA's quantum strategy pairs AI inference on GPU supercomputers with real-time classical control of quantum processors via NVQLink.

Source: developer-blogs.nvidia.com


How It Fits NVIDIA's Broader Strategy

This release follows a pattern visible in NVIDIA's other domain-specific model work. Nemotron 3 Super targeted agentic coding with architecture decisions that sacrificed general knowledge for agent throughput. Alpamayo went after robotics with a specialized model that reached 100K downloads in its first weeks. Ising continues the logic: find a domain where general-purpose models can't compete, build a purpose-trained open model, release it with the data and training frameworks, and let adoption prove the value.

The quantum bet is longer-dated than robotics or coding. Fault-tolerant quantum computers are still years away, and the organizations that benefit from Ising today are a narrow slice of the global research community. But those organizations are exactly the ones that will determine whether quantum computing becomes practically useful - and equipping them with better tools now builds NVIDIA into the quantum stack before it matures.

Strengths and Weaknesses

Strengths

- First open AI model family for quantum hardware, with no direct competitor

- Concrete performance improvements on real operational metrics (calibration time, decoding latency, logical error rate)

- Open-source under Apache 2.0 with training frameworks and fine-tuning tools included

- Strong institutional adoption signal at launch: national labs, universities, hardware vendors across multiple QPU architectures

- QCalEval benchmark provides the first standardized evaluation dataset for this task class, useful even independent of the Ising models

Weaknesses

- No QPU access means no hands-on testing for most reviewers or developers

- QCalEval is vendor-created and lacks independent validation as of May 2026

- Generalization to non-training-distribution QPU architectures requires lab-specific fine-tuning

- Ideal deployment requires NVIDIA's hardware stack (NVQLink, Blackwell GPUs, CUDA-Q)

- Real-time decoder performance figures are projections for GB300 GPU configurations not yet widely deployed

Verdict

NVIDIA Ising is a serious technical release that tackles two genuinely hard problems in quantum computing with models that show real performance improvements over established baselines. The 2.5x decoding speedup over pyMatching is independently meaningful, and the 20+ institutional adopters at launch are a better validation signal than any benchmark NVIDIA could publish.

The limitations are real: you need quantum hardware to use it, the calibration benchmark needs external validation, and the optimized path runs on NVIDIA's own silicon. None of those concerns undercut the core technical achievement, but they do make this a tool for a specific audience.

Score: 8.0/10. Exceptional for quantum hardware labs. Interesting to follow for everyone else - this is what AI infrastructure for the next generation of computing looks like in its early form.

Sources

- NVIDIA Newsroom: NVIDIA Launches Ising - the World's First Open AI Models for Quantum Computing

- NVIDIA Technical Blog: Ising Introduces AI-Powered Workflows for Fault-Tolerant Quantum Systems

- NVIDIA Developer: Ising Model Page and Documentation

- arXiv:2604.25884 - QCalEval: Benchmarking Vision-Language Models for Quantum Calibration Plot Understanding

- HuggingFace: nvidia/Ising-Calibration-1-35B-A3B model card

- HuggingFace: nvidia/QCalEval dataset

- InfoQ: NVIDIA Launches Ising Open Models for Quantum Computing

- Northwestern University Quantum: Fermilab and Northwestern data contributed to QCalEval

- TradingKey: NVIDIA Ising Launch Boosts Quantum Stocks, IonQ Gains Over 20%