LTX-2.3 Review: Open-Source Video AI That Delivers

LTX-2.3 is a 22-billion-parameter open-source video and audio generation model from Lightricks that rivals closed commercial tools - at zero cloud cost.

Open-source AI video generation has always carried an asterisk. You could run it locally, sure, but you paid in quality - washed-out textures, unstable motion, audio that sounded like it was recorded inside a washing machine. Lightricks' LTX-2.3, released March 5, 2026, is the first open-weights model to credibly remove that asterisk across multiple dimensions at once.

TL;DR

- 8.2/10 - the strongest open-source video+audio model available, with real 4K generation and local inference

- Rebuilt VAE produces sharper textures and fine detail than any prior open model; native portrait mode is a genuine addition for social media workflows

- Complex physics (water, crowds) and emotional tonal subtlety still lag behind top closed systems; I2V instability bugs are present in the current release

- Developers and indie creators who want local inference and commercial-grade 4K quality should use it; teams requiring the highest cinematic coherence should also evaluate Wan 2.2 or Kling 3.0

At 22 billion parameters, LTX-2.3 is nearly three times larger than its predecessor. It runs the full video-audio pipeline in a single diffusion pass rather than layering audio in post. You can run it on an RTX 3080 with GGUF quantization, access it via the fal.ai API at $0.06 per second of 1080p video, or build on top of its open weights (the license terms are covered in the sections below). This is not a research preview or a limited beta.

What Changed in 2.3

LTX-2 (released January 2026) established the architecture. LTX-2.3 is a point release, but the improvements are structural, not cosmetic.

The most consequential change is a rebuilt VAE with a redesigned latent space. In practice this means sharper fabric, cleaner hair, and stable chrome reflections during camera moves - the kinds of fine-detail tests that previous open-source models failed consistently. Curious Refuge Labs, which ran quantitative scoring on the LTX-2 architecture, gave visual fidelity a 7.3 out of 10, calling it "a standout strength" while noting motion coherence at 5.8 and prompt adherence at 6.3. LTX-2.3 targets those latter two scores directly.

The second change is a 4x larger gated attention text connector. Multi-subject prompts with specific spatial relationships - "a red car parked behind a white truck at night" - now hold across the full clip rather than drifting after 3-4 seconds. It's not perfect, but it's measurably better.

Native portrait video (1080x1920) is new in 2.3 and trained on actual vertical data rather than cropped landscape footage. For anyone producing content for TikTok, Reels, or Shorts, this alone is worth the upgrade.

The fourth change is the most technically satisfying: a new vocoder and filtered training data for audio. Because LTX-2.3 produces audio within the same diffusion pass as the video - not as a separate step - a door slam lands on exactly the right frame. It's synchronized at the model level. Community user Aurel Manea, who ran a 40-second chained narrative test using LTX-2.3, wrote that "results were indeed much better than LTX 2.0 regarding movement and coherence," though he noted that 1080p generation introduced artifacts, so he stayed at half resolution and upscaled in post-processing.

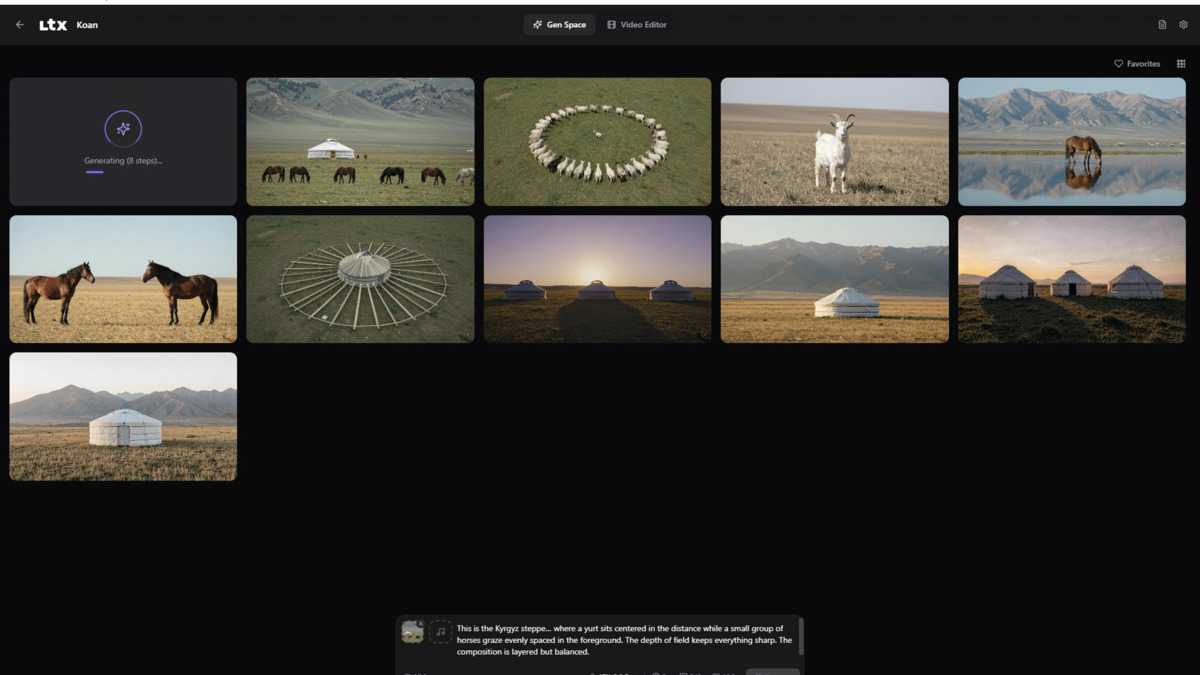

The LTX Desktop app provides a free, local generation workspace with text-to-video, image-to-video, and timeline tools - no cloud account needed.

Source: github.com/Lightricks

Running It Locally

This is where LTX-2.3 diverges most sharply from its cloud-only competition. The model weights are on HuggingFace under the Lightricks license (free for organizations under $10M annual revenue, commercial terms above that). There are four weight variants to choose from:

- ltx-2.3-22b-dev - Full BF16, trainable, 42GB on disk

- ltx-2.3-22b-distilled - 8 steps, CFG=1, faster inference

- Lightricks/LTX-2.3-fp8 - Quantized, roughly 18-20GB

- GGUF quantizations down to Q2_K at ~9-12GB

The official requirements ask for 32GB VRAM and Python 3.12+ with CUDA 12.7+. In practice, the community has pushed this lower. One DEV Community member ran the Q4_K_S GGUF on an RTX 3080 (10GB VRAM) and got a 960x544, 5-second clip with audio in about 2-3 minutes - accepting roughly 5-8% softening in fine detail versus the BF16 baseline, but with identical motion coherence. With less than 12GB of VRAM, the Gemma 3 12B text encoder has to run on CPU, which needs 32GB of system RAM to avoid paging.
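The text-encoder rule above can be written down as a small pre-flight check. This is a minimal sketch: the function name and return labels are hypothetical, and the 12GB and 32GB thresholds are simply the figures quoted in this review, not values read from any LTX tooling.

```python
def text_encoder_placement(vram_gb: float, system_ram_gb: float) -> str:
    """Suggest where to run the Gemma 3 12B text encoder.

    Thresholds are the figures quoted in this review (assumptions, not
    values taken from LTX or ComfyUI code).
    """
    if vram_gb >= 12:
        # Enough VRAM that the review's CPU-offload rule does not apply.
        return "gpu"
    if system_ram_gb >= 32:
        # Under 12GB VRAM: offload to CPU, with enough RAM to avoid paging.
        return "cpu"
    # Under 12GB VRAM and under 32GB RAM: CPU offload is likely to page.
    return "cpu-paging-risk"


if __name__ == "__main__":
    # The RTX 3080 (10GB) setup described above, with 32GB of system RAM.
    print(text_encoder_placement(vram_gb=10, system_ram_gb=32))
```

Running a check like this before loading weights is cheaper than finding out from an out-of-memory error mid-load.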

ComfyUI v0.16.1+ includes native LTX-2.3 support with day-0 templates covering text-to-video, image-to-video, and multi-stage generation with latent upscaling. The key nodes are LTXVSeparateAVLatent, LTXVAudioVAEDecode, CreateVideo, and SaveVideo. Recommended generation parameters are a guidance scale of 3.0-3.5, 20-30 steps for iteration, and 40+ for final renders.

One constraint to plan around: width and height must each be divisible by 32, and the frame count must be a multiple of 8 plus 1 (that is, 8k + 1, such as 97 or 121). These are hard requirements, not suggestions.
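A small helper makes the size and frame-count rules easy to apply before a job is queued. This is a sketch under the constraints as stated in this review; the function names are hypothetical, and whether the pipeline accepts very small values (such as a single frame) is not something the source confirms.

```python
def snap_dimension(value: int, multiple: int = 32) -> int:
    """Round a width or height down to the nearest multiple of 32 (at least 32)."""
    return max(multiple, (value // multiple) * multiple)


def snap_frame_count(frames: int) -> int:
    """Round a frame count down to the nearest value of the form 8*k + 1 (at least 1)."""
    return max(1, ((frames - 1) // 8) * 8 + 1)


def is_valid_request(width: int, height: int, frames: int) -> bool:
    """Check the divisibility rules described in the review."""
    return (
        width > 0
        and height > 0
        and frames > 0
        and width % 32 == 0
        and height % 32 == 0
        and frames % 8 == 1
    )


if __name__ == "__main__":
    print(is_valid_request(960, 544, 121))        # the community test resolution
    print(snap_dimension(1080), snap_frame_count(120))
```

Note that 1080 is not itself a multiple of 32, so a 1080-line request snaps down to 1056 here; how a given pipeline handles 1080-line output (cropping or padding) is not covered by the sources for this review.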

Speed Advantage

LTX-2.3 is about 18 times faster than Wan 2.2-14B on the same H100 hardware, according to the LTX team's own benchmarking. That number is unverified by independent third parties, but the community consensus on generation times is consistent with a large speed advantage. Inference speed compounds: rapid iteration on 5-10 second draft clips is practical on hardware most developers already own.

The distilled variant (8 steps, CFG=1) trades a small quality margin for a further speed gain. For storyboarding and iteration, the distilled variant is the right choice; for final renders at 4K, the full dev model is worth the extra time.
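The draft-versus-final split can be captured as a handful of presets. The sketch below is illustrative only: the `SamplingPreset` class and the stage names are hypothetical, the checkpoint names are the ones listed under Running It Locally, and the step and guidance values are picked from inside the ranges quoted in this review rather than taken from any official configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingPreset:
    checkpoint: str        # weight variant named in this review
    steps: int             # number of diffusion steps
    guidance_scale: float  # CFG / guidance scale


# Values chosen from the ranges quoted in the review (assumptions, not defaults
# read from LTX or ComfyUI).
PRESETS = {
    "storyboard": SamplingPreset("ltx-2.3-22b-distilled", steps=8, guidance_scale=1.0),
    "iterate": SamplingPreset("ltx-2.3-22b-dev", steps=25, guidance_scale=3.0),
    "final": SamplingPreset("ltx-2.3-22b-dev", steps=40, guidance_scale=3.5),
}


def preset_for(stage: str) -> SamplingPreset:
    """Look up a preset, failing loudly on an unknown stage name."""
    try:
        return PRESETS[stage]
    except KeyError:
        raise ValueError(f"unknown stage {stage!r}; expected one of {sorted(PRESETS)}")


if __name__ == "__main__":
    for stage in PRESETS:
        print(stage, preset_for(stage))
```

Keeping the presets in one place makes it harder to render a final clip with draft settings by accident.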

The Cloud Option: fal.ai

If you don't want to manage GPU infrastructure, fal.ai exposes LTX-2.3 via seven serverless endpoints: text-to-video (standard and fast), image-to-video (standard and fast), audio-to-video, extend-video, and retake-video. With the Python SDK, generating a clip takes about three lines.

Pricing is per second of generated video:

| Resolution | Standard | Fast |
|---|---|---|
| 1080p | $0.06/s | $0.04/s |
| 1440p | $0.12/s | $0.08/s |
| 4K | $0.24/s | $0.16/s |

A 10-second 4K clip at standard quality costs $2.40. Audio-to-video, Extend, and Retake are priced at $0.10/s regardless of resolution. Worth noting: fal.ai lists the model as Apache 2.0 on its platform page, while Lightricks' own license says it is free for organizations under $10M in annual revenue. If you're building a commercial product above that threshold, verify which terms apply directly with Lightricks before assuming either license governs.
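For budgeting, the per-second prices above reduce to a lookup and a multiplication. The following is a minimal sketch: the function and mode names are hypothetical, and the rates are copied from the pricing table in this review rather than fetched from fal.ai, so they may be out of date.

```python
from decimal import Decimal

# USD per second of generated video, as listed in this review's pricing table.
PRICE_PER_SECOND = {
    ("1080p", "standard"): Decimal("0.06"),
    ("1080p", "fast"): Decimal("0.04"),
    ("1440p", "standard"): Decimal("0.12"),
    ("1440p", "fast"): Decimal("0.08"),
    ("4K", "standard"): Decimal("0.24"),
    ("4K", "fast"): Decimal("0.16"),
}

# Modes the review says are billed at a flat rate regardless of resolution.
FLAT_RATE_MODES = {"audio-to-video", "extend", "retake"}
FLAT_RATE = Decimal("0.10")


def estimate_cost(seconds, resolution="1080p", tier="standard", mode="text-to-video"):
    """Return the estimated cost in USD for a clip of the given length."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    if mode in FLAT_RATE_MODES:
        rate = FLAT_RATE
    else:
        rate = PRICE_PER_SECOND[(resolution, tier)]
    # Decimal avoids floating-point drift in currency arithmetic.
    return rate * Decimal(str(seconds))


if __name__ == "__main__":
    print(estimate_cost(10, "4K", "standard"))  # the 10-second 4K example above
```

Multiplying by the number of draft iterations per finished clip gives a more realistic figure than the single-render price.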

"We chose to release LTX-2 as fully open source. Not to lead a technological debate, but to enable others to integrate this technology into their own work on their own terms." - Zeev Farbman, CEO of Lightricks

How It Compares

The clearest head-to-head is with Kling 3.0, which we reviewed earlier this year. Kling focuses on multi-shot narrative sequences with strong subject consistency across camera angles - it's a cinematic storytelling tool. LTX-2.3 wins on resolution (4K vs 1080p), open-source availability, speed, and native portrait mode. Kling 3.0 still has stronger multi-character audio with voice reference capability.

Against Wan 2.2, the other major open-source competitor, LTX-2.3 wins on speed, resolution ceiling, native audio, and portrait support. Wan 2.2 retains the edge on cinematic camera control - complex dolly, crane, and tracking shots - and has a more mature community ecosystem given it launched earlier.

Against Seedance 2.0 and Sora, LTX-2.3 matches or exceeds them on resolution and frame rate. The closed systems retain advantages in very long-form coherence, complex multi-subject scenes, and physics-intensive content like water and fabric dynamics.

The community around LTX-2.3 has grown quickly since the LTX-2 release in January. The model passed 5 million downloads before LTX-2.3 shipped, and community members contributed EasyCache (2.3x inference speedup), NVFP8 quantization, custom LoRAs, and ComfyUI node extensions. The HuggingFace model page has 796,000 monthly downloads and 694 likes; the launch Reddit thread hit 700 upvotes. That momentum matters for an open-source project more than any benchmark number.

Known Issues and Limitations

The current release has real bugs. Image-to-video crashes and instability are documented in HuggingFace community discussions. The x2 spatial upscaler adds unwanted text overlays on some outputs. Diffusers library support isn't yet complete - model_index.json is missing. The audio-to-video mode is broken for some users. The LTX-2.3 Fast variant doesn't support audio-to-video, Extend, or Retake.

Beyond bugs, there are model-level weaknesses. Curious Refuge Labs noted that "LTX reads emotional cues as a sequence of surface gestures" - it follows sentence structure but misses tone and sentiment. Scenes with complex physics (water interactions, crowd dynamics, multi-subject motion) degrade markedly. Human behavior logic has gaps: the model has been observed cutting a cake from the edge rather than the center, a minor example of the general pattern that its knowledge of the physical world is shallow.

Out-of-memory errors occur when loading the Gemma 3 12B text encoder alongside the main model, sometimes retaining up to 37GB of graph activations. Tensor dimension mismatches appear when mixing LTX-2.0 assets with 2.3 pipelines.

These are solvable problems. The model is two weeks old. A project with 5 million downloads and an active community will iterate quickly. But they're real problems that'll affect any production workflow right now.

Strengths

- Truly runs locally on consumer hardware with FP8 and GGUF quantization

- 4K at up to 50 FPS, with 20-second clip support - resolution and length that closed models often cap lower

- Native audio sync in-model, not post-processed - the timing is correct

- Portrait 9:16 mode trained on real vertical data, not cropped landscape

- Free license for organizations under $10M annual revenue removes the cloud dependency entirely

- ~18x faster than Wan 2.2 on equivalent hardware, per Lightricks' own benchmarks

- ComfyUI day-0 support and active community ecosystem

Weaknesses

- I2V instability and several reproducible bugs in the current v2.3 release

- Complex physics, emotional nuance, and multi-character coordination still lag top closed systems; Wan 2.2 keeps the edge for cinematic camera work

- VRAM requirements are high for the full BF16 model; quantization involves quality tradeoffs

- Diffusers integration incomplete

- License clarity between fal.ai and Lightricks terms requires verification for commercial users

Verdict

8.2/10

LTX-2.3 is the strongest open-source video generation model available today. The rebuilt VAE and native portrait support are real advances. The speed advantage over Wan 2.2 is sizable. For developers who want to build video generation into applications without a cloud dependency, or for indie creators running 4K video workflows on their own hardware, this is the best option on the market right now.

The bugs are real and the emotional depth limitations are genuine. Teams doing cinematic long-form work should run comparative tests against Wan 2.2 before committing to LTX-2.3 as their primary model. But for most practical use cases - social media content, product visualization, storyboarding, API-powered apps - LTX-2.3 earns the recommendation.

Zeev Farbman's open-source strategy is paying off. Community contributions have already delivered a 2.3x inference speedup and NVFP8 quantization support. The model launched two weeks ago. It's going to be noticeably different in two months.

Sources

- LTX-2.3 Release Announcement - ltx.io

- LTX-2.3 Model Card - HuggingFace

- LTX-2 open-source announcement - GlobeNewswire

- LTX-2 Review - Curious Refuge Labs

- LTX-2.3 Low VRAM Long Video Workflow - Aurel Manea

- Running LTX-2.3 Locally - DEV Community

- fal.ai LTX-2.3 API and Pricing

- LTX-2.3 System Requirements - docs.ltx.video

- LTX-2.3 vs Wan 2.2 comparison

- ComfyUI Day-0 LTX-2.3 Support

- Zeev Farbman LinkedIn - LTX-2 Announcement

- LTX-2.3 Community Discussions - HuggingFace