GPT-5.5 Review: OpenAI's First Full Retrain Shines

GPT-5.5 is OpenAI's first completely retrained base model since GPT-4.5, leading the field on agentic coding and computer use - but the doubled per-token pricing and delayed API access require careful evaluation.

Four days after its April 23 launch, GPT-5.5 is already reshaping the conversation about what a frontier model should be able to do on its own. This is the first time OpenAI has trained a new base model from scratch since GPT-4.5 - every GPT-5.x release between them was a fine-tune or variant, not a genuine ground-up retrain. The results show. GPT-5.5 leads the field on Terminal-Bench 2.0 at 82.7%, tops the computer-use rankings with 78.7% on OSWorld-Verified, and more than doubles its predecessor's long-context recall at 1M tokens (74.0% vs 36.6% on MRCR v2). It also costs twice as much per token as GPT-5.4 and, at launch, had no API access at all. Both of those facts matter.

TL;DR

- 9.0/10 - the best agentic coding and computer-use model available right now, with the first genuine architectural leap from OpenAI in over a year

- Key strength: leads the field on Terminal-Bench 2.0 (82.7%) and OSWorld-Verified (78.7%), with token efficiency gains that largely offset the doubled per-token price for long agentic runs

- Key weakness: loses to Claude Opus 4.7 on SWE-Bench Pro (58.6% vs 64.3%), API delayed at launch, and short-prompt workloads pay 2x GPT-5.4 pricing without benefiting from the efficiency gains

- Use it if your workflows are agentic, multi-step, and run in Codex or ChatGPT; skip it if you need API-native access today or your workload is short discrete prompts at volume

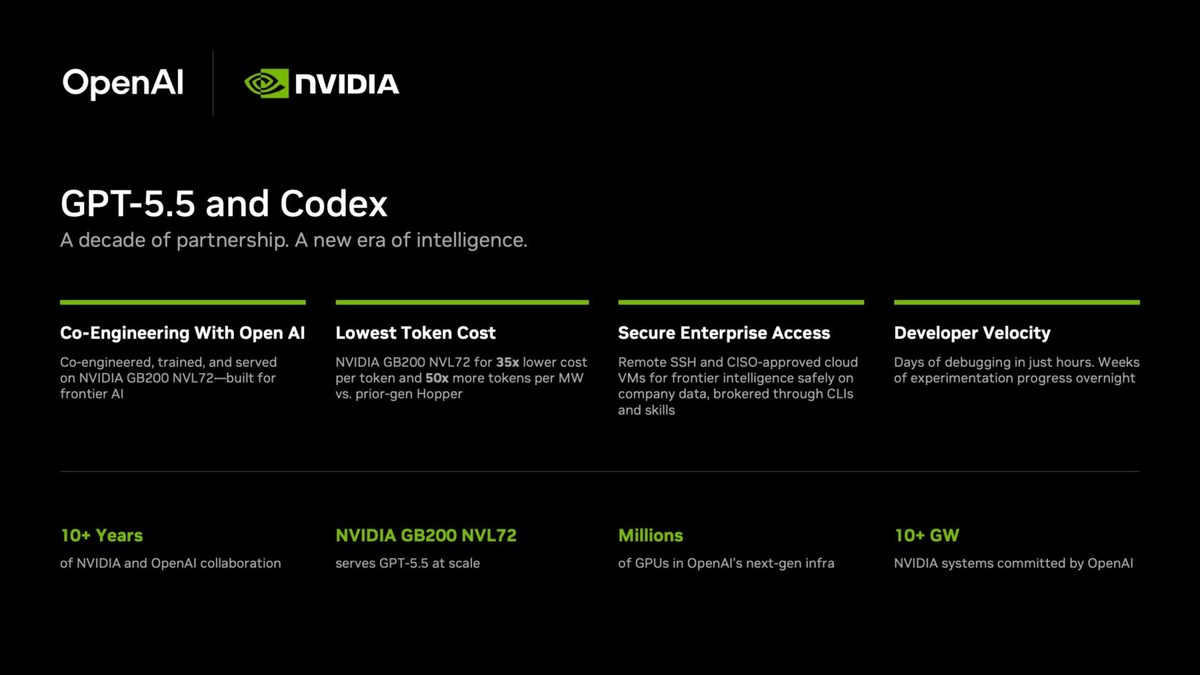

What Changed in the Retraining

OpenAI's internal codename for GPT-5.5 was "Spud." The name is modest; the scope isn't. Unlike the GPT-5.1 through 5.4 releases - which were fine-tunes layered on existing checkpoints - GPT-5.5 was trained from scratch on NVIDIA GB200 and GB300 NVL72 rack-scale systems. The result is natively omnimodal from the base: text, image, audio, and video processing are integrated during training rather than patched in afterward.

Why does the architecture distinction matter? A fine-tuned model is bounded by the inductive biases of its base checkpoint. A retrained model can develop entirely different internal representations - and you can see that in the long-context numbers. On MRCR v2 at 1M tokens, a retrieval benchmark designed to stress long-context fidelity, GPT-5.5 scores 74.0% compared to GPT-5.4's 36.6%. That isn't gradual. It's the kind of improvement that changes what you can actually do with a model: suddenly, 1M-token sessions become genuinely useful rather than nominally supported.
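Re-deriving the ratio from the two published MRCR v2 scores makes the size of the jump concrete. This only restates numbers already quoted above; it adds no new measurement.

```python
# MRCR v2 at 1M tokens, percent, as quoted in this review.
gpt54_mrcr = 36.6  # GPT-5.4
gpt55_mrcr = 74.0  # GPT-5.5

ratio = gpt55_mrcr / gpt54_mrcr                               # times higher
points = gpt55_mrcr - gpt54_mrcr                              # absolute gain, percentage points
relative_gain = (gpt55_mrcr - gpt54_mrcr) / gpt54_mrcr * 100  # relative gain, percent

print(f"{ratio:.2f}x, +{points:.1f} points, +{relative_gain:.0f}% relative")
# 2.02x, +37.4 points, +102% relative
```

In other words, the 1M-token score slightly more than doubles rather than "nearly" doubling.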

Greg Brockman described the result as "a big step towards more agentic and intuitive computing." That framing is vendor copy, but the benchmark numbers behind it aren't invented.

What GPT-5.5 Optimizes For

OpenAI didn't publish MMLU-Pro or GPQA Diamond scores. They published Terminal-Bench, Expert-SWE, GDPval, OSWorld, GeneBench, BixBench, and Tau2-bench Telecom. The signal is intentional: GPT-5.5 is built around tasks with real-world structure - multi-step, tool-dependent, environment-requiring - not academic multiple-choice. Whether this framing reflects genuine capability or a strategic choice to avoid weak academic scores is a question worth keeping open. The agentic benchmarks are harder to game than MMLU, but they're also newer and less independently reproduced.

Agentic Coding: The Clearest Win

The 82.7% Terminal-Bench 2.0 score is GPT-5.5's loudest number, and it holds up under scrutiny. Terminal-Bench measures complex command-line workflows that require planning, iteration, and tool coordination - the kind of work where a model must decide what to run, interpret results, and adjust course without human input. GPT-5.5 leads the field by a wide margin here, beating Claude Opus 4.7 by roughly 13 points.

Independent testing from CodeRabbit adds texture. On a curated code review benchmark, GPT-5.5 found expected issues at a 79.2% rate against a 58.3% baseline. On a large-scale real-world review set - a much harder test - it reached 65.0% versus a 55.0% baseline. Comment volume on the large set rose by about 29% (722 vs 558 comments), but precision also improved, from 11.6% to 13.2%. For code review, that precision gain matters more than raw volume.
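One way to read "more comments, higher precision" together is to estimate how many on-target comments each run produced. The sketch below assumes CodeRabbit's precision means useful comments divided by total comments posted; if their definition differs, the derived counts do not hold.

```python
# Large-set figures quoted from CodeRabbit above.
# ASSUMPTION: precision = useful comments / total comments posted.
runs = {
    "baseline": {"comments": 558, "precision": 0.116},
    "GPT-5.5": {"comments": 722, "precision": 0.132},
}

for name, r in runs.items():
    useful = r["comments"] * r["precision"]  # implied number of on-target comments
    print(f"{name}: ~{useful:.0f} useful of {r['comments']} comments")

volume_growth = 722 / 558 - 1  # relative increase in total comments
print(f"comment volume: +{volume_growth:.0%}")
# baseline: ~65 useful of 558 comments
# GPT-5.5: ~95 useful of 722 comments
# comment volume: +29%
```

Under that assumption, the implied count of useful comments grows faster (roughly 65 to 95) than total volume, which is why the extra comments read as signal rather than noise.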

GPT-5.5 doesn't just write more code. It understands what shouldn't change.

Hands-on tests by developers working with Codex describe a model that's "quicker, leaner, and more direct" than its predecessors - shorter responses, a bias toward minimal targeted changes rather than broad rewrites, and better behavior on scoped tasks like bug fixes, API adjustments, and security-sensitive refactors. Those observations align with what the benchmark numbers suggest: the model is better at distinguishing signal from noise in a codebase and acting on the right scope.

The Expert-SWE score of 73.1% and SWE-Bench Pro score of 58.6% are strong in absolute terms. On SWE-Bench Pro, however, Claude Opus 4.7 leads at 64.3% - a gap that reflects a real difference in how the two models handle broad architectural reasoning across large repositories. GPT-5.5 is ahead on focused, scoped coding tasks. Opus 4.7 is ahead when the task requires understanding how a change spreads across a codebase. Both claims are defensible.

Token efficiency is the part of the coding story that gets less attention than it deserves. OpenAI's own data shows GPT-5.5 using 40% fewer output tokens than GPT-5.4 on equivalent Terminal-Bench tasks. For long agentic coding runs - where output tokens compound across many iterations - this largely offsets the doubled per-token price. For short discrete prompts, it doesn't. See the coding benchmarks leaderboard for how these scores compare across the full field.
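A back-of-envelope check of that offset, covering output tokens only: write C_out for output spend per task and r for the fractional reduction in output tokens, and use the 2x output price and 40% reduction quoted above. Input and cached-input tokens are deliberately left out, so this is not a full per-task cost.

```latex
\frac{C_{\text{out}}^{5.5}}{C_{\text{out}}^{5.4}}
  = \frac{30}{15} \times (1 - 0.40)
  = 2 \times 0.6
  = 1.2,
\qquad
2\,(1 - r) = 1 \;\Rightarrow\; r = 0.5
```

On output alone, the per-task premium shrinks from 100% to about 20%. Full parity would need output tokens to fall by half, which is why the offset is large but partial.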

Computer Use and Long Context

OpenAI's GPT-5.5 runs on NVIDIA GB200 and GB300 NVL72 systems - the first time a GPT model was trained from scratch on rack-scale AI infrastructure.

Source: blogs.nvidia.com

The 78.7% OSWorld-Verified score puts GPT-5.5 narrowly ahead of Claude Opus 4.7 (78.0%) on autonomous desktop navigation, a margin small enough to be essentially a coin flip. The trajectory is more interesting: GPT-5.4 scored in the mid-70s and GPT-5.2 scored 47.3%. This area has improved faster than any other capability in the GPT-5 family, and GPT-5.5 extends that trend.

Codex gained meaningful new features with this release. The model can now operate an in-app browser for local development servers and file-backed pages: click through rendered UI, reproduce visual bugs, capture screenshots, and iterate on what it sees. This isn't just screenshot interpretation; the model actively runs its own test environment as part of a debugging loop. In one OpenAI demo, a math professor built an algebraic geometry app from a single prompt in 11 minutes using Codex with GPT-5.5. That demo is curated, but it's not implausible given the benchmark profile.

The context window is 1M tokens in the Chat API, and 400K in Codex specifically. That Codex cap is lower than GPT-5.4's Codex context window - a regression for very long sessions that deserves more attention than it's gotten in the coverage so far. Codex also gets a Fast mode option: 1.5x faster token generation at 2.5x the cost, useful for interactive sessions where latency matters more than budget.
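To see what "1.5x faster at 2.5x the cost" means for a single session, here is a minimal sketch. The session length and baseline cost are hypothetical, and it assumes the whole session is token generation and that the 2.5x multiplier applies to the session's entire spend. Time spent running tools or tests would not shrink, so real savings would be smaller.

```python
def fast_mode_tradeoff(base_minutes: float, base_cost: float,
                       speedup: float = 1.5, cost_multiplier: float = 2.5):
    """Compare a standard session with Fast mode (hypothetical helper).

    Assumes all session time is token generation, so the whole duration
    scales by 1/speedup, and the whole cost scales by cost_multiplier.
    """
    fast_minutes = base_minutes / speedup
    fast_cost = base_cost * cost_multiplier
    minutes_saved = base_minutes - fast_minutes
    extra_cost_per_minute = (fast_cost - base_cost) / minutes_saved
    return fast_minutes, fast_cost, extra_cost_per_minute

# Hypothetical session: 30 minutes of generation costing $4.00 in standard mode.
minutes, cost, per_minute = fast_mode_tradeoff(30, 4.0)
print(f"{minutes:.0f} min, ${cost:.2f}, ${per_minute:.2f} per minute saved")
# 20 min, $10.00, $0.60 per minute saved
```

Whatever the baseline, Fast mode under these assumptions cuts generation time by one third while multiplying spend by 2.5. That is why it makes sense mainly when a person is waiting on the output.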

Knowledge Work and Scientific Research

GDPval is an OpenAI-commissioned benchmark that tests model performance across 44 occupations in the nine industries that contribute most to U.S. GDP, including finance, healthcare, law, and engineering. GPT-5.5 scores 84.9%, meaning it performed at or above the level of human professionals on roughly 85% of the tasks. GPT-5.4 scored 83.0%, Claude Opus 4.7 scored 80.3%, and Gemini 3.1 Pro scored 67.3%.

That's a self-reported benchmark from a company incentivized to show large gains, so the usual caveats apply. In OpenAI's press materials, Bank of New York CIO Leigh-Ann Russell praises the model's "really impressive hallucination resistance" alongside the quality gains. That is a specific claim worth tracking as independent evaluations arrive, and whether the hallucination reduction holds outside benchmark conditions is the most important open question for enterprise users.

Scientific research is where the numbers get both exciting and genuinely tentative. GeneBench measures multi-stage data analysis pipelines in genetics, where models must reason about ambiguous or error-prone experimental data. GPT-5.5 scores 25.0% versus GPT-5.4's 19.0%, a relative improvement of roughly 32%. BixBench, covering real-world bioinformatics, comes in at 80.5%. Neither benchmark is solved, and GeneBench is far from it. But the improvement arc is real, and it suggests GPT-5.5 is meaningfully more useful as a research collaborator in life sciences than its predecessors.

GPT-5.5 leads Claude Opus 4.7 on Terminal-Bench 2.0 by roughly 13 points, but trails on SWE-Bench Pro - the two models are optimized for different coding workload types.

Source: mindwiredai.com

GPT-5.5 vs Claude Opus 4.7

Across 10 shared benchmarks, the split is fairly clear. GPT-5.5 leads on Terminal-Bench 2.0, OSWorld-Verified, FrontierMath (51.7% vs 43.8%), and BrowseComp. Claude Opus 4.7 leads on SWE-Bench Pro (64.3% vs 58.6%), GPQA, HLE, MCP Atlas, and FinanceAgent v1.1. Pricing is nearly matched at $5 per million input tokens for both, with GPT-5.5 output slightly more expensive ($30/M vs $25/M for Opus 4.7). Both ship 1M-token context windows.

The practical split: if you're running long-horizon agentic coding workflows in Codex or terminal environments, GPT-5.5 is the better choice. If you're building on top of an MCP ecosystem, working on large-codebase architectural tasks, or need peak accuracy on GPQA-style reasoning, Opus 4.7 holds the edge. These aren't competing for the same workload. See the overall LLM rankings for April 2026 and the SWE-Bench coding agent leaderboard for full cross-model comparisons.

Pricing and Availability

GPT-5.5 launched on April 23, 2026 directly into ChatGPT (Plus, Pro, Business, Enterprise) and Codex, with no waitlist. The API followed on April 24. GitHub Copilot (Pro+, Business, Enterprise) got access the same day, at a 7.5x premium request multiplier under promotional pricing.
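GitHub Copilot meters model usage in premium requests, and a model's multiplier scales how many each interaction consumes. This sketch shows what a 7.5x multiplier does to a monthly allowance. The allowance value is an illustrative assumption, not a quoted plan limit, so check GitHub's current documentation for your tier.

```python
import math

# 7.5x is the promotional GPT-5.5 multiplier quoted above.
GPT55_MULTIPLIER = 7.5

def gpt55_interactions(monthly_allowance: int, multiplier: float = GPT55_MULTIPLIER) -> int:
    """Whole GPT-5.5 interactions that fit in a premium-request allowance,
    assuming each interaction consumes `multiplier` premium requests
    (hypothetical helper)."""
    return math.floor(monthly_allowance / multiplier)

# Hypothetical allowance, for illustration only.
illustrative_allowance = 300
print(gpt55_interactions(illustrative_allowance))                  # 40
print(gpt55_interactions(illustrative_allowance, multiplier=1.0))  # 300 at a 1x model
```

At 7.5x, an allowance covers less than one seventh as many GPT-5.5 interactions as it would for a 1x model.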

Per-token pricing is exactly double GPT-5.4's:

| Model | Input | Cached Input | Output |
|---|---|---|---|
| GPT-5.5 | $5.00/M | $0.50/M | $30.00/M |
| GPT-5.5 Pro | $30.00/M | - | $180.00/M |
| GPT-5.4 (reference) | $2.50/M | $0.25/M | $15.00/M |

OpenAI's argument for net cost parity holds for agentic workloads: fewer tokens per completed task means the per-token premium largely washes out. For high-volume batch inference with short, fixed prompts - classification, extraction, summarization - the calculus doesn't work and GPT-5.4 remains the smarter choice unless you specifically need GPT-5.5's capabilities. The cost efficiency leaderboard will reflect independently measured per-task costs as third-party evaluations come in.
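The per-task arithmetic behind that argument can be sketched with the table's rates. This is a minimal model, assuming billing is simply tokens times the listed per-million rates. The token counts in the example are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens, from the pricing table above."""
    input: float
    cached_input: float
    output: float

GPT_5_4 = Pricing(input=2.50, cached_input=0.25, output=15.00)
GPT_5_5 = Pricing(input=5.00, cached_input=0.50, output=30.00)

def task_cost(p: Pricing, input_tokens: int, cached_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one task; cached_tokens are billed at the cached-input rate."""
    return (input_tokens * p.input
            + cached_tokens * p.cached_input
            + output_tokens * p.output) / 1_000_000

# Hypothetical short extraction prompt: identical token counts on both models.
short = dict(input_tokens=1_000, cached_tokens=0, output_tokens=200)
ratio = task_cost(GPT_5_5, **short) / task_cost(GPT_5_4, **short)
print(f"short prompt: {ratio:.2f}x")  # short prompt: 2.00x
```

Because every GPT-5.5 rate is exactly twice GPT-5.4's, a task with identical token counts always costs 2x. Parity therefore requires GPT-5.5's price-weighted token total per task (input, cached input, and output together) to be half of GPT-5.4's. For agentic runs, plug in token counts measured on both models rather than assuming the 40% output reduction alone gets there.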

Strengths and Weaknesses

Strengths:

- First genuine base retrain since GPT-4.5 - new architecture, not just a fine-tune

- Field-leading Terminal-Bench 2.0 score (82.7%) and OSWorld-Verified (78.7%)

- MRCR v2 long-context recall more than doubles GPT-5.4's: 74.0% vs 36.6% at 1M tokens

- 40% fewer output tokens on agentic tasks - largely offsets the higher per-token price on long runs

- Natively omnimodal, trained on GB200/GB300 hardware

- Codex browser use supports real rendered-UI testing within agent loops

- Immediate ChatGPT rollout for Plus through Enterprise, with API access following one day later

Weaknesses:

- Loses to Claude Opus 4.7 on SWE-Bench Pro (58.6% vs 64.3%) - not the top coding model for every workload

- Per-token cost is 2x GPT-5.4 - short-prompt workloads don't benefit from efficiency gains

- Codex context window (400K) is lower than GPT-5.4 Codex - a step back for very long sessions

- No MMLU-Pro, GPQA Diamond, or Chatbot Arena scores at launch - independent academic benchmarking is pending

- GDPval is an OpenAI-commissioned benchmark and hasn't been independently reproduced

- Parameter count undisclosed

Verdict

GPT-5.5 is the best model for agentic coding and computer use right now, and the first GPT-5 series release that represents a genuine architectural step forward rather than fine-tuned increments. The Terminal-Bench score is real, the long-context improvement is dramatic, and the token efficiency gains are large enough to make the pricing defensible for the workloads the model was built for.

It isn't the best model at everything. Claude Opus 4.7 leads on SWE-Bench Pro and broad-codebase architectural reasoning. GPQA and MMLU benchmarks haven't been reported. The GDPval score comes from OpenAI's own evaluation framework.

For developers and enterprises running multi-step agentic workflows in Codex, this is the current state of the art. For everyone else, the choice depends more on specific workload fit than on any overall ranking. The computer use leaderboard will track OSWorld and related scores as independent evaluations publish.

Score: 9.0/10

Sources

- GPT-5.5 is here - OpenAI Developer Community

- OpenAI releases GPT-5.5, bringing company one step closer to an AI super app - TechCrunch

- OpenAI GPT-5.5 Benchmark Results - CodeRabbit

- GPT-5.5 is generally available for GitHub Copilot - GitHub Changelog

- OpenAI Releases GPT-5.5: A Fully Retrained Model - MarkTechPost

- OpenAI GPT-5.5 Coding Model: Codex Test - Uygar Duzgun

- OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark score - Interesting Engineering

- OpenAI's New GPT-5.5 Powers Codex on NVIDIA Infrastructure - NVIDIA Blog

- GPT-5.5: Pricing, Benchmarks and Performance - LLM Stats

- GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance Compared - MindStudio