Google ADK Review: The Agent Framework for Gemini

A hands-on review of Google's Agent Development Kit - the open-source framework for building multi-agent AI systems, with a look at its strengths, limitations, and how it stacks up against LangGraph and CrewAI.

Google's Agent Development Kit has had a quiet twelve months. Announced at Google Cloud NEXT in April 2025, it was initially received with some skepticism - another framework from a large vendor, optimized for that vendor's models, locked into that vendor's cloud. A year later, with 18.7k GitHub stars, a stable Python v1.28.1, production-ready Go and Java ports, and a growing ecosystem of third-party integrations, the picture is more complicated. ADK has real strengths and real gaps, and neither impression - dismissal nor uncritical enthusiasm - holds up.

TL;DR

- 7.6/10 - ADK is the right choice if you're already in the Google Cloud ecosystem and need production-grade multi-agent orchestration

- Strongest multi-agent architecture among major frameworks, with genuine multimodal support through Gemini Live API

- Strict file/folder conventions, a limit of one built-in tool per agent, and weak unit testing support for sub-agents remain frustrating

- Built for teams launching on Vertex AI; less compelling for infrastructure-agnostic projects

What ADK Actually Is

ADK is neither a no-code platform nor a thin wrapper over the Gemini API. It's a code-first toolkit, originally for Python and now also available for Go, TypeScript, and Java, for building stateful, multi-agent systems. The core abstraction is the Agent - a unit that receives events, calls tools, and emits outputs. Agents compose into hierarchies: a parent LlmAgent can delegate to child agents via sub_agents, with the runtime managing event routing between them.

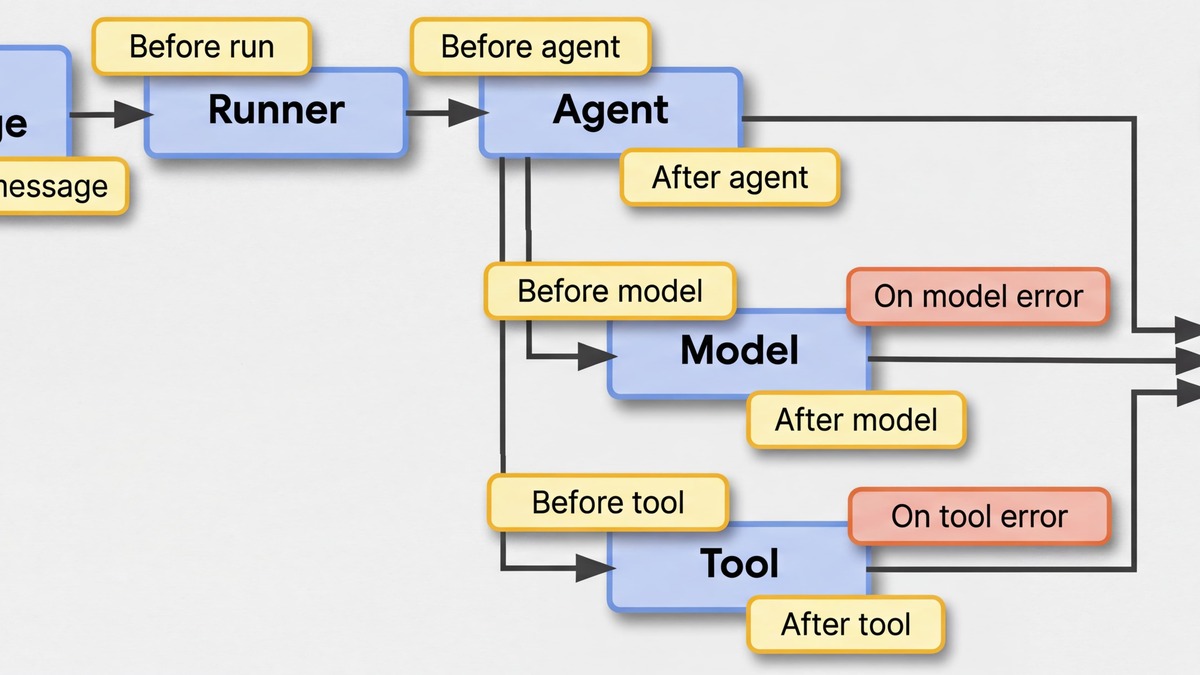

The event-driven model is the most distinctive part of the design. Every interaction - user message, tool call, LLM response - flows through a shared event bus managed by a Runner. This gives you clean separation between orchestration logic and agent behavior, and makes the execution trace inspectable at every step.
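To make the event-driven idea concrete, here is a toy sketch in plain Python. It is not ADK's implementation: the `Event` dataclass, the `ToyRunner` class, and the `echo_agent` function are hypothetical names invented for illustration. It only shows the general shape, in which an agent yields events and a runner records every one of them, which is what makes a trace inspectable.

```python
from dataclasses import dataclass
from typing import Callable, Iterator, List


@dataclass
class Event:
    """One step in a run: who produced it and what it carries."""
    author: str   # "user", an agent name, or a tool name
    kind: str     # "message", "tool_call", or "tool_result"
    content: str


# A toy agent is a function that reacts to a user message by yielding events.
Agent = Callable[[str], Iterator[Event]]


def echo_agent(user_text: str) -> Iterator[Event]:
    """Hypothetical agent: calls a fake 'upper' tool, then answers."""
    yield Event("echo_agent", "tool_call", f"upper({user_text!r})")
    result = user_text.upper()
    yield Event("upper", "tool_result", result)
    yield Event("echo_agent", "message", f"You said: {result}")


class ToyRunner:
    """Drives an agent and appends every event to a shared trace."""

    def __init__(self, agent: Agent) -> None:
        self.agent = agent
        self.trace: List[Event] = []

    def run(self, user_text: str) -> str:
        self.trace.append(Event("user", "message", user_text))
        for event in self.agent(user_text):
            self.trace.append(event)  # the runner sees every step
        return self.trace[-1].content


runner = ToyRunner(echo_agent)
reply = runner.run("hello")
kinds = [event.kind for event in runner.trace]
```

Because the agent never touches the trace directly, orchestration concerns such as logging or replay stay in the runner, which is the separation the paragraph above describes.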

Four agent types cover most architectures: LlmAgent for LLM-driven reasoning, SequentialAgent for deterministic pipelines, ParallelAgent for concurrent tasks, and LoopAgent for iterative workflows. Hierarchical nesting then allows arbitrary composition of these types. Compared to frameworks that force you to choose between rigid pipelines and fully dynamic routing, ADK's mix-and-match approach is genuinely useful.
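The difference between the sequential, parallel, and loop workflow styles is easiest to see in a toy model. The sketch below uses plain asyncio rather than ADK; `sequential`, `parallel`, and `loop` are hypothetical helper names, and each "agent" is just an async function over a shared state dict.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

State = Dict[str, int]
Step = Callable[[State], Awaitable[None]]


def sequential(steps: List[Step]) -> Step:
    """Run steps one after another, in the order given."""
    async def run(state: State) -> None:
        for step in steps:
            await step(state)
    return run


def parallel(steps: List[Step]) -> Step:
    """Run steps concurrently and wait for all of them."""
    async def run(state: State) -> None:
        await asyncio.gather(*(step(state) for step in steps))
    return run


def loop(step: Step, max_iterations: int, done: Callable[[State], bool]) -> Step:
    """Repeat a step until `done` is true or the iteration cap is hit."""
    async def run(state: State) -> None:
        for _ in range(max_iterations):
            if done(state):
                break
            await step(state)
    return run


async def fetch_a(state: State) -> None:
    state["a"] = 2


async def fetch_b(state: State) -> None:
    state["b"] = 3


async def combine(state: State) -> None:
    state["total"] = state["a"] * state["b"]


async def increment(state: State) -> None:
    state["total"] += 1


# Nesting mirrors hierarchical composition: a parallel fan-out inside a
# pipeline, followed by a bounded refinement loop.
pipeline = sequential([
    parallel([fetch_a, fetch_b]),
    combine,
    loop(increment, max_iterations=10, done=lambda s: s["total"] >= 8),
])

state: State = {}
asyncio.run(pipeline(state))
```

The composed `pipeline` is itself a `Step`, so it could be nested inside another combinator, which is the property that makes mix-and-match composition possible.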

Google announced updates to ADK, A2A, and Agent Engine at Google I/O 2025, positioning the framework as its primary agent development offering.

Source: developers.googleblog.com

The Multi-Agent Story

This is where ADK earns its score. Building a multi-agent system in ADK is straightforward: you define specialized agents, wire them together through sub_agents, and the parent agent handles routing. The framework supports three delegation patterns - sequential pipelines for predictable order, parallel fans for concurrent subtasks, and LLM-driven dynamic routing where the parent decides which child to call based on the conversation.

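```python
from google.adk.agents import LlmAgent, SequentialAgent, ParallelAgent

# google_search_tool and code_execution_tool are placeholders for tool
# objects defined elsewhere; they are not shown in this excerpt.

# Specialist that gathers and summarizes information
researcher = LlmAgent(
    name="researcher",
    model="gemini-2.5-flash",
    description="Searches the web and summarizes findings",
    tools=[google_search_tool],
)

# Specialist that turns data into structured output
analyst = LlmAgent(
    name="analyst",
    model="gemini-2.5-pro",
    description="Analyzes data and produces structured output",
    tools=[code_execution_tool],
)

# Parent agent: the LLM reads the children's descriptions to decide routing
coordinator = LlmAgent(
    name="coordinator",
    model="gemini-2.5-pro",
    description="Routes requests to researcher and analyst",
    sub_agents=[researcher, analyst],
)
```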

The runtime handles the event loop. You don't manage thread pools, message queues, or state synchronization manually. For teams building moderately complex agentic workflows - say, a research pipeline with 3-6 specialized agents - the abstraction level is close to right.

ADK also has native A2A (Agent2Agent) protocol support, which lets your agents communicate with agents built in other frameworks. The protocol, announced with ADK and now on version 0.2, uses standardized "Agent Cards" for capability discovery and JSON-RPC for message passing. Microsoft Azure AI Foundry, SAP Joule, Box, Auth0, and Zoom have all added A2A support. This matters less today than it will in twelve months, but ADK being an early adopter is a genuine differentiator if cross-organization agent networks become real.
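As a rough illustration of what "Agent Cards plus JSON-RPC" means on the wire, the sketch below builds both pieces as plain Python dicts. Treat every field name as an assumption based on my reading of the public A2A specification (including the `message/send` method name and the `kind` key on message parts), and check them against the spec version you target. The agent name, URL, and skill are made up, and `has_skill_tag` and `build_send_request` are hypothetical helpers.

```python
import json
import uuid
from typing import Any, Dict

# Illustrative Agent Card. Field names are assumptions based on the public
# A2A spec; the agent name, URL, and skill are invented for this example.
agent_card: Dict[str, Any] = {
    "name": "research-agent",
    "description": "Searches the web and summarizes findings",
    "url": "https://agents.example.com/research",
    "version": "1.0.0",
    "capabilities": {"streaming": False},
    "skills": [
        {
            "id": "web-research",
            "name": "Web research",
            "description": "Answer questions with cited sources",
            "tags": ["search", "summarize"],
        }
    ],
}


def has_skill_tag(card: Dict[str, Any], tag: str) -> bool:
    """Capability discovery: does any advertised skill carry this tag?"""
    return any(tag in skill.get("tags", []) for skill in card.get("skills", []))


def build_send_request(text: str, request_id: int) -> Dict[str, Any]:
    """Wrap a user message in a JSON-RPC 2.0 envelope."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "message/send",  # assumed method name; verify per spec version
        "params": {
            "message": {
                "role": "user",
                "messageId": str(uuid.uuid4()),
                "parts": [{"kind": "text", "text": text}],
            }
        },
    }


request = build_send_request("Summarize recent A2A adoption", request_id=1)
wire_payload = json.dumps(request)  # what a client would POST to agent_card["url"]
```

A client would typically fetch the card first, check that a suitable skill is advertised, and only then send the request.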

Tooling and Integrations

ADK ships with a pre-built Google Search tool, a Vertex AI Search tool, and a code execution sandbox. Custom tools are Python functions with automatic Pydantic schema generation. MCP (Model Context Protocol) tools work the same way as custom tools - a single McpToolset import connects your agent to any MCP server. See our guide to MCP for background on the protocol.
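The idea of turning a plain function into a tool description can be sketched with the standard library alone. This is not how ADK does it (the text above says it relies on Pydantic); `describe_tool` is a hypothetical helper that derives a JSON-Schema-like description from type hints and the docstring, and `get_weather` is a made-up tool.

```python
import inspect
from typing import Any, Callable, Dict, get_type_hints

# Minimal mapping from Python types to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func: Callable[..., Any]) -> Dict[str, Any]:
    """Build a schema-like description of a function for an LLM to read."""
    hints = get_type_hints(func)
    properties: Dict[str, Any] = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unknown types fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def get_weather(city: str, days: int) -> str:
    """Return a short forecast for a city."""
    return f"{days}-day forecast for {city}: sunny"


schema = describe_tool(get_weather)
```

The model only ever sees the generated description, which is why precise type hints and docstrings matter more for tool functions than for ordinary code.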

The integrations ecosystem expanded markedly in early 2026. Google announced partnerships with over 30 platforms: GitHub, GitLab, Asana, Linear, Notion, MongoDB, Pinecone, Stripe, PayPal, ElevenLabs, and Hugging Face, among others. These are plug-in integrations, not first-party tools, but they follow the same McpToolset pattern and add maybe 10 lines of code to a project.

One hard limitation affects every ADK user: you can only attach one built-in tool (like Google Search) per agent, and built-in tools can't be combined with custom function tools in the same agent. This was a significant constraint in v1.15.0 and earlier. Version 1.16.0 introduced a workaround, but the underlying restriction remains architectural. Teams with complex tool requirements end up creating wrapper agents - a SequentialAgent that calls a search-only agent then hands off to a function-tool agent - which works but adds unnecessary indirection.

Developer Experience

The web UI (adk web) is genuinely good. It provides a chat interface for testing agents, real-time event inspection, execution tracing with timestamps, and an evaluation dashboard. The Visual Builder offers drag-and-drop agent construction for simpler workflows, though most production users will stay in code.

The CLI is clean: adk run, adk eval, adk deploy cover local development, benchmarking, and deployment. The local feedback loop - edit code, run adk run, inspect traces in the web UI - is fast.

Where the experience breaks down is in conventions. ADK requires strict folder and file naming: your agent must live in agent.py, your package must have __init__.py, and if the names don't match, the resulting error messages don't explain why. This "convention over configuration" approach is defensible, but the error messages need work. Developers new to the framework consistently report spending time debugging file structure rather than agent logic.
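One way to shorten that debugging loop is a small pre-flight check run before launching the agent. The sketch below is not part of ADK; `check_agent_package` is a hypothetical helper, and it checks only the two files named above.

```python
from pathlib import Path
from typing import List

# The two files the conventions described above require in an agent package.
REQUIRED_FILES = ("__init__.py", "agent.py")


def check_agent_package(package_dir: Path) -> List[str]:
    """Return human-readable problems; an empty list means the layout looks fine."""
    if not package_dir.is_dir():
        return [f"{package_dir} is not a directory"]
    problems = []
    for filename in REQUIRED_FILES:
        if not (package_dir / filename).is_file():
            problems.append(f"missing {filename} in {package_dir.name}/")
    return problems
```

Calling `check_agent_package(Path("my_agent"))` before `adk run` would surface a missing file with an explicit message instead of an opaque loader error.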

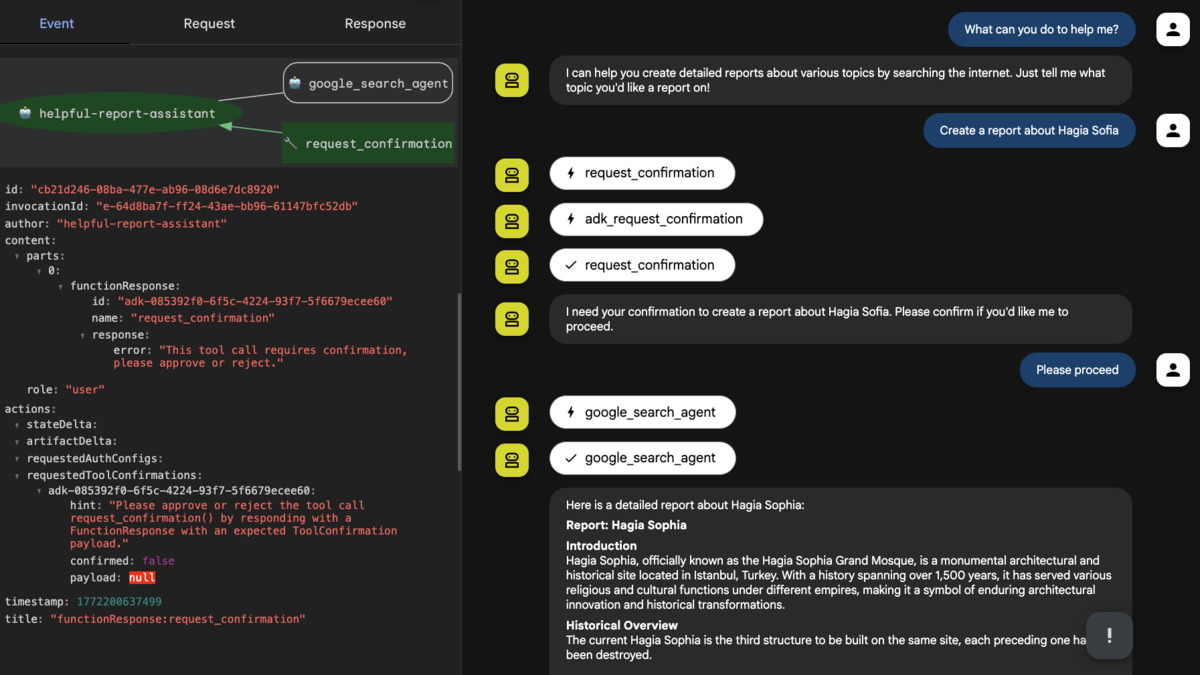

ADK's Human-in-the-Loop (HITL) support lets agents pause execution and wait for user approval before sensitive tool calls, shown here in the Java 1.0.0 release.

Source: developers.googleblog.com

Unit testing is a notable miss. Sub-agents can't be tested independently because they're not root agents in the current architecture. You can evaluate top-level agents against multi-turn datasets using ADK's built-in evaluation harness, and that tooling is solid. But the inability to unit test sub-agents in isolation creates friction for teams with standard software engineering practices. It's the kind of gap that matters more as projects scale.

Model Support

ADK is optimized for Gemini - specifically Gemini 2.5 Pro and Gemini 2.5 Flash for most workflows. The multimodal capabilities are the clearest advantage here: Gemini's native image, audio, and video processing works without any adapter layer, enabling visual inspection agents, voice-based customer support flows, and document understanding pipelines that would require significant integration work in other frameworks. The Gemini Live API Toolkit adds bidirectional audio and video streaming for real-time applications.

Model support beyond Gemini comes through LiteLLM, which connects to Claude, Llama, Mistral, and over 100 other providers. Gemma models via Ollama or vLLM work for local deployments. The LiteLLM integration is reliable and well-documented, though teams that primarily use non-Gemini models should consider whether framework-level Gemini optimization is a benefit or a source of friction.

Deployment and Pricing

Local development is free. The framework is Apache 2.0 licensed. Deployment options include Cloud Run (straightforward containerization), Google Kubernetes Engine, and Vertex AI Agent Engine (Google's managed runtime for agents).

Agent Engine pricing is usage-based: $0.00994 per vCPU-hour and $0.0105 per GiB-hour, billed per second. Idle agents aren't charged. For context, a lightweight agent handling moderate traffic runs well under $10/month on Agent Engine. The managed runtime also provides session management, memory bank persistence, and monitoring dashboards - capabilities you'd otherwise build yourself.
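A quick back-of-the-envelope calculation shows how those rates translate into a monthly bill. The sketch below uses the two rates exactly as quoted above; the resource sizes and active-hour figures are illustrative assumptions, and `monthly_cost` is a hypothetical helper.

```python
VCPU_RATE_PER_HOUR = 0.00994       # USD per vCPU-hour, as quoted above
MEMORY_RATE_PER_GIB_HOUR = 0.0105  # USD per GiB-hour, as quoted above


def monthly_cost(vcpus: float, memory_gib: float, active_hours: float) -> float:
    """Estimate compute cost in USD for the hours an agent is actually busy."""
    hourly = vcpus * VCPU_RATE_PER_HOUR + memory_gib * MEMORY_RATE_PER_GIB_HOUR
    return round(hourly * active_hours, 2)


# Assumed workload: 1 vCPU and 1 GiB, busy for 100 hours in the month.
light = monthly_cost(vcpus=1, memory_gib=1, active_hours=100)

# Same size, but busy around the clock (about 730 hours in a month).
always_on = monthly_cost(vcpus=1, memory_gib=1, active_hours=730)
```

Under these assumptions the light workload comes to about $2 and the always-busy one to about $15, so the "under $10" figure depends on the agent sitting idle for much of the month.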

The full Google Cloud dependency is the trade-off. If your organization already uses GCP, ADK + Agent Engine is a natural fit. If you run on AWS or Azure, you're either accepting cross-cloud dependencies or containerizing everything and ignoring Agent Engine - which removes most of the managed deployment value.

ADK's plugin architecture, introduced in Java 1.0.0, centralizes cross-cutting concerns like logging, guardrails, and execution control across agent hierarchies.

Source: developers.googleblog.com

How It Compares

The agent framework field in 2026 has four serious contenders: ADK, LangGraph, CrewAI, and OpenAI Agents SDK. Our agent frameworks comparison guide covers the full picture, but the relevant comparisons here are:

| | ADK | LangGraph | CrewAI |
|---|---|---|---|
| Multi-agent patterns | Strong | Strong | Medium |
| Multimodal support | Native | Via integrations | Via integrations |
| Production readiness | Medium-High | High | Medium |
| Learning curve | Medium | High | Low |
| Vendor lock-in | High (GCP) | Medium (LangSmith) | Low |
| Unit testing | Weak | Strong | Medium |
| Community | Growing | Established | Large |

LangGraph has the strongest story for complex, stateful workflows with custom persistence and checkpointing. CrewAI remains the fastest path from idea to working multi-agent prototype. ADK wins on multimodal capability, A2A interoperability, and Google Cloud integration depth.

If you're building your first AI agent and want to understand the concepts before committing to a framework, ADK's documentation is unusually good for an early-stage project. The quickstart, tutorials, and codelabs are consistently maintained and updated with each release.

Strengths

- Hierarchical multi-agent composition is clean and works well in practice

- Native multimodal support through Gemini - no adapter layers, no workarounds

- A2A protocol support positions ADK well for cross-framework agent networks

- Built-in evaluation harness with multi-turn dataset support

- Web UI for development and debugging is genuinely useful

- 30+ third-party integrations via plugin system

- Apache 2.0 license, active release cadence (v1.28.1 as of April 2, 2026)

Weaknesses

- Limit of one built-in tool per agent, only partially addressed by the v1.16.0 workaround

- Sub-agents can't be unit tested independently

- Strict naming conventions with unhelpful error messages when violated

- Meaningful deployment value tied to Google Cloud / Vertex AI

- Python default parameter values don't work for tool functions - input schema must match exactly

- Youngest of the major frameworks; LangGraph's checkpointing and persistence story is more mature

Verdict

ADK is a 7.6/10 framework. That score reflects genuine capability with real frustration points. The multi-agent architecture and multimodal support are the best available. The developer experience - especially around conventions, error messages, and unit testing - needs another 6-12 months of work.

If your team is in the Google Cloud ecosystem and you need multi-agent orchestration with multimodal capabilities, ADK is the right choice. If you're building infrastructure-agnostic agents or need mature checkpointing and sub-agent testability, LangGraph is still ahead. If you need the fastest prototype-to-demo pipeline, CrewAI. ADK is a serious option, not a default.

For teams already familiar with what AI agents are and evaluating frameworks, ADK's 18.7k stars and Google's sustained investment make it worth a week-long evaluation against your specific use case. The Java and Go ports reaching 1.0.0 in early 2026 signal that Google intends to maintain this long-term - a consideration that matters more as production deployments accumulate.

Sources

- Agent Development Kit - Official Documentation

- Google ADK Python - GitHub Repository (v1.28.1)

- Agent Development Kit: Making it easy to build multi-agent applications - Google Developers Blog

- What's new with Agents: ADK, Agent Engine, and A2A Enhancements - Google Developers Blog

- Announcing ADK for Java 1.0.0 - Google Developers Blog

- Announcing the Agent2Agent Protocol (A2A) - Google Developers Blog

- Supercharge your AI agents: The New ADK Integrations Ecosystem - Google Developers Blog

- Google ADK vs LangGraph: Which One Develops and Deploys AI Agents Better - ZenML

- Google Releases Open-Source Agent Development Kit for Multi-Agent AI Applications - InfoQ

- Vertex AI Agent Engine Pricing - Google Cloud