DeerFlow 2.0 Review: ByteDance's Open SuperAgent

ByteDance's DeerFlow 2.0 is a powerful open-source agent harness that executes long-horizon tasks inside Docker sandboxes - impressive engineering, but not a turnkey solution.

When ByteDance published DeerFlow 2.0 on February 27, 2026, the repository hit 35,300 GitHub stars within 24 hours and climbed to number one on GitHub Trending by the following morning. That kind of reception demands scrutiny. Is DeerFlow truly a step change in what open-source agent infrastructure can do, or is it another "superagent" demo dressed up with superlatives?

TL;DR

- 8.1/10 - the most architecturally serious open-source agent harness available today, but demands real engineering investment to deploy

- Genuinely executes long-horizon tasks in isolated Docker sandboxes - writes and runs code, produces research reports, builds web pages, all autonomously

- Setup requires Python 3.12, Node 22, Docker, and comfort with YAML and CLI; non-technical users will struggle

- Best for engineers and technical teams who want data sovereignty and full control over their agent stack; skip it if you need a polished no-code product

The answer, after a week of testing, is that DeerFlow 2.0 is the real thing - at the infrastructure level. ByteDance hasn't shipped a product. It has shipped the skeleton of one: a tightly engineered execution harness that, given the right operator, can do things that commercial agent platforms charge monthly subscriptions for. The tradeoffs are clear, and they're worth understanding before you invest the setup time.

What DeerFlow 2.0 Actually Is

The name is an acronym: Deep Exploration and Efficient Research Flow. Version 2.0 shares no code with the original DeerFlow (May 2025), which was a modular multi-agent research framework built for academic and structured research pipelines. The v2 rewrite abandons that design completely. Where v1 was a library you assembled, v2 is a harness you deploy.

The DeerFlow 2.0 web UI running locally. The dark interface accepts natural language tasks and streams execution progress in real time.

Source: apidog.com

The core insight is straightforward: most AI agent frameworks stop at the instruction layer. They send prompts to a model and relay the model's text output. DeerFlow executes in a real environment. When you ask it to analyze a dataset, it doesn't describe how to analyze the dataset - it spins up a Python interpreter inside a Docker container, installs the required libraries, runs the code, and hands back the chart. That's the key distinction.

Under the hood this runs on LangGraph 1.0 and LangChain (Python backend), with a Next.js frontend and a FastAPI gateway. All four services sit behind Nginx, accessible on a single port (2026 by default). The architecture enforces a strict boundary between the harness layer - the publishable agent framework - and the application layer that adds messaging integrations and the web gateway. This isn't accidental design; a dedicated test file (test_harness_boundary.py) will fail the build if any application code imports back into the harness.
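To make the boundary rule concrete, here is a minimal sketch of how an import-boundary check of this kind could work. It is not the contents of test_harness_boundary.py; the package name `app` and both function names are assumptions chosen for illustration.

```python
import ast

# Hypothetical name for the application-layer package (an assumption,
# not taken from the DeerFlow repository).
APP_PACKAGE = "app"


def forbidden_imports(source: str, forbidden_root: str = APP_PACKAGE) -> list[str]:
    """Return every absolute import in `source` that lives under `forbidden_root`."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            # Match the package itself or any of its submodules,
            # but not unrelated packages such as "application".
            if name == forbidden_root or name.startswith(forbidden_root + "."):
                found.append(name)
    return found


def check_harness_sources(sources: dict[str, str]) -> dict[str, list[str]]:
    """Map each harness file name to the application imports it contains."""
    violations = {}
    for filename, source in sources.items():
        bad = forbidden_imports(source)
        if bad:
            violations[filename] = bad
    return violations
```

A test suite would feed every harness source file through `check_harness_sources` and assert that the result is empty, which is enough to break the build on a stray import.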

The Execution Model

Every task routes through a 14-step middleware pipeline before the language model sees it. The pipeline handles thread isolation, file context injection, sandbox acquisition, safety guardrails, error standardization, token management, task tracking, and more. It reads as over-engineered until you consider what happens when you run a five-step research task that calls three subagents and writes to disk: without that infrastructure, a single tool error cascades into an unrecoverable state. With it, errors are caught, logged, and surfaced cleanly.
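The value of that pipeline is easier to see in miniature. The sketch below is an assumption-laden toy, not DeerFlow's middleware: `TaskContext`, the three step functions, and `run_pipeline` are all hypothetical names. It shows only the general pattern of running ordered steps and converting any exception into a standardized error instead of letting it cascade.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TaskContext:
    """Hypothetical per-task state passed through every middleware step."""
    thread_id: str
    prompt: str
    notes: list = field(default_factory=list)
    error: Optional[str] = None


Middleware = Callable[[TaskContext], None]


def isolate_thread(ctx: TaskContext) -> None:
    ctx.notes.append(f"thread:{ctx.thread_id}")


def inject_files(ctx: TaskContext) -> None:
    ctx.notes.append("files-injected")


def failing_guardrail(ctx: TaskContext) -> None:
    raise ValueError("blocked by guardrail")


def run_pipeline(ctx: TaskContext, steps: list) -> TaskContext:
    """Run steps in order; on failure, record a uniform error and stop."""
    for step in steps:
        try:
            step(ctx)
        except Exception as exc:
            # Standardize the error so callers see one predictable shape.
            ctx.error = f"{step.__name__}: {exc}"
            break
    return ctx
```

With this shape, a failing step leaves the context in a known state: the caller can inspect `ctx.error` and surface it cleanly rather than unwinding a half-finished task.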

DeerFlow doesn't ask a model what it'd do. It asks a model what to do, then does it.

The agent hierarchy works like this: a Lead Agent receives your task and orchestrates execution. When subtasks require specialization, the Lead spawns Subagents via a task() tool call. Up to three subagents can run in parallel, each with a 15-minute timeout. Built-in subagent types include a general-purpose agent with full tool access and a bash specialist for command-line heavy work. The separation keeps context windows manageable and lets the system tackle truly long-horizon tasks without hitting token ceilings.
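The concurrency limits described above map onto a familiar asyncio pattern. This is a sketch under stated assumptions, not DeerFlow's scheduler: `run_subagents` and the `worker` callback are hypothetical, and only the two constants (three parallel subagents, a 15-minute timeout) come from the description above.

```python
import asyncio

MAX_PARALLEL_SUBAGENTS = 3           # limit described in this review
SUBAGENT_TIMEOUT_SECONDS = 15 * 60   # 15-minute timeout described in this review


async def run_subagents(tasks, worker,
                        max_parallel=MAX_PARALLEL_SUBAGENTS,
                        timeout=SUBAGENT_TIMEOUT_SECONDS):
    """Run `worker(task)` for each task, capped at `max_parallel` at a time.

    `worker` is a hypothetical async callable standing in for a subagent.
    Results come back in the same order as `tasks`.
    """
    semaphore = asyncio.Semaphore(max_parallel)

    async def run_one(task):
        async with semaphore:
            try:
                return await asyncio.wait_for(worker(task), timeout=timeout)
            except asyncio.TimeoutError:
                # Report the timeout as a result instead of crashing the lead.
                return f"timeout: {task}"

    return await asyncio.gather(*(run_one(task) for task in tasks))
```

The semaphore enforces the parallelism cap while `asyncio.wait_for` enforces the per-subagent deadline, so one stuck subagent costs at most one slot for one timeout window.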

Sandboxes are where DeerFlow earns serious credibility. The production configuration uses Docker or Kubernetes pods for fully isolated execution environments. Each conversation thread gets its own directory structure with workspace, uploads, and outputs folders. Inside the sandbox the agent has a persistent filesystem, bash terminal, Python runtime, MCP server access, and a browser interface. ByteDance calls it an "All-in-One Sandbox." That's marketing, but it's accurate marketing.
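As a rough illustration of the per-thread layout, the sketch below creates the three folders for a given thread. The folder names come from the description above; the `threads/<thread_id>` parent path and the `create_thread_dirs` helper are assumptions made for the example.

```python
from pathlib import Path

# Folder names as described in this review.
THREAD_SUBDIRS = ("workspace", "uploads", "outputs")


def create_thread_dirs(base_dir: Path, thread_id: str) -> dict:
    """Create the per-thread folders and return them keyed by name.

    The `threads/<thread_id>` parent layout is an assumption for illustration.
    """
    thread_root = base_dir / "threads" / thread_id
    paths = {}
    for name in THREAD_SUBDIRS:
        path = thread_root / name
        path.mkdir(parents=True, exist_ok=True)  # safe to call repeatedly
        paths[name] = path
    return paths
```

Keeping each thread under its own root means a sandbox can mount one directory per conversation without exposing files from any other thread.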

Skills and Tools

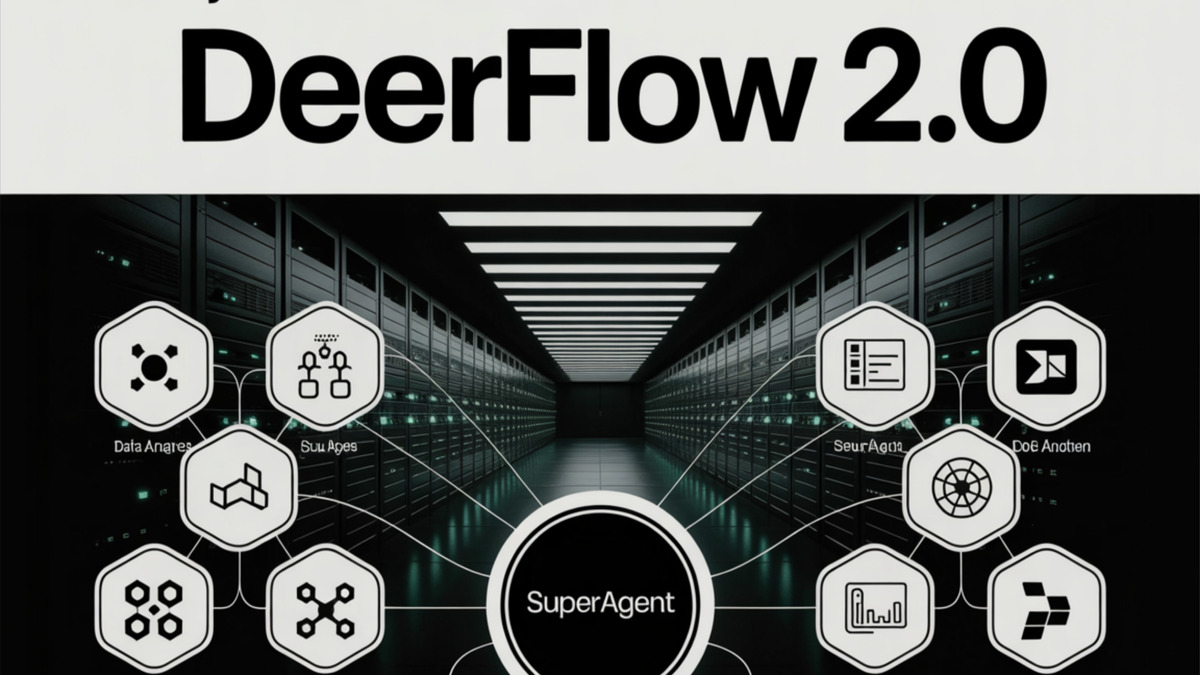

The SuperAgent architecture: a central orchestrator radiating out to specialized capability nodes including code execution, sub-agents, search, and content generation.

Source: marktechpost.com

DeerFlow's skill system is architecturally clever. Skills are Markdown files (SKILL.md) that define a workflow, best practices, and tool references. The system loads them progressively - only activating a skill's capabilities when the task requires them, keeping context windows lean. Built-in skills cover deep web research, report generation with charts, slide deck creation, web page scaffolding, image and video generation, EDA notebooks, and podcast analysis.
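Progressive loading is simple to sketch. In the toy below, every skill contributes a one-line summary to the context, and the full body is read only when a task appears to need it. The `Skill` class, `build_context`, and the naive name-in-task matching rule are all assumptions for illustration; how DeerFlow decides that a skill is relevant is not something this sketch claims to reproduce.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    """Hypothetical in-memory stand-in for a SKILL.md file."""
    name: str
    summary: str
    load_body: Callable[[], str]   # e.g. a function that reads the file
    _body: Optional[str] = None

    def body(self) -> str:
        # Load the full text once, on first use, then reuse it.
        if self._body is None:
            self._body = self.load_body()
        return self._body


def build_context(skills: list, task: str) -> str:
    """Always list summaries; append full bodies only for matching skills."""
    lines = [f"- {skill.name}: {skill.summary}" for skill in skills]
    for skill in skills:
        # Naive relevance check (an assumption): skill name appears in the task.
        if skill.name.lower() in task.lower():
            lines.append(skill.body())
    return "\n".join(lines)
```

The point of the pattern is the cost asymmetry: summaries are a few tokens each, while a full workflow description is paid for only when it is actually used.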

The tools list out of the box includes web search via Tavily, web crawling via BytePlus InfoQuest (ByteDance's own search product), file read/write, code execution, and MCP server integration with OAuth support. The InfoQuest integration gives DeerFlow access to ByteDance's crawling infrastructure, which is worth noting from a data flow perspective - queries pass through ByteDance systems, which may concern privacy-conscious deployments.

Model support is agnostic. DeerFlow works with any OpenAI-compatible API endpoint. The repo recommends ByteDance's own Doubao-Seed-2.0-Code, DeepSeek v3.2 (which we reviewed here at Awesome Agents), and Kimi K2.5 - but GPT-5 variants, Claude, Gemini Flash, and local Ollama models all work. One real constraint: the task decomposition and subagent spawning depend on reliable structured output from the model. Smaller local models frequently fail this requirement in practice.
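To see why structured output matters, consider a strict validator for a delegation call. The schema below (`subagent_type` and `prompt` fields) and the `parse_task_call` helper are hypothetical, not DeerFlow's actual task() format; the sketch only illustrates how a single malformed reply from a weaker model turns into a hard failure.

```python
import json

# Hypothetical schema for a subagent delegation call (an assumption).
REQUIRED_FIELDS = {"subagent_type": str, "prompt": str}


def parse_task_call(raw: str) -> dict:
    """Parse a model reply as a delegation call, or raise ValueError."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(payload.get(name), expected_type):
            raise ValueError(f"missing or invalid field: {name}")
    return payload
```

A model that wraps its JSON in conversational text, or drops a required field, fails this check every time, which is roughly the failure mode I saw with smaller local models.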

Installation and Setup

Getting DeerFlow running takes meaningful effort. Requirements: Python 3.12+, Node.js 22+, Docker, and comfort with CLI and YAML configuration. The Docker path is the cleanest:

git clone https://github.com/bytedance/deer-flow.git

cd deer-flow

make config # generates .env and config.yaml

# populate API keys in .env

make docker-init # pulls images (~5-8 min on first run)

make docker-start # available at http://localhost:2026

That sequence works. The make config step produces sensible template files, and the init step handles dependency resolution. On a machine with Docker already running, from a fresh clone to first task execution took me about 25 minutes - most of that waiting on the docker pull. The local dev path (make dev) is faster once Node modules are cached but requires more manual intervention when configs change.

This isn't Manus AI, where you create an account, type a task, and get a result in seconds. If you want a Manus-style turnkey experience, DeerFlow will disappoint you. If you want the underlying machinery that products like Manus are built on top of, and you want to run it on your own hardware, DeerFlow is exactly that.

Real-World Performance

I tested DeerFlow against a set of tasks across the skill categories.

Research report generation was the strongest result. Given a prompt to produce a 3,000-word competitive analysis of self-hosted LLM inference options, DeerFlow ran five parallel web searches, extracted content from eight sources, synthesized a structured report with section headers and citations, and delivered it in 11 minutes. The quality matched what you'd get from a competent analyst with access to the same sources, though it over-cited primary documentation and under-cited community benchmarks.

DeerFlow's sub-agent spawning model: a central orchestrator delegates parallel workstreams to specialized agents, each running in an isolated execution context.

Source: byteiota.com

Code execution tasks were reliable but not sophisticated. Asking it to analyze a CSV file, identify outliers, and produce a visualization worked correctly. Asking it to build a functional multi-route web application from a description produced a runnable scaffold but missed business logic edge cases that any experienced developer would foresee. This matches what you'd expect from the underlying LLM capabilities - DeerFlow doesn't make models smarter, it gives them a real execution environment.

Multi-session continuity is a known gap. DeerFlow's persistent memory stores confidence-scored facts as JSON at .deer-flow/threads/{id}/memory.json. The architecture is thoughtful - memories are ranked, deduplicated, and injected into system prompts when relevant. In practice, across sessions that reference earlier work, memory retrieval is inconsistent. The system sometimes correctly recalls prior context; sometimes it doesn't surface what you'd expect. This is an open research problem in agent design, not a DeerFlow-specific failure, but it limits the framework's utility for iterative multi-day projects.
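The rank-and-deduplicate idea can be sketched in a few lines. The JSON shape used here (a list of objects with `text` and `confidence` keys), the thresholds, and the `select_memories` helper are assumptions for illustration; the real memory.json schema may differ.

```python
import json


def select_memories(raw_json: str, limit: int = 3,
                    min_confidence: float = 0.5) -> list:
    """Pick the highest-confidence, de-duplicated facts for prompt injection.

    Assumes a hypothetical schema: [{"text": str, "confidence": float}, ...].
    """
    facts = json.loads(raw_json)
    best = {}
    for fact in facts:
        if fact["confidence"] < min_confidence:
            continue
        # Normalize case and whitespace so near-identical facts collapse.
        key = " ".join(fact["text"].lower().split())
        if key not in best or fact["confidence"] > best[key]["confidence"]:
            best[key] = fact
    ranked = sorted(best.values(), key=lambda f: f["confidence"], reverse=True)
    return [fact["text"] for fact in ranked[:limit]]
```

Even in this toy version the weak point is visible: recall depends entirely on which facts were stored and how they were scored, so a poorly scored fact never reaches the prompt.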

Strengths

- Genuine execution, not simulation. Docker sandboxes give the agent a real environment to work in. It installs, runs, and returns actual outputs.

- Architectural discipline. The harness/app boundary, middleware pipeline, and test enforcement reflect serious software engineering.

- Full data sovereignty. Everything runs on your hardware. No data leaves your infrastructure unless you configure integrations that route outbound.

- Model agnosticism. Any OpenAI-compatible endpoint works. Switch between DeepSeek, Claude, and local models by changing one environment variable.

- MIT license. No usage restrictions. Fork it, extend it, productize it.

- 55,900+ GitHub stars and an active community with 107+ contributors pushing real features.

Weaknesses

- No project continuity. "Long-horizon" here means a single long session. Multi-day and multi-week iterative workflows lack the plan-build-test-refine cycles that knowledge work actually requires (GitHub Issue #1114 tracks this gap).

- Setup friction. Docker, YAML, CLI, and Python 3.12 are hard requirements. The learning curve filters out non-technical users completely.

- InfoQuest dependency. The default web crawling tool routes through ByteDance's infrastructure. Privacy-sensitive deployments need to swap this for Tavily or a self-hosted alternative.

- Memory reliability. Persistent cross-session memory is architecturally present but inconsistent in practice.

- No independent security audit. Sandbox execution is powerful; there is no published third-party security assessment of the isolation guarantees.

- ByteDance provenance. In regulated industries (finance, healthcare, government), the Chinese corporate parent will trigger compliance review regardless of the MIT license.

How It Compares

DeerFlow occupies different territory than the agent tools we've covered here. n8n is a workflow automation platform - deterministic, node-based, excellent for repeatable business processes. DeerFlow is for open-ended goal execution where the path isn't known in advance. Cline is an IDE-embedded coding assistant; DeerFlow is an execution environment for tasks that span research, code, and content. The closest analogy is Manus AI, but Manus is a finished product, whereas ByteDance has shipped only the engine.

For teams currently paying for Manus credits to run research tasks or produce structured reports, DeerFlow is worth the setup investment if you have the engineering bandwidth. The per-task cost drops to pure LLM API fees - no subscription, no credit drain on expensive cloud compute.

Verdict

DeerFlow 2.0 is 8.1/10 for what it is: the most credible open-source agent execution harness available today. ByteDance has done serious engineering work here - the middleware architecture, sandbox system, and memory design are all well-considered. The framework truly executes tasks rather than simulating them, which puts it in a different category from most open-source agent projects.

The score reflects what it's not: a finished product. Setup requires Docker proficiency and CLI comfort. Memory continuity across sessions is unreliable. The InfoQuest dependency deserves scrutiny in privacy-sensitive contexts. And the ByteDance corporate parent will create friction in regulated enterprise environments regardless of the license terms.

If you run a technical team that needs a self-hosted agent execution layer and you're comfortable investing a day to configure it properly, DeerFlow 2.0 is the best option in its class. If you need results this afternoon without an engineering budget, look at Manus instead.

Sources

- ByteDance DeerFlow 2.0 release - MarkTechPost

- DeerFlow 2.0 GitHub repository (bytedance/deer-flow)

- DeerFlow official site - deerflow.tech

- DeerFlow 2.0 puts new spin on Claw-like agents - DeepLearning.ai The Batch

- DeerFlow 2.0: What it is, how it works - DEV.to

- ByteDance DeerFlow 2.0 open-source super-agent - AIToolly

- DeerFlow 2.0 installation and setup - Byteiota

- DeerFlow architecture deep dive - DeepWiki