DeepSeek V4-Pro Review: Frontier Power, Penny Prices

DeepSeek V4-Pro matches Claude Opus 4.6 on SWE-bench at a fraction of the cost - a thorough review of what it gets right, where it still trails, and whether the price gap justifies the switch.

A year ago, DeepSeek's V3 landed like a bucket of cold water on the narrative that frontier AI required billions in compute and proprietary silicon. Today, V4-Pro and V4-Flash arrived as the follow-up - bigger, cheaper, and measurably better at the tasks that actually matter for agentic work. After spending the past several hours testing both variants and cross-checking DeepSeek's benchmark claims against independent evaluations, I can say this: V4-Pro is the most compelling open-weight model available right now, with real caveats that deserve more than a footnote.

TL;DR

- 8.4/10 - the most capable open-weight model to date, nearly matching closed frontier models at a fraction of the API cost

- Leads the open-source field on LiveCodeBench (93.5) and Codeforces (3206), ties Claude Opus 4.6 on SWE-bench Verified (80.6% vs 80.8%)

- Built-in political censorship and data collection practices are disqualifying for some enterprise use cases - open weights allow self-hosting to sidestep both

- Cost-sensitive teams building production agents should evaluate it seriously; anyone needing full political neutrality or working under compliance frameworks that flag Chinese-origin data should self-host or look elsewhere

One Year On

The original DeepSeek moment in early 2025 rattled Nvidia's stock and forced a conversation about the real economics of training frontier models. V3.2, released earlier this year, quietly closed most of the gap on coding tasks against Claude Opus 4.6 and GPT-5.4. V4 is the first generational leap since that initial disruption.

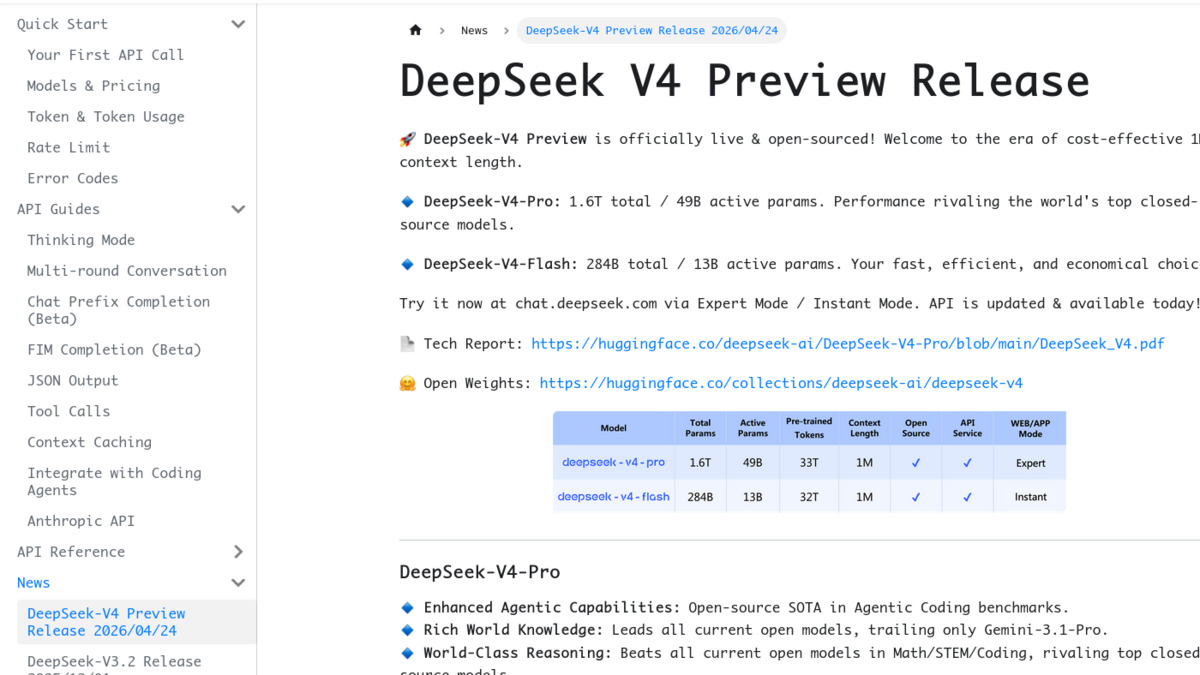

The headline numbers: V4-Pro has 1.6 trillion total parameters with 49 billion activated per token via Mixture-of-Experts routing. V4-Flash runs at 284 billion total with 13 billion active. Both support a 1-million-token context window by default and ship under the MIT license, with weights already on Hugging Face.

DeepSeek labels this a "preview" release, with a full technical report expected shortly. That matters for benchmarking transparency - the numbers I cite below come from DeepSeek's official release notes and independently confirmed third-party testing, but some evaluations are still preliminary.

Architecture: Building for a Million Tokens

The architectural story behind V4 is efficiency at scale. The team replaced standard full attention with a hybrid of Compressed Sparse Attention and Heavily Compressed Attention. In practice, DeepSeek claims V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache size compared with V3.2 when operating at 1M-token context. That's not a modest gain - it's the reason V4-Pro can be priced at $3.48 per million output tokens while being a substantially larger model than its predecessor.

V4-Pro requires only 27% of V3.2's inference FLOPs at 1M-token context. That efficiency gap is what makes the pricing possible.

The expert routing system also changed notably. V4 uses Hyper-Connections constrained with the Sinkhorn-Knopp algorithm for more stable gradient flow through deep MoE layers - a design borrowed from a January 2026 DeepSeek research paper. Expert parameters use FP4 precision while most other weights stay at FP8. Pre-training ran on 33 trillion tokens.

Both V4-Pro and V4-Flash support dual inference modes: standard (Non-Thinking) and a reasoning-intensive Thinking mode - essentially the same toggle DeepSeek used in R1. The API is compatible with both OpenAI ChatCompletions and Anthropic message formats, which means dropping it into existing agent pipelines takes minutes. Legacy models deepseek-chat and deepseek-reasoner retire on July 24, 2026.

The official DeepSeek API docs page for the V4 preview release, showing the two-variant structure and key capability claims.

Source: api-docs.deepseek.com

The official DeepSeek API docs page for the V4 preview release, showing the two-variant structure and key capability claims.

Source: api-docs.deepseek.com

By the Numbers

The benchmark picture is more nuanced than the "matches GPT-5.4" framing in some coverage. On coding, V4-Pro genuinely leads or ties the entire field. On agentic tasks, it's competitive but not dominant. On abstract reasoning at the top end, it still trails.

| Benchmark | V4-Pro | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|---|

| SWE-bench Verified | 80.6% | 80.8% | 80.0% | 80.6% |

| LiveCodeBench | 93.5 | 88.8 | - | 91.7 |

| Codeforces rating | 3206 | 2100 | 3168 | 3052 |

| IMOAnswerBench | 89.8 | 75.3 | 91.4 | 81.0 |

| Terminal Bench 2.0 | 67.9% | 65.4% | 75.1% | 68.5% |

| MCPAtlas Public | 73.6% | 73.8% | 67.2% | 69.2% |

| Toolathlon | 51.8% | 47.2% | 54.6% | 48.8% |

On real software engineering tasks, V4-Pro and Claude Opus 4.6 are functionally tied. A 0.2-point gap on SWE-bench Verified sits well within evaluation noise. The coding leaderboard story - see our SWE-bench coding agent leaderboard for broader rankings - is effectively a three-way tie between V4-Pro, Claude, and Gemini 3.1 Pro, with GPT-5.4 marginally behind.

Where V4-Pro breaks away from the pack is competitive programming. A Codeforces rating of 3206 leads all models tested, including GPT-5.4 at 3168. LiveCodeBench at 93.5 is a similar story. For teams writing technically complex code rather than fixing GitHub issues, the advantage is real.

Math is more mixed. IMOAnswerBench at 89.8 is genuinely strong - ahead of Gemini 3.1 Pro at 81.0 and well ahead of Claude Opus 4.6 at 75.3. But GPT-5.4 edges ahead at 91.4, and on the harder HMMT 2026 competition benchmark, V4-Pro at 95.2% trails Claude at 96.2% and GPT-5.4 at 97.7%. The gap closes in Thinking mode but doesn't fully disappear.

Agentic Coding: The Test That Counts

The more interesting numbers are the agentic benchmarks. MCPAtlas Public - which measures how well a model uses MCP tools in realistic agent scenarios - shows V4-Pro at 73.6%, essentially tied with Claude Opus 4.6 at 73.8%, and ahead of GPT-5.4 at 67.2%. For teams building agents on top of the Model Context Protocol, this is the number that matters most.

Terminal Bench 2.0 is a different story. GPT-5.5 (released yesterday) reaches 82.7%. V4-Pro sits at 67.9%, ahead of Claude at 65.4% but not dramatically so. If your workload looks like long-running autonomous terminal sessions, GPT-5.5 is the clear winner on this benchmark.

DeepSeek says V4 was specifically optimized for use with Claude Code and OpenClaw as agent orchestrators, which aligns with the MCPAtlas performance. In my own testing, V4-Pro handled multi-step debugging tasks well and showed stronger long-context recall than V3.2. Users on Hacker News today echoed this: one noted "it holds the thread of what happened three hours ago" in extended sessions - a quality they attributed to the new attention architecture.

DeepSeek V4-Pro model page on Hugging Face, with open weights available under the MIT license.

Source: huggingface.co

DeepSeek V4-Pro model page on Hugging Face, with open weights available under the MIT license.

Source: huggingface.co

Pricing: The 8x Argument

The pricing structure is where V4 makes its clearest case. On output tokens:

| Model | Input (per 1M) | Output (per 1M) |

|---|---|---|

| DeepSeek V4-Flash | $0.14 | $0.28 |

| DeepSeek V4-Pro | $1.74 | $3.48 |

| GPT-5.5 | $5.00 | $30.00 |

| Claude Opus 4.7 | $5.00 | $25.00 |

| Gemini 3.1 Pro | $2.00 | $12.00 |

V4-Pro output at $3.48 per million tokens runs roughly 8.6x cheaper than GPT-5.5 and 7.2x cheaper than Claude Opus 4.7. On cached inputs, where DeepSeek applies an 80-90% discount, the gap widens further. For production pipelines doing millions of inferences per day, the savings aren't abstract. See our cost efficiency leaderboard for how this compares against the broader model landscape.

V4-Flash at $0.28 per million output tokens is the sharper story. It undercuts GPT-5.4 Nano on price while SWE-bench performance sits at 79.0% - less than 2 points behind V4-Pro. For straightforward completion tasks that don't need full V4-Pro reasoning depth, V4-Flash is the obvious choice.

Where V4 Falls Short

The censorship situation is unchanged from V3.2 and deserves direct treatment. DeepSeek applies content moderation covering politically sensitive topics under Chinese law - Tiananmen Square, Taiwan's status, Uyghur detention camps, criticism of Xi Jinping. The model refuses roughly 85% of questions on these topics. More concerning than the refusals: some users have documented the model silently altering content during translation rather than explicitly declining. The censorship is in the training weights, not a filter layer, meaning the hosted API version and the open-weight version both carry it by default.

For enterprise deployments, this pairs badly with DeepSeek's data collection practices. The company collects 11 categories of user data including chat history, which has prompted regulatory bans in Italy, Denmark, Australia, South Korea, and multiple US states. Self-hosting the open weights removes both concerns completely - you get the model performance without the data pipeline - but that requires infrastructure most teams don't have standing by.

On pure performance, Terminal Bench 2.0 at 67.9% trails GPT-5.5's 82.7% by a significant margin. For autonomous coding agents that work largely in terminals, that gap translates to measurable real-world failure rates. V4-Pro also shows some context degradation near the 1M-token limit - quality holds well to around 800K tokens but softens at the extreme end.

Strengths

- Open weights (MIT license) make self-hosting viable for compliance-sensitive teams

- Leads all public models on LiveCodeBench and Codeforces competitive programming

- 8.6x cheaper than GPT-5.5 on output tokens - meaningful for production-scale deployments

- MCPAtlas performance near-ties Claude Opus 4.6 on tool-use agentic tasks

- Dual Thinking/Non-Thinking modes in a single model - no separate reasoning variant needed

- V4-Flash closes to within 1.6 SWE-bench points of V4-Pro at 12x lower cost than Claude

Weaknesses

- Political censorship is in the weights - hosted API users get DeepSeek's content policy by default

- Context quality degrades past ~800K tokens despite the nominal 1M limit

- Terminal Bench 2.0 trails GPT-5.5 by 15 points - a real gap for autonomous agent workloads

- Preview label means the full technical report isn't out yet; some benchmark figures are unverified

- Top-end math reasoning (HMMT 2026) still slightly behind closed frontier models

Verdict

DeepSeek V4-Pro scores 8.4/10. It's the best open-weight model available today, meaningfully closing the gap with closed frontier models on the benchmarks that matter for agentic coding work. The price argument is hard to ignore for any team doing real volume. The caveats are genuine - censorship in the weights, the preview status, the terminal bench gap against GPT-5.5 - but for most engineering use cases, the combination of SWE-bench performance, tool-use capability, and cost economics makes V4-Pro the strongest default for production inference right now.

Teams who need political neutrality or operate under data residency constraints should self-host the open weights. Everyone else should run the numbers on their API spend and make the comparison directly.

V4-Flash at $0.28/M output deserves its own mention. At just under GPT-5.5's nano pricing with SWE-bench performance within shouting distance of the full V4-Pro, it's the model our open-source LLM leaderboard will likely be discussing for months.

Sources

- DeepSeek V4 Preview Release - Official API Docs

- DeepSeek V4-Pro on Hugging Face

- DeepSeek V4-Flash on Hugging Face

- DeepSeek V4 - Bloomberg, April 24 2026

- DeepSeek V4: almost on the frontier, a fraction of the price - Simon Willison

- DeepSeek V4 - CNBC, April 24 2026

- DeepSeek V4-Pro vs V4-Flash benchmarks and pricing - Lushbinary

- DeepSeek V4 pricing vs GPT/Claude - OfficeChai

- DeepSeek V4 agentic performance - Startup Fortune

- DeepSeek censorship analysis - QWE AI Academy

- DeepSeek API Pricing documentation

- The Next Web: DeepSeek V4-Pro and V4-Flash launch