Reasoning Model API Pricing Compared - May 2026

Updated May 2026: DeepSeek V4-Flash reasoning now $0.28/MTok output (8x cheaper than R1), o3-pro launched at $20/$80, Grok 4 retires May 15 - verified pricing across 11 models.

Reasoning model pricing is deceptive - the headline per-token rate understates real cost by 5x to 30x depending on model and task.

TL;DR

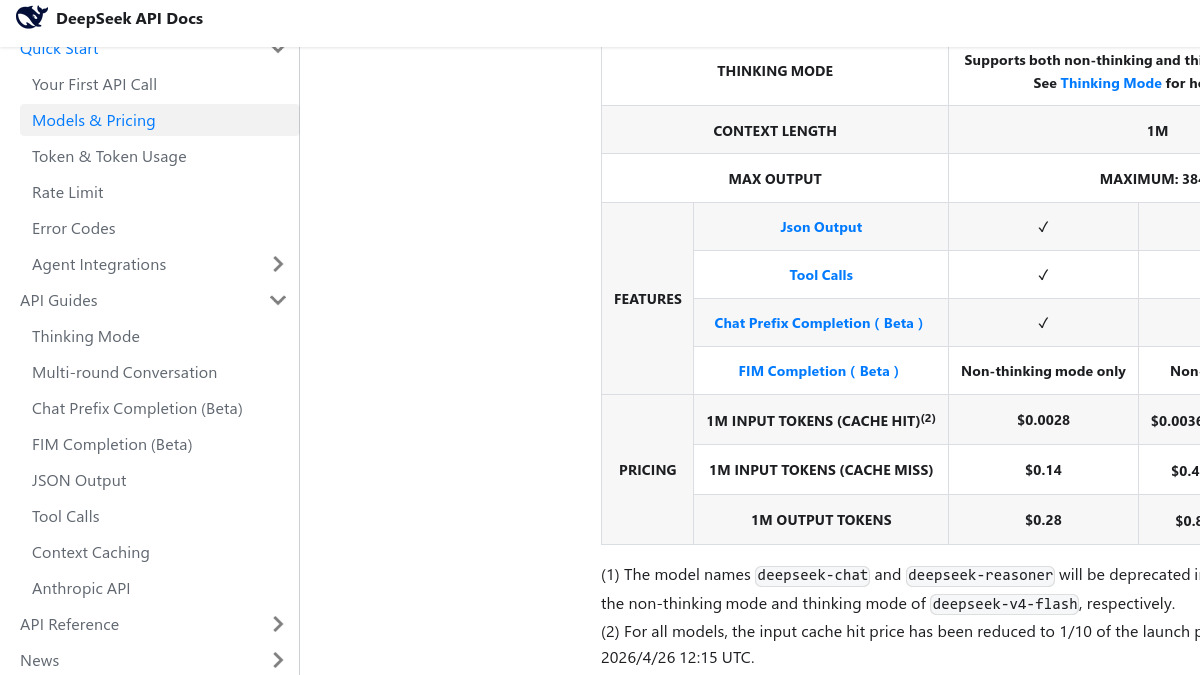

- DeepSeek V4-Flash thinking mode is now $0.14/$0.28 per MTok - nearly 8x cheaper on output than R1 was six weeks ago, and the new

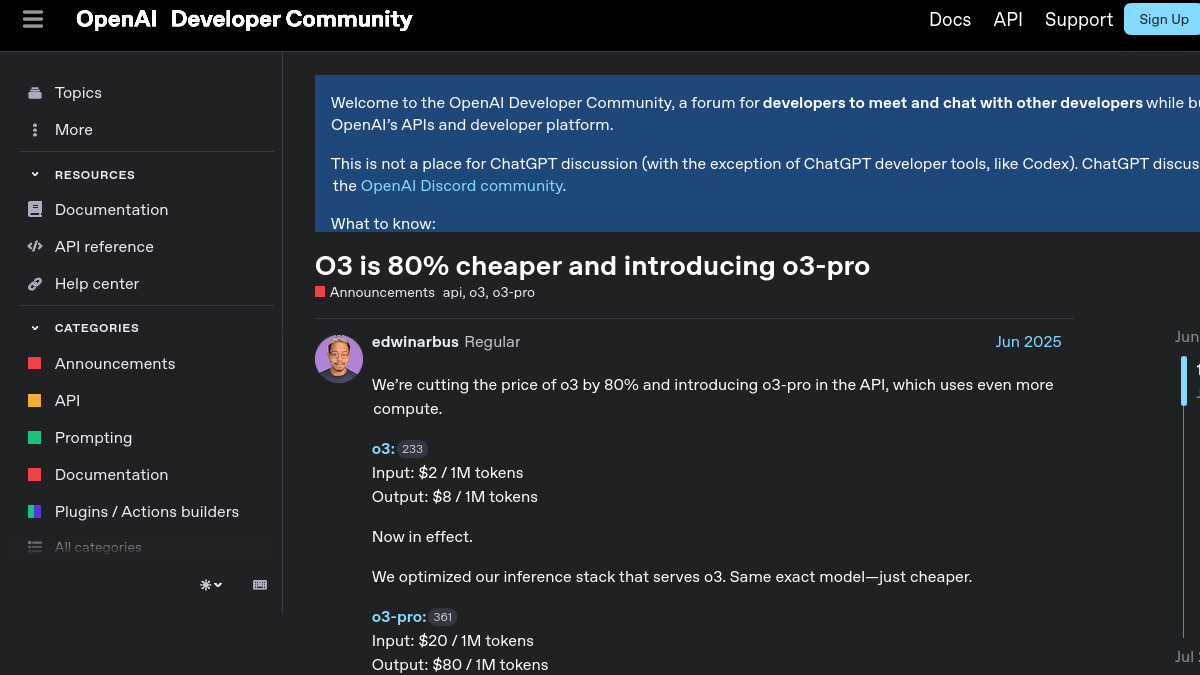

deepseek-reasoneralias routes here - o3-pro has been available in the API since June 2025 at $20/$80 per MTok (10x o3's rate); it wasn't in the April edition - it's added now alongside updated coverage across all models

- Grok 4 retires May 15, 2026 - migrate to Grok 4.3 at $1.25/$2.50, which is cheaper and supports 1M context

- Google dropped Gemini 2.5 Flash thinking tokens from $3.50 to $2.50/MTok and removed the 2.5 Pro free tier on April 1

- The reasoning token overhead problem hasn't changed: you pay for 5x to 45x more tokens than you receive, depending on model and task difficulty

If you've been following my general LLM API pricing comparison, you already know the headline rates. This page is specifically about the hidden cost structure of reasoning models - the pattern that catches every engineering team off guard when their first production invoice lands.

The core problem is simple. Reasoning models create an internal chain of thought before producing a final answer. Those "thinking tokens" are billed at the same rate as visible output tokens, but you never see them. You get the answer; you pay for the scratchpad. On a hard math problem, a model might burn 20,000 reasoning tokens before writing a 400-token response. The visible output suggests a cheap call. The actual bill reflects 20,400 output tokens.

Prices have moved clearly since April's update. The biggest change is at the low end: DeepSeek V4-Flash's reasoning mode is now nearly 8x cheaper than the R1 it replaced. At the high end, o3-pro (available since June 2025 but absent from the April edition) is now included as the maximum-compute option for problems where o3 falls short. And Grok 4's retirement on May 15 forces a migration to Grok 4.3, which is actually better priced.

The Full Pricing Table

All prices in USD per million tokens (MTok). Verified against official provider pricing pages as of May 11, 2026. Sorted by effective output cost.

| Model | Provider | Input /MTok | Cached Input | Thinking /MTok | Output /MTok | Context | Notes |

|---|---|---|---|---|---|---|---|

| DeepSeek V4-Flash (thinking) | DeepSeek | $0.14 | $0.003 | $0.28 | $0.28 | 1M | deepseek-reasoner alias; thinking and output same rate |

| Gemini 2.5 Flash (thinking) | $0.30 | $0.03 | $2.50 | $2.50 | 1M | Down from $3.50; moved to paid-only April 1 | |

| Qwen3.6 Plus (thinking) | Alibaba | $0.33 | N/A | $1.95 | $1.95 | 1M | Via OpenRouter; 1M context; thinking toggleable |

| o4-mini | OpenAI | $1.10 | $0.28 | $4.40 | $4.40 | 200K | Best value for most production reasoning workloads |

| Grok 4.3 (reasoning) | xAI | $1.25 | N/A | $2.50 | $2.50 | 1M | Replaces Grok 4 (retiring May 15); higher rate above 200K |

| Gemini 2.5 Pro (thinking) | $1.25 | $0.13 | $10.00 | $10.00 | 2M | $2.50 input / $15 output above 200K context | |

| o3 | OpenAI | $2.00 | $0.50 | $8.00 | $8.00 | 200K | Down 80% from launch price |

| Gemini 3.1 Pro (thinking) | $2.00 | $0.20 | $12.00 | $12.00 | 2M | $4 input / $18 output above 200K; newest Google frontier | |

| Claude Sonnet 4.6 (thinking) | Anthropic | $3.00 | $0.30 | $15.00 | $15.00 | 1M | thinking and text blocks billed at same output rate |

| Claude Opus 4.7 (thinking) | Anthropic | $5.00 | $0.50 | $25.00 | $25.00 | 1M | Latest Anthropic flagship; new tokenizer (up to 35% more tokens vs Opus 4.6) |

| o3-pro | OpenAI | $20.00 | $5.00 | $80.00 | $80.00 | 200K | Maximum compute; 10x o3's rate; for tasks where o3 fails |

On Anthropic's tokenizer note: Claude Opus 4.7 ships with a new tokenizer that can consume up to 35% more tokens for identical input text. Benchmark the change before migrating from Opus 4.6, as your effective input costs may increase even though the per-token rate stayed the same.

On Grok's context tiers: Grok 4.3 bills requests above 200K total tokens at a higher rate. If your use case involves long documents, model the context-tier pricing before committing.

On DeepSeek's V4-Pro: A V4-Pro tier also exists at $1.74/$3.48 per MTok (standard price), but is currently discounted 75% to $0.44/$0.88 until May 31, 2026. After that date, V4-Flash is almost certainly the better value option unless V4-Pro shows meaningfully better reasoning quality on your workload.

What Changed Since April 2026

Several entries in this table are substantially different from six weeks ago.

April 1, 2026 - Google removed the free tier for Gemini 2.5 Pro. Previously available under rate limits, it now requires a paid API key. Free access to thinking models now means Gemini 2.5 Flash only.

April 16, 2026 - Anthropic released Claude Opus 4.7 at the same $5/$25 price as Opus 4.6, but with a new tokenizer. The performance uplift is real (87.6% on SWE-bench Verified), but so is the token count increase.

April 26, 2026 - DeepSeek cut its cache-hit input price to one-tenth of launch pricing. And more clearly, the

deepseek-reasoneralias now routes to V4-Flash thinking mode rather than the old R1 model. The effective output price dropped from $2.19 to $0.28 per MTok.June 10, 2025 - OpenAI cut o3's price 80% (from ~$10/$40 to $2/$8) and launched o3-pro at $20/$80. Both were not included in the April edition of this comparison; they're added in this update.

May 15, 2026 - Grok 4, Grok 4.1 Fast, and several related xAI models reach end of life. Grok 4.3 at $1.25/$2.50 is the direct replacement and offers a 1M context window.

Why the Advertised Price Misleads

The headline output rate is the price per visible output token. Reasoning models create a second class of tokens before that output - the internal chain of thought - and those tokens are invisible to you but billed identically.

Consider a request: "Find the bug in this code and fix it." The prompt is 6,000 tokens. A reasoning model internally traces call graphs, checks variable scoping, considers three candidate fixes, and evaluates edge cases before writing the repair. That might produce 8,000 reasoning tokens and 500 visible output tokens. You pay for 8,500 output-priced tokens, not 500.

The ratio varies by task. On simple questions, reasoning models sometimes skip extended thinking and the overhead is low. On competition math, they can hit 25,000 reasoning tokens for a 500-token proof. The same model, the same output rate, but a 50x difference in effective cost per response.

OpenAI Developer Community announcement from June 2025 confirming o3's 80% price cut and the launch of o3-pro at $20/$80.

Source: openai.com

OpenAI Developer Community announcement from June 2025 confirming o3's 80% price cut and the launch of o3-pro at $20/$80.

Source: openai.com

Reasoning Token Overhead by Task Type

These estimates use median token counts from model documentation, community benchmarks, and API usage metadata. Actual counts vary by 2-3x depending on prompt structure, which you can influence.

Hard Math (Competition Level)

Problems at AIME / AMC 12 difficulty. These require multi-step chained reasoning with backtracking.

| Model | Typical thinking tokens | Visible output | Total billed output | Effective cost/query |

|---|---|---|---|---|

| o3-pro | 30,000 - 60,000 | 400 - 600 | 30,400 - 60,600 | $2.43 - $4.85 |

| o3 | 18,000 - 25,000 | 400 - 600 | 18,400 - 25,600 | $0.15 - $0.20 |

| Claude Opus 4.7 | 8,000 - 15,000 | 400 - 700 | 8,400 - 15,700 | $0.21 - $0.39 |

| Gemini 3.1 Pro | 8,000 - 14,000 | 300 - 500 | 8,300 - 14,500 | $0.10 - $0.17 |

| Gemini 2.5 Pro | 8,000 - 14,000 | 300 - 500 | 8,300 - 14,500 | $0.083 - $0.145 |

| o4-mini | 10,000 - 16,000 | 400 - 600 | 10,400 - 16,600 | $0.046 - $0.073 |

| Gemini 2.5 Flash | 6,000 - 12,000 | 300 - 500 | 6,300 - 12,500 | $0.016 - $0.031 |

| DeepSeek V4-Flash | 6,000 - 14,000 | 350 - 600 | 6,350 - 14,600 | $0.0018 - $0.0041 |

The DeepSeek number is striking. At $0.002-$0.004 per competition math query, it's more than 50x cheaper than o4-mini and roughly 100x cheaper than o3. The quality delta is real - o3 scores 96%+ on AIME 2025 while DeepSeek V4-Flash benchmarks around 82% - but for non-critical education or research pipelines, that accuracy difference may not be worth $0.07 per query.

Complex Code Fix (Multi-File Bug)

A bug spanning multiple files with 8,000-token context prompt.

| Model | Typical thinking tokens | Visible output | Total billed | Effective cost/query |

|---|---|---|---|---|

| o3 | 5,000 - 10,000 | 800 - 1,500 | 5,800 - 11,500 | $0.046 - $0.092 |

| o4-mini | 3,000 - 7,000 | 800 - 1,500 | 3,800 - 8,500 | $0.017 - $0.037 |

| Claude Sonnet 4.6 | 2,000 - 6,000 | 800 - 1,500 | 2,800 - 7,500 | $0.042 - $0.113 |

| Gemini 2.5 Flash | 2,500 - 6,000 | 700 - 1,200 | 3,200 - 7,200 | $0.008 - $0.018 |

| DeepSeek V4-Flash | 2,000 - 5,000 | 700 - 1,200 | 2,700 - 6,200 | $0.00076 - $0.0017 |

For coding workloads at scale, DeepSeek V4-Flash is roughly 20-25x cheaper than Claude Sonnet 4.6 in thinking mode. The SWE-bench gap is around 8-12 percentage points. Measure that gap on your actual CI failure rate before deciding whether it's worth the cost premium. See our coding benchmarks leaderboard for current scores.

Agentic Task (Multi-Turn Planning)

Agent session: "research competitors, summarize findings, draft a report." Eight to fifteen turns with growing context.

| Model | Thinking tokens/turn | Turns | Total thinking | Session output cost |

|---|---|---|---|---|

| o3 | 4,000 - 8,000 | 8 - 15 | 32,000 - 120,000 | $0.26 - $0.96 |

| o4-mini | 2,000 - 5,000 | 8 - 15 | 16,000 - 75,000 | $0.07 - $0.33 |

| Claude Sonnet 4.6 | 1,500 - 4,000 | 8 - 15 | 12,000 - 60,000 | $0.18 - $0.90 |

| Grok 4.3 | 1,500 - 3,500 | 8 - 15 | 12,000 - 52,500 | $0.030 - $0.13 |

| Gemini 2.5 Flash | 1,500 - 4,000 | 8 - 15 | 12,000 - 60,000 | $0.030 - $0.15 |

| DeepSeek V4-Flash | 1,500 - 4,000 | 8 - 15 | 12,000 - 60,000 | $0.0034 - $0.017 |

Grok 4.3 is a new entry worth considering for agentic pipelines. At $1.25/$2.50 per MTok with a 1M context window, it's in the same price tier as Gemini 2.5 Flash for multi-turn sessions but offers much longer context without tier pricing penalties. Check the agentic AI benchmarks leaderboard for current task scores.

Effective Cost Per Query

The effective output multiplier captures how much you're actually paying relative to what you receive:

multiplier = (thinking_tokens + visible_output_tokens) / visible_output_tokens

If the model produces 10,000 thinking tokens for 500 visible output tokens, the multiplier is 21x. You pay for 21x the tokens you receive.

| Model | Hard math | Code fix | Agent turn |

|---|---|---|---|

| o3-pro | 70x | 10x | 6x |

| o3 | 45x | 8x | 5x |

| Gemini 3.1 Pro | 30x | 7x | 5x |

| Gemini 2.5 Pro | 28x | 6x | 4x |

| o4-mini | 28x | 6x | 4x |

| Gemini 2.5 Flash | 22x | 5x | 4x |

| Claude Opus 4.7 | 22x | 5x | 4x |

| DeepSeek V4-Flash | 23x | 6x | 4x |

The multipliers are similar across models. DeepSeek V4-Flash at a 23x multiplier still beats Claude Sonnet 4.6 at 16x because $0.28/MTok × 23 = $6.44 effective per billion visible tokens, versus $15/MTok × 16 = $240. The token rate dominates.

When Reasoning Mode Earns Its Cost

Reasoning tokens add cost and latency to every call. They only pay off when correctness on hard problems carries real consequences.

High-value use cases:

Competition math and formal proofs: o3 and o4-mini score 93-96% on AIME 2025 benchmarks. Standard GPT-5-class models score around 60%. See the math olympiad AI leaderboard for current rankings. If accuracy difference translates directly to user value, the cost is trivial.

Security-critical code review: Reasoning models find logic bugs and edge cases that pattern-completion models miss. For a code review pipeline where a missed vulnerability costs $10K+ in incident response, $0.05-$0.15 per review is noise.

Hard coding tasks: o3 and Claude Sonnet 4.6 thinking both clear 60%+ on SWE-bench Verified; non-reasoning frontier models land in the 40-50% range. If each incorrect fix triggers a CI run, fewer failures offset the per-query cost.

Agentic planning (single-pass): When an agent plans a complex workflow before executing, reasoning models cut replanning failures. The math works when replanning means re-running expensive downstream steps.

When to Skip It

Not every task benefits from extended thinking. Routing to a non-reasoning model is often the right call.

Skip reasoning mode for chatbot conversations, simple RAG lookups, classification and routing, creative writing, and any latency-critical path where sub-second first-token response is required. Reasoning models add 5-90 seconds of mandatory TTFVT depending on task difficulty - that's an UX problem in interactive applications, not just a cost problem.

Also skip reasoning for structured data extraction. Pulling JSON from a known schema doesn't benefit from chain-of-thought. Use a smaller, faster model.

Latency Comparison

Time to first visible token (TTFVT) - from request submission to the first character of the actual answer:

| Model | Easy task | Hard task | Notes |

|---|---|---|---|

| o4-mini | 5 - 12 sec | 15 - 45 sec | Fastest o-series |

| Grok 4.3 | 4 - 10 sec | 12 - 35 sec | reasoning=low is default; fast for moderate difficulty |

| Gemini 2.5 Flash | 4 - 10 sec | 12 - 35 sec | Lightweight thinking pass |

| Gemini 2.5 Pro | 6 - 15 sec | 20 - 55 sec | Can stream thought summaries |

| o3 | 8 - 20 sec | 30 - 90 sec | reasoning_effort=low cuts this clearly |

| Claude Sonnet 4.6 | 5 - 12 sec | 15 - 50 sec | Extended thinking adds mandatory latency |

| Gemini 3.1 Pro | 7 - 18 sec | 25 - 70 sec | thinking_level=low reduces latency |

| Claude Opus 4.7 | 9 - 22 sec | 30 - 85 sec | Large budget_tokens values add time |

| o3-pro | 20 - 60 sec | 90 - 300 sec | Extended compute; not suitable for interactive use |

| DeepSeek V4-Flash | 5 - 14 sec | 18 - 55 sec | API queue times vary by region |

O3-pro's latency range is the standout. On hard tasks, it can take 5 minutes. Build your UX with this in mind - o3-pro is a batch workload, not a conversational one.

DeepSeek's current pricing page showing V4-Flash thinking mode at $0.28/MTok output - nearly 8x cheaper than R1 was in March 2026.

Source: api-docs.deepseek.com

DeepSeek's current pricing page showing V4-Flash thinking mode at $0.28/MTok output - nearly 8x cheaper than R1 was in March 2026.

Source: api-docs.deepseek.com

Hidden Costs You Need to Know

Truncated reasoning still bills

If your token limit is hit during reasoning, you pay for all reasoning tokens generated before the cutoff, even if no final answer was produced. Set max_tokens to at least (expected_reasoning × 1.5) + expected_output. Budget conservatively.

Anthropic's new tokenizer changes your math

Claude Opus 4.7 ships with a new tokenizer that can use up to 35% more tokens for identical input. If you're migrating from Opus 4.6, benchmark your actual prompts before assuming cost parity. The per-token price is the same; the token count may not be.

Claude's budget_tokens is a soft ceiling

Anthropic's budget_tokens parameter sets a ceiling on thinking tokens but is not a hard cap. The model may exceed it by up to 20% on complex tasks. Monitor actual usage in the response metadata rather than relying on the parameter as a cost control.

Grok's 200K context tier

Grok 4.3 prices requests with more than 200K total tokens at a higher rate. If your agentic pipeline builds up a long conversation context, the cost can jump unexpectedly mid-session. Track total_tokens in each response and plan context compression before you hit the tier boundary.

DeepSeek V4-Pro discount expiration

The 75% discount on DeepSeek V4-Pro expires May 31, 2026. After that, V4-Pro reverts to $1.74/$3.48 per MTok. Any pipeline relying on V4-Pro at discounted rates needs to be re-assessed or migrated to V4-Flash before then.

Reasoning token caching

Standard prompt caching applies to input tokens regardless of whether reasoning is enabled. But reasoning tokens are generated fresh for each request - they're not cacheable across calls. For reasoning-heavy workloads with shared system prompts, your caching savings are proportional to what fraction of your bill is input tokens. On hard math tasks where reasoning tokens dominate, caching helps less.

Free Tier Comparison

| Provider | Model | Free access | Rate limits | Notes |

|---|---|---|---|---|

| Gemini 2.5 Flash thinking | Yes | 15 RPM, 1M TPD | Still free; rate limited but usable for dev | |

| Gemini 2.5 Pro thinking | No | Paid API required | Free tier removed April 1, 2026 | |

| Gemini 3.1 Pro | No | Paid API required | Preview pricing; paid only | |

| OpenAI | o4-mini | ChatGPT Plus quota | ~50 messages/3h | Chat UI only; API always paid |

| OpenAI | o3 | ChatGPT Plus quota | Lower quota than o4-mini | Chat UI only; API always paid |

| OpenAI | o3-pro | ChatGPT Pro subscribers | Limited | Highest ChatGPT tier required |

| Anthropic | Extended thinking | Claude.ai Pro | Chat UI only | API always paid |

| DeepSeek | V4-Flash | 5M token signup credit | Covers ~17M reasoning tokens | Enough for integration testing |

| xAI | Grok 4.3 | SuperGrok subscribers | Rate limited | API requires payment |

Gemini 2.5 Flash remains the best free option for reasoning model development and integration testing. The elimination of the 2.5 Pro free tier was significant - teams that were prototyping with Pro now need to evaluate whether to pay for Pro or accept Flash's lower ceiling.

Choosing the Right Model

The right reasoning model is the cheapest one that passes your accuracy bar. Run evals on your actual data before committing to a tier.

Budget first: Start with DeepSeek V4-Flash at $0.14/$0.28. On tasks where 82-85% accuracy is acceptable, it's the overwhelming cost leader. For free-tier development, Gemini 2.5 Flash.

Balance cost and accuracy: o4-mini ($1.10/$4.40) is the production sweet spot for most teams. It delivers within a few percentage points of o3 on most benchmarks at roughly one-third the effective query cost. Claude Sonnet 4.6 in thinking mode is a strong alternative with Anthropic's tool use ecosystem.

Need the highest ceiling: o3 ($2/$8) for math and formal reasoning. The gap over o4-mini is meaningful on AIME-level problems (96%+ vs 88%) but narrower on code and agent tasks. Run your own evals before paying the premium.

Extremely hard problems: o3-pro ($20/$80) for the subset of tasks where o3 fails. Expected use cases: cutting-edge formal verification, research-grade math proofs, complex security auditing. For most engineering teams this isn't a regular production call.

Long context: Gemini 3.1 Pro (2M context) or Grok 4.3 (1M context) if you need to reason over very long documents. No other reasoning model comes close to Gemini 3.1 Pro's context ceiling at a reasonable per-token rate.

Agent pipelines: o4-mini is the current default - cost-effective for multi-turn, well-supported in agent frameworks. Grok 4.3 is worth evaluating for long-context agent sessions now that Grok 4 is retiring.

For model capability comparisons beyond pricing, see the reasoning benchmarks leaderboard and cost efficiency leaderboard.

FAQ

Which reasoning model is cheapest right now?

DeepSeek V4-Flash thinking mode at $0.14/$0.28 per MTok. For thinking-heavy workloads, its effective per-query cost can run 50-100x lower than premium models like o3 or Claude Opus 4.7.

Is o3 still worth using now that o3-pro exists?

Yes. O3 at $2/$8 handles most hard reasoning tasks. O3-pro is for the specific subset where o3 itself fails - formal proofs, research math, deep security audits. Most teams will never need o3-pro.

What happened to the Grok 4 models?

Grok 4, Grok 4.1 Fast, and related xAI models retire on May 15, 2026. Migrate to Grok 4.3 at $1.25/$2.50, which is cheaper and adds a 1M context window. xAI's documentation has migration guidance for affected endpoints.

Do reasoning tokens expire from the context window?

No. Reasoning tokens are ephemeral - they affect billing but don't appear in the conversation history for subsequent turns. Your context window contains only visible input and output tokens.

Can I see the reasoning tokens?

OpenAI doesn't expose reasoning content for o3, o4-mini, or o3-pro - only the count in usage metadata. Anthropic's extended thinking exposes thinking content blocks (optional). Google's Gemini 2.5 Pro and 3.1 Pro expose thought summaries in streaming mode. Grok 4.3 exposes the full reasoning in reasoning_details.

Does batch API work with reasoning models?

Yes for all major providers. OpenAI's Batch API gives a 50% discount on both input and reasoning output tokens for o3, o4-mini, and o3-pro. Anthropic's Batch API supports extended thinking at 50% off. Google's Batch API supports Gemini thinking mode. If your workload is async-compatible, batch processing is the easiest cost reduction lever.

Sources:

✓ Last verified May 11, 2026