Embedding Models Pricing - April 2026

Embedding API costs compared for OpenAI, Voyage AI, Cohere, Google, Mistral, and AWS - voyage-4-lite and OpenAI 3-small tie at $0.02/MTok for best budget value.

TL;DR

- Three models tie as cheapest at $0.02/MTok: voyage-4-lite, OpenAI text-embedding-3-small, and Amazon Titan Text Embeddings V2

- Best value pick: voyage-4-lite - same price as OpenAI small but with 32K token context window and shared embedding space with the flagship voyage-4-large

- Voyage 4 family (launched January 2026) replaces voyage-3.5 with MoE architecture, same pricing tiers, and a shared embedding space that lets you mix models per query type

- Correction from last update: Mistral Embed is $0.10/MTok, not $0.01/MTok - three sources now confirm this

Quick Verdict

Two models stand out at the $0.02/MTok tier. OpenAI's text-embedding-3-small is the safe default - it's widely supported, has a clean batch discount (50% off), and integrates with every vector database out of the box. Voyage AI's voyage-4-lite matches that price but adds 32K token context and compatibility with the voyage-4 shared embedding space, which unlocks a useful cost trick: embed documents with voyage-4-large once, then use voyage-4-lite ($0.02/MTok) for every query. For high-accuracy RAG with no tolerance for retrieval misses, Google's gemini-embedding-001 at $0.15/MTok leads the commercial MTEB leaderboard at 68.32. Self-hosted NV-Embed-v2 still beats everything on the English MTEB at 72.31, but only if you have A100 time to burn.

Cross-links: our MTEB leaderboard from March 2026 covers the benchmark methodology in depth, and our RAG tools roundup covers the vector databases that will store these embeddings. If you're new to embeddings entirely, "What are AI embeddings" is worth reading first.

Normalized Pricing Table

All prices are per million tokens (MTok). Embeddings are input-only, so there are no output token costs. MTEB English scores come from the latest available data; N/A means the model isn't yet listed on the public leaderboard. Rows are sorted by price, with the non-commercial Jina option listed last.

| Model | Provider | Price (/1M tokens) | Dimensions | Max Tokens | MTEB Score | Notes |
|---|---|---|---|---|---|---|
| voyage-4-lite | Voyage AI | $0.02 | 1,024 | 32,000 | ~65 | Batch: $0.013. 200M free |
| text-embedding-3-small | OpenAI | $0.02 | 1,536 | 8,191 | 62.26 | Batch: $0.01 |
| Amazon Titan Embed V2 | AWS Bedrock | $0.02 | 1,024 | 8,192 | N/A | AWS ecosystem only |
| voyage-4 | Voyage AI | $0.06 | 1,024 | 32,000 | ~67 | Batch: $0.04. 200M free |
| Cohere Embed 3 English | Cohere | $0.10 | 1,024 | 512 | 64.5 | Short context only |
| Cohere Embed 3 Multilingual | Cohere | $0.10 | 1,024 | 512 | ~64 | 100+ languages |
| Mistral Embed | Mistral | $0.10 | 1,024 | 8,192 | ~63 | General purpose |
| Cohere Embed 4 | Cohere | $0.12 | 1,024 | 128,000 | 65.20 | Multimodal, 128K context |
| voyage-4-large | Voyage AI | $0.12 | 1,024 | 32,000 | N/A | MoE flagship. 200M free |
| text-embedding-3-large | OpenAI | $0.13 | 3,072 | 8,191 | 64.60 | Batch: $0.065 |
| gemini-embedding-001 | Google | $0.15 | 3,072 | 8,192 | 68.32 | Free tier, batch: $0.075 |
| Codestral Embed | Mistral | $0.15 | 1,536 | 32,768 | N/A | Code-specialized, batch 50% off |
| voyage-code-3 | Voyage AI | $0.18 | 1,024 | 32,000 | N/A | Code retrieval |
| voyage-context-3 | Voyage AI | $0.18 | 1,024 | 32,000 | N/A | Long-context specialist |
| gemini-embedding-2-preview | Google | $0.20 | 3,072 | 8,192 | N/A | Multimodal (text/image/audio/video) |
| Jina Embeddings v4 | Jina AI | Free (non-comm) | 4,096 | 32,768 | ~71.7 | Qwen Research License; commercial pricing on request |

A note on the Mistral Embed price: our March 2026 update listed Mistral Embed at $0.01/MTok. Three independent sources (CloudPrice, OpenRouter, and Helicone) now confirm the actual rate is $0.10/MTok. The earlier figure was incorrect. If you built cost models on that number, recalculate.

Open-Source Alternatives (Self-Hosted)

Free to download, you pay only for compute.

| Model | Parameters | Dimensions | MTEB Score | Notes |
|---|---|---|---|---|
| Microsoft Harrier-OSS-v1 | 27B | N/A | 74.3 (MTEB v2) | MIT license |
| NV-Embed-v2 | 7B | 4,096 | 72.31 | NVIDIA, tops English MTEB v1 |
| Qwen3-Embedding-8B | 8B | 2,048 | 70.58 | Strong multilingual |
| Jina Embeddings v4 | 3.8B | 4,096 | ~71.7 | Free via API under research license |
| BGE-M3 | 568M | 1,024 | 63.0 | Lightweight, multilingual |

At typical throughput on a single A100, self-hosted NV-Embed-v2 runs around $0.001 per million tokens - 20x cheaper than the cheapest commercial APIs at $0.02/MTok. The tradeoff is everything that comes with running inference infrastructure. If your team already has GPUs allocated, the math is easy. If not, paying for a managed API is usually worth it to avoid the ops burden.
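A per-token figure for self-hosting depends on what you pay per GPU-hour and the throughput you actually sustain, so it is worth deriving your own number. The sketch below shows one way to do that; the function names are illustrative, and the only figures taken from this page are the $0.001 and $0.02 per-MTok prices.

```python
def self_hosted_cost_per_mtok(gpu_hourly_usd: float, tokens_per_second: float) -> float:
    """USD per million tokens for a GPU billed hourly at a sustained throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    mtok_per_hour = tokens_per_second * 3600 / 1_000_000
    return gpu_hourly_usd / mtok_per_hour


def savings_multiple(api_price_per_mtok: float, self_hosted_per_mtok: float) -> float:
    """How many times cheaper self-hosting is than an API price."""
    return api_price_per_mtok / self_hosted_per_mtok


# Figures quoted in the article: $0.02/MTok API tier vs ~$0.001/MTok self-hosted.
print(f"{savings_multiple(0.02, 0.001):.0f}x cheaper")
```

Feed `self_hosted_cost_per_mtok` your measured tokens per second and your real GPU rate before relying on any multiple; idle GPU time and engineering hours are not captured here.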

Voyage AI's internal RTEB benchmark shows voyage-4-large's retrieval lead over comparable models. Vendor-reported data - third-party MTEB submissions pending.

Source: blog.voyageai.com

The Voyage 4 Shift

Voyage AI's January 2026 release of the Voyage 4 family is the most consequential change in this space since Cohere launched Embed 4. All four models - voyage-4-large, voyage-4, voyage-4-lite, and voyage-4-nano (open-weight, Apache 2.0) - share a single embedding space. That sounds like a technical footnote, but it has real cost consequences.

In most RAG systems, documents are embedded once and queries are embedded thousands of times per day. With the Voyage 4 shared space, you can use voyage-4-large ($0.12/MTok) for the offline batch document embedding pass and voyage-4-lite ($0.02/MTok) for every live query - getting flagship-quality document representations at budget query costs. Voyage AI claims the asymmetric setup narrows the retrieval gap between the large and lite models.
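To make the asymmetric setup concrete, here is a minimal cost sketch. The two prices come from the pricing table; the workload (500M document tokens, 100M query tokens per month) is a hypothetical chosen only for illustration, so substitute your own volumes.

```python
# Prices per million tokens, taken from the pricing table.
PRICE_LARGE = 0.12  # voyage-4-large
PRICE_LITE = 0.02   # voyage-4-lite


def embedding_cost(tokens: int, price_per_mtok: float) -> float:
    """USD cost of embedding `tokens` tokens at a per-MTok price."""
    return tokens / 1_000_000 * price_per_mtok


def first_month_cost(doc_tokens: int, query_tokens: int,
                     doc_price: float, query_price: float) -> float:
    """One-off document pass plus one month of query embedding."""
    return (embedding_cost(doc_tokens, doc_price)
            + embedding_cost(query_tokens, query_price))


# Hypothetical workload, not a measurement.
DOC_TOKENS = 500_000_000
QUERY_TOKENS = 100_000_000

symmetric = first_month_cost(DOC_TOKENS, QUERY_TOKENS, PRICE_LARGE, PRICE_LARGE)
asymmetric = first_month_cost(DOC_TOKENS, QUERY_TOKENS, PRICE_LARGE, PRICE_LITE)

print(f"large for everything:      ${symmetric:.2f}")
print(f"large docs + lite queries: ${asymmetric:.2f}")
```

The document pass is paid once, so the gap between the two setups widens every month that queries keep arriving.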

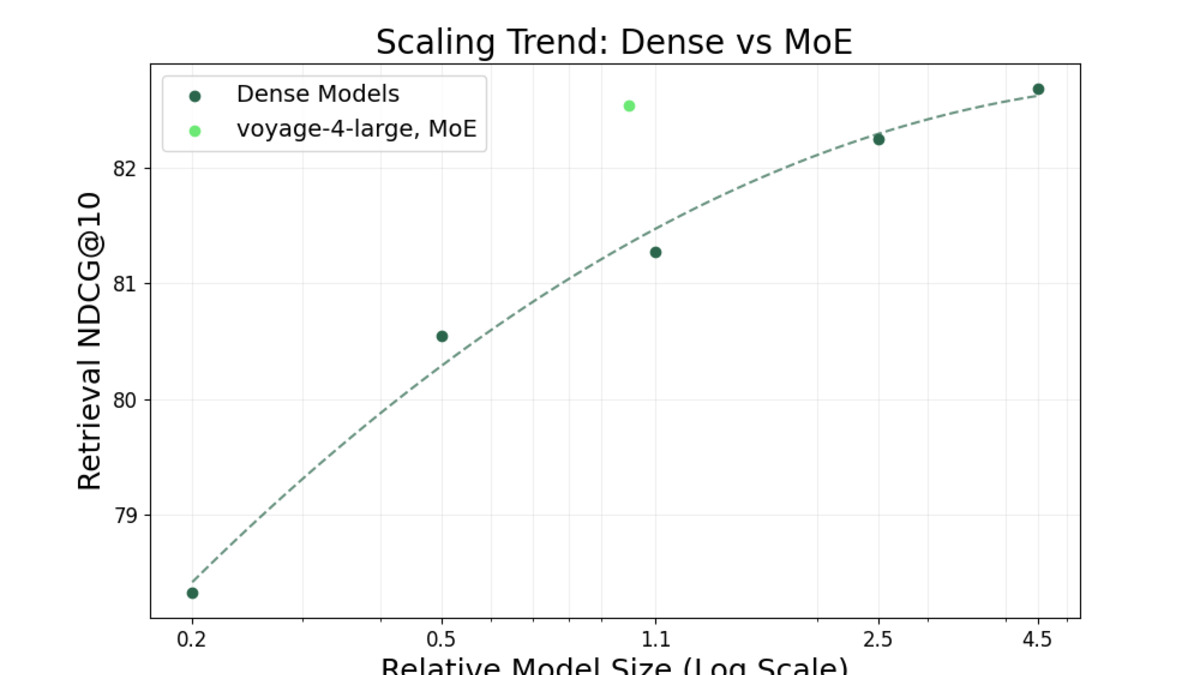

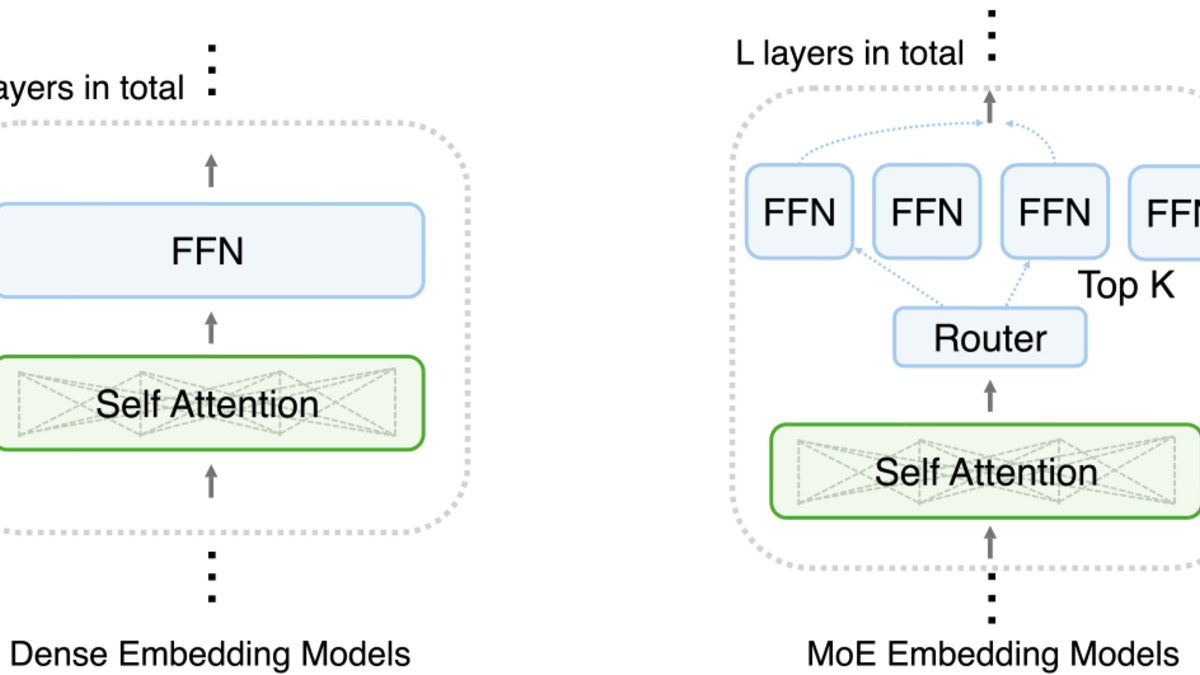

Voyage 4 uses a mixture-of-experts architecture, making it the first production embedding model family to do so. Voyage AI says serving costs are 40% lower than comparable dense models at the same quality tier.

The pricing tiers are unchanged from voyage-3.5: $0.02, $0.06, and $0.12/MTok from lite to large. What improved is quality at every tier. On Voyage AI's internal RTEB retrieval benchmark (29 datasets), voyage-4-large outperforms Gemini Embedding 001 by 8.2% and Cohere Embed 4 by 3.9%. I haven't been able to find a third-party MTEB submission for voyage-4-large yet, so treat those figures as vendor-reported for now.

Voyage AI's MoE architecture routes tokens through specialized expert FFN layers rather than a single dense network, cutting inference cost without degrading retrieval accuracy.

Source: blog.voyageai.com

Hidden Costs

Context Limits Still Matter at the Low End

Cohere Embed 3 English and Multilingual cap at 512 tokens per chunk - roughly 380 words. At anything resembling a full paragraph, you're chunking aggressively and multiplying your embedding call count. Cohere Embed 4 jumps to 128K tokens, which changes the architecture entirely: one API call per document instead of 10-50. If you're evaluating Cohere, skip v3 and go straight to v4 for anything other than very short text.
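The effect of the context cap on call count is simple ceiling division. The sketch below uses the token limits from the pricing table and a hypothetical 10,000-token document; it assumes chunks fill the whole window with no overlap, which is a best case, since real chunkers usually use smaller, overlapping chunks.

```python
import math

# Max input tokens per request, from the pricing table.
CONTEXT_LIMITS = {
    "Cohere Embed 3": 512,
    "text-embedding-3-small": 8_191,
    "voyage-4-lite": 32_000,
    "Cohere Embed 4": 128_000,
}


def chunks_needed(doc_tokens: int, max_tokens: int) -> int:
    """Minimum number of non-overlapping chunks needed to cover a document."""
    return math.ceil(doc_tokens / max_tokens)


DOC_TOKENS = 10_000  # hypothetical document length

for model, limit in CONTEXT_LIMITS.items():
    print(f"{model}: {chunks_needed(DOC_TOKENS, limit)} chunk(s)")
```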

OpenAI's 8,191-token limit is fine for most use cases. Voyage 4's 32K limit helps when you're dealing with long legal or technical documents where chunking degrades retrieval quality.

Dimension Count and Storage Costs

A 3,072-dimension embedding (OpenAI large, Google Gemini) takes 3x the vector storage of a 1,024-dimension model (Cohere, Voyage). At 100 million documents in Pinecone or Qdrant, stored as 4-byte floats, that's roughly 1.2 TB versus 400 GB of raw vectors. Storage costs can run $100-300/month at that scale - easily exceeding the embedding API cost difference between the large and small models.
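The storage figures follow from vectors times dimensions times bytes per component. A minimal sketch, assuming uncompressed float32 vectors and ignoring index overhead and metadata (both of which vary by vector database):

```python
BYTES_PER_FLOAT32 = 4


def vector_storage_bytes(num_vectors: int, dimensions: int,
                         bytes_per_component: int = BYTES_PER_FLOAT32) -> int:
    """Raw storage for dense vectors, excluding index structures and metadata."""
    return num_vectors * dimensions * bytes_per_component


NUM_DOCS = 100_000_000

large = vector_storage_bytes(NUM_DOCS, 3_072)
small = vector_storage_bytes(NUM_DOCS, 1_024)

print(f"3,072 dims: {large / 1e12:.2f} TB")
print(f"1,024 dims: {small / 1e9:.0f} GB")
```

Passing `bytes_per_component=1` models int8 quantization, if your database supports it, which cuts both figures by 4x.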

Batch Discounts Vary Widely

OpenAI offers 50% off through the batch API ($0.01/MTok for text-embedding-3-small). Voyage AI gives 33% off via their Files API. Mistral's Codestral Embed offers 50% batch discount. Google's batch pricing for gemini-embedding-001 cuts the cost in half to $0.075/MTok. Cohere doesn't offer batch embedding discounts on its token-priced tier. For any offline indexing pipeline, batch pricing is worth modeling before you commit to a provider.
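When modeling an offline indexing pipeline, the effective batch rate is the list price times one minus the discount. The sketch below applies the discounts quoted above to list prices from the pricing table; the tuple layout is just an illustrative structure.

```python
def batch_price(list_price_per_mtok: float, discount: float) -> float:
    """Effective per-MTok price after a fractional batch discount (0.5 = 50% off)."""
    return list_price_per_mtok * (1 - discount)


# (model, list price per MTok, batch discount) as quoted in this article.
MODELS = [
    ("text-embedding-3-small", 0.02, 0.50),
    ("text-embedding-3-large", 0.13, 0.50),
    ("voyage-4-lite", 0.02, 0.33),
    ("voyage-4", 0.06, 0.33),
    ("gemini-embedding-001", 0.15, 0.50),
    ("Cohere Embed 4", 0.12, 0.00),  # no batch discount on the token-priced tier
]

for name, price, discount in MODELS:
    print(f"{name}: ${batch_price(price, discount):.3f}/MTok batch")
```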

Re-embedding Costs

When you switch embedding models, you re-embed your entire corpus. At 1 billion tokens, that's $20 with text-embedding-3-small or $120 with Cohere Embed 4. The shared embedding space in Voyage 4 reduces this risk slightly - you can migrate between voyage-4 tiers without re-embedding documents. Cross-provider migration still requires a full re-index.
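A rough way to budget for a model switch is sketched below. It treats a move within a shared embedding space as needing no document re-embedding, as described above for the voyage-4 tiers, and charges the full corpus at the target model's list price otherwise. The function name and flag are illustrative.

```python
def reembed_cost(corpus_tokens: int, target_price_per_mtok: float,
                 shared_embedding_space: bool = False) -> float:
    """USD cost of re-embedding a corpus when switching to a new model."""
    if shared_embedding_space:
        # Existing document vectors stay valid, so nothing is re-embedded.
        return 0.0
    return corpus_tokens / 1_000_000 * target_price_per_mtok


CORPUS_TOKENS = 1_000_000_000  # the 1 billion token corpus from the text

print(f"to text-embedding-3-small: ${reembed_cost(CORPUS_TOKENS, 0.02):.2f}")
print(f"to Cohere Embed 4:         ${reembed_cost(CORPUS_TOKENS, 0.12):.2f}")
print(f"between voyage-4 tiers:    ${reembed_cost(CORPUS_TOKENS, 0.06, True):.2f}")
```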

AWS Ecosystem Lock-In

Amazon Titan Text Embeddings V2 at $0.02/MTok is competitive on price, but the model is only available through AWS Bedrock. It doesn't benchmark publicly on MTEB, and latency depends on your AWS region. If your inference stack already lives in AWS, it's worth testing. Otherwise, a provider with broader ecosystem support makes more sense.

Free Tier Comparison

| Provider | Free Offering | Included Allowance | Expiration |
|---|---|---|---|
| Voyage AI | Free credits | 200M tokens (all voyage-4 models) | None |
| Google (Gemini) | Free tier for both embedding models | 1,500 requests/day | None |
| Jina AI | Non-commercial API | Unlimited (research license) | None |
| OpenAI | $5 trial credits | ~250M tokens (3-small) | 3 months |
| Mistral | Free tier (rate-limited) | Limited RPM | None |
| Cohere | Trial API key | 1,000 calls/month | None |
| AWS Bedrock | Free tokens | 1M tokens | 12 months |

Voyage AI's 200M token free tier for all voyage-4 models is the most useful for prototyping. You can index a meaningful corpus before any billing starts. Google's free tier for gemini-embedding-001 is also generous for development, though rate-limited to 1,500 requests per day.

Price History

Mar 2026 - Google released Gemini Embedding 2 Preview, adding multimodal support (image at $0.45/MTok, audio at $6.50/MTok, video at $12.00/MTok) alongside text at $0.20/MTok. Gemini Embedding 001 remains the price-competitive text-only option at $0.15/MTok.

Jan 2026 - Voyage AI released the Voyage 4 family (voyage-4-large, voyage-4, voyage-4-lite, voyage-4-nano). Shared embedding space enables asymmetric retrieval. Pricing unchanged from voyage-3.5 tiers.

Jan 2026 - Cohere Embed 4 context window expanded to 128,000 tokens, up from the 8,192 listed in earlier documentation. Text pricing unchanged at $0.12/MTok.

Jan 2026 - Jina Embeddings v4 released under Qwen Research License - free via API for non-commercial use, commercial pricing available on request.

May 2025 - Mistral launched Codestral Embed at $0.15/MTok, specialized for code retrieval tasks with 32K context window.

Embedding prices have stayed remarkably stable compared to LLM API prices, where cuts of 50-80% over 18 months have been common. The main competition in embeddings is on capability - multimodal support, longer context windows, shared embedding spaces - rather than pure cost. Open-source models are where the real disruption is happening.

FAQ

Which embedding model is cheapest per million tokens?

Three models tie at $0.02/MTok: voyage-4-lite, OpenAI text-embedding-3-small, and Amazon Titan Text Embeddings V2. Of these, voyage-4-lite has a clearly longer context window (32K vs 8K).

What's the best embedding model for RAG in 2026?

For most RAG pipelines, voyage-4-lite ($0.02/MTok) or OpenAI text-embedding-3-small ($0.02/MTok) cover 80% of use cases. For highest retrieval accuracy, Google gemini-embedding-001 ($0.15/MTok) leads the commercial MTEB at 68.32.

Are open-source embedding models good enough?

Yes. NV-Embed-v2 scores 72.31 on English MTEB, higher than every commercial API. Microsoft Harrier-OSS-v1 reaches 74.3 on MTEB v2. The catch is GPU infrastructure. For teams with existing compute, open-source wins on quality and cost.

How much does it cost to embed 1 million documents?

Assuming 500 tokens per document (about 375 words): 500M total tokens. At $0.02/MTok (OpenAI small or voyage-4-lite), that's $10. At $0.15/MTok (Gemini Embedding 001), that's $75. Self-hosted NV-Embed-v2 comes in around $0.50 in compute.

Can I mix voyage-4-large for documents and voyage-4-lite for queries?

Yes. The Voyage 4 family uses a shared embedding space, so vectors from different tiers are compatible. You embed documents once with the large model and run queries through the cheaper lite model. Voyage AI reports this narrows the retrieval gap between the two.

Why doesn't Mistral Embed show up as the cheapest option anymore?

The previous version of this page listed Mistral Embed at $0.01/MTok. That was an error. Multiple sources confirm the actual price is $0.10/MTok. At that price, it's comparable to Cohere Embed 3 but with a longer context window.

✓ Last verified April 13, 2026