Anthropic Files for IPO, Eyes $1 Trillion Debut

Anthropic confidentially filed its S-1 with the SEC on June 1, targeting an October 2026 IPO at a near-$1 trillion valuation after a 5x revenue surge in six months.

Anthropic expands Project Glasswing to 150 organizations across 15 countries, with Claude Mythos Preview surfacing 10,000 high-severity vulnerabilities since April.

Trump signed a narrowed AI executive order giving the government 30 days of voluntary pre-release access to frontier models, after industry lobbying gutted the original 90-day mandatory proposal.

Three new papers expose how reasoning traces can be extracted from supposedly hidden model internals, where chain-of-thought hits an architectural ceiling, and how RL teaches models to know when to quit.

The Agent Control Standard defines open middleware hooks that let teams block, allow, or modify AI agent actions before they reach production systems.

Microsoft's Build 2026 keynote ships Project Polaris to replace GPT-4 in GitHub Copilot by August and declares Foundry Local generally available for zero-cloud on-device inference.

Nvidia's RTX Spark packs 20 Arm CPU cores and a Blackwell 2.0 GPU with 6,144 CUDA cores into a 45-80W Windows laptop chip, targeting Apple Silicon head-on.

DuckDuckGo's no-AI search page saw a threefold traffic spike after Google's I/O 2026 overhaul made AI-generated summaries mandatory with no opt-out.

Three papers: smarter CoT trimming cuts reasoning length by 50%, a plug-in context manager rescues frozen agents on long tasks, and a 960K-item clinical benchmark exposes LLM gaps in hospitals.

Learn how to use ChatGPT, Perplexity, Gemini, and Amazon's AI assistant to research products, compare prices, and spot fake reviews before you buy.

A practical beginner's guide to using AI tools to write a stronger resume, craft tailored cover letters, and prepare confidently for job interviews.

A step-by-step guide to uploading PDFs into ChatGPT, Claude, and Gemini, writing prompts that get useful summaries, and verifying results - no technical background needed.

Google's Antigravity 2.0 rewrites the platform from a browser IDE into a five-surface agent suite. The architecture is ambitious, but the launch was a mess.

Kore.ai's Artemis platform brings a compiled blueprint language and governance-first architecture to enterprise multiagent AI - ambitious, but Azure-only for now.

Gemini Spark is Google's first 24/7 cloud-persistent AI agent - ambitious, genuinely novel, and still rough around the privacy edges.

Current rankings of the best AI image generation models, including GPT Image 2, Nano Banana 2, Recraft V4.1, HiDream-O1-Image, FLUX 2, Midjourney v8.1, and Ideogram 3.0, scored on human preference, text rendering, and photorealism.

Rankings of the best AI models and agent frameworks on the GAIA benchmark, which tests real-world multi-step tasks requiring web browsing, tool use, and multi-hop reasoning.

Rankings of AI models by cost efficiency in May 2026, comparing performance per dollar across frontier and budget models. Updated with DeepSeek V4, GPT-5.5, and Kimi K2.6.

Cohere Command A+ is a 218B sparse MoE model with Apache 2.0 license, native citations, and a 128K context window that runs on just two H100 GPUs.

NVIDIA Cosmos 3 is an open physical AI omnimodel with Mixture-of-Transformers architecture that natively handles text, images, video, sound, and robot actions in a single 16B or 64B model.

Anthropic's May 2026 flagship model delivers 69.2% on SWE-bench Pro, dynamic parallel workflows in research preview, and Effort Control - all at $5/$25 pricing.

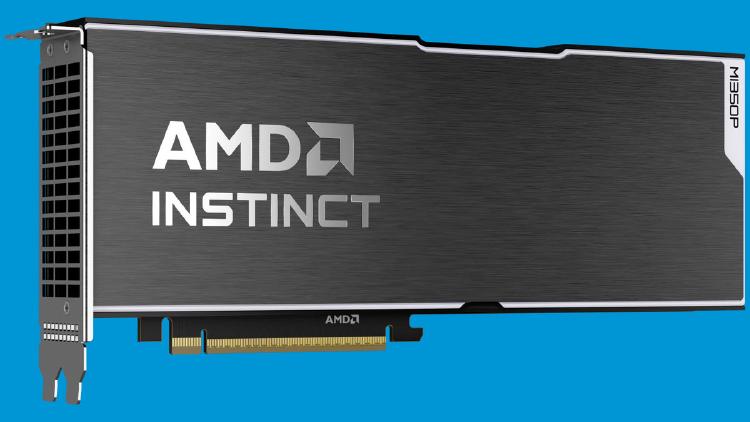

AMD Instinct MI350P brings CDNA 4 to a standard PCIe slot: 144GB HBM3E, 4 TB/s bandwidth, 2.3 PFLOPS MXFP8, and 600W passive cooling for air-cooled servers.

NVIDIA RTX Spark is a 20-core ARM + Blackwell GPU superchip delivering 1 petaFLOP FP4 and 128GB unified memory for AI-first Windows laptops and desktops.

Mistral rebrands Le Chat as Vibe, ships Work Mode with enterprise integrations, a VS Code extension, and remote coding agents powered by Mistral Medium 3.5 at 77.6% SWE-Bench.

Nvidia's Cosmos 3 is the first fully open omnimodel for physical AI, trained on 20 trillion tokens to teach robots and autonomous vehicles how to reason and act in the real world.

CVE-2026-48710 in Starlette lets a single malformed HTTP header bypass authentication on vLLM, LiteLLM, FastAPI, and every MCP server in production.

Google is shutting down free access to its open-source Gemini CLI on June 18, replacing it with the proprietary Antigravity CLI - after accepting 6,000+ community pull requests.

CNN filed a copyright and trademark lawsuit against Perplexity AI in federal court, becoming the first television network to take legal action against an AI search company over scraping 17,000+ stories.

ByteDance is weighing up to $70 billion in AI capital expenditure for 2026, nearly tripling its 2025 spend, while locking in offshore compute and a Qualcomm chip deal to sidestep US export controls as Doubao hits 345 million users.