TSMC Q1: $35.9B Record as AI Now Powers 61% of Revenue

TSMC posted $35.9B in Q1 2026 revenue, a 40.6% year-over-year jump, with AI and HPC now accounting for 61% of wafer sales - and CoWoS packaging still fully booked.

TSMC's Q1 2026 results don't leave much to interpret: the company made $35.9 billion in three months, 61% of it from chips destined for AI and high-performance compute workloads. That's not a blip - it's the largest share HPC has ever claimed in TSMC's revenue mix, up from just over 40% two years ago.

The earnings call landed on April 16. By every metric that matters for AI infrastructure, the numbers move in the same direction: up, and faster than in the previous quarter.

Key Q1 2026 Numbers

| Metric | Q1 2026 | Change |
|---|---|---|
| Revenue | $35.9B | +40.6% YoY |
| Net income | $18.1B | +58% YoY |
| Gross margin | 66.2% | +4.4pp YoY |
| HPC/AI share of revenue | 61% | segment revenue +20% QoQ |
| 3nm revenue share | 25% | N/A |
| Q2 2026 guidance | $39.0-40.2B | ~+10% QoQ |
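
The growth figures in the table can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the variable names are illustrative, and the implied year-ago revenue is a back-calculation from the stated growth rate, not a reported figure.

```python
# Figures from the table above, in billions of US dollars.
q1_revenue = 35.9
yoy_growth = 0.406
q2_guidance = (39.0, 40.2)

# Year-ago quarter implied by the stated growth rate (a back-calculation).
implied_q1_2025 = q1_revenue / (1 + yoy_growth)

# Sequential growth implied by the low end, midpoint, and high end of guidance.
low, high = q2_guidance
midpoint = (low + high) / 2
qoq = {
    name: value / q1_revenue - 1
    for name, value in [("low", low), ("mid", midpoint), ("high", high)]
}

print(f"Implied Q1 2025 revenue: ${implied_q1_2025:.1f}B")
for name, growth in qoq.items():
    print(f"Q2 guidance ({name}): {growth:+.1%} QoQ")
```

The midpoint of the guidance range works out to roughly 10% sequential growth, which matches the table's approximate figure.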

By the Numbers

Process Node Mix

Advanced nodes now dominate TSMC's books. The 3nm node alone represented 25% of Q1 wafer revenue, and 5nm added another 36%. Combined, those two nodes account for 61% of quarterly wafer revenue. Nodes at 7nm and below collectively contributed 74%.
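
The node percentages above also imply a 7nm share and a remainder for older nodes. A rough sketch of that breakdown follows; the dollar figures are an approximation that assumes the shares can be applied to the $35.9B total, which the article does not state, and the variable names are illustrative.

```python
Q1_REVENUE_B = 35.9  # total Q1 2026 revenue in $B, from the article

# Node shares in whole percentage points, as quoted in the article.
share_3nm = 25
share_5nm = 36
share_7nm_and_below = 74

# 7nm is whatever remains of the "7nm and below" bucket.
share_7nm = share_7nm_and_below - share_3nm - share_5nm
# Everything older than 7nm.
share_mature = 100 - share_7nm_and_below

shares = {
    "3nm": share_3nm,
    "5nm": share_5nm,
    "7nm": share_7nm,
    "older nodes": share_mature,
}

# Assumption: shares are applied to total revenue, which overstates each
# figure somewhat if the percentages are defined on wafer revenue only.
dollars = {node: Q1_REVENUE_B * pct / 100 for node, pct in shares.items()}

for node, pct in shares.items():
    print(f"{node:>11}: {pct:>2}%  ~${dollars[node]:.1f}B")
```

On these numbers, 7nm contributes the remaining 13 points of the advanced-node total, and nodes older than 7nm make up about a quarter of the mix.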

That concentration matters. Most of the AI chips running data centers today - NVIDIA Blackwell, AMD Instinct MI300X, Google's TPU v5 - are 3nm or 5nm parts. The revenue split is effectively a proxy for how much of TSMC's capacity is chasing AI demand versus everything else.

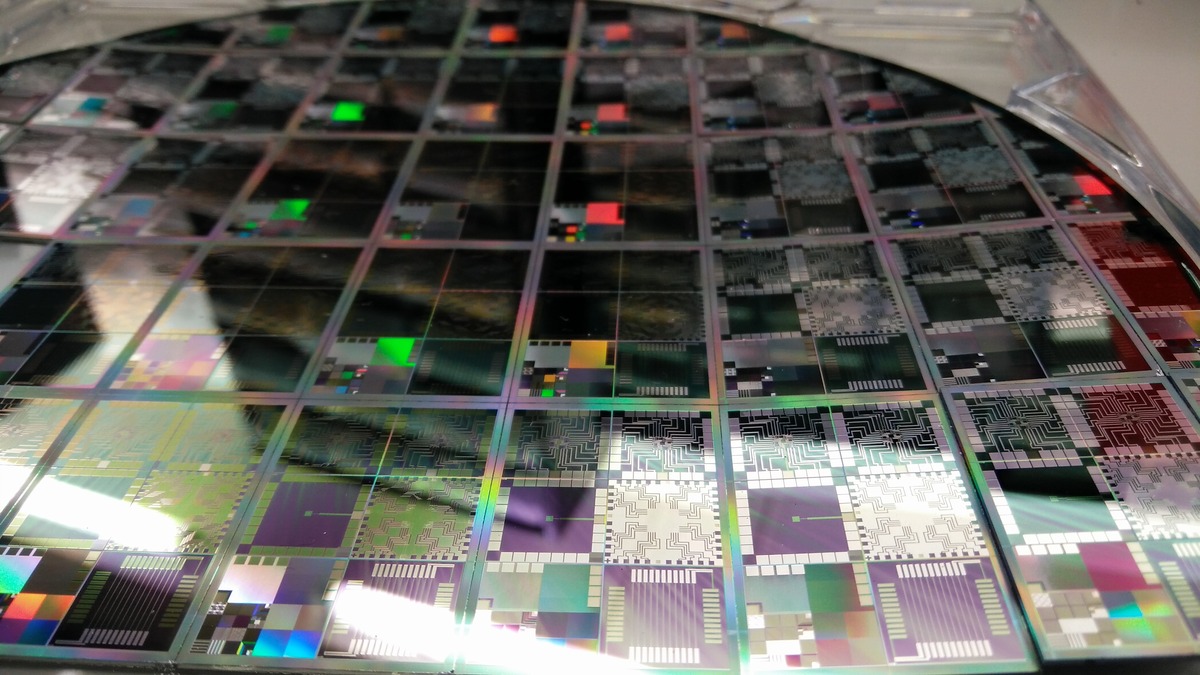

TSMC produces silicon wafers at scale across multiple nodes, but AI customers now dominate the advanced-node allocation.

Source: commons.wikimedia.org

End Market Reality

The HPC segment - in which TSMC groups AI accelerators, edge compute, and 5G infrastructure - grew 20% quarter-over-quarter and reached 61% of revenue. Smartphone chips came in second. Everything else, including automotive, IoT, and industrial, is a distant third.

CEO C.C. Wei didn't hedge when asked about demand durability on the earnings call:

"AI-related demand continues to be extremely robust. The shift from generative AI and the query mode to agentic AI and command and action mode is intensifying computational requirements. Our conviction in the multiyear AI megatrend remains high."

That shift Wei mentioned - from generative to agentic workloads - is worth sitting with. Agentic systems run longer inference chains, maintain state across calls, and execute tool use in loops. Token throughput requirements per user session go up markedly compared to a single-turn chatbot response. NVIDIA's Vera Rubin platform, announced at GTC, is specifically engineered around that workload profile - and every chip in it comes from TSMC.

CoWoS: The Chokepoint No Headline Mentions

Revenue and margin numbers are the story the press release leads with. The more interesting constraint is buried in capacity commentary: CoWoS advanced packaging remains fully booked.

CoWoS (Chip on Wafer on Substrate) is TSMC's high-bandwidth packaging technology. It's what physically connects HBM memory to GPU compute dies in products like the H100, H200, and Blackwell B200. Without CoWoS capacity, a finished GPU die sits in inventory. The packaging step is the actual production bottleneck for the most expensive chips in the AI stack.

NVIDIA's GB200 NVL72 system relies on TSMC's CoWoS packaging to connect HBM3e memory with Blackwell compute dies. Every unit ships only when TSMC can package it.

Source: nvidianews.nvidia.com

Who Gets In

TSMC's CoWoS capacity currently runs at 75,000 to 80,000 wafers per month, with a target of 120,000 to 130,000 wafers per month by the end of 2026. That sounds like relief until you look at demand: industry estimates put 2026 CoWoS demand at roughly 700,000 wafers total.

NVIDIA reportedly holds over 60% of total CoWoS allocation for 2025 through 2026. What's left is split among everyone else - AMD, Google, Amazon, Microsoft, and dozens of AI chip startups all competing for the same remaining 40%. Broadcom's record Q1 results earlier this quarter told a similar story from the custom ASIC side: AI chip demand is outrunning the physical infrastructure required to build and package silicon at scale.
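
To put the allocation split in wafer terms, here is a minimal sketch. It assumes NVIDIA's share is exactly 60% (the article says "over 60%"), that the share holds as capacity grows, and that the end-of-2026 target is a monthly rate; the `split` helper is hypothetical.

```python
# Monthly CoWoS capacity ranges quoted in the article, in wafers per month.
# Assumption: the end-of-2026 target is also a monthly rate.
capacity_now = (75_000, 80_000)
capacity_target = (120_000, 130_000)

# Assumption: NVIDIA's share is exactly 60% and stays constant over time.
NVIDIA_SHARE_PCT = 60


def split(capacity: int, share_pct: int = NVIDIA_SHARE_PCT) -> tuple[int, int]:
    """Return (NVIDIA wafers, everyone-else wafers) per month."""
    nvidia = capacity * share_pct // 100
    return nvidia, capacity - nvidia


for label, (low, high) in [
    ("current", capacity_now),
    ("end-2026 target", capacity_target),
]:
    nvidia_low, others_low = split(low)
    nvidia_high, others_high = split(high)
    print(f"{label}: NVIDIA {nvidia_low:,}-{nvidia_high:,}, "
          f"others {others_low:,}-{others_high:,} wafers/month")
```

Under those assumptions, every other customer combined shares about 30,000 to 32,000 wafers per month today.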

What They Measured / What They Didn't

Agentic Demand Is Real and Accelerating

Wei's framing of the generative-to-agentic transition isn't marketing. It maps to something measurable in TSMC's order book. Agentic AI deployments are compute-heavier per user session than pure chatbot workloads, and cloud providers that scaled up on H100 capacity in 2024 are now assessing how quickly to cycle to Blackwell and beyond. TSMC's full-year guidance of over 30% revenue growth in dollar terms reflects those replacement and expansion cycles already locked in.

The 2nm node (N2) entered volume production in Q4 2025 at TSMC's Hsinchu and Kaohsiung fabs ahead of the original schedule. The N2P variant and the A16 process - optimized for HPC workloads with backside power delivery - are both scheduled for the second half of 2026. Several of the AI chips ordered today will land on those nodes.

Arizona and the Geopolitics Underneath

TSMC's Arizona Fab 1 has been shipping N4-class wafers since late 2024. Current production includes NVIDIA Blackwell chips. Fab 2, which will use 3nm, is now on track for equipment installation in Q3 2026 and volume production in H2 2027 - accelerated by a full year from the original plan.

TSMC processes silicon wafers through hundreds of steps before packaging. The Arizona facility now runs NVIDIA's Blackwell production at N4 yields comparable to Taiwan.

Source: commons.wikimedia.org

TSMC's CFO flagged a real headwind: the Middle East conflict is driving up prices for the specialty chemicals and gases that semiconductor fabs consume. That's expected to pressure margins in H2 2026, despite the otherwise strong guidance. The gross margin guidance for Q2 - 65.5% to 67.5% - is roughly flat with Q1. The company isn't predicting a compression yet, but the warning is there.

The $165 billion, six-fab Arizona commitment is partly industrial policy and partly hedge. TSMC controls roughly 72% of the global foundry market and 90% of advanced node capacity. Geographic concentration in Taiwan is a real supply chain risk, and U.S. customers - especially defense contractors and hyperscalers - have been pushing for domestic production for years.

Should You Care?

If you run inference at any scale, the short answer is yes.

The TSMC earnings aren't abstract finance news. They confirm that AI chip demand isn't slowing. They also confirm that CoWoS packaging is the actual constraint, not wafer production, and that capacity won't catch up to demand this year. If you're waiting for H200 or GB200 allocations, the queue isn't getting shorter. If you're assessing whether to lock in cloud GPU contracts now versus waiting for next-gen chips, TSMC's order book suggests the next generation is already oversubscribed before it ships.

The memory side of the stack has its own supply crunch, and NVIDIA's own record $68B quarterly revenue tells the same story from the demand side. TSMC's numbers are the supply-chain confirmation of what everyone already suspected: compute demand for AI is real, durable, and constrained by physics and packaging, not hype cycles.

The company projects AI chip revenue will grow at a 60% compound annual rate through 2029. Take that forecast with appropriate skepticism. But a company with 66% gross margins and a fully booked order book rarely misses on the low side.
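
For a sense of scale, the sketch below shows what a 60% compound annual rate multiplies to over several horizons. The article does not state the base year of the projection, so the number of years is left as a parameter; `compound_multiple` is an illustrative helper, not anything from TSMC's materials.

```python
def compound_multiple(rate: float, years: int) -> float:
    """Growth multiple after compounding `rate` annually for `years` years."""
    return (1 + rate) ** years


CAGR = 0.60  # compound annual growth rate quoted in the article

# Assumption: the base year is unknown, so show a range of horizons.
for years in range(1, 6):
    print(f"{years} year(s) at {CAGR:.0%}: {compound_multiple(CAGR, years):.2f}x")
```

Five years of compounding at that rate is a bit more than a tenfold increase, which is why the base year matters when judging the forecast.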

Sources: