Trump Orders Every Federal Agency to Stop Using Anthropic - Pentagon Brands Company a National Security Risk

President Trump directed all U.S. government agencies to immediately cease using Anthropic's technology after the company refused to drop AI safety guardrails for the Pentagon. Defense Secretary Hegseth designated Anthropic a supply chain risk to national security.

The Pentagon's deadline for Anthropic to drop its restrictions passed at 5:01 PM ET on Friday. Within the hour, President Trump posted to Truth Social, and the most consequential government action against an AI company in history was set in motion.

Trump directed "EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic's technology." Defense Secretary Pete Hegseth then designated Anthropic a "Supply-Chain Risk to National Security" - a classification normally reserved for firms linked to foreign adversaries like China and Russia. The Pentagon has six months to transition off Anthropic's systems. All other federal agencies must comply right away. Every military contractor, supplier, and partner is now barred from doing business with the company.

The dispute came down to two lines Anthropic would not cross: mass domestic surveillance of Americans, and fully autonomous weapons without human oversight.

Key Facts

| Detail | Value |
|---|---|
| Order | All federal agencies must immediately stop using Anthropic |
| Pentagon phaseout | 6 months to transition to alternative providers |
| Supply chain designation | Anthropic classified as national security supply chain risk |
| Contractor impact | All Pentagon contractors barred from Anthropic business |
| Contract at stake | $200 million DoD prototype contract |
| Anthropic valuation | $380 billion |
| Anthropic revenue | $14 billion annually |
| Replacement | xAI's Grok recently cleared for classified systems |

What Trump Said

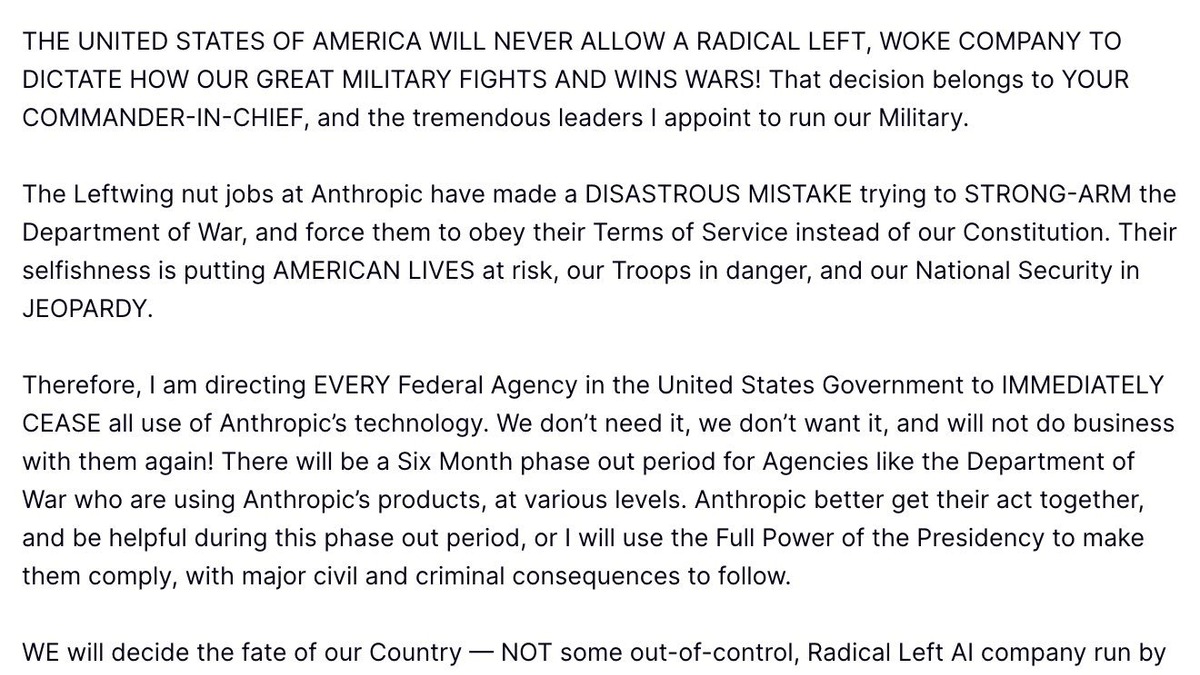

Trump's Truth Social post framed the ban as a national security imperative, calling Anthropic's leadership "Leftwing nut jobs" who made a "DISASTROUS MISTAKE trying to STRONG-ARM the Department of War."

Trump's Truth Social post on Friday evening directing all federal agencies to immediately cease using Anthropic's technology.

The post threatened "major civil and criminal consequences" if Anthropic does not cooperate during the phaseout period. Trump added: "We don't need it, we don't want it, and will not do business with them again!"

Hegseth's supply chain designation

Minutes later, Defense Secretary Pete Hegseth followed up with the supply chain risk designation - a move that extends the ban far beyond direct government use. Any company that holds a Pentagon contract, or works in the defense supply chain at any tier, must now prove it has no commercial relationship with Anthropic. For a company whose enterprise business depends on large corporations - many of which hold defense contracts - this is the mechanism that could cause real financial damage.

Hegseth claimed Anthropic delivered "a master class in arrogance and betrayal" and accused the company of attempting to "seize veto power over operational decisions."

Anthropic's Position

Anthropic's two red lines never changed throughout weeks of negotiation. CEO Dario Amodei published a statement on Anthropic's website repeating the company's position:

"We cannot in good conscience accede to their request."

The company drew its limits at two specific uses:

- Mass domestic surveillance - Anthropic supports lawful foreign intelligence and counterintelligence missions but opposes AI-powered mass surveillance of American citizens, citing "serious, novel risks to our fundamental liberties"

- Fully autonomous weapons - Anthropic distinguishes between partially autonomous systems (which it supports, including systems rolled out in Ukraine) and fully autonomous targeting without human oversight, which it says frontier AI systems "are simply not reliable enough" to handle safely

Amodei called the Pentagon's threats contradictory: they labeled Anthropic a security risk while also treating it as essential to national security. The company pledged to "enable a smooth transition to another provider, avoiding any disruption to ongoing military planning, operations, or other critical missions."

The nuclear scenario

The intensity of the dispute became clearer earlier in the week when the Washington Post reported that a hypothetical nuclear attack scenario was used during negotiations. Pentagon officials asked whether Claude would assist in retaliatory strike planning. Anthropic's answer - that this fell within acceptable defensive use but outside fully autonomous targeting - satisfied neither side and escalated the confrontation.

The Pentagon's Rhetoric

Pentagon Undersecretary for Research and Engineering Emil Michael made the conflict personal. On X, he called Amodei "a liar" with a "God complex," accusing the CEO of wanting "to personally control the U.S. military."

This is the same Emil Michael who previously served as Uber's SVP of Business, where he once suggested spending millions to dig up personal information on journalists critical of the company. His appointment to oversee Pentagon research drew scrutiny from defense policy circles, and his public attacks on Amodei represent a sharp departure from typical Pentagon-contractor relations.

The Pentagon designated Anthropic a supply chain risk - a classification normally reserved for adversaries like China and Russia.

The Industry Response

The ban did not happen in a vacuum. The broader AI industry responded in ways that complicate the administration's framing of this as Anthropic acting alone.

450+ employees signed an open letter

More than 450 employees at Google (about 400 of the signers) and OpenAI signed an open letter titled "We Will Not Be Divided." The letter accused the Department of Defense of attempting to coerce Anthropic and called on leadership at both companies to "put aside their differences and stand together to continue to refuse the Department of War's current demands for permission to use our models for domestic mass surveillance and autonomously killing people without human oversight."

Sam Altman backed Anthropic's red lines

OpenAI CEO Sam Altman told employees in an internal memo on Friday that OpenAI would draw the same red lines as Anthropic - opposing mass surveillance of Americans and fully autonomous weapons. OpenAI holds its own Pentagon contracts for unclassified systems and is in talks for classified access.

xAI steps in

Elon Musk's xAI signed an agreement earlier in the week to allow its Grok model into classified Pentagon systems - the same systems Anthropic is being phased out of. xAI agreed to the "all lawful purposes" standard that Anthropic rejected. Until now, Anthropic's Claude was the only AI model cleared for the Pentagon's most sensitive classified environments.

What It Means for Anthropic's Business

The $200 million Pentagon contract is a rounding error against Anthropic's $14 billion in annual revenue. The supply chain designation is not.

| Impact | Severity | Timeline |
|---|---|---|
| Direct federal revenue loss | Low - $200M of $14B | 6 months |
| Contractor cascade effect | High - defense supply chain companies must cut ties | Immediate |
| Enterprise customer risk | Medium - firms with Pentagon contracts may reassess | Months |
| IPO implications | Unknown - Anthropic planning 2026 IPO | Ongoing |
| Reputational signal | Mixed - praised by safety advocates, penalized by government | Immediate |

The real danger is the second-order effect. Defense contractors operate on thin margins and can't risk their Pentagon relationships. If major cloud providers, consulting firms, and enterprise software companies with defense contracts must prove they have no Anthropic relationship, the company's enterprise sales pipeline could narrow significantly.

Amodei has pushed back on this framing, noting that Anthropic's valuation and revenue have only grown since the dispute began. But the supply chain designation was announced hours ago. The market hasn't yet priced this in.

What to Watch

The six-month Pentagon phaseout creates several pressure points:

- DPA escalation - Trump threatened to use "the Full Power of the Presidency" to compel compliance. The Defense Production Act, a Korean War-era statute, could theoretically force Anthropic to provide an unrestricted version of Claude. No tech company has ever been subjected to a DPA order for software.

- xAI readiness - Grok was recently approved for classified systems, but it is unclear whether it can fully replace Claude's capabilities on the Pentagon's most sensitive intelligence and planning workflows within six months.

- OpenAI and Google positioning - Both hold unclassified Pentagon contracts. If they maintain the same red lines Altman described, the administration faces the same standoff with additional providers. If they quietly agree to broader terms, the industry solidarity fractures.

- IPO timing - Anthropic was planning to go public in 2026. A supply chain risk designation from the U.S. government is not the kind of headline you want in your S-1 filing.

- Contractor fallout - The coming weeks will reveal how aggressively Pentagon contracting officers enforce the supply chain designation against companies that use Claude in non-defense business lines.

Anthropic bet its government relationship on two principles it wouldn't compromise. The administration called the bet. The rest of the AI industry is now deciding whether to fold or match.

Sources:

- Trump tells government to stop using Anthropic's AI systems - NBC News

- President Trump bans Anthropic from use in government systems - NPR

- Trump orders federal agencies to stop using Anthropic's AI technology - CBS News

- Trump says he plans to order federal ban on Anthropic AI - Fox News

- Statement from Dario Amodei on our discussions with the Department of War - Anthropic

- Employees at Google and OpenAI support Anthropic's Pentagon stand in open letter - TechCrunch

- Google and OpenAI employees sign open letter in solidarity with Anthropic - Engadget

- xAI's Grok approved for Pentagon classified systems - Teslarati