You Do Not Need a Mac Mini to Run AI Agents. You Need a Computer That Makes API Calls

People are spending $2,200 on Mac Minis to run OpenClaw - an agent that calls Claude and OpenAI APIs remotely. The Mac Mini's GPU sits idle. Any old laptop, desktop, or even an Android phone can make HTTP requests just as well.

TL;DR

- The Mac Mini M4 craze created an actual Apple Store shortage in January, with delivery times stretching to six weeks

- The irony: people are buying them to run OpenClaw, which sends API calls to Claude or OpenAI for inference - the Mac Mini's GPU sits completely idle

- OpenClaw's creator himself said it needs 2 vCPUs and 4GB of RAM - the specs of a 2015 laptop

- Any old computer, decommissioned office PC, Raspberry Pi, or even an Android phone you aren't using can run an AI agent that calls cloud APIs

- Nobody is successfully running local models for agentic tasks anyway - our data shows zero shipped apps from the local LLM hardware community

- This is a $2,200 solution to a problem that does not exist

The $2,200 API Client

In January 2026, Apple Stores across the United States ran out of Mac Minis. Not every model, though: the shortage hit the M4 Pro configurations with 48GB and 64GB of unified memory, the ones that cost $1,999 to $2,399. Delivery times stretched to five and six weeks. Tom's Hardware attributed the shortage to people buying them to run AI.

The AI in question was OpenClaw, the open-source personal agent that went viral in early 2026. YouTube guides, Reddit threads, and tech influencer posts all converged on the same setup: buy a Mac Mini M4 Pro with maximum unified memory, install OpenClaw, and become an "AI power user."

Here is the part that none of those guides mentioned: OpenClaw doesn't run AI models on your hardware.

OpenClaw is an agent framework. It takes your instructions, breaks them into tasks, and sends API calls to Claude, OpenAI, or whatever model provider you configure. The actual inference - the computationally expensive part, the part that needs fast memory and powerful processors - happens on Anthropic's or OpenAI's servers. Your Mac Mini's job is to run a Node.js process, manage some WebSocket connections, and make HTTPS requests.
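To make that concrete, here is a minimal sketch of the core of what an agent host does on each model call: serialize a prompt to JSON and POST it over HTTPS. It targets Anthropic's Messages API endpoint. The model identifier is a placeholder, `build_request` and `send` are hypothetical helper names, and this is not OpenClaw's actual code, which will differ.

```python
import json
import urllib.request

# Anthropic's Messages API endpoint and the API version header it expects.
API_URL = "https://api.anthropic.com/v1/messages"
API_VERSION = "2023-06-01"
# Placeholder: substitute a model identifier your provider actually lists.
MODEL = "your-model-id"


def build_request(prompt: str, api_key: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Package one user turn as a JSON POST request (hypothetical helper)."""
    body = {
        "model": MODEL,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": API_VERSION,
            "content-type": "application/json",
        },
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 120.0) -> dict:
    """Send the request and decode the JSON reply; the inference runs remotely."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```

Calling `send(build_request("Summarize my inbox.", key))` with a real API key returns the provider's JSON reply. Everything computationally expensive happens after `urlopen` hands the bytes to the network; the host's CPU and memory barely register.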

Peter Steinberger, the creator of OpenClaw, had to publicly clarify that OpenClaw needs only 2 vCPUs and 4 GB of RAM. That's budget-Chromebook territory. That 64GB of unified memory sitting in your new Mac Mini? Idle. That M4 Pro's Neural Engine? Idle. The GPU? Idle. You spent $2,200 on a machine whose primary job is to send JSON over HTTPS.

A 2016 ThinkPad does that just fine.

The Unified Memory Misunderstanding

The hype around Apple Silicon unified memory makes sense in exactly one context: running large language models locally, where the model weights need to be loaded into memory and fed to the processor as fast as possible. The Mac Mini M4 Pro's 273 GB/s memory bandwidth is truly impressive for local inference on models up to about 70B parameters.
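A common back-of-the-envelope rule shows why bandwidth matters for local inference: when generating token by token, a dense model reads roughly all of its weights once per token, so memory bandwidth divided by weight size gives an upper bound on decode speed. The sketch below applies that rule of thumb to the 273 GB/s figure. The 4-bit quantization (about 0.5 bytes per parameter) is an assumption, the estimate ignores KV cache and other overhead, and real throughput lands below the bound.

```python
def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights in GB (ignores KV cache and overhead)."""
    return params_billion * bytes_per_param


def max_decode_tokens_per_s(bandwidth_gb_s: float, params_billion: float,
                            bytes_per_param: float) -> float:
    """Upper bound on tokens/s if every token needs one full pass over the weights."""
    return bandwidth_gb_s / weights_gb(params_billion, bytes_per_param)


M4_PRO_BANDWIDTH_GB_S = 273.0  # figure quoted above

# 70B parameters at ~4-bit quantization (~0.5 bytes/param) -> about 35 GB of weights.
size = weights_gb(70, 0.5)
bound = max_decode_tokens_per_s(M4_PRO_BANDWIDTH_GB_S, 70, 0.5)
print(f"{size:.0f} GB of weights, at most ~{bound:.1f} tokens/s")
# prints: 35 GB of weights, at most ~7.8 tokens/s
```

The same arithmetic explains the demand for 64GB configurations: at 16-bit precision (2 bytes per parameter) a 70B model needs about 140 GB and will not fit at all. None of it applies to OpenClaw, whose model weights sit in someone else's data center.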

But nobody buying a Mac Mini for OpenClaw is doing local inference. They're paying for cloud API access to Claude or GPT-5 and using the Mac Mini as a glorified thin client. The 64GB of unified memory serves exactly one function in this workflow: sitting there, unused, while the agent sends your prompt to Anthropic's servers and waits for the response.

If you wanted to actually run models locally, the economics are worse than they look. As we covered in detail, the best locally runnable model scores 46.8% on SWE-Bench Verified - roughly half of what Claude Opus achieves. Simon Willison, who built 110 tools in 2025, tried local models and found them unable to "handle Bash tool calls reliably enough to operate a coding agent." The gap between local and cloud models for agentic tasks isn't narrow. It's disqualifying.

So the Mac Mini buyers aren't running local models. They aren't planning to run local models. They're running a cloud-connected agent on hardware that could be replaced by anything with a network connection and a terminal.

What You Actually Need to Run an Agent

Here is what OpenClaw - and virtually every other AI agent framework - requires from its host machine:

| Requirement | OpenClaw Spec | What That Means |
|---|---|---|
| CPU | 2 vCPUs | Any processor from the last decade |
| RAM | 4 GB | Basically any computer still functioning |
| Storage | A few GB for the app and config | Trivial |
| Network | Stable internet connection | The actual bottleneck |
| OS | Linux, macOS, or WSL | Runs everywhere |

That's the entire list. There is no GPU requirement. There's no "minimum 48GB unified memory" requirement. There is no reason to buy new hardware at all.
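If you want to confirm that a spare machine clears the bar, a few lines of standard-library Python can check it against the 2 vCPU / 4 GB figures in the table. This is a hypothetical helper, not an OpenClaw tool. The memory query assumes POSIX `sysconf` (Linux and most Unix-like systems, not native Windows), and the HTTPS probe to `api.anthropic.com` is only an example endpoint; point it at whichever provider you use.

```python
import os
import socket
from typing import Optional

MIN_CPUS = 2
# Slightly under 4 because the OS reports a bit less than the installed RAM.
MIN_RAM_GIB = 3.5


def total_ram_gib() -> Optional[float]:
    """Total physical memory in GiB via POSIX sysconf, or None if unavailable."""
    try:
        return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 2**30
    except (AttributeError, ValueError, OSError):
        return None


def meets_requirements(cpus: Optional[int], ram_gib: Optional[float]) -> bool:
    """True if both the CPU count and the RAM clear the thresholds above."""
    return (cpus is not None and ram_gib is not None
            and cpus >= MIN_CPUS and ram_gib >= MIN_RAM_GIB)


def can_reach(host: str = "api.anthropic.com", port: int = 443, timeout: float = 5.0) -> bool:
    """True if a TCP connection to the API host succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    cpus, ram = os.cpu_count(), total_ram_gib()
    ram_text = f"{ram:.1f}" if ram is not None else "unknown"
    print(f"CPUs: {cpus}, RAM: {ram_text} GiB, hardware OK: {meets_requirements(cpus, ram)}")
    print(f"API host reachable: {can_reach()}")
```

Note what the script does not check: GPU, Neural Engine, or memory bandwidth. None of them appear in the requirements.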

The Old Hardware That Already Does This

Once you understand that running an AI agent means running a lightweight process that makes API calls, the hardware question changes completely. It's no longer "what can run a 70B model?" It's "what can run Node.js and connect to the internet?"

The answer is: basically everything.

The Laptop You Replaced a Few Years Ago

That 2019 Dell Latitude or 2018 ThinkPad T480 sitting in a drawer has a quad-core CPU, 8-16 GB of RAM, and runs Linux beautifully. Install Ubuntu Server, set up OpenClaw, and it's functionally identical to a Mac Mini for agent tasks. The API calls go to the same servers. The responses come back at the same speed. The only difference is that one costs $0 and the other costs $2,200.

The Office PC Getting Decommissioned

Businesses cycle out desktop PCs every 3-5 years. A 2021 Dell OptiPlex with an Intel i5 and 16GB of RAM runs agent workloads without breaking a sweat. Companies sell these by the pallet, and individual units go for $100-250 on eBay and asset liquidation sites. At around $220 apiece, the price of one Mac Mini buys ten of them: one to run your agent and nine left over.

A Raspberry Pi

A Raspberry Pi 5 costs $80. It has a quad-core ARM CPU, 8GB of RAM, and runs Linux. It can make HTTPS requests. It can run Node.js. It can host OpenClaw. If your agent's job is to call Claude's API and manage tasks, a Raspberry Pi does that on 5 watts of power. A Mac Mini M4 Pro draws 60 watts to do the same work.

The Pi 5 even runs small local models if you want to experiment - 15-plus tokens per second with optimized 1-3B parameter models. It won't replace Claude for coding tasks, but it's a perfectly functional agent host.

Your Old Android Phone

This one sounds like a joke. It isn't. Most mid-range and flagship Android phones from 2018 onward have multi-core ARM processors, 4-8 GB of RAM, and Wi-Fi. Apps like Termux provide a full Linux terminal on Android. People run Node.js, Python, and even lightweight containers on old phones.

Is it the ideal agent host? No. But it works. And the point isn't that you should run OpenClaw on a phone - it's that the hardware requirements are so minimal that you could, which should make you question why anyone thinks they need a $2,200 Mac Mini.

The Trend Machine

So why are people buying Mac Minis? The same reason they buy any trending tech product: social proof.

The cycle is familiar. An influencer posts a "My OpenClaw Setup" video featuring a Mac Mini. It gets views. Other influencers copy the format. Reddit threads ask "what Mac Mini config for OpenClaw?" Comments recommend maximum memory. Nobody asks why maximum memory. The recommendation becomes conventional wisdom. Apple sells out.

This isn't a conspiracy. It isn't Apple's marketing. It's the same Gear Acquisition Syndrome we documented in the local LLM community - the pattern where buying and configuring hardware becomes the hobby itself, replacing the thing the hardware was supposed to enable. On r/LocalLLaMA, posts about buying Mac Minis outnumber posts about shipped apps 50 to zero. Now the Mac Minis are being purchased to run agents that don't even use local models. The gear acquisition has outrun even the theoretical justification for the gear.

The CNBC profile of OpenClaw's rise captures the absurdity: the product's creator publicly told users to stop buying expensive hardware, and the buying accelerated anyway.

The E-Waste Math

The environmental angle is harder to dismiss than the financial one.

The world generated 62 million tonnes of e-waste in 2022. Only 22.3% was documented as formally collected and recycled. A Nature Computational Science study projects that aggressive AI adoption will add 2.5 million tonnes of e-waste per year by 2030. IEEE Spectrum and Scientific American have both covered the growing AI hardware waste crisis.

Every Mac Mini purchased just to make API calls represents raw materials, rare earth elements among them, mined, refined, and shipped across the planet to manufacture a device whose capabilities will never be used. Meanwhile, functional old hardware that could do the exact same job sits in drawers, closets, and e-waste bins.

The Nature study's key finding: refurbishing and reusing obsolete hardware can cut AI-related e-waste by 42%. In optimistic scenarios combining reuse with modular design and recycling, the reduction reaches 86%.

You don't reduce e-waste by buying new hardware to do a job that old hardware already handles. You reduce it by using what you have.

What You Should Actually Do

If you want to run AI agents (OpenClaw, Claude Code, etc.):

Use whatever computer you already have. Literally any computer from the last decade with a network connection will work. If you don't have a spare machine, an $80 Raspberry Pi 5 is more than enough. If you want something with a screen, a $150 used ThinkPad from eBay does the job beautifully.

If you want to run local models:

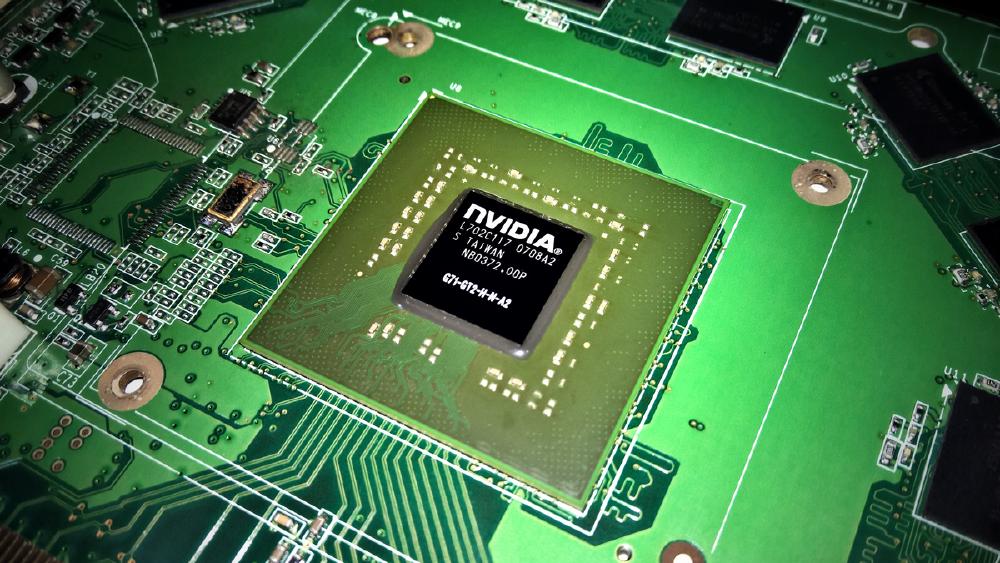

That's a different question with different hardware requirements, and our guide to running LLMs locally covers it in detail. But be honest with yourself about why: as we have documented, local models aren't yet competitive with cloud models for agentic tasks. If you want to learn and tinker, great - but a used desktop with an NVIDIA RTX 3090 outperforms a Mac Mini on inference speed for models that fit in its 24GB of VRAM, and usually for considerably less money.

If you want the best AI assistant available:

A Claude Pro or ChatGPT Plus subscription costs $20/month. That's $240/year. A Mac Mini M4 Pro with 64GB is $2,200. You'd need to subscribe for over nine years to spend the same amount - and the cloud model will get better every month while the Mac Mini depreciates.
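The break-even arithmetic is simple enough to check directly. This sketch uses only the figures in the paragraph above and ignores taxes, resale value, and pay-per-use API charges (which an OpenClaw setup on a Mac Mini would incur anyway).

```python
MAC_MINI_PRICE = 2200        # M4 Pro with 64GB, as quoted above
SUBSCRIPTION_PER_MONTH = 20  # Claude Pro or ChatGPT Plus


def break_even_years(hardware_price: float, monthly_fee: float) -> float:
    """Years of subscription that add up to the hardware's up-front price."""
    return hardware_price / (monthly_fee * 12)


years = break_even_years(MAC_MINI_PRICE, SUBSCRIPTION_PER_MONTH)
print(f"{years:.1f} years")  # 2200 / 240, prints: 9.2 years
```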

The Mac Mini M4 is a good computer. It's a terrible AI investment. The agents don't need the hardware. The models don't run on the hardware. The only thing the hardware does is make HTTPS requests to someone else's servers - and your old laptop already does that just fine.

Sources

- Tom's Hardware - OpenClaw-Fueled Ordering Frenzy Creates Apple Mac Shortage

- CNBC - From Clawdbot to OpenClaw

- Nature Computational Science - E-Waste Challenges of Generative AI

- Scientific American - Generative AI Could Generate Millions More Tons of E-Waste by 2030

- IEEE Spectrum - Generative AI Has a Massive E-Waste Problem

- TheRoundup - E-Waste Statistics 2026

- Simon Willison - The Year in LLMs 2025

- Raspberry Pi 5

- Stratosphere - LLM Performance on Raspberry Pi 5

- XDA - Used RTX 3090 Remains the Value King for Local AI

- Apple Mac Mini M4 Specs
