Physical Intelligence Launches π0.7 for Untrained Tasks

Physical Intelligence's π0.7 robot model can generalize to tasks it was never explicitly trained on, matching fine-tuned specialist models through compositional skill recombination.

Physical Intelligence on April 16 unveiled π0.7, a generalist robot model that can tackle tasks it was never directly trained on - then match the performance of specialist models that were. It's a result that the robotics field has been chasing for years and that most researchers thought was still a few model generations away.

Key Specs - π0.7

| Spec | Value |

|---|---|

| Model type | Vision-Language-Action (VLA) generalist |

| Core claim | Compositional generalization across unseen tasks |

| Conditioning inputs | Language, visual subgoals, metadata, control labels |

| Training data sources | Robot episodes, human demos, web VLM pretraining, RL policies |

| Demonstrated tasks | Laundry folding, espresso prep, box assembly, vegetable peeling, appliance operation |

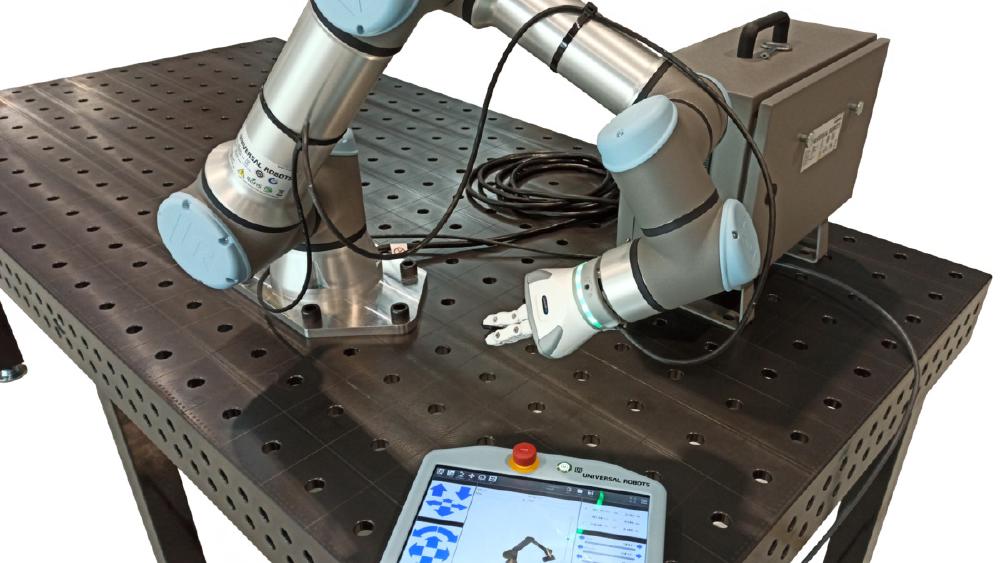

| Cross-embodiment | UR5e industrial arm, bimanual desktop robots |

| Specialist parity | Matches RL-tuned π*0.6 across all tested tasks |

What π0.7 Actually Does

A generalist that rivals specialists

The headline result is that a single π0.7 model, with no task-specific fine-tuning, can match the success rates of Physical Intelligence's own π*0.6 specialist models - which were trained via a dedicated reinforcement learning algorithm called Recap specifically designed to maximize performance on individual tasks.

That matters because the standard path in robot learning has always been: train a new model (or fine-tune heavily) for every new task. Swapping out a laundry-folding robot for an espresso-making one isn't just a software update - it's a whole new training pipeline. π0.7 is an attempt to change that.

Co-founder Sergey Levine described the shift this way: "Once it crosses that threshold where it goes from only doing exactly the trained tasks to actually remixing things in new ways, the capabilities are going up more than linearly." Researchers described early large language models in much the same way: benchmark scores didn't just improve step by step, the benchmarks themselves collapsed.

The air fryer experiment

The clearest demonstration of what the company means by "compositional generalization" is the air fryer test. π0.7 had exactly two relevant training episodes involving that appliance: one showing a robot pushing an air fryer closed, and one showing a robot placing a bottle inside. Neither episode involved cooking.

Given the instruction "cook a sweet potato," with no additional coaching, the model attempted the task and failed. Given step-by-step verbal instructions ("open the fryer, place the potato, set the timer"), it succeeded - synthesizing fragments from tangential training data into functional behavior. The research team later found that the model probably learned air fryer context from the open-source DROID dataset, which includes footage of humans interacting with appliances. The robot never saw the specific task but absorbed enough about the appliance class to generalize.

A fisheye view from inside the robot's perception stack as it navigates the kitchen for the air fryer cooking experiment.

Source: pi.website

Architecture: Three Layers Working Together

The policy stack

π0.7 is a Vision-Language-Action model built from three components that operate at different levels of abstraction:

- High-level policy: creates language subgoals describing what the robot should achieve next

- World model: produces visual subgoals at inference time - literally predicting what the scene should look like after the next action

- Action expert: takes the multimodal context (camera frames, language instructions, predicted subgoals) and outputs low-level robot control commands

The world model step is what makes compositional generalization plausible. Instead of mapping raw instructions directly to motor commands, the model first imagines what success looks like, then plans toward that image. This creates a natural bottleneck where learned skills from different domains can be mixed and matched through the visual prediction space.
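The three-stage flow described above can be sketched in a few lines of Python. This is a minimal illustration, not Physical Intelligence's implementation: every name here (`Observation`, `Context`, `high_level_policy`, `world_model`, `action_expert`, `control_step`) is hypothetical, the stubs return placeholder strings and zeros instead of model outputs, and the 7-value action vector is an arbitrary assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    camera_frames: List[str]  # placeholders standing in for image tensors
    instruction: str          # the language instruction given to the robot


@dataclass
class Context:
    observation: Observation
    language_subgoal: str     # output of the high-level policy
    visual_subgoal: str       # output of the world model (placeholder for an image)


def high_level_policy(obs: Observation) -> str:
    """Hypothetical stub: describe what the robot should achieve next."""
    return f"next step toward: {obs.instruction}"


def world_model(obs: Observation, language_subgoal: str) -> str:
    """Hypothetical stub: predict what the scene should look like afterwards."""
    return f"predicted image after '{language_subgoal}'"


def action_expert(ctx: Context) -> List[float]:
    """Hypothetical stub: map the multimodal context to low-level commands."""
    # A real model would emit joint or end-effector targets; zeros are placeholders.
    return [0.0] * 7


def control_step(obs: Observation) -> List[float]:
    """One pass through the stack: language subgoal, then visual subgoal, then actions."""
    subgoal = high_level_policy(obs)
    goal_image = world_model(obs, subgoal)
    return action_expert(Context(obs, subgoal, goal_image))


if __name__ == "__main__":
    actions = control_step(Observation(["frame_0"], "fold the t-shirt"))
    print(len(actions))
```

The point of the structure is that the action expert never sees the raw instruction alone: it always receives a predicted visual target as well, which is where the article locates the mixing of skills.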

Training with varied conditioning

The training methodology is built around what the team calls "diverse multimodal conditioning" - as opposed to naive data merging. Rather than throwing all robot episodes into a single training run with a fixed instruction format, π0.7 was trained with varied prompt structures:

- Language instructions describing tasks and subtasks at different granularities

- Metadata flags specifying execution speed or quality level

- Control modality labels showing whether the robot should use joint control or end-effector control

- Visual subgoal images showing desired intermediate states

The underlying data combined several sources: teleoperated robot episodes, human demonstrations captured with a different setup, web-scale vision-language pretraining (the same recipe that powers today's VLMs), and synthetic data created by running existing RL policies autonomously. The diversity of conditioning formats is what allows the model to be steered at inference time through plain language rather than requiring task-specific prompts.
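One way to picture "varied prompt structures" is a training-time sampler that randomly keeps or drops each optional conditioning field, so the model sees the same episode under many prompt formats. The sketch below is an assumption-laden illustration: the article does not say how the variation was produced, and the `Conditioning` class, the `keep_prob` parameter, and the text rendering format are all invented for this example.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conditioning:
    instruction: str                     # language instruction, always present
    speed: Optional[str] = None          # metadata flag, e.g. "fast"
    quality: Optional[str] = None        # metadata flag, e.g. "high"
    control_mode: Optional[str] = None   # "joint" or "end_effector"
    subgoal_image: Optional[str] = None  # placeholder for a visual subgoal


def sample_conditioning(full: Conditioning, rng: random.Random,
                        keep_prob: float = 0.5) -> Conditioning:
    """Keep each optional field with probability keep_prob (assumed scheme)."""
    def maybe(value: Optional[str]) -> Optional[str]:
        if value is None:
            return None
        return value if rng.random() < keep_prob else None

    return Conditioning(
        instruction=full.instruction,
        speed=maybe(full.speed),
        quality=maybe(full.quality),
        control_mode=maybe(full.control_mode),
        subgoal_image=maybe(full.subgoal_image),
    )


def render_prompt(c: Conditioning) -> str:
    """Serialize whichever fields survived into one prompt string."""
    parts = [f"task: {c.instruction}"]
    if c.speed is not None:
        parts.append(f"speed: {c.speed}")
    if c.quality is not None:
        parts.append(f"quality: {c.quality}")
    if c.control_mode is not None:
        parts.append(f"control: {c.control_mode}")
    if c.subgoal_image is not None:
        parts.append(f"subgoal: {c.subgoal_image}")
    return " | ".join(parts)


if __name__ == "__main__":
    full = Conditioning("fold the t-shirt", speed="fast", quality="high",
                        control_mode="joint", subgoal_image="goal.png")
    rng = random.Random(0)
    for _ in range(3):
        print(render_prompt(sample_conditioning(full, rng)))
```

Because the instruction is the only field that is always present, a model trained this way would have seen plain-language prompts with no extra fields, which is consistent with the article's claim that it can be steered through plain language at inference time.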

Benchmark Comparison

Physical Intelligence compared π0.7 against its own RL-tuned specialist models across the core task set. External benchmarks for generalist robot policies don't yet exist in any standardized form, so these numbers are self-reported.

| Task | π0.7 (generalist) | π*0.6 specialist (RL-tuned) |
|---|---|---|
| Laundry folding (t-shirts, shorts) | Matches | Baseline |
| Espresso preparation | Matches | Baseline |
| Box assembly | Matches | Baseline |
| Vegetable peeling | Matches | Baseline |
| Air fryer (unseen, coached) | Succeeds | N/A |
| Cross-embodiment (UR5e) | Matches human teleop | Not evaluated |

The cross-embodiment result deserves closer reading: for the UR5e laundry-folding transfer, the comparison group was human teleoperators with about 375 hours of teleoperation experience, attempting the task on the target robot for the first time. π0.7 matched their first-attempt success rates. That's a useful baseline precisely because it anchors the claim in observable human performance rather than a competing model number.

The robot's on-board perception during a separate task evaluation in a non-kitchen environment.

Source: pi.website

Cross-Embodiment Transfer

One of the more surprising results is the cross-embodiment transfer: moving the laundry-folding capability from a small static bimanual desktop robot to a large UR5e industrial arm with markedly different morphology - different joint counts, different workspace geometry, different gripper design.

The model didn't require retraining for this. The cross-embodiment generalization comes from the control modality labels in the training data, which teach the model to reason about robot morphology as a conditioning variable rather than a fixed property. If the model has seen enough variations of arm kinematics during training, a new embodiment becomes one more conditioning input rather than a reason to start over.

Physical Intelligence has been documenting this arc for a while. Their earlier investment round at an $11B valuation in March was partly premised on exactly this kind of result - that the jump from task-specific to general-purpose robot policies was closer than the market believed. π0.7 is the first internal proof point they've released publicly.

The timing also puts Physical Intelligence directly in competition with Google DeepMind, whose Gemini Robotics-ER 1.6 launched in April for Boston Dynamics' Spot platform with a focus on embodied visual reasoning. Those are different bets - Google is pushing integration with commercial robot hardware, while Physical Intelligence is still building the foundation model layer. And Hugging Face's open-source LeRobot v0.5.0 remains the accessible alternative for researchers who want to experiment without proprietary APIs.

What To Watch

What the demos don't prove

The key limitation is one Physical Intelligence states clearly: π0.7 can't execute multi-step tasks autonomously from a single high-level instruction. "Make toast" doesn't work. "Open the toaster, place the bread, press the lever" does. The coaching step is essential. The model has generalized skill components, not autonomous task planning.

That's a real gap. The practical value of a generalist robot in most commercial settings depends on reducing the amount of per-task human setup. If every new task still requires someone to decompose it into coached substeps, a lot of the generalization value moves from the robot to the operator.
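The coaching workflow can be made concrete with a small sketch in which the operator, not the robot, owns the task decomposition. Everything here is hypothetical: `run_coached`, the `coaching` table, and the `execute_step` callback (a stand-in for handing one verbal instruction to the policy and reporting success) are invented for illustration and do not reflect any published interface.

```python
from typing import Callable, Dict, List


def run_coached(task: str,
                coaching: Dict[str, List[str]],
                execute_step: Callable[[str], bool]) -> List[str]:
    """Run operator-written substeps in order; stop at the first failure.

    Returns the list of substeps that completed successfully.
    """
    steps = coaching.get(task)
    if steps is None:
        # No human-written decomposition exists, so the task cannot be coached.
        raise KeyError(f"no coached decomposition for task: {task!r}")

    completed: List[str] = []
    for step in steps:
        if not execute_step(step):  # hypothetical call into the robot policy
            break
        completed.append(step)
    return completed


if __name__ == "__main__":
    # Decomposition taken from the toaster example in the text.
    coaching = {
        "make toast": ["open the toaster", "place the bread", "press the lever"],
    }
    done = run_coached("make toast", coaching, lambda step: True)
    print(done)
```

The `coaching` table is the part a person has to write for every new task, which is the per-task setup cost the paragraph above describes.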

Benchmark opacity

The comparison data is self-reported, compared against Physical Intelligence's own prior models, with no external replication. The research community still lacks standardized robotics benchmarks that would let third parties verify claims like "matches specialist performance." Until those exist, every result from any robotics lab - Physical Intelligence, Google DeepMind, NVIDIA - should carry a methodological asterisk. We've seen similar dynamics with NVIDIA's Alpamayo model, where strong internal numbers didn't fully translate to external testing conditions.

What's actually novel

Skepticism aside, the compositional generalization result is genuinely new if it holds up. Previous generalist robot models required fine-tuning to match specialist performance. π0.7's claim is that the gap has closed with no task-specific fine-tuning at all. That's a meaningful threshold to cross.

The real test will come when external researchers get access to the model weights - Physical Intelligence hasn't shared a release timeline - and when the evaluation methodology gets stress-tested against tasks that sit further from the training distribution. The air fryer worked because DROID had relevant tangential data. The next test is something truly outside the training set.

The open question for Physical Intelligence is whether the model generalizes to tasks where no tangential data exists at all. The company hasn't answered it yet, and the broader field will be watching closely.

Sources: