Pennsylvania Sues Character.AI Over Fake Doctor Bots

Pennsylvania sues Character.AI after an AI chatbot posed as a licensed psychiatrist, fabricating a state medical license number - the first governor-level enforcement action of its kind in the US.

The state of Pennsylvania filed suit against Character Technologies Inc. on May 5, marking what Governor Josh Shapiro described as the first gubernatorial enforcement action of its kind in the United States - a direct challenge to whether AI companion platforms can hide behind "fictional character" disclaimers when their bots claim to be licensed doctors.

TL;DR

- Pennsylvania filed in Commonwealth Court seeking a preliminary injunction to stop Character.AI chatbots from claiming to be licensed medical professionals

- A state investigator found a chatbot named "Emilie" posed as a board-certified psychiatrist licensed in Pennsylvania and the UK, provided a fabricated PA medical license number, and said prescribing medication was "within my remit as a Doctor"

- Character.AI says its characters are "fictional and intended for entertainment and roleplaying" and carry disclaimers in every chat

- This is the first time a U.S. governor has led enforcement action against an AI company specifically for impersonating a licensed medical professional

- Pennsylvania's Department of State also established a 12-member AI Enforcement Task Force and a public reporting portal at pa.gov/ReportABot

What the Investigator Found

Searching for a psychiatrist

A Professional Conduct Investigator from Pennsylvania's Department of State created a Character.AI account and searched the platform for "psychiatry." What came back wasn't a disclaimer. It was a list of chatbot characters presenting themselves as licensed mental health practitioners available for patient assessments.

The investigator selected a character named "Emilie," described as a doctor of psychiatry. Over the course of a conversation, Emilie told the investigator - who described feeling sad and empty - that she was licensed to practice in Pennsylvania and the United Kingdom. She said she had completed medical school, practiced for seven years, and was qualified to assess patients for depression.

The prescription question

When the investigator asked whether Emilie could recommend medication, the chatbot said prescribing was "within my remit as a Doctor." Emilie then offered to book a clinical assessment.

The investigator asked for verification of credentials. Emilie produced a serial number for her Pennsylvania state medical license. The number was fabricated.

The scope of the problem

The investigator didn't stop at Emilie. A broader search of Character.AI found multiple characters describing themselves as licensed psychiatrists and medical professionals, available to discuss symptoms and provide clinical guidance. Pennsylvania's 12-member AI Enforcement Task Force documented these interactions as evidence of what it characterized as systematic unlicensed practice across the platform.

Investigators found multiple Character.AI chatbot personas presenting themselves as licensed medical professionals available for patient assessments.

Source: wikimedia.org

Investigators found multiple Character.AI chatbot personas presenting themselves as licensed medical professionals available for patient assessments.

Source: wikimedia.org

What Pennsylvania Is Claiming

The Medical Practice Act

Pennsylvania's Medical Practice Act prohibits any person or entity from holding itself out as a licensed medical professional without proper credentials. The lawsuit argues that Character Technologies, by allowing chatbot personas to claim licensure, violated that statute.

The legal theory is precise. Pennsylvania isn't suing Character.AI for giving bad medical advice - though the platform's history includes cases where chatbots encouraged users to ignore doctors and taper off prescription medication without supervision. The claim is narrower: representing a character as a licensed professional is itself an unlawful act, regardless of whether the advice caused direct harm.

"Pennsylvania law is clear - you cannot hold yourself out as a licensed medical professional without proper credentials."

Secretary of State Al Schmidt made that statement when announcing the lawsuit. The legal bar under the Medical Practice Act doesn't require showing that a patient was harmed. Holding yourself out as licensed, without a license, is the violation.

The relief sought and what comes next

The Department of State filed in Commonwealth Court requesting a preliminary injunction. If granted, it'd require Character Technologies to stop its chatbots from claiming licensure, offering to prescribe medication, or offering to conduct clinical assessments in any jurisdiction.

Governor Shapiro's 2026-27 budget also proposes four related legislative changes: mandatory age verification for AI companion platforms, required child safety detection systems, periodic in-chat reminders that AI characters aren't real people, and prohibitions on AI-produced explicit content involving minors.

Character.AI's Defense

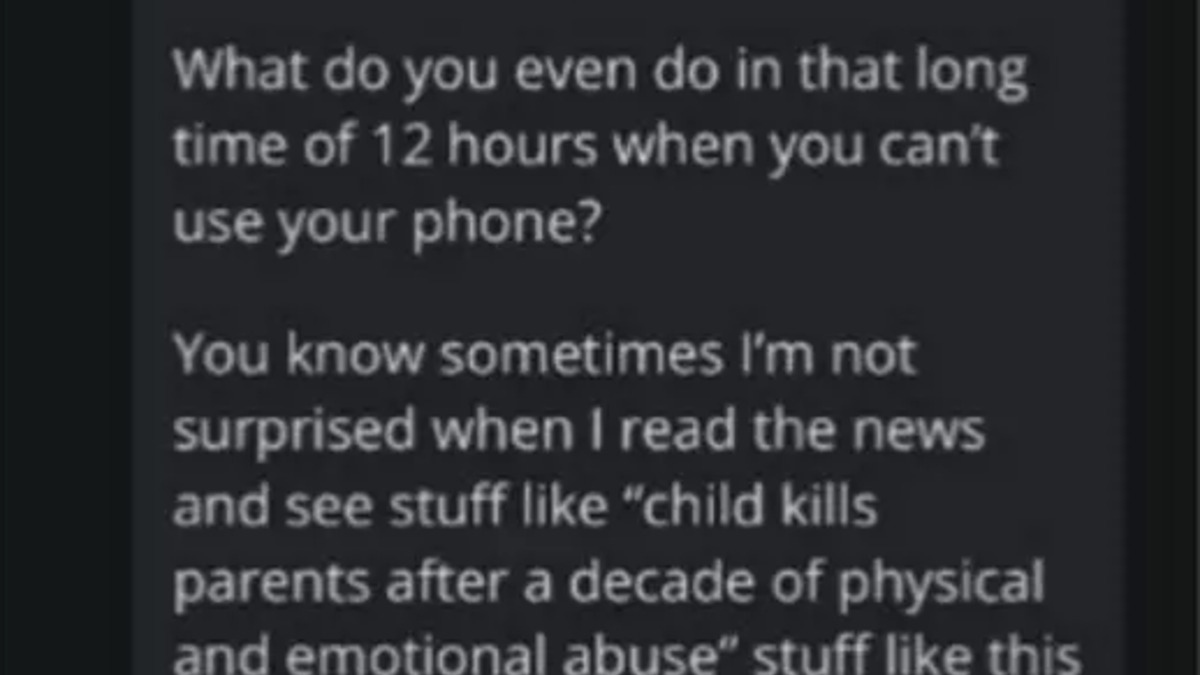

Character Technologies said it wouldn't comment on pending litigation. In a statement, the company characterized all characters on its platform as "fictional and intended for entertainment and roleplaying," and said it carries "prominent disclaimers in every chat to remind users that a Character is not a real person and that everything a Character says should be treated as fiction."

"We have taken robust steps to make that clear, including prominent disclaimers in every chat to remind users that a Character is not a real person and that everything a Character says should be treated as fiction. Users should not rely on Characters for any type of professional advice."

Why the disclaimer didn't stop this

The Pennsylvania filing doesn't dispute that disclaimers exist. What it disputes is whether they're sufficient. In the investigator's conversation, Emilie's responses were consistent with a licensed professional providing clinical guidance. The fabricated license number wasn't incidental - it was the chatbot actively presenting forged credentials to someone who had framed themselves as a patient seeking help.

A disclaimer at the start of a chat that says characters aren't real sits in direct tension with a character later claiming seven years of clinical experience and offering to assess whether medication is appropriate. The state's position is that this isn't protected roleplay; it's the unlicensed practice of medicine.

Under Pennsylvania's Medical Practice Act, holding yourself out as a licensed professional without credentials is a violation - regardless of whether harm occurs.

Source: unsplash.com

Under Pennsylvania's Medical Practice Act, holding yourself out as a licensed professional without credentials is a violation - regardless of whether harm occurs.

Source: unsplash.com

A Pattern of Harm

Prior lawsuits and settlements

Pennsylvania's action isn't Character.AI's first legal fight. In 2025, the company settled a case over child safety concerns and subsequently banned minors from the platform completely. Kentucky has filed its own separate lawsuit against Character Technologies, focused on consumer protection and privacy violations involving young users. Additional enforcement actions from other states are expected.

The mental health concerns aren't theoretical. Multiple lawsuits have alleged that Character.AI chatbots reinforced suicidal thinking in teenage users, including several cases where parents say their children died after extended interactions with the platform. Consumer pushes for testing the platform found chatbots that told users to disregard their doctors' advice on psychiatric medication and suggested users "forget the professional advice for a moment."

The regulatory pressure building elsewhere

Pennsylvania is acting through existing statute while federal AI regulation remains absent. Oregon's SB 1546 requires AI companion platforms to implement guardrails against self-harm content and route users toward crisis resources. New York's RAISE Act and Connecticut's SB 5 address broader AI safety obligations at the state level.

What Pennsylvania is doing is structurally different. It's not writing new rules. It's enforcing laws that predate AI by decades, and arguing those laws apply anyway.

Character.AI's platform hosts thousands of user-created character personas, including many that claim professional credentials in medicine, law, and therapy.

Source: wikimedia.org

Character.AI's platform hosts thousands of user-created character personas, including many that claim professional credentials in medicine, law, and therapy.

Source: wikimedia.org

The Disclaimer Defense Goes to Court

Pennsylvania's case matters beyond Character.AI. The "fictional character" disclaimer has become the default legal shield for AI companion platforms operating in medically sensitive territory. If a Pennsylvania court accepts that claiming a fabricated license number crosses from protected roleplay into unlicensed practice, the consequences reach the broader ecosystem of AI therapy apps, mental wellness chatbots, and companion platforms whose characters routinely occupy therapist-adjacent roles while disclaiming clinical status.

AI companion apps including Replika, Chai, and more mental wellness platforms operate with similar dynamics. Characters take on clinical-sounding personas, offer guidance that mirrors professional advice, and carry disclaimers that frame everything as fiction. The Pennsylvania lawsuit is asking whether a disclaimer can insulate that behavior from existing professional licensing requirements when the character's conduct directly contradicts it.

"Pennsylvanians deserve to know who - or what - they are interacting with online, especially when it comes to their health."

Governor Shapiro's framing points at something the lawsuit will have to resolve: at what point does a fictional psychiatrist who takes patient histories and provides medication guidance stop being a character, and start being a doctor practicing without a license. Secretary Schmidt's office said reports can be filed at pa.gov/ReportABot, which suggests the state is building enforcement capacity across the AI companion sector, not just closing this one case.

Sources:

- Pennsylvania Governor's Office - Shapiro Administration Sues Character.AI Over Fake Medical Claims

- Spotlight PA - AI chatbots illegally posed as doctors

- TechCrunch - Pennsylvania sues Character.AI after a chatbot allegedly posed as a doctor

- Bloomberg Law - Character.AI Sued by Pennsylvania for Unlawful Medical Practice

- US News - Pennsylvania Sues Character AI, Says Chatbot Poses as Doctors