OpenMythos Recasts Claude Mythos as Looped MoE Transformer

Kye Gomez open-sourced OpenMythos, a PyTorch reconstruction that hypothesizes Anthropic's Mythos is a Recurrent-Depth Transformer with Mixture-of-Experts routing and Multi-Latent Attention.

Kye Gomez, founder of the swarms agent framework, published OpenMythos on GitHub this week: a PyTorch-first "first-principles theoretical reconstruction" of Anthropic's unreleased Claude Mythos model. The repo does not contain Mythos weights. What it contains is a concrete architectural hypothesis about what Mythos actually is, wired up as runnable code.

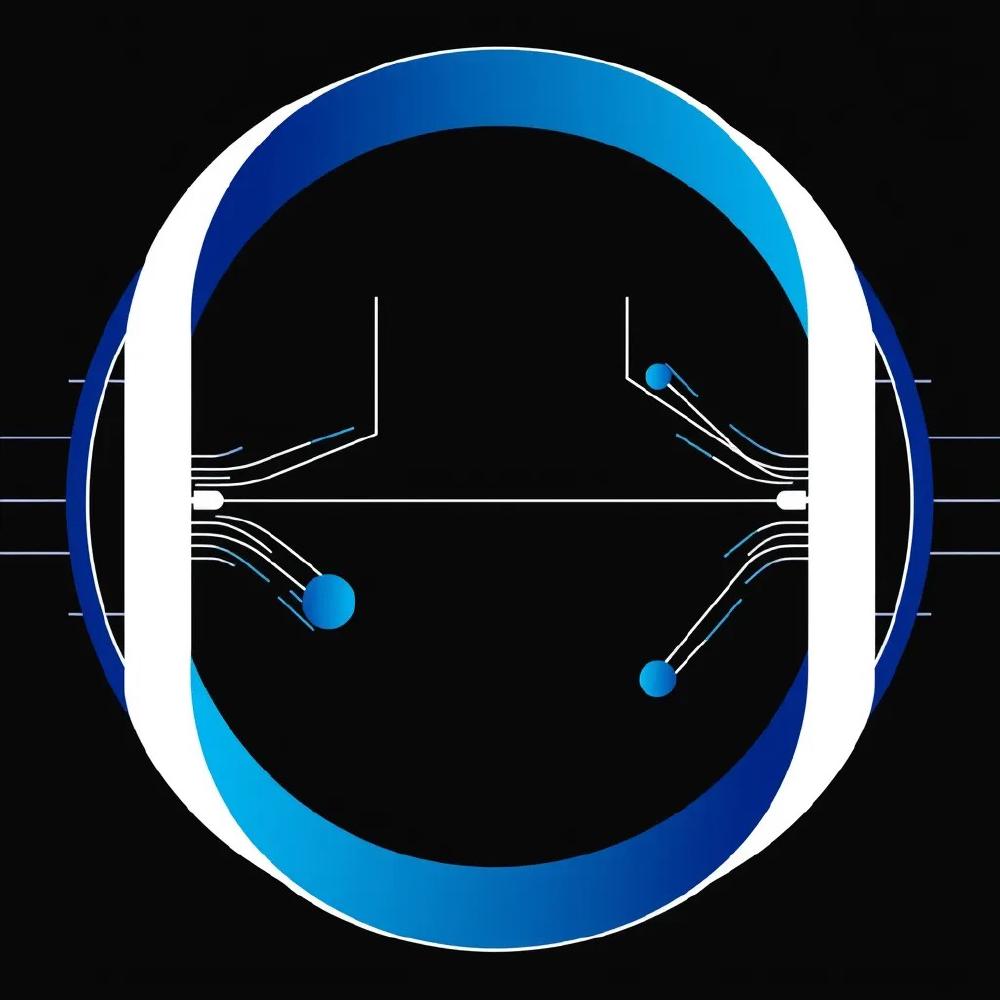

The hypothesis, in one sentence: Mythos is a Recurrent-Depth Transformer (RDT) with a Mixture-of-Experts feed-forward and Multi-Latent Attention, looping the same block up to sixteen times per forward pass.

TL;DR

- OpenMythos is an open-source PyTorch implementation of what Kye Gomez thinks Anthropic's Mythos architecture looks like; it contains no leaked weights

- Core claim: Mythos is a Recurrent-Depth Transformer (RDT) that loops one shared block up to 16 times per forward pass, with Mixture-of-Experts routing inside the loop

- Reasoning happens in continuous latent space rather than through emitted tokens, a design direction Meta FAIR published as COCONUT in 2024

- Attention defaults to DeepSeek-V2 style Multi-Latent Attention, claimed to cut KV cache memory 10-20x at production scale

- Stability mechanisms: LTI-constrained residual injection, Adaptive Computation Time halting, depth-wise LoRA adapters per loop step

- Parameter efficiency pitch: a 770M RDT matches a 1.3B standard transformer on the same training data, if the Parcae result (Prairie et al., 2026) holds

What the architecture actually proposes

The top-level shape is Prelude plus Recurrent Block plus Coda. The Prelude and Coda are ordinary transformer layers, each run once: the Prelude on the way in, encoding the input, and the Coda on the way out, turning the final loop state into output. Everything interesting happens in the middle.

Inside the Recurrent Block, a single TransformerBlock is applied iteratively for up to T=16 steps within one forward pass. The frozen encoded input e is re-injected at every step. The state and the input enter through linear time-invariant (LTI) terms, which are added to the block's own output:

h_{t+1} = A * h_t + B * e + Transformer(h_t, e)

The constraint that A has spectral radius below one (rho(A) < 1) is a stability condition borrowed straight from classical control theory. Without it, looping a block sixteen times is a reliable way to blow up activations. With it, the contribution of A decays geometrically across loop steps, and the injection B * e acts as a bounded driving term. As long as the Transformer term also stays bounded, the hidden state cannot grow without limit. This makes the loop about as close as a transformer architecture gets to a discrete-time dynamical system.
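A toy numerical sketch of why rho(A) < 1 matters, under loudly stated assumptions: A and B are diagonal, so the spectral radius is just the largest |a_i|. Transformer(h_t, e) is replaced by a hypothetical bounded stand-in (`toy_block`, an elementwise tanh). All values are invented for illustration and are not taken from OpenMythos. With rho(A) = 0.9 the state stays under a closed-form bound after sixteen steps. Raising one diagonal entry to 1.3 makes the same loop grow geometrically.

```python
import math

# Hypothetical toy values; OpenMythos's real dimensions and parameterization may differ.
E = [0.5, -1.0, 2.0]  # frozen encoded input e, re-injected at every loop step
B = [1.0, 1.0, 1.0]   # diagonal injection matrix B


def toy_block(h, e):
    # Stand-in for Transformer(h_t, e): tanh keeps every output strictly inside (-1, 1).
    return [math.tanh(hi + ei) for hi, ei in zip(h, e)]


def spectral_radius_diag(a_diag):
    # For a diagonal A, the eigenvalues are just the diagonal entries.
    return max(abs(a) for a in a_diag)


def run_loop(a_diag, steps=16):
    """Iterate h_{t+1} = A*h_t + B*e + toy_block(h_t, e) with diagonal A; return max |h_i|."""
    h = [0.0] * len(E)
    for _ in range(steps):
        mixed = toy_block(h, E)
        h = [a * hi + b * ei + m for a, hi, b, ei, m in zip(a_diag, h, B, E, mixed)]
    return max(abs(x) for x in h)


def state_bound(a_diag):
    # Valid only when every |a_i| < 1: then |h_i| < (|b_i * e_i| + 1) / (1 - |a_i|) at every step.
    return max((abs(b * e) + 1.0) / (1.0 - abs(a)) for a, b, e in zip(a_diag, B, E))


stable = [0.9, 0.5, -0.7]    # rho(A) = 0.9 < 1
unstable = [1.3, 0.5, -0.7]  # rho(A) = 1.3 > 1
print(spectral_radius_diag(stable), run_loop(stable), state_bound(stable))
print(spectral_radius_diag(unstable), run_loop(unstable))
```

The bound follows by induction. If |h_i| is below the bound at one step, then |a_i| times the bound, plus |b_i e_i|, plus a tanh term under 1 stays below it at the next step. That argument breaks as soon as |a_i| >= 1.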

The feed-forward inside the looped block is not a dense MLP. It is a Mixture-of-Experts layer modeled on DeepSeekMoE: a large pool of fine-grained routed experts plus a small set of always-active shared experts. Only a sparse top-K of the routed experts fire for any given token. The critical detail in OpenMythos is that the router chooses a different expert subset at each loop iteration. That means loop step three and loop step ten are not the same computation repeated. They activate different experts and, therefore, compute different functions against the same weights.
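The routing pattern can be sketched in plain Python. This is a minimal toy, not OpenMythos's code. The sizes, the sine-based router weights and the tanh "experts" are all hypothetical. The gating follows the DeepSeekMoE recipe as we understand it: take a softmax over all routed experts, then keep only the top-K scores as gates, with no renormalization. Shared experts run on every token. Because the router sees the current hidden state, a residual update between loop steps is enough to let it pick a different subset next time.

```python
import math

# Hypothetical toy sizes; DeepSeekMoE-style layers use many more, larger experts.
DIM, NUM_ROUTED, NUM_SHARED, TOP_K = 4, 8, 1, 2

# Deterministic toy weights so the example is reproducible without a random generator.
ROUTER = [[math.sin(3.0 * j + d + 1.0) for d in range(DIM)] for j in range(NUM_ROUTED)]
ROUTED_SCALES = [0.2 + 0.1 * j for j in range(NUM_ROUTED)]
SHARED_SCALES = [0.5] * NUM_SHARED


def expert(scale, x):
    # Toy expert: an elementwise nonlinearity; real experts are small feed-forward MLPs.
    return [math.tanh(scale * xi) for xi in x]


def moe_layer(h):
    """Shared experts always fire; only the TOP_K highest-scoring routed experts fire."""
    logits = [sum(w * x for w, x in zip(row, h)) for row in ROUTER]
    # Softmax over all routed experts, then keep the top-K scores as gates (no renormalization).
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    scores = [v / total for v in exps]
    top = sorted(range(NUM_ROUTED), key=lambda j: scores[j], reverse=True)[:TOP_K]
    out = [0.0] * DIM
    for s in SHARED_SCALES:
        out = [o + y for o, y in zip(out, expert(s, h))]
    for j in top:
        out = [o + scores[j] * y for o, y in zip(out, expert(ROUTED_SCALES[j], h))]
    return out, top, [scores[j] for j in top]


h = [1.0, -0.5, 0.25, 2.0]
for step in range(4):
    delta, chosen, gates = moe_layer(h)
    # Residual update: as h changes, the router is free to pick a different expert subset.
    h = [hi + di for hi, di in zip(h, delta)]
    print(step, sorted(chosen), [round(g, 3) for g in gates])
```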

Gomez's framing: MoE supplies domain breadth, the loop supplies reasoning depth. Neither alone gets you both.

Reasoning in latent space, not tokens

The most substantive design claim is that Mythos does its work in continuous latent space rather than via a chain of emitted tokens. Nothing is decoded between loop iterations. The model is not talking to itself in English the way a chain-of-thought trace does. It is iterating on a hidden state vector and only producing a token at the end.

This is not a Gomez invention. It is the same direction Meta FAIR published in late 2024 as Chain of Continuous Thought (COCONUT), and the looped-transformer framing has been formally analyzed for expressivity and convergence by Saunshi et al. (2025). If Mythos really is shaped this way, Anthropic would be shipping a production version of a research direction the rest of the field has spent two years studying in smaller settings.

The practical consequence is that reasoning depth becomes an inference-time knob rather than a parameter-count decision: run more loop iterations, do more thinking. OpenMythos's Adaptive Computation Time mechanism halts each position once a learned halting score crosses a threshold, so easy tokens exit early and hard tokens can consume more compute.
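For intuition, here is a per-position halting loop modeled on Graves-style Adaptive Computation Time. It is an assumption that OpenMythos follows this exact recipe. Halting probabilities accumulate until they reach 1 - EPS. The last step receives the remainder, so the step weights sum to 1, and the output is the weighted average of the visited states. The `refine` step and the `halt` unit are hypothetical scalar stand-ins for the looped block and its learned halting head.

```python
import math

EPS, MAX_STEPS = 0.01, 16  # hypothetical EPS; MAX_STEPS mirrors the article's T = 16


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def act_position(h0, step_fn, halt_fn):
    """Graves-style Adaptive Computation Time for a single position.

    Halting probabilities accumulate until they reach 1 - EPS (or MAX_STEPS is hit);
    the final step receives the remainder, so the step weights always sum to 1.
    Returns (weighted_output, steps_used).
    """
    h, total, out = h0, 0.0, 0.0
    for n in range(1, MAX_STEPS + 1):
        h = step_fn(h)
        p = halt_fn(h)
        if total + p >= 1.0 - EPS or n == MAX_STEPS:
            return out + (1.0 - total) * h, n
        total += p
        out += p * h


def refine(h):
    # Hypothetical loop step: nudges the scalar state a little each iteration.
    return h + 0.25


def halt(h):
    # Hypothetical "learned" halting unit: a fixed sigmoid of the current state.
    return sigmoid(4.0 * h - 2.0)


easy_out, easy_steps = act_position(2.0, refine, halt)   # confident after one step
hard_out, hard_steps = act_position(-1.0, refine, halt)  # needs several iterations
print(easy_steps, hard_steps)  # 1 7
```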

KV cache and efficiency math

Attention in OpenMythos defaults to the Multi-Latent Attention head from DeepSeek-V2, which caches a compressed low-rank KV latent instead of full K and V tensors. Gomez cites a 10 to 20 times reduction in KV cache memory at production scale. At long context lengths on dense production traffic, KV cache is the binding memory constraint long before weights are. If Anthropic is actually running a looped model with MLA, the per-request memory footprint at 200K context is considerably smaller than a standard transformer of comparable intelligence would demand.
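The KV-cache saving is easy to check with back-of-envelope arithmetic. Per token per layer, standard multi-head attention caches full K and V for every head, which is 2 * heads * head_dim values. DeepSeek-V2's MLA instead caches a compressed latent (512 values in that paper) plus a small decoupled RoPE key (64 values). The model shape below (32 layers, 32 heads of dimension 128, bf16 storage) is hypothetical and picked only to illustrate the arithmetic. Against a grouped-query baseline, which already caches fewer heads, the ratio would be smaller.

```python
def kv_cache_bytes(tokens, layers, elems_per_token_per_layer, bytes_per_elem=2):
    # Total cache = tokens x layers x cached values per token per layer x bytes per value (bf16 = 2).
    return tokens * layers * elems_per_token_per_layer * bytes_per_elem


# Hypothetical model shape, chosen only to illustrate the arithmetic.
LAYERS, HEADS, HEAD_DIM = 32, 32, 128
LATENT_DIM, ROPE_DIM = 512, 64  # MLA cache sizes reported for DeepSeek-V2
CONTEXT = 200_000

mha = 2 * HEADS * HEAD_DIM   # full K and V for every head: 8192 values
mla = LATENT_DIM + ROPE_DIM  # compressed KV latent plus decoupled RoPE key: 576 values

GIB = 1024 ** 3
mha_bytes = kv_cache_bytes(CONTEXT, LAYERS, mha)
mla_bytes = kv_cache_bytes(CONTEXT, LAYERS, mla)
print(f"MHA: {mha_bytes / GIB:.1f} GiB, MLA: {mla_bytes / GIB:.1f} GiB, ratio {mha / mla:.1f}x")
# MHA: 97.7 GiB, MLA: 6.9 GiB, ratio 14.2x
```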

Three stability mechanisms round out the design:

- LTI-constrained injection, as above, prevents loop-step divergence

- Adaptive Computation Time halting gives per-token early exit

- Depth-wise LoRA adapters give each of the T loop steps a small amount of per-iteration expressiveness without duplicating the base block weights
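The depth-wise LoRA idea in the last bullet can be sketched as one shared base weight W plus a per-step low-rank correction: y = W x + B_t (A_t x). This is a hedged illustration, not OpenMythos's implementation. The names are hypothetical and the usual alpha/r scaling factor is omitted. The parameter count compares sixteen untied d x d weights against one shared weight plus sixteen rank-16 adapters at a hypothetical width of 4096.

```python
def matvec(M, x):
    # Plain-Python matrix-vector product; M is a list of rows.
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


def looped_linear(W, lora_A, lora_B, x, t):
    """y = W x + B_t (A_t x): one shared base weight W plus a rank-r adapter for loop step t."""
    base = matvec(W, x)
    delta = matvec(lora_B[t], matvec(lora_A[t], x))
    return [b + d for b, d in zip(base, delta)]


def param_counts(d, r, steps):
    untied = steps * d * d                   # a separate d x d weight for every loop step
    shared_lora = d * d + steps * 2 * d * r  # one shared d x d weight + A_t (r x d), B_t (d x r) per step
    return untied, shared_lora


# Toy example: d = 2, rank r = 1, two loop steps; step 1's adapter is still zero (a no-op).
W = [[1.0, 0.0], [0.0, 1.0]]
lora_A = [[[1.0, 1.0]], [[0.0, 0.0]]]      # A_t has shape r x d
lora_B = [[[1.0], [0.0]], [[0.0], [0.0]]]  # B_t has shape d x r
x = [1.0, 2.0]
print(looped_linear(W, lora_A, lora_B, x, 0))  # [4.0, 2.0]
print(looped_linear(W, lora_A, lora_B, x, 1))  # [1.0, 2.0]
print(param_counts(d=4096, r=16, steps=16))    # (268435456, 18874368)
```

A zero adapter leaves the shared block untouched, which is why LoRA setups commonly initialize B_t to zero. Training can then carve out per-step behavior from there.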

Gomez cites Parcae, Prairie et al. (2026) for the claim that a 770M-parameter RDT matches a 1.3B-parameter standard transformer trained on the same data. If that result replicates, the scaling debate moves from "how many parameters did you train" to "how many loop steps can you afford at inference." That is a different cost structure, and one that favors customers willing to pay more for harder questions and less for easy ones.
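The cost-structure point can be made concrete with a common rule of thumb: a forward pass costs about 2 FLOPs per active parameter per token. In a looped model, the Prelude and Coda run once and the recurrent block runs once per loop step, so compute scales with loop count while stored parameters stay flat. The parameter counts below are hypothetical. The sketch ignores attention-score FLOPs that grow with context and does not reproduce the 770M-versus-1.3B result. With ACT, `loops` would be an average over tokens rather than a fixed number.

```python
def forward_flops_per_token(prelude, block, coda, loops):
    # Rule of thumb: ~2 FLOPs per active parameter per token
    # (attention-score FLOPs, which grow with context length, are ignored here).
    return 2 * (prelude + loops * block + coda)


def stored_params(prelude, block, coda):
    # The recurrent block's weights are stored once, no matter how many times it runs.
    return prelude + block + coda


# Hypothetical active-parameter counts, chosen only to show the shape of the trade-off.
PRELUDE, BLOCK, CODA = 50_000_000, 100_000_000, 50_000_000
for loops in (1, 4, 8, 16):
    gflops = forward_flops_per_token(PRELUDE, BLOCK, CODA, loops) / 1e9
    print(f"loops={loops:2d}  stored={stored_params(PRELUDE, BLOCK, CODA):,}  ~{gflops:.1f} GFLOPs/token")
```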

How seriously to take this

OpenMythos is not a leak. It is one well-informed developer's guess about an architecture Anthropic has said nothing concrete about in public. Anthropic has acknowledged Mythos exists, that it sits above Opus in capability, and that it is being handled carefully due to cybersecurity risk. They have not published any architectural detail. The original Mythos disclosure came through a CMS misconfiguration, not a paper.

Gomez has a track record that cuts both ways. The swarms framework has real adoption, but his public output has also drawn criticism in ML circles for repackaging other people's work without clean attribution. OpenMythos, to its credit, cites its building blocks explicitly and leans on widely published research (DeepSeek-V2 MLA, DeepSeekMoE, COCONUT, Saunshi et al.), rather than claiming novelty where there is none.

The code itself is the interesting artifact. Whether or not Mythos is actually an RDT+MoE stack, OpenMythos is a concrete reference implementation of a non-trivial architecture combining four distinct research threads: looped transformers, continuous-latent reasoning, fine-grained MoE routing, and compressed-KV attention. For teams trying to understand what a post-dense-transformer production architecture might plausibly look like, it is a useful thing to read, run, and stress-test, regardless of whether the Mythos label ever turns out to be earned.

What to watch next

The falsifiable version of Gomez's hypothesis is straightforward. If Anthropic eventually publishes a model card or paper for Mythos and the architecture is a Recurrent-Depth Transformer with MoE and MLA, OpenMythos becomes a piece of credible prior-art reverse engineering. If it is something else entirely, say a dense transformer with test-time compute via a different mechanism, OpenMythos becomes an instructive architectural exercise whose name does not age well.

Either way, the code compiles and runs today. That is more than most Mythos takes can claim.

Sources:

- OpenMythos on GitHub

- Training Large Language Models to Reason in a Continuous Latent Space (COCONUT)

- Reasoning with Latent Thoughts: On the Power of Looped Transformers (Saunshi et al.)

- DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model

- DeepSeekMoE: Towards Ultimate Expert Specialization

- Anthropic Mythos exposed via CMS misconfiguration