OpenClaw Passes The Fake-Star Audit - Mostly

We ran our fake-star methodology against OpenClaw, now at roughly 361,000 stars, and 10 ecosystem variants, sampling stargazer profiles and fork ratios. The main repo looks clean. Most clones look clean. One repo with 6,532 claimed stars has vanished.

A week ago we published an investigation into GitHub's fake star economy: $0.06-per-star marketplaces, VCs treating star counts as sourcing signals, and the fingerprints of manipulation we found across blockchain and AI repositories.

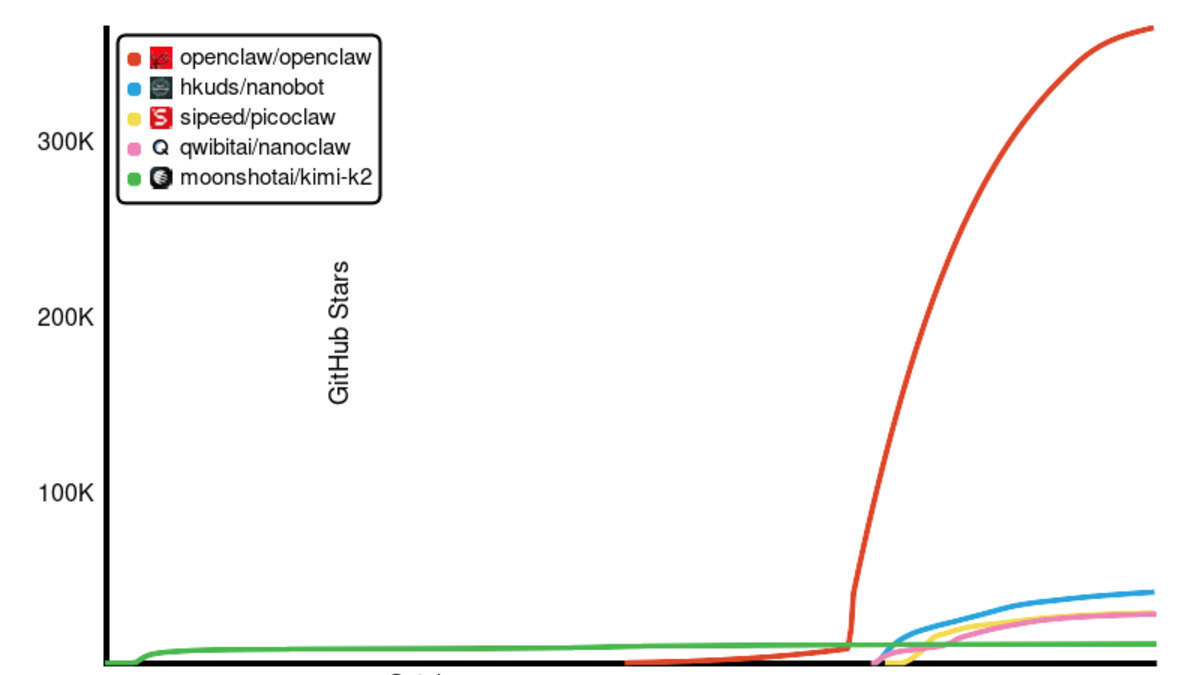

The obvious next question was whether the same methodology applies to the year's most-starred AI project. OpenClaw crossed 250,000 stars in early March and surpassed React in roughly 60 days. That's either the fastest organic adoption curve in open source history or the most expensive star-buying campaign in open source history. We ran the audit.

TL;DR

- OpenClaw's main repository has 360,891 stars, 73,617 forks, F/S ratio 0.204 - healthier than LangChain (0.155) and close to Flask (0.235)

- Profile sample shows 0% ghost accounts, 0% zero-repo accounts, 0% zero-follower accounts, median stargazer age of 4,451 days (more than 12 years)

- Nine of ten top variants pass basic manipulation heuristics, with F/S ratios between 0.076 and 0.451

- ClawRouter, claimed at 6,532 stars on the community index, returns 404. The repository has been deleted

- Moonshot AI's Kimi-K2 (branded as KimiClaw in the index) shows F/S 0.076 - below AutoGPT's 0.090 baseline and worth flagging

- First 100 starrers of the main OpenClaw repo include zero accounts under 30 days old, median GitHub user ID 9.9 million (accounts from roughly mid-2014)

Why audit OpenClaw

Three signals made it the obvious case. The growth curve (zero to 250,000 stars in 60 days) matches the exact pattern our original piece flagged as either genuine virality or professional star-buying. OpenClaw's creator Peter Steinberger was acqui-hired by OpenAI in February and the project moved to a foundation - the kind of deal context where inflated metrics carry the most commercial value. And openclawindex.pages.dev lists dozens of rewrites and adjacent tools claiming tens of thousands of stars, which is exactly where a cloned-and-pumped framework would hide.

We pulled the top 10 ecosystem repositories plus the canonical main repo, sampled fork and star counts via the GitHub API, and, for the two largest (OpenClaw and Nanobot), fetched 100-starrer samples plus 10 profile inspections each. Eight of the remaining nine got metadata-only pulls. ClawRouter failed during collection, for a reason we return to below.

The main repo

OpenClaw reports 360,891 stars and 73,617 forks on the canonical repository at github.com/openclaw/openclaw. That gives a fork-to-star ratio of 0.204, comfortably inside the organic band from our original baselines. Flask sits at 0.235. LangChain at 0.155. AutoGPT at 0.090. OpenClaw is closer to Flask than to LangChain.

| Metric | OpenClaw | Flask | LangChain | AutoGPT |
|---|---|---|---|---|
| Stars | 360,891 | 71,000 | 133,000 | 183,000 |
| Forks | 73,617 | 16,685 | 20,615 | 16,470 |
| Fork-to-star ratio | 0.204 | 0.235 | 0.155 | 0.090 |
| Watcher-to-star ratio | 0.0049 | 0.029 | 0.006 | 0.005 |
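
The fork-to-star figures in the table are plain division, and the first-pass flag is a single comparison. The sketch below recomputes the ratios from the star and fork counts quoted above and applies the threshold stated in the methodology (F/S below 0.05 on repos with 10,000+ stars). The class and function names are illustrative, not taken from the audit scripts.

```python
from dataclasses import dataclass

# Thresholds quoted in the article's methodology section.
FS_FLOOR = 0.05      # fork-to-star ratio below this is suspicious...
MIN_STARS = 10_000   # ...but only for repos with at least this many stars


@dataclass(frozen=True)
class RepoMeta:
    """Hypothetical container for the two counts the audit needs."""
    name: str
    stars: int
    forks: int

    @property
    def fork_to_star(self) -> float:
        # Guard against division by zero for empty repos.
        return self.forks / self.stars if self.stars else 0.0


def below_fs_floor(repo: RepoMeta) -> bool:
    """True when a large repo has implausibly few forks per star."""
    return repo.stars >= MIN_STARS and repo.fork_to_star < FS_FLOOR


# Star and fork counts as reported in the table above.
REPOS = [
    RepoMeta("OpenClaw", 360_891, 73_617),
    RepoMeta("Flask", 71_000, 16_685),
    RepoMeta("LangChain", 133_000, 20_615),
    RepoMeta("AutoGPT", 183_000, 16_470),
]

for repo in REPOS:
    print(f"{repo.name:<10} F/S={repo.fork_to_star:.3f} flagged={below_fs_floor(repo)}")
```

None of the four repos trips the flag. AutoGPT at 0.090 is the closest to the floor.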

The profile sample tells the same story. Ten random starrers from the first page, full profiles pulled. Every one has at least three public repos and seven followers. The median stargazer has 22 public repos, 53 followers, and a GitHub account 4,451 days old, more than twelve years. Older than LangChain's stargazer median (2,967 days) and within striking distance of Flask's (4,801 days).

Across the first 100 starrers, the median GitHub user ID is 9,907,838 - ID 10 million corresponds to accounts created around mid-2014. Only 5% of the sample have IDs above 200 million (roughly the cutoff for accounts from 2025 or later). Zero accounts in the sample were under 30 days old when they starred.

Paid campaigns use accounts with median ages of 400-1,200 days, 30-40% zero-repo rates, and 50-80% zero-follower rates. OpenClaw's first-wave starrers look like working developers whose accounts date back more than a decade, to around 2014.
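
The profile metrics above reduce to a few counts over the sampled accounts. This is a minimal sketch, not the audit's own script. It assumes a "ghost" is an account with zero public repos and zero followers (the article does not define the term), assumes each profile is a dict with `public_repos`, `followers`, and a precomputed `age_days`, and uses made-up sample rows rather than real stargazers. The two limits are the ones stated in the methodology.

```python
from statistics import median

# Detection thresholds quoted in the methodology section.
GHOST_RATE_LIMIT = 0.15
ZERO_FOLLOWER_LIMIT = 0.40


def sample_metrics(profiles):
    """Summarise a stargazer sample. Assumes the ghost definition noted above."""
    n = len(profiles)
    if n == 0:
        raise ValueError("empty sample")
    zero_repo = sum(1 for p in profiles if p["public_repos"] == 0)
    zero_follower = sum(1 for p in profiles if p["followers"] == 0)
    # Assumption: ghost = no public repos AND no followers.
    ghosts = sum(
        1 for p in profiles if p["public_repos"] == 0 and p["followers"] == 0
    )
    return {
        "ghost_rate": ghosts / n,
        "zero_repo_rate": zero_repo / n,
        "zero_follower_rate": zero_follower / n,
        "median_age_days": median(p["age_days"] for p in profiles),
    }


def trips_thresholds(metrics):
    """True when either profile-based threshold is exceeded."""
    return (
        metrics["ghost_rate"] > GHOST_RATE_LIMIT
        or metrics["zero_follower_rate"] > ZERO_FOLLOWER_LIMIT
    )


# Illustrative, invented rows: NOT data from the audit.
ILLUSTRATIVE_SAMPLE = [
    {"public_repos": 22, "followers": 53, "age_days": 4451},
    {"public_repos": 0, "followers": 0, "age_days": 400},
    {"public_repos": 3, "followers": 0, "age_days": 900},
    {"public_repos": 10, "followers": 7, "age_days": 3000},
]

metrics = sample_metrics(ILLUSTRATIVE_SAMPLE)
print(metrics, "flagged:", trips_thresholds(metrics))
```

A sample like OpenClaw's, with 0% ghosts and 0% zero-follower accounts, clears both limits by construction.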

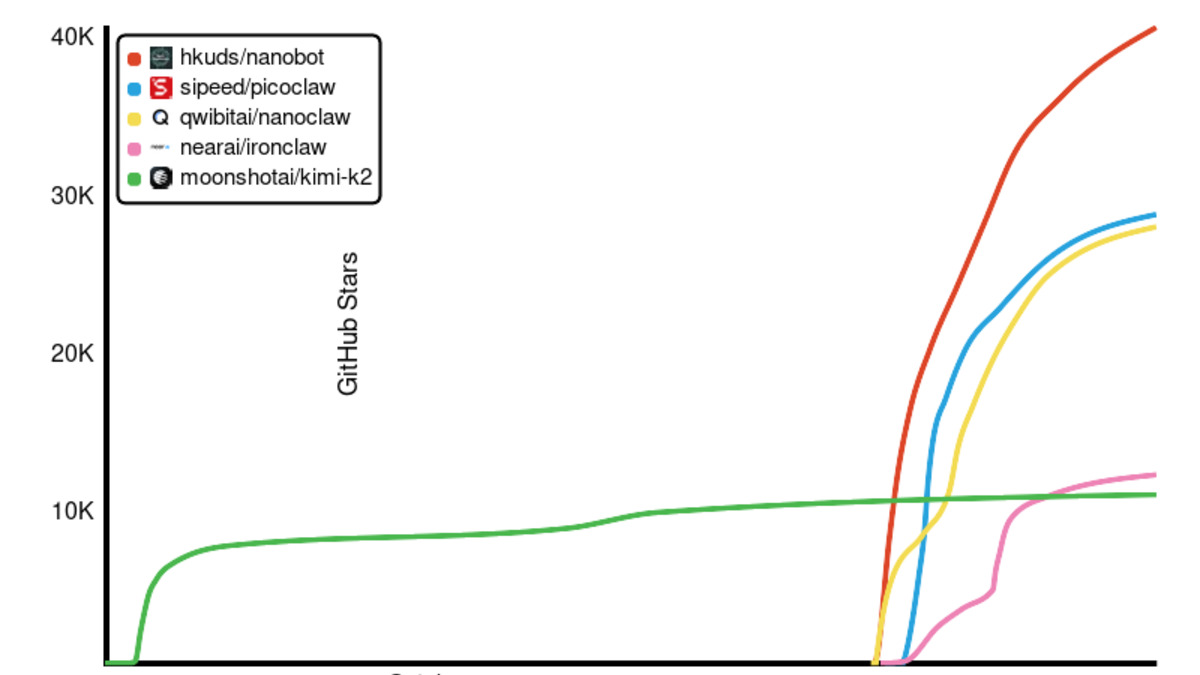

The ten variants

| Repo | Stars | Forks | F/S | Assessment |
|---|---|---|---|---|
| Nanobot (HKUDS) | 40,164 | 7,067 | 0.176 | Clean |
| PicoClaw (sipeed) | 28,347 | 4,047 | 0.143 | Clean |
| nanoclaw (qwibitai) | 27,565 | 12,428 | 0.451 | Unusually high |
| IronClaw (near.ai) | 11,884 | 1,362 | 0.115 | Clean |
| Kimi-K2 (Moonshot) | 10,633 | 813 | 0.076 | Low |
| NullClaw | 7,267 | 851 | 0.117 | Clean |
| ClawRouter | - | - | - | Deleted (404) |
| MimiClaw (memov.ai) | 5,217 | 766 | 0.147 | Clean |
| LobsterAI (NetEase) | 5,060 | 768 | 0.152 | Clean |
| Archestra | 3,587 | 469 | 0.131 | Clean |

Seven of the ten sit in the 0.115-0.176 fork-to-star band, the same range as LangChain, browser-use, crewAI, and dify. Nanobot from HKUDS launched February 1 and captured 40,000 stars in under three months - exactly the growth curve that usually warrants suspicion. But its 0.176 fork ratio matches browser-use, and its first-page starrers have median account ages of 2,796 days. That reads like a well-marketed launch from an established academic lab, not a purchased campaign.

nanoclaw from qwibitai runs the opposite direction at F/S 0.451, nearly twice Flask's 0.235. A fork count that high relative to stars usually means either a template being used as a scaffold, or a fork-farming script inflating activity metrics. The 708 open issues are a genuine-engagement signal (fork-farming produces near-zero issue volume), so the reading is scaffolding. We flag it anyway.

Kimi-K2 from Moonshot AI lands at F/S 0.076, the lowest in the ecosystem and just below AutoGPT's 0.090 baseline. Not below the 0.05 manipulation threshold, but worth a second look. Context cuts the other way: Kimi-K2 is a language model release, not an agent framework. Model repos tend to have lower fork ratios because few people fork a weights dump. LLaMA, Gemma, and Mistral show the same pattern. It landed on the OpenClaw index under "Hosting" because Kimi-K2 is a backend commonly plugged into OpenClaw deployments, which creates the apples-to-oranges comparison.

The repository that vanished

One unambiguous finding: ClawRouter at github.com/BlockRunAI/ClawRouter returns 404. The community index still lists it at 6,532 stars. The repository is no longer publicly reachable. The owner may have deleted it or made it private, GitHub may have removed it for terms-of-service violations, or the owner account itself may have been deleted.

We can't confirm which without GitHub cooperation, but the pattern matches the CMU ICSE 2026 study: 90.42% of repositories flagged as having fake-star campaigns were deleted by GitHub as of January 2025. Removal is the platform's standard enforcement response. ClawRouter's 404 isn't proof of manipulation, but it's the exact disposition GitHub uses for repos that fail integrity review. And if this was a false positive, nobody has restored it.

Methodology

Same pipeline as the original investigation, two layers. Fork and star metadata for all 11 targets via the GitHub REST search API. Profile sampling for OpenClaw and Nanobot: first 100 stargazers with starred_at timestamps, plus 10 random profile fetches each covering followers, repos, account age, bio.
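
For readers who want to reproduce the stargazer sample: GitHub's REST API returns `starred_at` timestamps on the stargazers endpoint only when the request asks for the `application/vnd.github.star+json` media type. The sketch below shows one way to build that request and flatten the response using only the standard library. The function names and the `User-Agent` string are illustrative, and this is not the audit's own script.

```python
import json
import urllib.request

API_ROOT = "https://api.github.com"
# Media type that makes the stargazers endpoint include starred_at.
STAR_MEDIA_TYPE = "application/vnd.github.star+json"


def build_stargazer_request(owner, repo, page=1, per_page=100, token=None):
    """Build (but do not send) a request for one page of stargazers."""
    url = f"{API_ROOT}/repos/{owner}/{repo}/stargazers?per_page={per_page}&page={page}"
    headers = {"Accept": STAR_MEDIA_TYPE, "User-Agent": "star-audit-sketch"}
    if token:
        # Optional personal access token for higher rate limits.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(url, headers=headers)


def parse_stargazers(payload):
    """Flatten the star+json response into login, numeric id, and timestamp."""
    return [
        {
            "login": entry["user"]["login"],
            "id": entry["user"]["id"],
            "starred_at": entry["starred_at"],
        }
        for entry in payload
    ]


def fetch_first_stargazers(owner, repo, token=None):
    """Fetch and parse the first page (up to 100 stargazers). Hits the network."""
    request = build_stargazer_request(owner, repo, token=token)
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_stargazers(json.load(response))
```

Calling `fetch_first_stargazers("openclaw", "openclaw")` would return up to 100 rows. Each sampled login then needs a separate profile request to get followers, repos, and account age.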

GitHub user IDs increase monotonically, so a piecewise map (ID 217 = Feb 2008, 50M = Mar 2019, 100M = Feb 2022, 150M = Oct 2023, 190M = Dec 2024) estimates account creation from IDs alone, accurate to within a few weeks. Detection thresholds match the original piece: F/S below 0.05 with 10,000+ stars, ghost rate above 15%, zero-follower rate above 40%. Scripts and raw data at _agents/research/openclaw-star-audit/.
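
The ID-to-date map can be sketched as linear interpolation between the anchor points listed above. Three things here are assumptions rather than details from the audit scripts: each anchor is pinned to the first of its month, interpolation is linear within a segment, and IDs beyond the last anchor are extrapolated along the final segment. With only these five anchors the first segment spans eleven years, so estimates for low IDs will be much coarser than a few weeks. A production map would need more anchor points.

```python
from bisect import bisect_right
from datetime import date, timedelta

# Anchor points from the article; day-of-month is assumed to be the 1st.
ANCHORS = [
    (217, date(2008, 2, 1)),
    (50_000_000, date(2019, 3, 1)),
    (100_000_000, date(2022, 2, 1)),
    (150_000_000, date(2023, 10, 1)),
    (190_000_000, date(2024, 12, 1)),
]
_ANCHOR_IDS = [anchor_id for anchor_id, _ in ANCHORS]


def estimate_created(user_id: int) -> date:
    """Estimate an account creation date from a numeric GitHub user ID."""
    if user_id < 1:
        raise ValueError("GitHub user IDs are positive integers")
    # Index of the segment's upper anchor; IDs outside the anchor range
    # reuse the nearest segment (an extrapolation assumption).
    upper = min(max(bisect_right(_ANCHOR_IDS, user_id), 1), len(ANCHORS) - 1)
    (id_lo, day_lo), (id_hi, day_hi) = ANCHORS[upper - 1], ANCHORS[upper]
    fraction = (user_id - id_lo) / (id_hi - id_lo)
    return day_lo + timedelta(days=round(fraction * (day_hi - day_lo).days))


for uid in (50_000_000, 125_000_000, 200_000_000):
    print(uid, estimate_created(uid).isoformat())
```

Because IDs increase monotonically, the estimate is monotone too, which is all the age-distribution check needs: it compares medians and tail fractions rather than exact dates.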

The blind spot

Two readings deserve equal weight. One: the fork-to-star ratio plus ghost-account sampling works as a first-pass filter, OpenClaw's stars are not manipulated, and the audit produced a correct negative. Two: OpenClaw's stars were bought with a technique sophisticated enough to evade our heuristics - aged accounts with populated profiles, stars distributed across weeks, forks generated at organic ratios.

The CMU StarScout study identified aged-account campaigns specifically designed to bypass the ghost-profile fingerprint. They cost more ($0.85 per star premium versus $0.06 budget) and take longer to assemble. Our methodology targets the cheap end of the market.

Buying 300,000 stars at premium prices would run about EUR 255,000 at GitHub24's EUR 0.85 rate (300,000 x 0.85). That is a commercial decision with a paper trail. OpenAI's due diligence during the February acqui-hire would have surfaced it. The foundation running the project publishes financial disclosures. Nothing in either points at a star purchase. Occam's razor: the stars are real.

VC firms sourcing deals via the ROSS Index and automated star-trackers should run something like this on every sub-$50M company they look at. Most don't. For OpenClaw, a project that looked too good to be true looks, on the data we have, like the real thing.

Sources:

- Our prior investigation: Inside GitHub's Fake Star Economy (Awesome Agents, April 13, 2026)

- He et al. - Six Million (Suspected) Fake Stars in GitHub (ICSE 2026)

- Dagster - Detecting Fake GitHub Stars

- Du et al. - Understanding Promotion-as-a-Service on GitHub (ACSAC 2020)

- OpenClaw ecosystem variant index

- Our OpenClaw review (Elena Marchetti, Feb 27, 2026)

- OpenClaw hits 250K GitHub stars, surpasses React (March 7, 2026)

- OpenClaw creator joins OpenAI (Feb 2026)