OpenAI Rebuilt Its Voice AI Stack for 900M Users

OpenAI published how they rearchitected their WebRTC stack to serve 900M weekly voice users on Kubernetes using a split relay and transceiver model.

OpenAI published a detailed engineering post on May 4 explaining how they rearchitected their WebRTC infrastructure to serve 900 million weekly active users without turning their Kubernetes cluster into an UDP port management problem.

The post, written by Yi Zhang and William McDonald (Members of Technical Staff), walks through why the standard WebRTC deployment model falls apart at scale and what they built instead. If you've tried to run real-time media services on Kubernetes and hit the same walls, this is worth reading closely.

TL;DR

- OpenAI uses WebRTC (built on Pion, written in Go) for ChatGPT voice and the Realtime API

- The conventional one-port-per-session model fails on Kubernetes at 900M-user scale

- Fix: a stateless relay routes packets using routing metadata encoded in the ICE username fragment

- All WebRTC session state (ICE, DTLS, SRTP) stays in one stateful transceiver service

- Geo-steered ingress via Cloudflare's Global Relay fleet puts the first hop close to users

- Justin Uberti (one of WebRTC's original architects) and Sean DuBois (Pion's creator) both work at OpenAI

Why WebRTC - and What It Handles

Most engineers associate WebRTC with browser video calls. OpenAI uses it as the underlying transport for everything real-time: ChatGPT voice, the Realtime API for external developers, and agentic workflows where a model needs to process audio while a user is still speaking.

The appeal is practical. WebRTC standardizes the infrastructure work that would otherwise need custom per-platform code: ICE for NAT traversal, DTLS and SRTP for encrypted transport, Opus for audio codec negotiation, and client-side jitter buffering plus echo cancellation. OpenAI builds on top of Pion, the Go-based open-source WebRTC library.

The post credits two names prominently: Sean DuBois (Pion's creator and maintainer) and Justin Uberti (one of WebRTC's original architects from its early Google days). Both now work at OpenAI. Mentioning this openly in an engineering post about a proprietary architecture change isn't typical, but it suggests these aren't just consultants - they're shaping the actual infrastructure decisions.

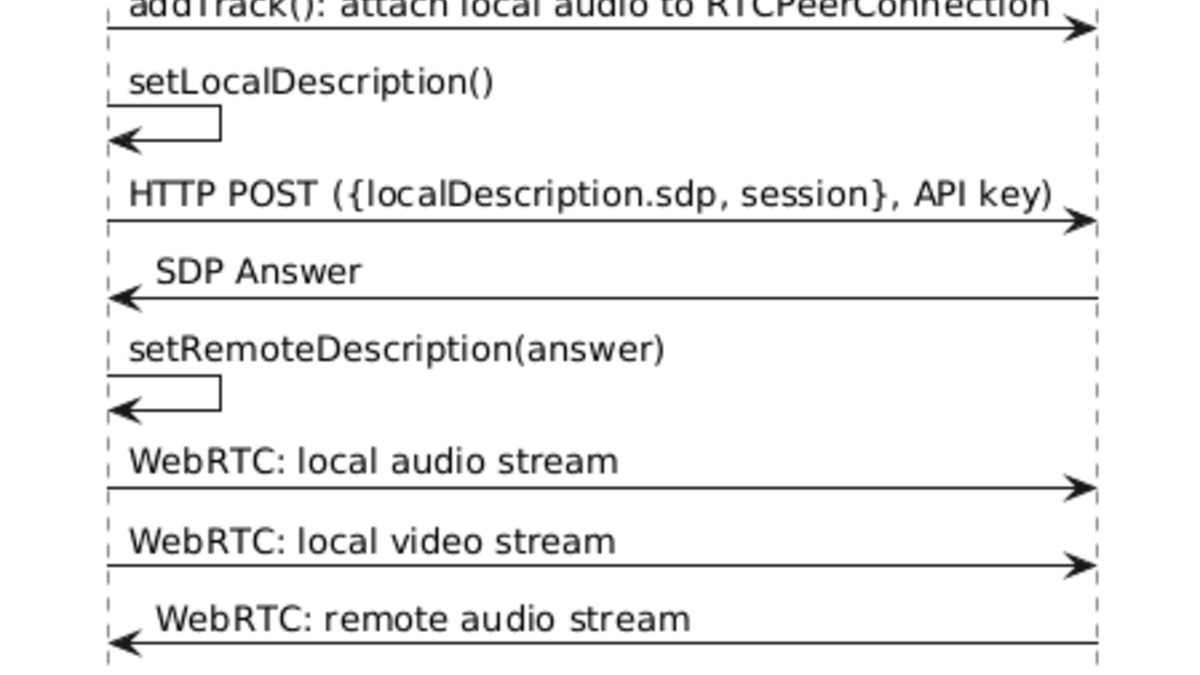

The WebRTC signaling and media flow between a browser client and OpenAI's backend: SDP exchange, ICE candidate negotiation, then continuous audio streaming.

Source: webrtchacks.com

The WebRTC signaling and media flow between a browser client and OpenAI's backend: SDP exchange, ICE candidate negotiation, then continuous audio streaming.

Source: webrtchacks.com

What Matters for Voice AI Specifically

For voice applications, the key property is that audio arrives as a continuous stream. A model can start transcribing, reasoning, calling tools, or generating speech while the user is still talking - instead of waiting for a complete audio upload. That difference separates conversational AI from push-to-talk. The gpt-realtime-mini model, which powers the speech-to-speech Realtime API, depends on this.

The blog post frames the infrastructure requirements clearly: global reach for 900M+ weekly active users, fast connection setup so speech can start immediately, and low, stable media round-trip time so turn-taking feels natural.

The Kubernetes Problem

The initial implementation was a single Go service using Pion that handled both signaling and media termination. It works. It's what currently runs ChatGPT voice and the Realtime API. The problem is scaling it.

Port Exhaustion

Standard WebRTC allocates one UDP port per session. At high concurrency, that means exposing tens of thousands of public UDP ports. Cloud load balancers aren't designed for this - each additional range adds complexity in load balancer config, health checking, firewall policy, and rollout safety. Large UDP port ranges are also hard to audit from a security standpoint.

Kubernetes makes this worse. Pods scale up, down, and reschedule constantly. Requiring each pod to reserve and advertise a stable large port range makes that elasticity impractical.

Single-port-per-server solves the port count on a single machine, but doesn't fix the problem across a distributed fleet. The first packet from a client can still land on the wrong pod.

State Stickiness

WebRTC session state isn't cheap to move. ICE connectivity checks, the DTLS handshake, SRTP encryption keys - all of these need to stay with the process that created the session. If a packet lands on a different process, setup fails or media breaks. This is why WebRTC is difficult to run in auto-scaling environments even after you solve the port problem.

The physical infrastructure behind voice AI at scale: distributed server racks across multiple geographic regions, connected by high-speed networking.

Source: unsplash.com

The physical infrastructure behind voice AI at scale: distributed server racks across multiple geographic regions, connected by high-speed networking.

Source: unsplash.com

The Solution: Split Relay and Transceiver

OpenAI's answer separates packet routing from protocol termination. A stateless relay handles the public UDP surface. A stateful transceiver owns the WebRTC session. They're distinct services with different scaling properties.

The comparison table from the post is worth reproducing:

| Approach | Pros | Cons |

|---|---|---|

| Native UDP (one port/session) | Direct media path, no forwarding | Huge port range, bad on Kubernetes |

| One port/server | Smaller footprint | Doesn't fix cross-fleet routing |

| TURN relay | Clients only reach one address | Allocation overhead, hard to migrate |

| Relay + Transceiver (OpenAI) | Small fixed UDP surface, standard WebRTC | One extra hop, custom coordination |

The Relay

The relay doesn't decrypt media or run ICE state machines. It reads enough of the first STUN packet to extract the ICE username fragment (ufrag), decodes routing metadata encoded there during session setup, and forwards the packet to the transceiver that owns the session. Every subsequent packet - DTLS, RTP, RTCP - flows through an established session entry without re-parsing.

The client sees a single stable virtual IP (VIP) and port, like 203.0.113.10:3478, fronting the relay fleet. From the client's perspective, nothing about the WebRTC session changes. Standard WebRTC behavior across.

The relay's in-memory session state is intentionally minimal: a mapping from <client IP:Port> to <transceiver IP:Port>, plus counters and cleanup timers. A Redis cache holds these mappings so a relay restart doesn't require waiting for the next STUN packet to rebuild routing.

ICE Ufrag as a Routing Hook

The interesting design decision is how first-packet routing works. Rather than adding a hot-path lookup service that could become a bottleneck or failure point, OpenAI encodes routing metadata directly into the server-side ICE ufrag during signaling. The ufrag contains enough information for the relay to identify the destination cluster and owning transceiver from the packet header alone.

This keeps routing deterministic and removes the need for any synchronous external call on the critical media path. The ufrag still looks like a standard ICE credential to the client.

Client first packet (STUN binding request)

-> Relay reads server-side ufrag

-> Decodes: cluster ID + transceiver address

-> Forwards to owning transceiver

-> Transceiver completes ICE, DTLS, SRTP

-> Media flows; relay routes on cached session entry

The Transceiver

The transceiver owns all WebRTC protocol state: ICE, DTLS, SRTP keys, codec negotiation, session lifecycle. Backend services for inference, transcription, and speech generation talk to the transceiver over simpler internal protocols and scale independently. They don't need to participate in WebRTC at all.

Go Implementation Without Kernel Bypass

The relay is written in Go. Two Linux socket optimizations do the heavy lifting:

SO_REUSEPORT allows multiple relay goroutines on the same machine to share an UDP port. The kernel distributes incoming packets across them, eliminating a single-reader bottleneck.

runtime.LockOSThread pins each UDP-reading goroutine to an OS thread. Combined with SO_REUSEPORT, packets from the same flow tend to land on the same CPU core, improving cache locality and reducing context switching overhead.

Pre-allocated packet buffers and minimal data copying keep garbage collector pressure low during high-throughput periods.

The post notes that this implementation handled global real-time media traffic with a small relay footprint, so they stayed with the simpler approach rather than reaching for kernel bypass frameworks like DPDK. That's a considered choice. Kernel bypass adds operational complexity and debugging difficulty that's hard to justify unless you've truly exhausted what careful userspace Go can do.

Global Relay and Geo-Steering

With the public UDP surface now small and fixed, the same relay pattern deploys globally. OpenAI's Global Relay fleet distributes ingress points geographically - ICE candidate addresses observed since September 2025 show endpoints in Chicago, Virginia, and Austin.

Signaling uses Cloudflare geo and proximity steering: the initial HTTP or WebSocket request reaches a nearby transceiver cluster. The SDP answer then provides a Global Relay address close to that cluster, so media enters OpenAI's network near the user rather than traversing the public internet to a distant region first.

The result is lower first-hop latency for both session setup and the initial ICE connectivity check. For voice AI, sub-300ms end-to-end latency separates a system that feels conversational from one that feels like a phone menu. Every millisecond shaved before speech can start counts.

Where It Falls Short

The architecture is well-suited to OpenAI's specific workload. It isn't a universal answer.

| Limitation | Detail |

|---|---|

| One extra network hop | All media passes through relay before reaching transceiver |

| Custom coordination required | The ufrag routing metadata protocol is proprietary |

| Not designed for multi-party | Group calls or human handoff need a SFU, not this pattern |

| Video pricing opacity | Per-snapshot cost is about $0.0675; no published scaling formula |

The relay model is optimized for point-to-point, latency-sensitive sessions. The post is explicit about this: most OpenAI sessions are 1:1 between a user and a model. If you're building a product that needs human agents to join live sessions, or multi-user collaborative voice, you'd likely reach for a different architecture.

The Redis dependency for session recovery is also worth noting. A Redis failure during a relay restart means some sessions wait for the next STUN packet to re-establish routing. The post describes this as recoverable, but doesn't publish failure rates or recovery time data.

For developers building on the best AI voice agents today, the practical takeaway is that WebRTC - not WebSockets - is the connection mode OpenAI has optimized this infrastructure for. If you're seeing inconsistent latency with the Realtime API, the relay infrastructure and its geographic coverage are part of what determines your first-hop cost.

Sources:

- How OpenAI delivers low-latency voice AI at scale - OpenAI Engineering, May 4, 2026

- How OpenAI does WebRTC in the new gpt-realtime - WebRTCHacks

- Realtime API with WebRTC - OpenAI Developer Docs

- Updates for developers building with voice - OpenAI Developers Blog