Pentagon Accepts OpenAI's Red Lines - the Same Ones It Rejected From Anthropic

OpenAI secured a Pentagon classified network deal with prohibitions on mass surveillance and autonomous weapons - the exact same terms that got Anthropic banned from all federal agencies hours earlier.

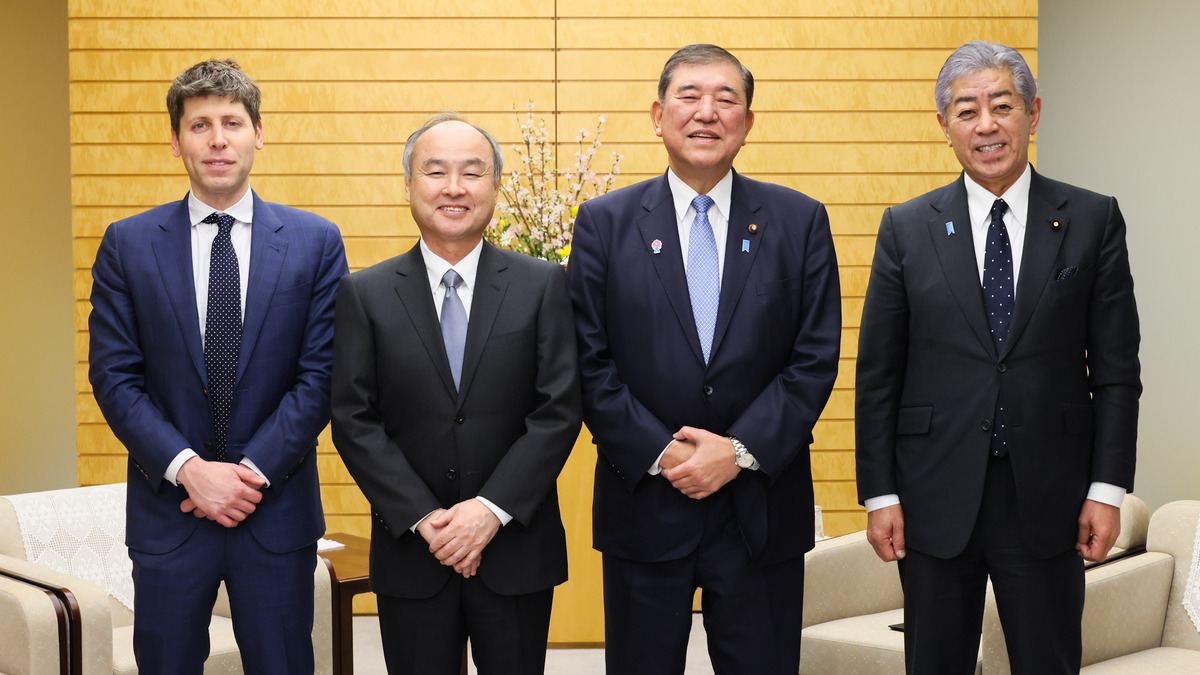

Sam Altman posted to X on Friday night: "Tonight, we reached an agreement with the Department of War to deploy our models in their classified network." He then listed OpenAI's two core safety principles - prohibitions on domestic mass surveillance and human responsibility for the use of force, including autonomous weapons. The Defense Department, Altman said, "agrees with these principles, reflects them in law and policy, and we put them into our agreement."

Those are the same two red lines that got Anthropic banned from every federal agency hours earlier.

Claim / Our Take

- What OpenAI says: The Pentagon agreed to the same safety red lines Anthropic asked for - no mass surveillance, no autonomous weapons - and put them in writing

- What actually happened: The Pentagon accepted language from OpenAI that it spent months rejecting from Anthropic, with the key difference being framing - OpenAI embedded safeguards as technical model controls, not contractual policy restrictions

- What's missing: The full contract text is not public, OpenAI's "technical safeguards" are undefined, and the deal gives OpenAI broad latitude to decide how those safeguards are built

Sam Altman announced the Pentagon deal on X late Friday night, hours after Anthropic was banned from all federal agencies.


What They Showed

The deal terms

According to reporting from Fortune, CNN, and NPR, the OpenAI-Pentagon agreement includes several specific provisions:

- Cloud-only deployment. OpenAI retains control over where its models run, limiting deployment to cloud environments. Edge systems - drones, aircraft, autonomous platforms - are excluded.

- Refusal rights. If the model refuses to perform a task, the government agreed not to force OpenAI to override it.

- Safety stack control. OpenAI builds its own "layered system of technical, policy, and human controls" between the model and real-world deployment.

- Named red lines. Prohibitions on autonomous weapons, domestic mass surveillance, and critical decision-making automation are written into the contract.

- Embedded personnel. OpenAI deploys researchers with security clearances who can track usage and advise on risks.

Altman framed the deal as collaborative. "The DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome," he wrote.

The Anthropic comparison

These terms map almost exactly to what Anthropic had been negotiating for months. Anthropic's two red lines were the same: no mass domestic surveillance of Americans, no fully autonomous weapons without human oversight. The Pentagon rejected those terms and designated Anthropic a supply chain risk to national security.

"We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept," Altman wrote.

What We Checked

The public record makes a full contract comparison impossible - neither agreement has been released. But based on available reporting, the substantive terms appear to overlap almost completely. Here is what each company asked for, side by side:

| Term | Anthropic's Position | OpenAI's Position |
|---|---|---|
| Mass domestic surveillance | Contractual prohibition | Technical model controls + contractual language |
| Autonomous weapons | Contractual prohibition on fully autonomous systems | Cloud-only deployment prevents edge use; contractual prohibition |
| Deployment environment | Not publicly specified | Cloud only - no edge systems (drones, aircraft) |
| Model override rights | Demanded right to refuse use cases | If model refuses, government won't force override |
| Personnel oversight | Not publicly specified | Embedded researchers with security clearances |
| Contract framing | Policy restrictions imposed by Anthropic | Technical safeguards built by OpenAI |
| "All lawful uses" language | Rejected | Accepted, with carve-outs above |

The critical difference isn't what was restricted. It's how the restrictions were packaged.

Anthropic framed its limits as ethical policy positions - the company was telling the Pentagon what it could not do. OpenAI framed the same limits as technical controls - the models simply would not perform certain tasks, and the deployment architecture made others physically impossible.

Same outcome. Different sales pitch.

The Pentagon accepted safety red lines from OpenAI that it spent months rejecting from Anthropic - raising questions about whether the dispute was ever really about the terms themselves.


The Gap

The framing problem

Semafor reported that "OpenAI framed compliance as model capability limitations; Anthropic positioned it as ethical policy enforcement." This distinction matters politically. The Trump administration spent weeks accusing Anthropic of being "woke" and trying to "seize veto power over operational decisions." It's hard to accuse a company of being preachy when its models simply refuse to do something - that looks like a technical limitation, not a moral stance.

But in terms of the intended result, there is no functional difference. A contractual prohibition and a hardcoded model refusal aim at the same outcome: the military doesn't get to use the model for that task. The Pentagon accepted this when it came from OpenAI's technical stack. It rejected it when it came from Anthropic's legal team.

What "technical safeguards" actually means

Altman said OpenAI will build "technical safeguards to ensure its models behave as they should." This is thin on specifics. What does a "layered system of technical, policy, and human controls" look like in practice?

For context: OpenAI's models already have system-level safety filters. These are prompt-level instructions and RLHF-trained refusals that prevent certain outputs. They work most of the time. They're also routinely bypassed through jailbreaks, prompt injection, and creative context manipulation. Any engineer who has worked with frontier LLMs knows that model-level refusals aren't the same as guaranteed enforcement.

Cloud-only deployment is a stronger safeguard. If models never touch edge hardware, they can't directly control weapons systems. But cloud-only also means the Pentagon needs reliable network connectivity to use OpenAI's models in the field - a genuine operational constraint in contested environments.
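To make the distinction between these layers concrete, here is a minimal sketch of how a "layered system of technical, policy, and human controls" could be ordered. It is purely illustrative: OpenAI has not published its design, and every name here (`Request`, `evaluate`, the use-case labels, the environment names) is a hypothetical assumption, not anything taken from the contract or from OpenAI's stack.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"  # route to a human reviewer


# Hypothetical labels; the real contract text is not public.
PROHIBITED_USES = {"domestic_mass_surveillance", "autonomous_weapons"}
HUMAN_REVIEW_USES = {"use_of_force_support"}
ALLOWED_ENVIRONMENTS = {"cloud"}


@dataclass(frozen=True)
class Request:
    environment: str     # e.g. "cloud" or "edge"
    declared_use: str    # use-case label attached by the calling system
    model_refused: bool  # whether the model itself declined the task


def evaluate(req: Request) -> tuple[Decision, str]:
    """Apply controls in order, from most to least deterministic."""
    # Layer 1 (architecture): anything outside the cloud is rejected outright.
    if req.environment not in ALLOWED_ENVIRONMENTS:
        return Decision.DENY, "environment"
    # Layer 2 (policy): named red lines are blocked whatever the model says.
    if req.declared_use in PROHIBITED_USES:
        return Decision.DENY, "policy"
    # Layer 3 (model): a refusal is honored rather than overridden.
    if req.model_refused:
        return Decision.DENY, "model_refusal"
    # Layer 4 (human): sensitive categories go to a cleared reviewer.
    if req.declared_use in HUMAN_REVIEW_USES:
        return Decision.ESCALATE, "human_review"
    return Decision.ALLOW, "none"


if __name__ == "__main__":
    print(evaluate(Request("edge", "logistics", False)))
    print(evaluate(Request("cloud", "logistics", False)))
```

In this sketch, a jailbreak that stops the model from refusing only defeats layer 3. Layers 1 and 2 still apply, but layer 2 is only as good as the honesty of the `declared_use` label, which is exactly the kind of detail the public record does not cover.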

Defense Secretary Pete Hegseth designated Anthropic a "supply chain risk to national security" - then accepted nearly identical terms from OpenAI the same day.


The timing

The deal was announced hours after Trump banned Anthropic. Fortune reported that the contract wasn't yet signed at the time of Altman's all-hands meeting on Friday. The speed suggests OpenAI had been negotiating in parallel for some time, but the public announcement was strategically timed to fill the vacuum Anthropic's departure created.

Dean Ball, a former AI adviser in the Trump administration, called the government's action against Anthropic "simply attempted corporate murder" and said he "could not possibly recommend starting an AI company in the United States." Senator Mark Warner raised concerns that the decisions appeared "driven by political considerations" rather than analysis.

The xAI factor

Elon Musk's xAI signed an agreement earlier in the week to deploy Grok on the same classified systems - but under the "all lawful purposes" standard that Anthropic rejected. xAI accepted no red lines. OpenAI accepted the same red lines as Anthropic but packaged them differently. The Pentagon approved both, and punished only the company that framed its limits as an ethical demand.

| Company | Red Lines | Framing | Pentagon Response |
|---|---|---|---|
| Anthropic | No mass surveillance, no autonomous weapons | Contractual policy restrictions | Banned from all federal agencies |
| OpenAI | No mass surveillance, no autonomous weapons | Technical model safeguards | Deal approved |
| xAI | None stated | "All lawful purposes" | Deal approved |

Verdict

OpenAI's deal, as reported, includes the same protections Anthropic fought for. Under the reported terms, the models will not be used for mass domestic surveillance. They won't power autonomous weapons. Humans will remain in the loop for use of force. These are the right guardrails, and getting the Pentagon to agree to them in writing is significant.

But the lesson the AI industry just learned is not about safety principles. It's about packaging. Anthropic told the Pentagon no and got destroyed. OpenAI said yes with conditions and got a classified network contract. The end result for the models may be identical. The end result for the companies could not be more different.

The question nobody in Washington is answering: if the Pentagon was willing to accept these red lines from OpenAI all along, why did it spend months trying to force Anthropic to drop them?

Sources:

- OpenAI announces Pentagon deal after Trump bans Anthropic - NPR

- OpenAI strikes deal with Pentagon hours after Trump admin bans Anthropic - CNN

- OpenAI is negotiating with the U.S. government, Sam Altman tells staff - Fortune

- OpenAI strikes deal with Pentagon hours after Anthropic was blacklisted by Trump - CNBC

- Hours after Pentagon bans Anthropic, OpenAI strikes defense deal - Semafor

- OpenAI strikes deal with Pentagon to use tech in classified network - Al Jazeera

- OpenAI strikes deal with Pentagon after Trump orders government to stop using Anthropic - NBC News

- Former Trump AI adviser torches president's war on Anthropic - Mediaite