OpenAI Aims for AI Research Intern by September 2026

OpenAI's chief scientist Jakub Pachocki has laid out a two-stage plan to deploy an autonomous AI research intern by September 2026 and a full AI researcher by March 2028, backed by $1.4 trillion in planned compute spending.

OpenAI's chief scientist Jakub Pachocki gave the most concrete timeline the company has ever offered for fully automated scientific research: an autonomous AI research intern running by September 2026, and a complete AI researcher - capable of managing independent projects - by March 2028.

The announcement came through coverage in MIT Technology Review and The Decoder this week. Pachocki framed it as OpenAI's "North Star," the single goal pulling together its work on reasoning models, agents, and interpretability. This isn't a product preview. It is a stated engineering objective with a deadline.

TL;DR

- September 2026 target: an autonomous AI system that handles end-to-end research tasks taking a human researcher days to complete

- March 2028 target: a fully independent multi-agent AI researcher capable of managing entire research programs

- OpenAI has committed roughly $1.4 trillion in infrastructure spending and plans to reach 30 gigawatts of compute capacity

- The project spans mathematics, physics, biology, and chemistry - Pachocki says "small new discoveries" are possible by the intern milestone

What OpenAI Actually Committed To

The roadmap has two distinct milestones, and conflating them obscures how different the two claims are.

The September 2026 Intern

The intern isn't a general-purpose AI assistant with better coding. Pachocki describes a system running on hundreds of thousands of GPUs, capable of taking a specific research problem and handling it end-to-end - designing experiments, running them, analyzing results, and proposing follow-on work - without requiring moment-to-moment human supervision.

The scope is narrower than it sounds. At launch, it covers a "small number" of well-defined research problems, not open-ended discovery across all science. The target areas are mathematics, physics, biology, and chemistry. Pachocki expects the system to make "small new discoveries" by the September 2026 milestone, which sets a measurable bar.

The March 2028 Researcher

The 2028 milestone is a different claim entirely. A fully autonomous AI researcher, in Pachocki's framing, would run independent research projects at a scale and complexity beyond what any individual human researcher could manage - coordinating across subproblems, tracking long-running experiments, and producing "big discoveries."

Sam Altman posted a notably candid caveat on X: "We may totally fail at this goal, but given the extraordinary potential impacts we think it is in the public interest to be transparent about this." That transparency is itself a shift. Altman previously mentioned 2028 as the year OpenAI would have a "legitimate AI researcher," but the specific September 2026 intern milestone is new.

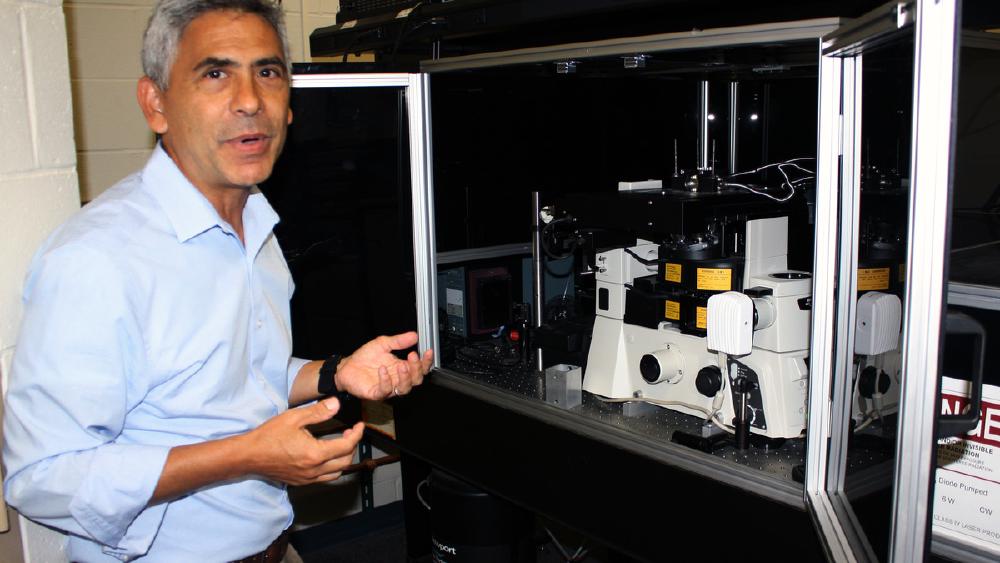

Jakub Pachocki, who joined OpenAI in 2017 and became Chief Scientist in 2024, played key roles in GPT-4 and the reasoning model line.

Source: commons.wikimedia.org

The Compute Bet Behind the Roadmap

No roadmap of this scale can be judged without asking what it requires. The numbers here are stated commitments, not speculation.

$1.4 Trillion and 30 Gigawatts

OpenAI has committed roughly $1.4 trillion in infrastructure spending - confirmed across multiple sources including MIT Sloan Management Review - and plans to reach around 30 gigawatts of compute capacity. The long-term target is to add one gigawatt of new computing power per week, at roughly $20 billion per gigawatt over a five-year buildout.
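The figures above can be cross-checked with some back-of-the-envelope arithmetic. The sketch below uses only the numbers quoted in this article; the function names and the 52-week year are assumptions made for illustration, and the sources do not say exactly what each total includes. Dividing the commitment by the planned capacity implies about $47 billion per gigawatt, more than double the $20 billion long-term target, and building one gigawatt per week at that target cost would run to about $1 trillion a year.

```python
# Back-of-the-envelope check on the compute figures quoted in the article.
# The inputs are the article's rounded numbers; the derived values are
# simple arithmetic, not reported data.

TOTAL_SPEND_USD = 1.4e12        # roughly $1.4 trillion committed
PLANNED_CAPACITY_GW = 30        # roughly 30 gigawatts planned
TARGET_COST_PER_GW_USD = 20e9   # roughly $20 billion per gigawatt (long-term target)
TARGET_GW_PER_WEEK = 1          # one gigawatt of new capacity per week (long-term target)
WEEKS_PER_YEAR = 52             # assumption: steady build rate, no ramp-up


def implied_cost_per_gw(total_spend_usd: float, capacity_gw: float) -> float:
    """Average dollars per gigawatt implied by a total spend and a capacity."""
    return total_spend_usd / capacity_gw


def annual_spend_at_target(gw_per_week: float, cost_per_gw_usd: float,
                           weeks_per_year: int = WEEKS_PER_YEAR) -> float:
    """Yearly dollars needed to sustain a weekly build rate at a given unit cost."""
    return gw_per_week * weeks_per_year * cost_per_gw_usd


if __name__ == "__main__":
    per_gw = implied_cost_per_gw(TOTAL_SPEND_USD, PLANNED_CAPACITY_GW)
    per_year = annual_spend_at_target(TARGET_GW_PER_WEEK, TARGET_COST_PER_GW_USD)
    print(f"Implied cost per GW today: ${per_gw / 1e9:.1f}B")
    print(f"Ratio to long-term target: {per_gw / TARGET_COST_PER_GW_USD:.2f}x")
    print(f"Annual spend at 1 GW/week: ${per_year / 1e12:.2f}T")
```

The gap between the two per-gigawatt figures is not necessarily a contradiction. The $20 billion number is described as a long-term target, so it may assume cost reductions that the current commitments do not reflect.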

Stargate, the data center project under construction in Abilene, Texas, is the physical infrastructure underpinning this. Partners include AMD, Broadcom, Google, Microsoft, Nvidia, Oracle, and SoftBank. This is part of a broader pattern: OpenAI has been signaling massive compute investment for months, and the researcher roadmap is the stated reason that scale is necessary.

What Pachocki Said

"I think we are getting close to a point where we'll have models capable of working indefinitely in a coherent way just like people do. Of course, you still want people in charge and setting the goals. But I think we will get to a point where you kind of have a whole research lab in a data center."

That framing is careful. "People in charge and setting the goals" is doing heavy lifting. The question of what it means to maintain human oversight over a research system operating at tens of thousands of simultaneous experiment threads doesn't have a clean answer.

The Stargate project in Abilene, Texas will eventually house some of the largest compute clusters ever built. The scale required for an autonomous AI researcher is significant.

Source: commons.wikimedia.org

What the Intern Would Actually Do

Research automation isn't new. Andrej Karpathy recently showed what a single-GPU autoresearch loop looks like for a narrow ML problem. The gap between that and what Pachocki is describing is the difference between a proof of concept and production infrastructure.

The Target Task Profile

The intern's task profile - a few days of work for a human researcher, handled end-to-end - maps to things like: running ablation studies across model architectures, testing a mathematical conjecture against known counterexamples, screening candidate molecules for a target biological property. These are well-scoped, repeatable, and verifiable. The success criterion is that the AI completes them reliably with results that hold up.

That's a meaningful bar. The goal isn't to help a researcher think faster; it's to replace them on specific tasks entirely.

Chain-of-Thought Oversight

Pachocki also introduced a five-layer safety model with a component called "Chain-of-Thought Faithfulness" - a method designed to allow auditing of a model's internal reasoning. Some parts of that reasoning, he acknowledged, would remain unsupervised.

That admission should not slide past quietly. A system making research decisions at scale, with portions of its reasoning process that humans can't inspect, raises questions that OpenAI's safety teams have not fully resolved. The chain-of-thought faithfulness work is framed as progress on this problem, not a solution.

What It Does Not Tell You

The Verification Problem

Scientific research already has a replication crisis in human-produced work. An autonomous AI researcher operating at massive scale could worsen that problem significantly. How do you verify that the "small new discoveries" are genuine discoveries and not artifacts of the training distribution? Pachocki did not address this directly, and it's a question the field will need to answer before the September 2026 milestone arrives.

Competing Timelines

Google DeepMind published its own AGI measurement framework this week, a cognitive taxonomy of ten faculties used to track progress toward general intelligence. That framing is more cautious than OpenAI's - it defines measurement criteria rather than deployment targets. These aren't the same thing, and comparing them directly overstates how much the field agrees on what "AI researcher" and "AGI" actually mean.

OpenAI is setting deadlines. DeepMind is building yardsticks. Both reflect genuine ambition, but the approach to accountability is different.

Who Checks the Intern's Work

A human research intern's outputs get reviewed by senior researchers. An AI intern running on hundreds of thousands of GPUs, generating experimental results faster than any team can read them, creates a supervision problem that grows with its productivity. The faster it works, the harder it is to catch errors.

Pachocki's answer - people set the goals, AI executes - assumes that goal-setting is where errors get caught. That's not how research actually works. Errors emerge during execution, in the design of experiments, in the interpretation of results.

The September 2026 date is specific enough to be meaningful. Once it arrives, we'll know whether the intern exists, what it can actually do, and whether "small new discoveries" holds up under scrutiny. That's a shorter feedback loop than most AI roadmap announcements, which tend to keep their commitments vague enough to survive indefinitely. Pachocki's framing either ages well or it doesn't.

Sources:

- OpenAI is throwing everything into building a fully automated researcher - MIT Technology Review

- OpenAI targets full-scale autonomous AI researcher by early 2028 - The Decoder

- OpenAI Sets 2026 Goal for AI Research Intern, Plans $1.4T Compute Push - MIT Sloan Management Review

- OpenAI Aims To Create An AI Research Intern By Sept 2026 - OfficeChai (Altman quote)