NVIDIA's Vera Rubin Arrives at GTC 2026 With 6 Chips

NVIDIA opens GTC 2026 with the Vera Rubin platform - six co-designed chips delivering 50 PFLOPS of inference per GPU and 10x lower token cost than Blackwell.

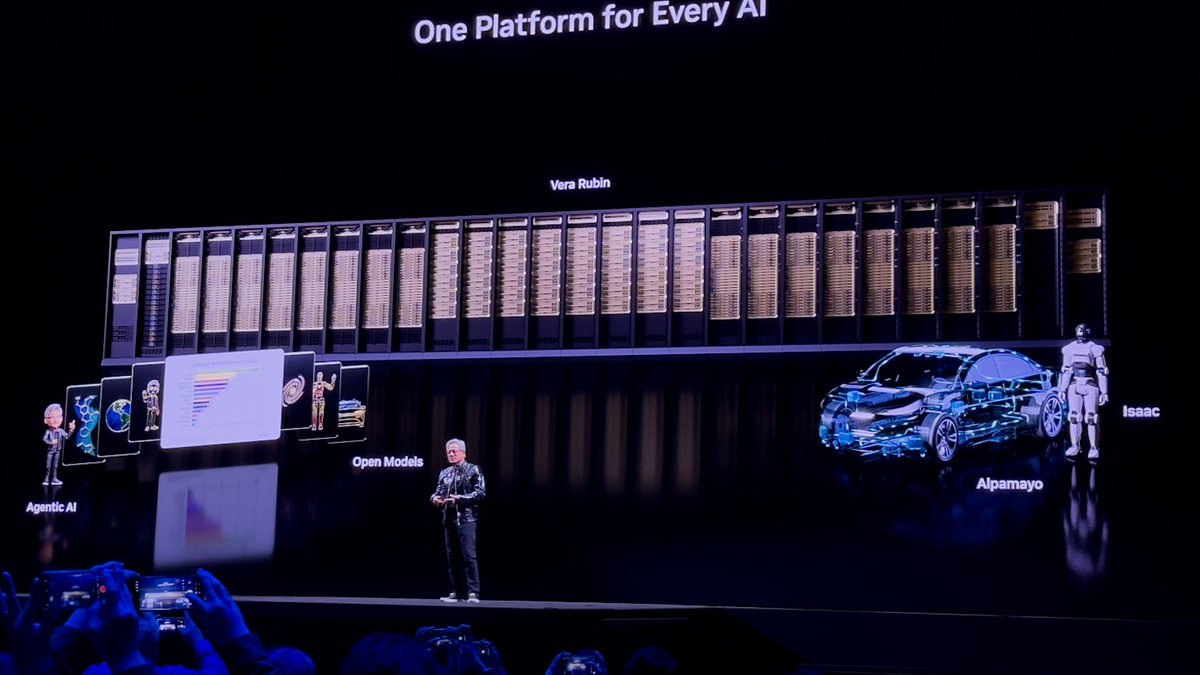

Jensen Huang walked onto the floor of SAP Center in San Jose at 11 a.m. Pacific today, delivering the opening keynote of GTC 2026 to 30,000 attendees from 190 countries. The centerpiece: the Vera Rubin platform, a six-chip architecture NVIDIA says will cut AI inference costs by a factor of ten compared to Blackwell.

TL;DR

- Six co-designed chips: Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet

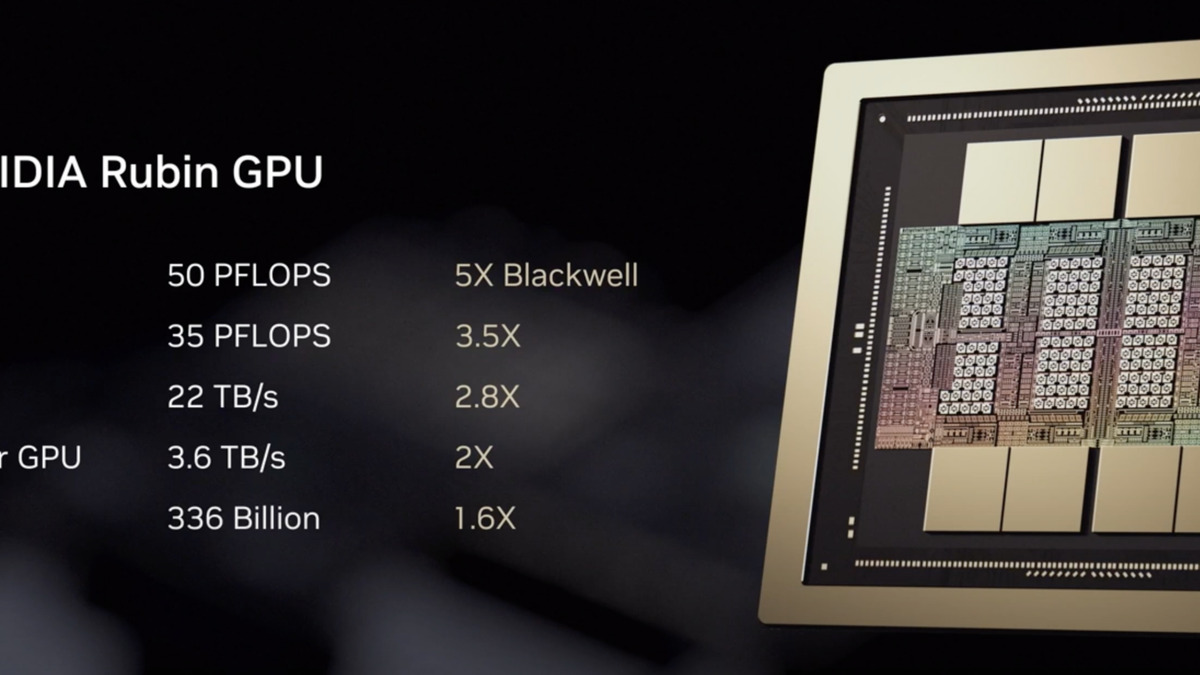

- Each Rubin GPU delivers 50 PFLOPS of NVFP4 inference - a 5x improvement over Blackwell GB200

- NVL72 rack packs 72 GPUs and 36 CPUs, rated at 3.6 exaFLOPS of inference performance

- 10x lower inference cost per token than the previous generation, per NVIDIA's figures

- Production starts second half of 2026; cloud providers including AWS, Google Cloud, and Microsoft are first in line

- Feynman, the next architecture (2028), previewed for the first time - built on TSMC's 1.6nm process

What NVIDIA Announced

The Vera Rubin platform has been in rumor circulation since last year, but today's keynote put firm specs on the table. NVIDIA describes it as "extreme co-design" - every chip in the stack was built to work with the others, rather than assembled from off-the-shelf parts.

| Chip | Role | Key spec |

|---|---|---|

| Vera CPU | Orchestration and memory | 88 Olympus cores, 1.5TB LPDDR5X |

| Rubin GPU | Compute | 50 PFLOPS NVFP4, 288GB HBM4 |

| NVLink 6 Switch | Intra-rack fabric | 3.6 TB/s per GPU, 260 TB/s per NVL72 |

| ConnectX-9 SuperNIC | Scale-out networking | 1.6 Tb/s per GPU |

| BlueField-4 DPU | Storage and infrastructure | 64-core Grace, 20M IOPs NVMe |

| Spectrum-6 Switch | Ethernet fabric | 102.4 Tb/s, co-packaged optics |

The Vera CPU replaces the Grace CPU from the previous generation. Its 88 Olympus cores use Armv9.2 architecture and connect to Rubin GPUs through NVLink-C2C at 1.8 TB/s coherent bandwidth. NVIDIA positions it as the memory and coordination engine for agentic workloads that need fast context access across large models.

The Rubin GPU carries 336 billion transistors - a 1.6x increase over Blackwell - and uses sixth-generation HBM4 with 22 TB/s bandwidth per GPU. That's a 2.8x jump in memory bandwidth over the prior generation.

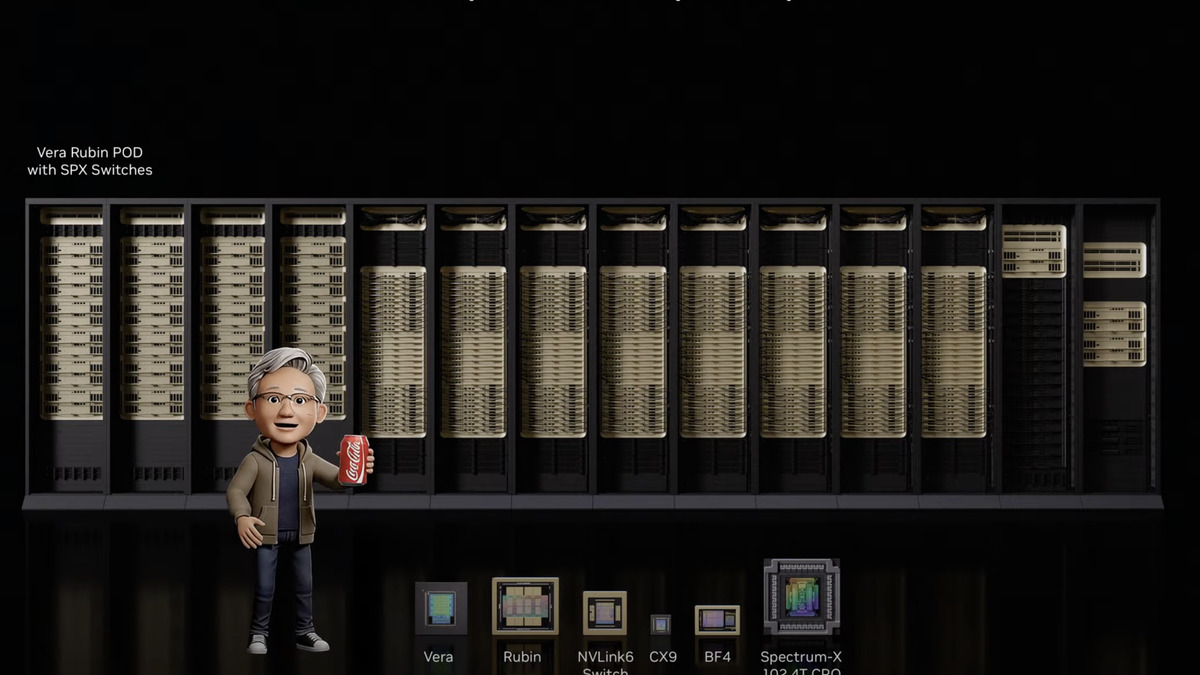

NVIDIA's Vera Rubin platform comprises six chips engineered together: Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9, BlueField-4, and Spectrum-6.

Source: storagereview.com

NVIDIA's Vera Rubin platform comprises six chips engineered together: Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9, BlueField-4, and Spectrum-6.

Source: storagereview.com

NVL72 Rack Numbers

The flagship configuration is the NVL72: 72 Rubin GPUs and 36 Vera CPUs connected in a single all-to-all NVLink 6 fabric, delivering 3.6 exaFLOPS of NVFP4 inference and 2.5 exaFLOPS of training. Total HBM4 capacity in a single rack: 20.7 TB, with 1.6 PB/s of bandwidth. An 8-GPU HGX Rubin NVL8 form factor is also available for x86 server deployments.

NVIDIA claims the NVL72 delivers 10x the inference throughput of Blackwell at one-tenth the cost per token. For mixture-of-experts models - the architecture behind DeepSeek and many current frontier models - the company says you need one-quarter as many GPUs to train compared to the previous generation.

"Rubin arrives at exactly the right moment, as AI computing demand for both training and inference is going through the roof," said Huang in the official announcement.

The Agentic AI Pitch

NVIDIA is framing Vera Rubin explicitly around agentic workloads, not just raw LLM throughput. The Vera CPU's design - 88 cores, high memory bandwidth, coherent interconnect to GPUs - targets the context management and tool-calling overhead that makes today's agents slow. The Spectrum-6 switch adds co-packaged optics, putting silicon photonics inside the rack for the first time, which addresses the power constraints that bottleneck large-scale inference clusters.

The BlueField-4 DPU adds AI-native key-value cache sharing, a specific optimization for inference serving where multiple requests share context. That's a direct response to the way large-scale deployments like those at OpenAI, xAI, and Anthropic actually run inference.

The Rubin GPU delivers 50 PFLOPS of NVFP4 inference compute and 288GB of HBM4 memory per chip.

Source: storagereview.com

The Rubin GPU delivers 50 PFLOPS of NVFP4 inference compute and 288GB of HBM4 memory per chip.

Source: storagereview.com

Major AI labs are listed as launch partners: Amazon Web Services, Anthropic, Google Cloud, Meta, Microsoft, OpenAI, Oracle Cloud Infrastructure, xAI, CoreWeave, Lambda, Nebius, and Nscale. NVIDIA also announced a separate multiyear partnership with Thinking Machines Lab to deploy at least one gigawatt of Vera Rubin capacity for frontier model training.

What It Does Not Tell You

The 10x cost reduction figure needs careful reading. NVIDIA is comparing NVFP4 inference on Rubin against Blackwell in rack-level cost-per-token calculations. That framing flatters the new platform in ways that matter to know.

First, Blackwell isn't old. It launched in 2025 and is still being rolled out at scale. Buyers who committed to Blackwell purchases 12 months ago aren't in a position to swap to Rubin when it ships in the second half of 2026.

Second, the NVL72 is a liquid-cooled, custom-networking rack. Operators who don't have liquid-cooled data center infrastructure face sizable retrofitting costs before they can run Vera Rubin at full scale. NVIDIA's numbers don't include that.

Third, the 50 PFLOPS figure is NVFP4 - a format that not every model uses, and that requires appropriate quantization pipelines. FP8 and BF16 performance figures weren't highlighted in today's materials.

The Feynman preview added at the end of the keynote is worth noting separately. Targeted for 2028 on TSMC's 1.6nm A16 process, it's explicitly positioned for reasoning and long-context agentic workloads. Huang described it as designed around massive key-value cache storage and long-term memory - the hardware counterpart to reasoning models that need to hold context across thousands of steps. No performance numbers were given; this was positioning, not product.

Jensen Huang presenting the Vera Rubin platform details. GTC 2026 runs through March 19 in San Jose.

Source: storagereview.com

Jensen Huang presenting the Vera Rubin platform details. GTC 2026 runs through March 19 in San Jose.

Source: storagereview.com

GTC 2026 runs through March 19, with more than 700 technical sessions. The DGX Spark, NVIDIA's workstation-class system, is available for purchase at the conference venue. Vera Rubin NVL72 systems ship from cloud providers in the second half of 2026 - the question for operators is whether the infrastructure requirements and lead time justify waiting, or whether Blackwell at current prices closes the gap on total cost of ownership before Rubin becomes widely available.

Sources: