NVIDIA's Secret Chip Fuses GPU and Groq for OpenAI

NVIDIA will unveil a new inference processor built on Groq's LPU architecture at GTC 2026, with OpenAI as its first major customer allocating 3 GW of dedicated capacity.

NVIDIA is about to do something it has never done before: ship an inference chip that's not a GPU. According to a Wall Street Journal report published late Friday, the company has been building a new processor that integrates Groq's Language Processing Unit technology - the same deterministic, SRAM-based architecture that made Groq the fastest inference engine on the market. The chip is expected to debut at GTC 2026 on March 16 in San Jose, with OpenAI lined up as its first and largest customer.

This isn't a minor product refresh. It's NVIDIA publicly admitting that GPUs alone can't win the inference war.

TL;DR

- NVIDIA will unveil a new inference processor incorporating Groq's LPU technology at GTC 2026 on March 16

- OpenAI is the lead customer, allocating 3 GW of dedicated inference capacity for the new chip

- The design relies on on-chip SRAM instead of HBM; Groq's current LPU delivers roughly 80 TB/s of memory bandwidth - about 24x what an H100 achieves

- NVIDIA paid $20 billion in December to license Groq's tech and hire its founder Jonathan Ross

- The chip targets latency-sensitive workloads like OpenAI's Codex programming tool

How the Deal Came Together

The backstory starts with frustration. Throughout 2025, OpenAI engineers working on Codex - the company's code-generation product - found that NVIDIA's GPU-based inference was too power-hungry and too slow for the real-time, latency-sensitive workloads they needed. Internal teams attributed Codex's performance limitations directly to GPU hardware, according to sources cited by the Wall Street Journal.

OpenAI started shopping around. It had conversations with Cerebras, SambaNova, and - most notably - Groq, whose LPU chips were already delivering inference speeds that no GPU could match. As we noted in our Groq review, single-chip throughput on Llama models was hitting speeds that made GPU-based providers look like they were running on dial-up.

NVIDIA saw the threat. In December 2025, it moved to neutralize Groq by signing a $20 billion licensing deal - its largest ever - and hiring Groq founder and CEO Jonathan Ross along with President Sunny Madra and the bulk of the engineering team. The deal was structured as a non-exclusive license plus acqui-hire rather than a full acquisition, a move widely interpreted as a way to avoid triggering mandatory merger reviews.

"NVIDIA has long been one of our most important partners... Their upcoming generations should be great," Sam Altman said in a statement.

AI inference workloads are driving demand for specialized hardware that goes beyond traditional GPU architectures.

Inside the Architecture

Why SRAM Changes Everything

The fundamental difference between a GPU and Groq's LPU comes down to memory. GPUs use High Bandwidth Memory (HBM) - off-chip DRAM stacks that sit next to the compute die and are reached across a silicon interposer rather than on-die wiring. Even the H100's 3.35 TB/s of HBM bandwidth becomes a bottleneck during inference, when the chip spends most of its time waiting for model weights to arrive from memory.

Groq's approach throws that model out completely. The LPU stores model weights directly in on-chip SRAM - not as cache, but as primary storage. A single LPU chip holds around 230 MB of SRAM and delivers roughly 80 TB/s of internal memory bandwidth. That is a 24x advantage over the H100.
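The bandwidth gap can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this article (3.35 TB/s for the H100, 80 TB/s and 230 MB for the LPU) and a deliberately simplified model in which the only cost is reading a block of weights once. The function names are hypothetical, and real inference also involves compute, batching, and chip-to-chip traffic that this ignores.

```python
H100_HBM_TBPS = 3.35   # H100 HBM bandwidth, as quoted in the article
LPU_SRAM_TBPS = 80.0   # Groq LPU on-chip SRAM bandwidth, as quoted
LPU_SRAM_MB = 230.0    # SRAM capacity of one LPU, as quoted


def bandwidth_ratio(fast_tbps: float, slow_tbps: float) -> float:
    """How many times more bandwidth the faster memory offers."""
    return fast_tbps / slow_tbps


def read_time_us(megabytes: float, bandwidth_tbps: float) -> float:
    """Time to read `megabytes` once at `bandwidth_tbps`, in microseconds.

    Simplifying assumption: the read runs at full quoted bandwidth with
    no other overhead, so this is a lower bound rather than a measurement.
    """
    seconds = (megabytes * 1e6) / (bandwidth_tbps * 1e12)
    return seconds * 1e6


if __name__ == "__main__":
    ratio = bandwidth_ratio(LPU_SRAM_TBPS, H100_HBM_TBPS)
    print(f"SRAM vs HBM bandwidth: {ratio:.1f}x")
    print(f"230 MB over HBM:  {read_time_us(LPU_SRAM_MB, H100_HBM_TBPS):.1f} us")
    print(f"230 MB over SRAM: {read_time_us(LPU_SRAM_MB, LPU_SRAM_TBPS):.1f} us")
```

Under these assumptions, sweeping one LPU's worth of weights takes roughly 69 microseconds at H100 bandwidth and about 3 microseconds from on-chip SRAM, which is where the 24x figure comes from.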

The tradeoff is capacity. 230 MB isn't enough to hold even a small language model, so you need to link hundreds of LPU chips together. But when you do, the latency characteristics are fundamentally different from anything a GPU cluster can achieve.

Deterministic Execution

The other key innovation is deterministic scheduling. In a GPU, hardware schedulers dynamically manage instructions at runtime - deciding which threads run where, arbitrating memory access, handling cache misses. This creates unpredictable "tail latency" and jitter, which make it hard to guarantee response times.

Groq removes all of that. The compiler schedules every memory load, every operation, and every packet transmission at compile time. The hardware has no autonomy to make runtime decisions. There are no cache misses because there's no cache. There are no branch prediction errors because there's no branch predictor. Every clock cycle is accounted for.
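To make the idea concrete, here is a toy illustration of compile-time scheduling. It is not Groq's compiler or instruction set; the `Op` type, the latencies, and the four-step program are all invented for illustration, and the model assumes every operation has a fixed, known cycle count and that independent operations can run in parallel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str
    cycles: int                    # fixed latency, assumed known in advance
    deps: tuple[str, ...] = ()     # names of ops that must finish first


def compile_schedule(ops: list[Op]) -> dict[str, int]:
    """Assign every op a fixed start cycle before anything runs.

    Ops must be listed in dependency order. Each op starts as soon as all
    of its dependencies have finished; nothing is decided at run time.
    """
    start: dict[str, int] = {}
    finish: dict[str, int] = {}
    for op in ops:
        start[op.name] = max((finish[d] for d in op.deps), default=0)
        finish[op.name] = start[op.name] + op.cycles
    return start


def total_cycles(ops: list[Op], schedule: dict[str, int]) -> int:
    """End-to-end latency, known exactly once the schedule is compiled."""
    return max(schedule[op.name] + op.cycles for op in ops)


# Hypothetical program: two loads feed a matmul, whose result is sent on.
PROGRAM = [
    Op("load_w", 4),
    Op("load_x", 2),
    Op("matmul", 8, deps=("load_w", "load_x")),
    Op("send", 3, deps=("matmul",)),
]

if __name__ == "__main__":
    schedule = compile_schedule(PROGRAM)
    for name, cycle in schedule.items():
        print(f"cycle {cycle:2d}: start {name}")
    print("total cycles:", total_cycles(PROGRAM, schedule))
```

Because every latency is fixed, the total comes out the same on every run. A dynamically scheduled machine cannot promise that, since a cache miss or a contended memory port can shift any of those start times.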

The result: GPUs typically run at 30-40% utilization during inference because they're waiting on memory. Groq's architecture can sustain close to 100% compute utilization.

The NVIDIA Integration

What NVIDIA is building for GTC is not a standalone LPU. According to reports from TrendForce and other industry analysts, the new chip integrates Groq's deterministic execution model and SRAM-based memory into a hybrid design that also taps NVIDIA's existing strengths in interconnects and software tooling. The chip reportedly uses TSMC's A16 process node with 3D stacking technology - similar in concept to AMD's X3D approach - to layer SRAM dies on top of the compute die.

| Spec | Traditional GPU (H100) | Groq LPU | NVIDIA-Groq Chip (Expected) |
|---|---|---|---|
| Architecture | Dynamic scheduling | Deterministic | Hybrid deterministic |
| Primary Memory | HBM3 (off-chip) | SRAM (on-chip) | SRAM + 3D stacking |
| Memory Bandwidth | ~3.35 TB/s | ~80 TB/s | Not yet disclosed |
| Inference Focus | General (train + infer) | Inference only | Inference only |
| Process Node | TSMC 4N | GlobalFoundries 14nm | TSMC A16 (reported) |
| Key Workload | Training + inference | Low-latency inference | Agentic AI + inference |

OpenAI's 3 GW inference commitment would require data center capacity equivalent to several facilities of this scale.

Who Gets It First

OpenAI is the headline customer. The company has committed to 3 GW of dedicated inference capacity powered by the new chip - a staggering allocation that underscores how much compute modern AI agents consume. For context, 3 GW is roughly the output of three large nuclear reactors.

The immediate use case is Codex, OpenAI's code-generation platform, where real-time responsiveness is critical. But the broader target is agentic AI workloads - autonomous systems that need to make thousands of inference calls per task with guaranteed latency bounds. That's precisely what deterministic hardware excels at.

NVIDIA also secured a major win by bringing OpenAI back into its ecosystem. As we reported when the original $100 billion NVIDIA-OpenAI infrastructure deal collapsed in February, OpenAI had been actively diversifying its chip suppliers. This new inference chip appears to be the olive branch that worked.

The Competitive Landscape

NVIDIA controls roughly 90-95% of the data-center GPU market for AI training. But inference is a different game, and the company faces real competition:

- Google and Amazon both have custom inference silicon (TPUs and Trainium/Inferentia chips) that their cloud customers already use

- Cerebras recently closed its own billion-dollar funding round and offers wafer-scale inference chips

- SambaNova raised $350 million with Intel backing

- Specialized inference ASICs are projected to capture 45% of the inference market by 2030

By absorbing Groq's technology, NVIDIA is trying to cut off its most credible challenger before it can scale independently. Groq had grown from 356,000 to over 2 million developer registrations in a single year and was processing over a billion API calls monthly.

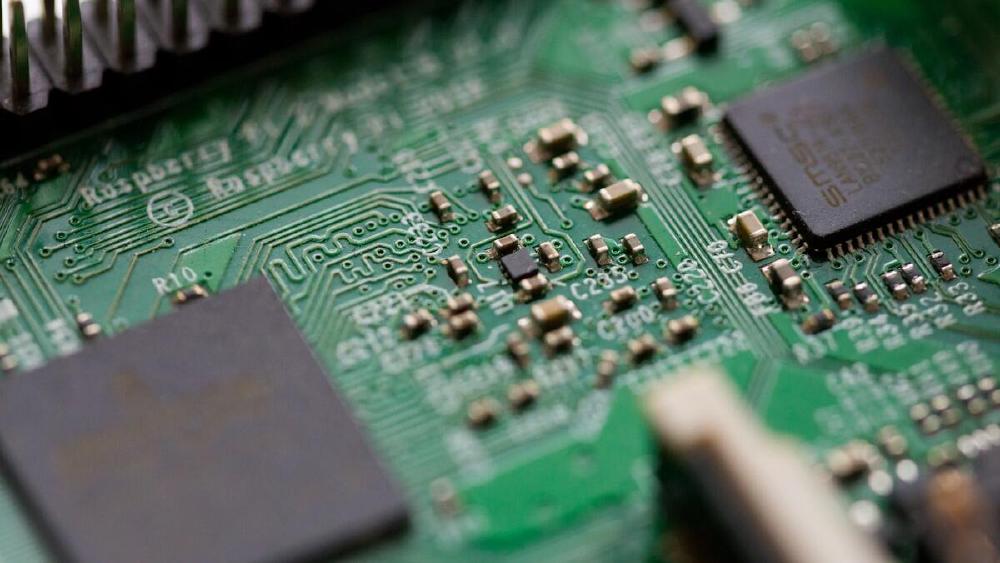

Integrating Groq's SRAM-based LPU design into NVIDIA's chip ecosystem presents significant engineering challenges around memory density and software tooling.

Where It Falls Short

The SRAM Capacity Problem

The fundamental limitation of SRAM-based inference is density. A single Groq LPU holds 230 MB. Running a 70B parameter model at FP16 precision requires roughly 140 GB of weight storage. That means you need over 600 chips just for the weights of one model. Compare that to a single eight-GPU H100 node, whose 640 GB of HBM (80 GB per GPU) holds the same weights with room to spare.
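The chip count follows from simple division, sketched below using the article's figures. The helper name is hypothetical, and the calculation counts weight storage only, ignoring activations, KV cache, and any replication a real deployment would need.

```python
def chips_for_weights(params: int, bytes_per_param: int, sram_mb_per_chip: int) -> int:
    """Minimum number of chips whose combined SRAM can hold the weights.

    Counts weight bytes only (an assumption made for illustration), and
    uses integer ceiling division so a partial chip rounds up.
    """
    weight_bytes = params * bytes_per_param
    sram_bytes = sram_mb_per_chip * 1_000_000
    return -(-weight_bytes // sram_bytes)


if __name__ == "__main__":
    # 70B parameters at FP16 (2 bytes each) on 230 MB LPUs
    print(chips_for_weights(70_000_000_000, 2, 230))
```

Halving the precision to one byte per parameter halves the weight footprint, but that still leaves a requirement of several hundred chips.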

The 3D stacking approach NVIDIA is reportedly using will help, but it won't close a gap measured in orders of magnitude. Until SRAM density improves dramatically, these chips are best suited for smaller, distilled models or as part of a hybrid deployment where GPUs handle the heavy lifting and LPU-style chips handle the latency-critical last mile.

Software Ecosystem Lock-in

CUDA is the reason NVIDIA leads AI compute. Every major framework, every training pipeline, every inference runtime is built on it. A new inference chip with a fundamentally different programming model - even one made by NVIDIA - will face adoption friction. Developers will need new toolchains, new profiling tools, and new mental models for writing efficient code.

NVIDIA has the resources to build that ecosystem, but it'll take time. And in the meantime, the existing GPU inference stack keeps getting better with each CUDA update.

Regulatory Questions

The $20 billion licensing-plus-acqui-hire structure was clearly designed to avoid antitrust scrutiny. But regulators are paying attention. The U.S. DOJ subpoenaed NVIDIA in late 2024, and the UK, EU, France, South Korea, and China have all opened inquiries into NVIDIA's market practices. If regulators decide that this deal is effectively an acquisition by another name, the fallout could be significant.

What to Watch

GTC 2026 kicks off March 16. Jensen Huang's keynote is scheduled for 11 a.m. PDT at the SAP Center in San Jose. If the reports are accurate, this will be the first time NVIDIA has unveiled a non-GPU compute product designed for AI workloads.

The key numbers to watch: actual memory bandwidth figures, power consumption per inference operation, and whether the chip ships with CUDA compatibility or requires an entirely new software stack. Those details will determine whether this is a genuine architectural shift or an expensive insurance policy.

Either way, the inference era just got a lot more interesting.

Sources:

- Wall Street Journal - NVIDIA plans new chip to speed AI processing (via SiliconANGLE)

- WCCFTech - OpenAI Is Set to Be the Biggest Customer for the Upcoming NVIDIA-Groq AI Chip

- Analytics Insight - NVIDIA's GTC 2026 Reveal: AI Processor Featuring Groq Technology for OpenAI

- Techzine - NVIDIA is working on a chip for AI inferencing with Groq technology

- IntuitionLabs - NVIDIA's $20B Groq Acquisition: Why It Paid 2.9x Valuation for LPU Tech

- Groq - Non-Exclusive Inference Technology Licensing Agreement

- The Motley Fool - NVIDIA's Acqui-Hire of Groq Eliminates a Potential Competitor