Netflix VOID Erases Video Objects and Rewrites Physics

Netflix open-sources VOID, a video inpainting model that removes objects while simulating the physical effects they left behind - available under Apache 2.0 with a HuggingFace demo.

Netflix shipped its first public open-source AI model this week - a video inpainting system that doesn't just erase objects from footage, it figures out what those objects were doing to the scene and corrects for that too.

Key Specs

| Spec | Value |

|---|---|

| Model | VOID (Video Object and Interaction Deletion) |

| Base | CogVideoX-Fun-V1.5-5b-InP (5B params) |

| License | Apache 2.0 |

| Min VRAM | 40GB (A100-class) |

| Resolution | 384x672 default, up to 197 frames |

| GitHub Stars | 449 at launch |

| arXiv | 2604.02296 (April 2, 2026) |

The paper, published April 2 by researchers from Netflix and INSAIT Sofia University, introduces what the team calls "physical interaction-aware video inpainting." The name VOID stands for Video Object and Interaction Deletion. The code, weights, and a HuggingFace demo space are all live today.

What It Actually Does

Most object removal tools handle the easy case: erase a static prop sitting on a table, fill the gap with background texture, done. VOID targets the harder case where the removed object was doing something - casting a shadow, pushing other objects around, occluding part of the scene.

The model addresses what the paper calls "causal downstream physical effects." Remove a person holding a ball, and VOID doesn't just paint over the person. The Gemini API analyzes the scene to identify which surrounding regions were physically influenced by that person, and the diffusion pass regenerates those regions consistently with a world where the person was never there.

One of the sample scenes bundled in the VOID GitHub repository, showing an input frame before object removal processing.

Source: github.com/Netflix/void-model

One of the sample scenes bundled in the VOID GitHub repository, showing an input frame before object removal processing.

Source: github.com/Netflix/void-model

The team built a synthetic training dataset using two existing tools: Google's Kubric for object-only physics sequences and Adobe's HUMOTO for human-object interaction clips rendered through Blender. This side-steps the need for expensive manual annotation of real removal pairs.

How the Pipeline Works

Masking format

VOID uses a quadmask encoding rather than a binary mask. Each pixel carries one of four values: remove (0), overlap (63), physically affected region (127), or keep (255). The "affected region" value is the key addition - it tells the model that a patch of the scene needs to change even though the object itself didn't occupy that pixel.

Generating the affected-region mask automatically is where the Gemini API comes in. It reasons about which parts of the scene the removed object was interacting with and expands the mask accordingly before any diffusion work begins.

Two-pass inference

The inference pipeline runs two sequential passes, each with its own weights file. The first pass (void_pass1.safetensors) produces a base inpainting result. The second pass (void_pass2.safetensors) applies optical flow warping before the noise input to correct temporal consistency artifacts on longer clips. Both passes run on the CogVideoX-Fun-V1.5-5b-InP base model, an Alibaba architecture fine-tuned for inpainting tasks.

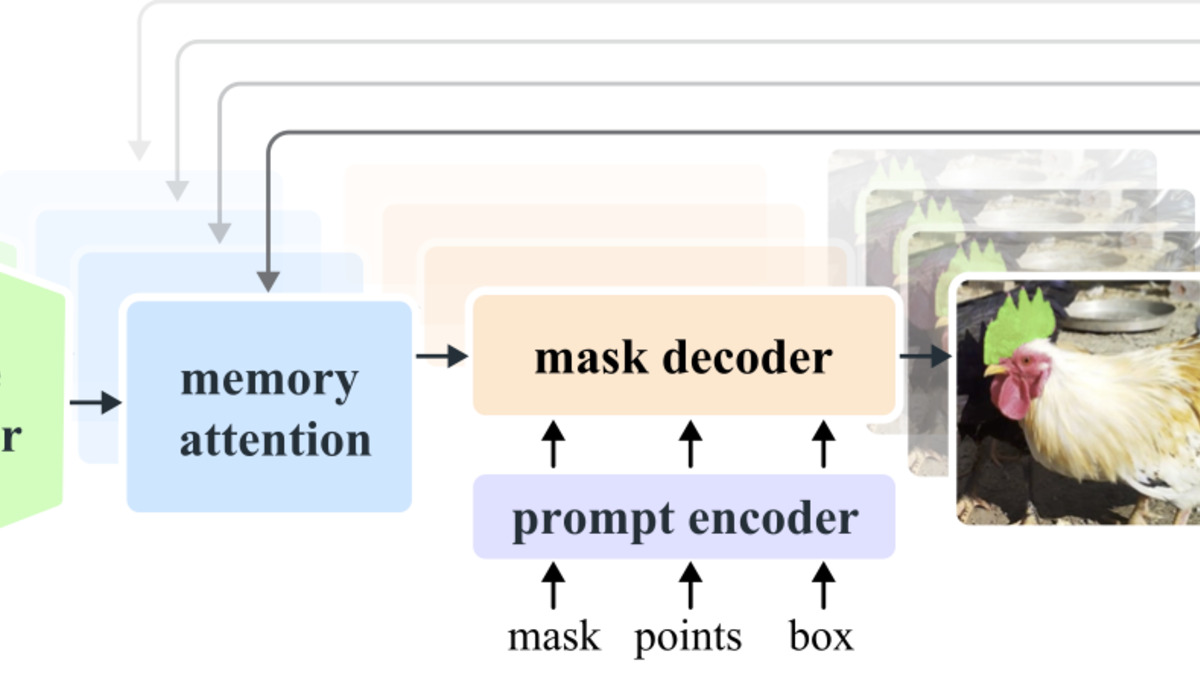

SAM2 from Meta handles the initial object segmentation. If you know which frame the object first appears in, SAM2 spreads the mask forward through the clip automatically. Sophie's beat usually covers SAM releases - Meta's SAM 3.1 last quarter already made multi-object segmentation fast enough for production use. VOID is one of the first public models to chain SAM2 and a video diffusion model in the same open-source pipeline.

SAM2's streaming memory architecture handles video segmentation frame-by-frame, spreading object masks through the clip. VOID uses it as the first stage of its two-pass pipeline.

Source: github.com/facebookresearch/sam2

SAM2's streaming memory architecture handles video segmentation frame-by-frame, spreading object masks through the clip. VOID uses it as the first stage of its two-pass pipeline.

Source: github.com/facebookresearch/sam2

Benchmark Numbers

The paper assessed against six competing methods using human preference ratings from 25 participants. The results aren't close:

| Method | User Preference |

|---|---|

| VOID | 64.8% |

| Runway | 18.4% |

| Generative Omnimatte | - |

| DiffuEraser | - |

| ROSE | - |

| MiniMax-Remover | - |

| ProPainter | - |

Runway came in second at 18.4%, which means all five remaining methods split less than 17% of the remaining votes combined. User preference studies with 25 participants are small, but the margin is wide enough to be meaningful.

For context on what open-source Apache 2.0 video models have been achieving recently, Google Gemma 4 shipped multimodal capabilities this week under the same license - the open-weight space is moving quickly on multiple fronts.

Hardware Requirements and Access

The 40GB+ VRAM floor is the main blocker for individuals. That puts VOID out of reach for anyone without an A100, H100, or equivalent workstation GPU. The team built the system on 8x A100 80GB with DeepSpeed ZeRO Stage 2 for training, which gives a sense of the scale involved.

For testing without local hardware, the HuggingFace demo at sam-motamed/VOID is live. The GitHub repo includes a Jupyter notebook that handles model weight downloads automatically.

The moving-ball sample tests VOID's ability to remove an object mid-trajectory without leaving motion-blur artifacts or inconsistent lighting.

Source: github.com/Netflix/void-model

The moving-ball sample tests VOID's ability to remove an object mid-trajectory without leaving motion-blur artifacts or inconsistent lighting.

Source: github.com/Netflix/void-model

The Apache 2.0 license covers both the code and model weights, meaning commercial use is unrestricted. Production VFX studios and post-production pipelines can integrate this without legal risk - a meaningful factor given how cautious the film industry has been about AI model licensing terms.

What To Watch

The quadmask format is non-standard. Any pipeline integration requires producing the four-value mask before running inference, which isn't plug-and-play with tools that output standard binary masks. The team ships example code, but production adoption will depend on whether wrapper tooling emerges for common compositing applications.

The 40GB VRAM requirement will likely shrink with time. The model runs in BF16 with FP8 quantization already enabled, and the community has consistently pushed similar-scale video diffusion models to run on 24GB cards within months of release. Watch the GitHub issues for quantization experiments.

The user study had 25 participants. That's a valid proof-of-concept number, but studios assessing this for production removal work should run their own evaluations against representative material before committing to a pipeline change.

Finally, the affected-region mask generation depends on the Gemini API for automated scene analysis - meaning the pipeline has a cloud dependency by default. Running fully offline requires either substituting a local vision-language model or creating the affected-region masks manually, which is doable but adds friction for air-gapped deployments.

Sources:

Last updated