Ai2 Drops MolmoWeb - Open-Source Web Agent Beats GPT-4o

Ai2's MolmoWeb is a fully open-source web agent that navigates browsers by screenshot alone, beating GPT-4o-based agents at the 8B scale with weights, training data, and code all released under Apache 2.0.

The Allen Institute for AI shipped MolmoWeb today - an open-source web browsing agent that controls a browser the same way a human does: by looking at screenshots, reasoning about what's on screen, and clicking. No HTML parsing. No accessibility trees. Just pixels and a model smart enough to navigate them.

The release covers everything: model weights, training code, the full dataset pipeline, and a live demo. It's Apache 2.0 across the board. That puts it in a different category from OpenAI Operator, Google Project Mariner, and Anthropic's computer use - all of which remain closed or API-only.

Key Specs

| Spec | Value |
|---|---|
| Models | MolmoWeb-4B, MolmoWeb-8B |
| Language backbone | Qwen3-8B (8B variant) |
| Vision encoder | SigLIP 2 (google/siglip-so400m-patch14-384) |
| WebVoyager (8B) | 78.2% pass@1, 94.7% pass@4 |
| License | Apache 2.0 |
| Code | github.com/allenai/molmoweb |
| Weights | huggingface.co/allenai/MolmoWeb-8B |

How It Works

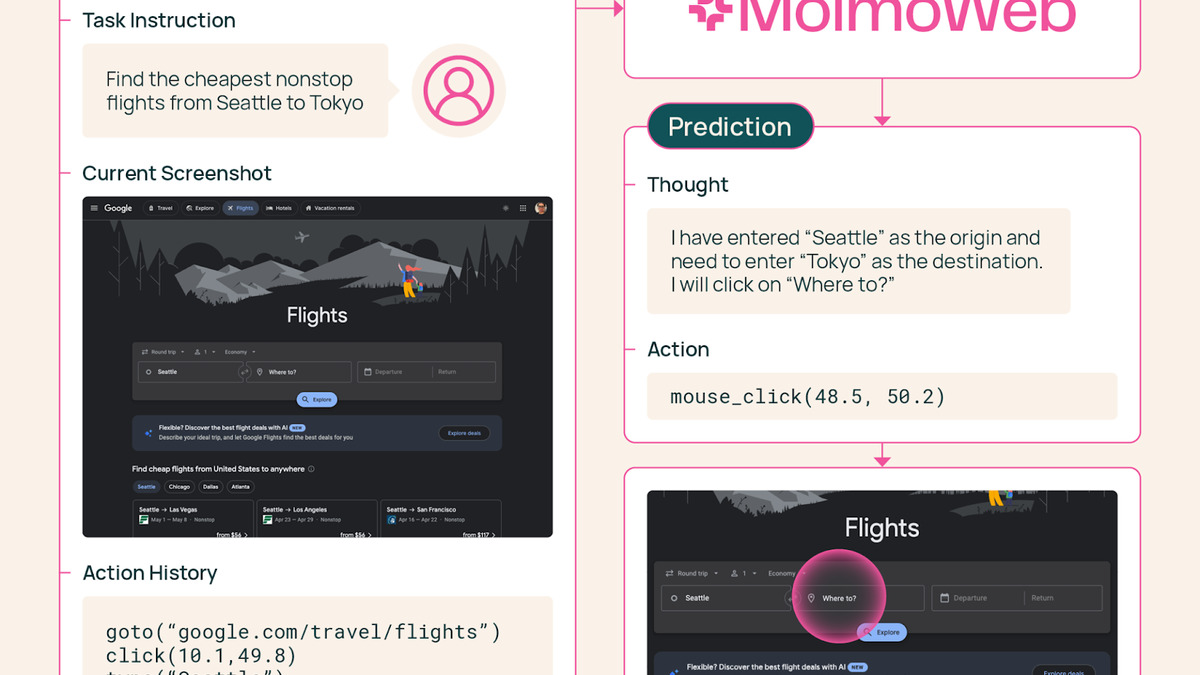

MolmoWeb runs a tight loop. It receives a natural-language task, takes a screenshot of the current browser state, generates a reasoning chain about what to do next, then executes one action using normalized screen coordinates. Repeat until done.
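To make "normalized screen coordinates" concrete, here is a minimal sketch of how a normalized click target could be mapped back to pixels. The [0, 1] range, the `Viewport` type, and the `to_pixels` helper are assumptions for illustration, not MolmoWeb's documented output format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Viewport:
    """Browser viewport size in pixels (hypothetical helper type)."""
    width: int
    height: int


def to_pixels(x_norm: float, y_norm: float, viewport: Viewport) -> tuple[int, int]:
    """Map coordinates normalized to [0, 1] onto integer pixel coordinates.

    Assumption: the model emits fractions of the viewport width and height.
    """
    if not (0.0 <= x_norm <= 1.0 and 0.0 <= y_norm <= 1.0):
        raise ValueError("normalized coordinates must lie in [0, 1]")
    # Clamp to the last valid pixel so that 1.0 stays inside the viewport.
    x = min(int(x_norm * viewport.width), viewport.width - 1)
    y = min(int(y_norm * viewport.height), viewport.height - 1)
    return x, y


# The same normalized point lands on the same relative spot at any resolution.
print(to_pixels(0.5, 0.25, Viewport(1280, 720)))   # (640, 180)
print(to_pixels(0.5, 0.25, Viewport(1920, 1080)))  # (960, 270)
```

The appeal of normalized output is that the model's prediction does not depend on the resolution of the screenshot it was shown.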

Supported actions: click, type, scroll, navigate to URL, open new tab. The model maintains an action history across steps, so it can recover when pages redirect unexpectedly or when a click lands on the wrong element.
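The observe-reason-act loop with an action history can be sketched roughly as follows. This is an illustrative skeleton, not Ai2's implementation: `Action`, `run_agent`, `scripted_policy`, and the `"done"` stop signal are hypothetical names, and a scripted stub stands in for the model so the example runs without a browser.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    """One browser action (hypothetical schema)."""
    kind: str  # e.g. "click", "type", "scroll", "goto", "new_tab", or "done"
    args: dict = field(default_factory=dict)


def run_agent(
    task: str,
    policy: Callable[[str, bytes, list[Action]], tuple[str, Action]],
    take_screenshot: Callable[[], bytes],
    execute: Callable[[Action], None],
    max_steps: int = 10,
) -> list[Action]:
    """Loop: screenshot, reason, act once; stop on "done" or when the step budget runs out."""
    history: list[Action] = []
    for _ in range(max_steps):
        screenshot = take_screenshot()
        # The policy sees the task, the current pixels, and everything done so far.
        _reasoning, action = policy(task, screenshot, history)
        if action.kind == "done":
            break
        execute(action)
        history.append(action)
    return history


def scripted_policy(task: str, screenshot: bytes, history: list[Action]) -> tuple[str, Action]:
    """Stand-in for the model: replays a fixed script indexed by history length."""
    script = [
        Action("goto", {"url": "https://example.com"}),
        Action("click", {"x": 0.5, "y": 0.25}),
        Action("done"),
    ]
    return f"step {len(history)}", script[len(history)]


executed: list[Action] = []
history = run_agent(
    "open example.com and click the link",
    scripted_policy,
    take_screenshot=lambda: b"",  # stub: a real agent would capture the browser here
    execute=executed.append,      # stub: a real agent would drive the browser here
)
print([a.kind for a in history])  # ['goto', 'click']
```

Passing the history back into the policy on every step is what would let a real model notice that a previous click did not have the intended effect and try something else.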

No HTML, No Accessibility Tree

The decision to operate purely from screenshots is deliberate. Accessibility trees are brittle - single-page apps often break them, and many dynamic elements aren't exposed at all. A screenshot-based agent sees exactly what the user sees, including canvas elements, custom widgets, and anything that doesn't have proper ARIA markup.

The tradeoff is that reading text gets harder. OCR-like errors are a documented limitation: the agent can misread small type or text in heavily compressed screenshots.

Training Without Distillation

MolmoWeb wasn't trained by copying traces from proprietary vision models. Ai2 generated synthetic trajectories from text-only accessibility-tree agents, augmented with human demonstrations. That matters for licensing: there's no question of terms-of-service violation or inherited restrictions from GPT-4o or Claude.

The resulting dataset, MolmoWebMix, is the largest public human web interaction dataset released to date:

- MolmoWeb-HumanTrajs: 36,000 human task trajectories across 1,100+ websites, covering 590,000+ individual subtask demonstrations

- MolmoWeb-SyntheticTrajs: 108,000 synthetically generated trajectories from accessibility-tree agents and multi-agent pipelines

- MolmoWeb-SyntheticGround: 362,000 screenshot QA pairs (~2.2 million total examples) for GUI perception training

All three datasets are on Hugging Face under the allenai/molmoweb-data collection.

The MolmoWeb demo interface, running on molmoweb.allen.ai. The agent reads the screen as a human would and outputs normalized click coordinates.

Source: allenai.org

Benchmark Results

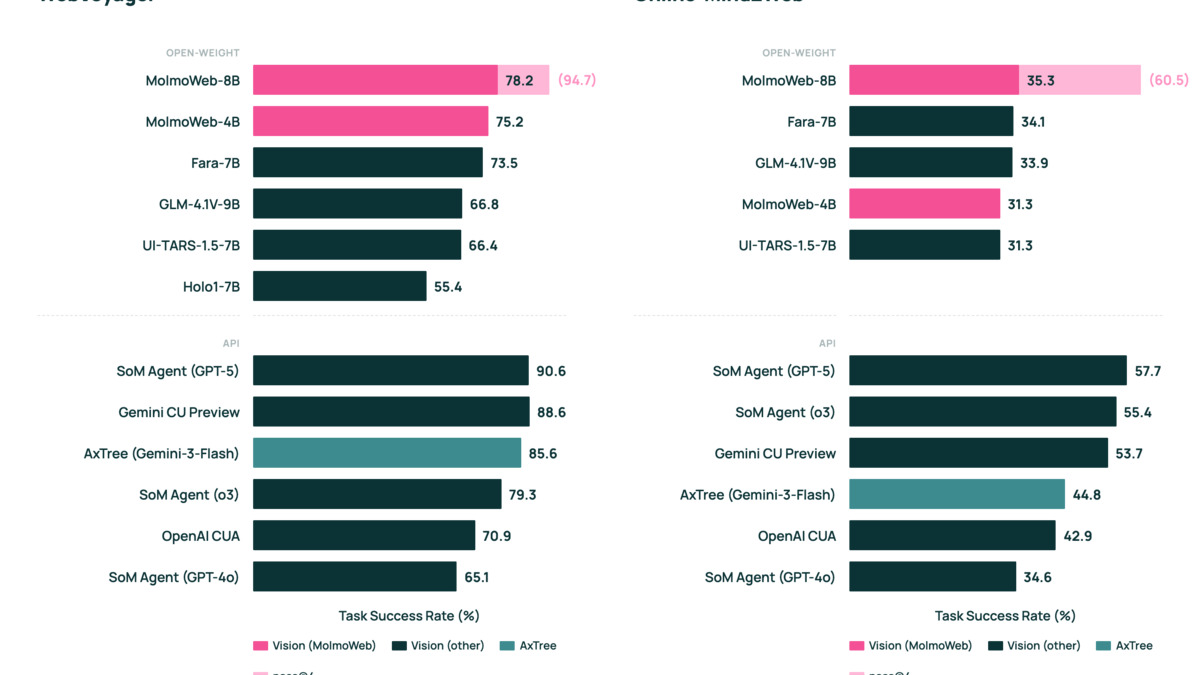

The 8B model scores above every open-weight model of comparable size in the table below and trails the closed systems by roughly 9 to 14 points on WebVoyager.

| Agent | WebVoyager | Notes |
|---|---|---|
| OpenAI CUA | 87.0% | Closed, GPT-4o based |
| Surfer-H + Holo1-7B | 92.2% | Closed system |
| MolmoWeb-8B | 78.2% | Open weights + data |
| Microsoft Fara-7B | 73.5% | Open weights, less training data |
| UI-TARS-1.5-7B | ~72% | Open weights |
| Holo1-7B standalone | ~70% | Open weights |
| MolmoWeb-4B | Beats Fara-7B | At matching step budgets |

On Online-Mind2Web, the 8B model reaches 35.3% pass@1 - rising to 60.5% with pass@4 test-time scaling. On DeepShop, it hits 42.3%. On WebTailBench, 49.5%. The ScreenSpot v2 visual grounding numbers exceed both Claude 3.7 Computer Use and OpenAI CUA.

The pass@4 jumps are the more interesting signal. WebVoyager goes from 78.2% to 94.7% with four attempts per task. That's test-time compute scaling working on an 8B model, which suggests the failure modes are often recoverable, not fundamental.
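A quick back-of-the-envelope check puts the WebVoyager numbers in context. The sketch below assumes, purely for illustration, that every attempt succeeds independently with the reported pass@1 rate. That is a simplification, not how Ai2 computes pass@4, and the helper name is hypothetical.

```python
def pass_at_k_if_independent(p: float, k: int) -> float:
    """Chance that at least one of k attempts succeeds, assuming each
    attempt succeeds independently with probability p."""
    return 1.0 - (1.0 - p) ** k


pass_at_1 = 0.782         # reported WebVoyager pass@1 for MolmoWeb-8B
reported_pass_at_4 = 0.947  # reported WebVoyager pass@4

independent_ceiling = pass_at_k_if_independent(pass_at_1, 4)
print(f"pass@4 if attempts were independent: {independent_ceiling:.3f}")  # 0.998
print(f"reported pass@4:                     {reported_pass_at_4:.3f}")   # 0.947
```

Under that assumption, four attempts would reach about 99.8%. The reported 94.7% is a large gain over 78.2% but falls short of that figure, which is consistent with many failures being recoverable on a retry while a small share of tasks stays hard on every attempt.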

Benchmark comparison from the Ai2 blog post. MolmoWeb-8B beats open-weight alternatives at the same scale across multiple web navigation tasks.

Source: allenai.org

The Ai2 Context

This release lands during one of the messier weeks in Ai2's recent history. CEO Ali Farhadi stepped down on March 12, replaced on an interim basis by Peter Clark - the same researcher who held the role between Oren Etzioni and Farhadi. Today, Microsoft announced it had hired Farhadi, along with Hanna Hajishirzi (who leads OLMo development at Ai2), Ranjay Krishna, and COO Sophie Lebrecht, all for Mustafa Suleyman's superintelligence team at Microsoft AI.

The departure has a structural cause: the Fund for Science and Technology (FFST), the $3.1 billion foundation that funds Ai2 per Paul Allen's instructions, is shifting from guaranteed annual grants to proposal-based processes that favor applied work over frontier model research. The board acknowledged publicly that "the cost to do extreme-scale open model research is extraordinary" and "really hard to do inside a nonprofit."

Ai2 isn't shutting down. All 2026 programs remain funded, and OLMo, Molmo, and the agent work continue. But the MolmoWeb release reads partly as a capstone - a statement from a team doing serious work while it still can.

Peter Clark's statement on the direction: "Our mission remains unchanged: advancing AI research and engineering for the common good, and turning our open breakthroughs into lasting, real-world impact."

The Allen Institute for AI is a Seattle-based nonprofit research institute founded in 2014 by Paul Allen.

Source: Wikimedia Commons

Ai2's decision to release MolmoWeb as fully open - including training data - is a deliberate contrast to the approach taken by most of the field. Anthropic's computer use remains API-gated. OpenAI Operator is a product, not a research artifact. Anthropic's acquisition of Vercept last month brought in Allen Institute alumni specifically to improve closed computer use capabilities.

Ai2 is releasing the recipe, not just the dish.

What To Watch

OCR and Timing Sensitivity

Screenshot-based navigation has hard limits. Compressed images, small fonts, and high-DPI displays all introduce reading errors. Pages that load content progressively can catch the agent mid-render. Ai2 acknowledges both in the paper, and the live demo is limited to a whitelist of supported sites for exactly this reason.

No Login Flows or Financial Transactions

The training deliberately excluded authentication flows and financial transactions. That's a safety call, but it's also a significant scope limit. Most real enterprise workflows touch credentials or payments at some point.

The Leadership Gap

With Farhadi and Hajishirzi both moving to Microsoft, the researchers most associated with the OLMo and Molmo open model lines are now building proprietary systems. Whether Ai2 can sustain the same pace of releases under new leadership - and a funding model that rewards applied work - is the open question.

The models are on Hugging Face at allenai/MolmoWeb-4B and allenai/MolmoWeb-8B. The code is at github.com/allenai/molmoweb. The paper is arXiv 2601.10611.

Sources:

- Ai2 releases open-source web agent to rival closed systems from OpenAI, Google, and Anthropic - GeekWire

- MolmoWeb - Allen Institute for AI

- allenai/MolmoWeb-8B - Hugging Face

- allenai/molmoweb - GitHub

- Microsoft hires top AI researchers from Allen Institute - The Decoder

- Allen Institute for AI CEO Ali Farhadi steps down - GeekWire